Ruben Schlagowski

VoiceX: A Text-To-Speech Framework for Custom Voices

Aug 22, 2024

Abstract: Modern TTS systems are capable of creating highly realistic and natural-sounding speech. Despite these developments, the process of customizing TTS voices remains a complex task, mostly requiring the expertise of specialists within the field. One reason for this is the utilization of deep learning models, which are characterized by their expansive, non-interpretable parameter spaces, restricting the feasibility of manual customization. In this paper, we present a novel human-in-the-loop paradigm based on an evolutionary algorithm for directly interacting with the parameter space of a neural TTS model. We integrated our approach into a user-friendly graphical user interface that allows users to efficiently create original voices. Those voices can then be used with the backbone TTS model, for which we provide a Python API. Further, we present the results of a user study exploring the capabilities of VoiceX. We show that VoiceX is an appropriate tool for creating individual, custom voices.

Relevant Irrelevance: Generating Alterfactual Explanations for Image Classifiers

May 08, 2024

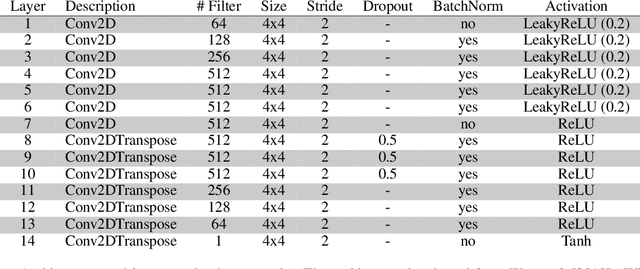

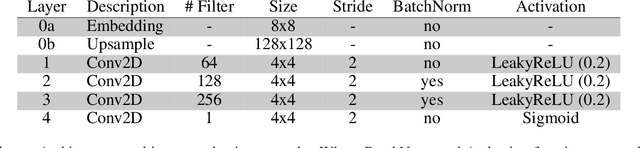

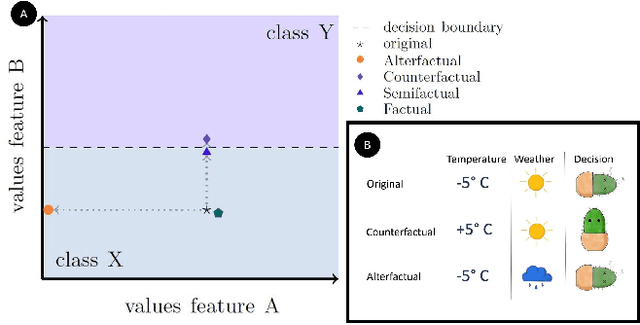

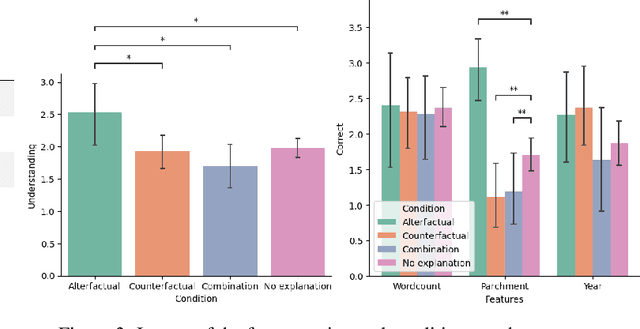

Abstract: In this paper, we demonstrate the feasibility of alterfactual explanations for black box image classifiers. Traditional explanation mechanisms from the field of Counterfactual Thinking are a widely-used paradigm for Explainable Artificial Intelligence (XAI), as they follow a natural way of reasoning that humans are familiar with. However, most common approaches from this field are based on communicating information about features or characteristics that are especially important for an AI's decision. To fully understand a decision, knowledge about relevant features is not enough: awareness of irrelevant information also contributes substantially to a user's mental model of an AI system. To this end, a novel approach for explaining AI systems called alterfactual explanations was recently proposed on a conceptual level. It is based on showing an alternative reality where irrelevant features of an AI's input are altered. By doing so, the user directly sees which input data characteristics can change arbitrarily without influencing the AI's decision. In this paper, we show for the first time that it is possible to apply this idea to black box models based on neural networks. Specifically, we present a GAN-based approach to generate these alterfactual explanations for binary image classifiers. Further, we present a user study that offers insights into how alterfactual explanations can complement counterfactual explanations.

Alterfactual Explanations -- The Relevance of Irrelevance for Explaining AI Systems

Jul 19, 2022

Abstract: Explanation mechanisms from the field of Counterfactual Thinking are a widely-used paradigm for Explainable Artificial Intelligence (XAI), as they follow a natural way of reasoning that humans are familiar with. However, all common approaches from this field are based on communicating information about features or characteristics that are especially important for an AI's decision. We argue that in order to fully understand a decision, not only knowledge about relevant features is needed, but that the awareness of irrelevant information also highly contributes to the creation of a user's mental model of an AI system. Therefore, we introduce a new way of explaining AI systems. Our approach, which we call Alterfactual Explanations, is based on showing an alternative reality where irrelevant features of an AI's input are altered. By doing so, the user directly sees which characteristics of the input data can change arbitrarily without influencing the AI's decision. We evaluate our approach in an extensive user study, revealing that it is able to significantly contribute to the participants' understanding of an AI. We show that alterfactual explanations are suited to convey an understanding of different aspects of the AI's reasoning than established counterfactual explanation methods.