Rokas Gipiškis

Vilnius University

Deprecating Benchmarks: Criteria and Framework

Jul 08, 2025Abstract:As frontier artificial intelligence (AI) models rapidly advance, benchmarks are integral to comparing different models and measuring their progress in different task-specific domains. However, there is a lack of guidance on when and how benchmarks should be deprecated once they cease to effectively perform their purpose. This risks benchmark scores over-valuing model capabilities, or worse, obscuring capabilities and safety-washing. Based on a review of benchmarking practices, we propose criteria to decide when to fully or partially deprecate benchmarks, and a framework for deprecating benchmarks. Our work aims to advance the state of benchmarking towards rigorous and quality evaluations, especially for frontier models, and our recommendations are aimed to benefit benchmark developers, benchmark users, AI governance actors (across governments, academia, and industry panels), and policy makers.

PreCare: Designing AI Assistants for Advance Care Planning (ACP) to Enhance Personal Value Exploration, Patient Knowledge, and Decisional Confidence

May 14, 2025Abstract:Advance Care Planning (ACP) allows individuals to specify their preferred end-of-life life-sustaining treatments before they become incapacitated by injury or terminal illness (e.g., coma, cancer, dementia). While online ACP offers high accessibility, it lacks key benefits of clinical consultations, including personalized value exploration, immediate clarification of decision consequences. To bridge this gap, we conducted two formative studies: 1) shadowed and interviewed 3 ACP teams consisting of physicians, nurses, and social workers (18 patients total), and 2) interviewed 14 users of ACP websites. Building on these insights, we designed PreCare in collaboration with 6 ACP professionals. PreCare is a website with 3 AI-driven assistants designed to guide users through exploring personal values, gaining ACP knowledge, and supporting informed decision-making. A usability study (n=12) showed that PreCare achieved a System Usability Scale (SUS) rating of excellent. A comparative evaluation (n=12) showed that PreCare's AI assistants significantly improved exploration of personal values, knowledge, and decisional confidence, and was preferred by 92% of participants.

Existing Industry Practice for the EU AI Act's General-Purpose AI Code of Practice Safety and Security Measures

Apr 21, 2025

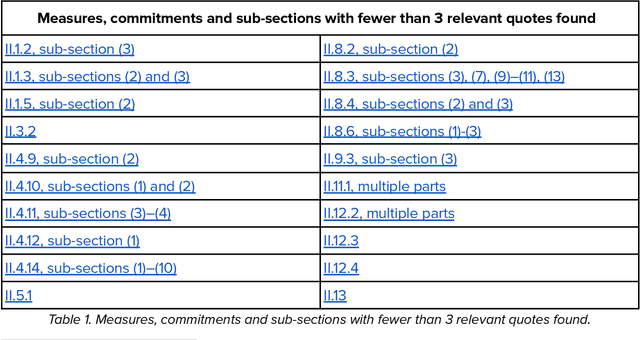

Abstract:This report provides a detailed comparison between the measures proposed in the EU AI Act's General-Purpose AI (GPAI) Code of Practice (Third Draft) and current practices adopted by leading AI companies. As the EU moves toward enforcing binding obligations for GPAI model providers, the Code of Practice will be key to bridging legal requirements with concrete technical commitments. Our analysis focuses on the draft's Safety and Security section which is only relevant for the providers of the most advanced models (Commitments II.1-II.16) and excerpts from current public-facing documents quotes that are relevant to each individual measure. We systematically reviewed different document types - including companies' frontier safety frameworks and model cards - from over a dozen companies, including OpenAI, Anthropic, Google DeepMind, Microsoft, Meta, Amazon, and others. This report is not meant to be an indication of legal compliance nor does it take any prescriptive viewpoint about the Code of Practice or companies' policies. Instead, it aims to inform the ongoing dialogue between regulators and GPAI model providers by surfacing evidence of precedent.

Risk Sources and Risk Management Measures in Support of Standards for General-Purpose AI Systems

Oct 30, 2024

Abstract:There is an urgent need to identify both short and long-term risks from newly emerging types of Artificial Intelligence (AI), as well as available risk management measures. In response, and to support global efforts in regulating AI and writing safety standards, we compile an extensive catalog of risk sources and risk management measures for general-purpose AI (GPAI) systems, complete with descriptions and supporting examples where relevant. This work involves identifying technical, operational, and societal risks across model development, training, and deployment stages, as well as surveying established and experimental methods for managing these risks. To the best of our knowledge, this paper is the first of its kind to provide extensive documentation of both GPAI risk sources and risk management measures that are descriptive, self-contained and neutral with respect to any existing regulatory framework. This work intends to help AI providers, standards experts, researchers, policymakers, and regulators in identifying and mitigating systemic risks from GPAI systems. For this reason, the catalog is released under a public domain license for ease of direct use by stakeholders in AI governance and standards.

Explainable AI (XAI) in Image Segmentation in Medicine, Industry, and Beyond: A Survey

May 02, 2024Abstract:Artificial Intelligence (XAI) has found numerous applications in computer vision. While image classification-based explainability techniques have garnered significant attention, their counterparts in semantic segmentation have been relatively neglected. Given the prevalent use of image segmentation, ranging from medical to industrial deployments, these techniques warrant a systematic look. In this paper, we present the first comprehensive survey on XAI in semantic image segmentation. This work focuses on techniques that were either specifically introduced for dense prediction tasks or were extended for them by modifying existing methods in classification. We analyze and categorize the literature based on application categories and domains, as well as the evaluation metrics and datasets used. We also propose a taxonomy for interpretable semantic segmentation, and discuss potential challenges and future research directions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge