Ze Shen Chin

Dimensional Characterization and Pathway Modeling for Catastrophic AI Risks

Aug 08, 2025

Abstract: Although discourse around the risks of Artificial Intelligence (AI) has grown, it often lacks a comprehensive, multidimensional framework and concrete causal pathways mapping hazard to harm. This paper aims to bridge this gap by examining six commonly discussed catastrophic AI risks: CBRN, cyber offense, sudden loss of control, gradual loss of control, environmental risk, and geopolitical risk. First, we characterize these risks across seven key dimensions, namely intent, competency, entity, polarity, linearity, reach, and order. Next, we conduct risk pathway modeling by mapping step-by-step progressions from the initial hazard to the resulting harms. The dimensional approach supports systematic risk identification and generalizable mitigation strategies, while risk pathway models help identify scenario-specific interventions. Together, these methods offer a more structured and actionable foundation for managing catastrophic AI risks across the value chain.

Existing Industry Practice for the EU AI Act's General-Purpose AI Code of Practice Safety and Security Measures

Apr 21, 2025

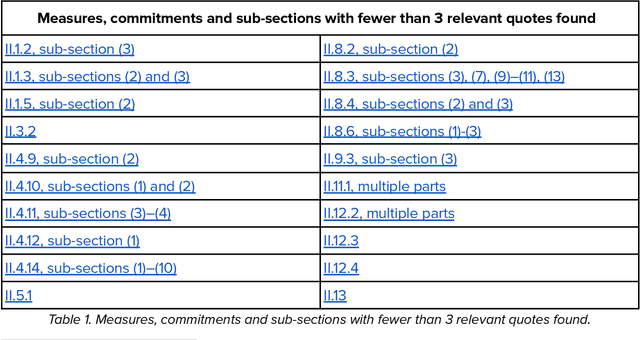

Abstract: This report provides a detailed comparison between the measures proposed in the EU AI Act's General-Purpose AI (GPAI) Code of Practice (Third Draft) and current practices adopted by leading AI companies. As the EU moves toward enforcing binding obligations for GPAI model providers, the Code of Practice will be key to bridging legal requirements with concrete technical commitments. Our analysis focuses on the draft's Safety and Security section, which is relevant only to providers of the most advanced models (Commitments II.1-II.16), and excerpts quotes from current public-facing documents that are relevant to each individual measure. We systematically reviewed different document types - including companies' frontier safety frameworks and model cards - from over a dozen companies, including OpenAI, Anthropic, Google DeepMind, Microsoft, Meta, Amazon, and others. This report is not meant to be an indication of legal compliance, nor does it take any prescriptive viewpoint about the Code of Practice or companies' policies. Instead, it aims to inform the ongoing dialogue between regulators and GPAI model providers by surfacing evidence of precedent.

Risk Sources and Risk Management Measures in Support of Standards for General-Purpose AI Systems

Oct 30, 2024

Abstract: There is an urgent need to identify both short- and long-term risks from newly emerging types of Artificial Intelligence (AI), as well as available risk management measures. In response, and to support global efforts in regulating AI and writing safety standards, we compile an extensive catalog of risk sources and risk management measures for general-purpose AI (GPAI) systems, complete with descriptions and supporting examples where relevant. This work involves identifying technical, operational, and societal risks across model development, training, and deployment stages, as well as surveying established and experimental methods for managing these risks. To the best of our knowledge, this paper is the first of its kind to provide extensive documentation of both GPAI risk sources and risk management measures that are descriptive, self-contained, and neutral with respect to any existing regulatory framework. This work intends to help AI providers, standards experts, researchers, policymakers, and regulators in identifying and mitigating systemic risks from GPAI systems. For this reason, the catalog is released under a public domain license for ease of direct use by stakeholders in AI governance and standards.
