Robyn L. Miller

Generative forecasting of brain activity enhances Alzheimer's classification and interpretation

Oct 30, 2024

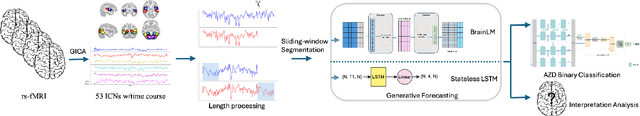

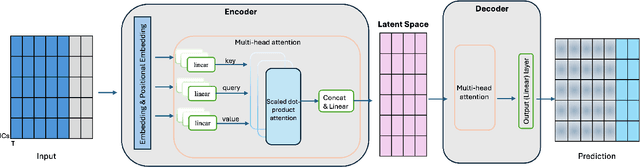

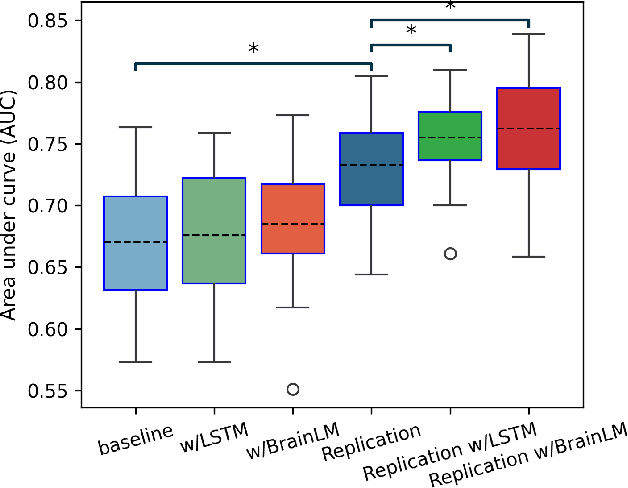

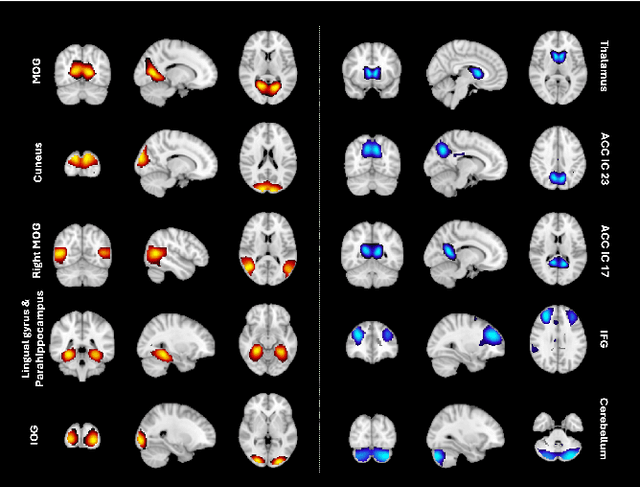

Abstract: Understanding the relationship between cognition and intrinsic brain activity through purely data-driven approaches remains a significant challenge in neuroscience. Resting-state functional magnetic resonance imaging (rs-fMRI) offers a non-invasive method to monitor regional neural activity, providing a rich and complex spatiotemporal data structure. Deep learning has shown promise in capturing these intricate representations. However, the limited availability of large datasets, especially for disease-specific groups such as Alzheimer's Disease (AD), constrains the generalizability of deep learning models. In this study, we focus on multivariate time series forecasting of independent component networks derived from rs-fMRI as a form of data augmentation, using both a conventional LSTM-based model and the novel Transformer-based BrainLM model. We assess their utility in AD classification, demonstrating how generative forecasting enhances classification performance. Post-hoc interpretation of BrainLM reveals class-specific brain network sensitivities associated with AD.

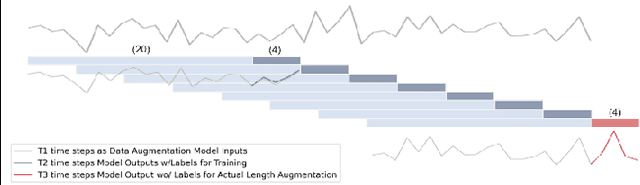

Improving age prediction: Utilizing LSTM-based dynamic forecasting for data augmentation in multivariate time series analysis

Dec 11, 2023

Abstract: The high dimensionality and complexity of neuroimaging data necessitate large datasets to develop robust and high-performing deep learning models. However, the neuroimaging field is notably hampered by the scarcity of such datasets. In this work, we proposed a data augmentation and validation framework that utilizes dynamic forecasting with Long Short-Term Memory (LSTM) networks to enrich datasets. We extended multivariate time series data by predicting the time courses of independent component networks (ICNs) in both one-step and recursive configurations. The effectiveness of these augmented datasets was then compared with the original data using various deep learning models designed for chronological age prediction tasks. The results suggest that our approach improves model performance, providing a robust solution to overcome the challenges presented by the limited size of neuroimaging datasets.
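The difference between the one-step and recursive configurations mentioned in this abstract can be illustrated with a minimal sketch. The abstract does not give the LSTM architecture or training details, so this example swaps in a plain least-squares linear forecaster as a stand-in for the LSTM; the function names, window length, and synthetic two-channel series are all assumptions made for illustration only.

```python
import numpy as np

def make_windows(series, window):
    """Stack flattened sliding windows of length `window` from a (T, C) series."""
    T = series.shape[0]
    return np.stack([series[t - window:t].ravel() for t in range(window, T)])

def fit_linear_forecaster(series, window):
    """Fit a least-squares map from `window` past time points to the next one.

    Stand-in for an LSTM forecaster (assumption, not the authors' model).
    Returns a weight matrix of shape (window * C, C).
    """
    X = make_windows(series, window)
    Y = series[window:]
    W, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return W

def one_step_forecast(series, W, window):
    """One-step configuration: every prediction uses the true preceding window."""
    return make_windows(series, window) @ W

def recursive_forecast(history, W, window, n_steps):
    """Recursive configuration: predictions are fed back in as inputs."""
    buf = history[-window:].copy()
    out = []
    for _ in range(n_steps):
        nxt = buf.ravel() @ W            # predict the next time point, shape (C,)
        out.append(nxt)
        buf = np.vstack([buf[1:], nxt])  # slide the window over the prediction
    return np.array(out)

# Synthetic two-channel series standing in for ICN time courses (hypothetical data).
rng = np.random.default_rng(0)
t = np.arange(200)
series = np.stack([np.sin(0.1 * t), np.cos(0.07 * t)], axis=1)
series = series + 0.01 * rng.standard_normal(series.shape)

window = 10
W = fit_linear_forecaster(series, window)
one_step = one_step_forecast(series, W, window)
extension = recursive_forecast(series, W, window, n_steps=20)

# Augmentation in this sketch: append the forecast to the original series.
augmented = np.vstack([series, extension])
```

In the one-step case, errors do not accumulate because each prediction starts from observed data; in the recursive case, each prediction depends on earlier predictions, so errors can compound as the horizon grows.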

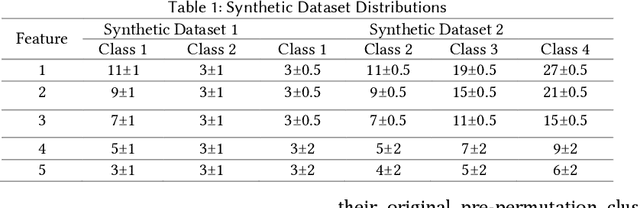

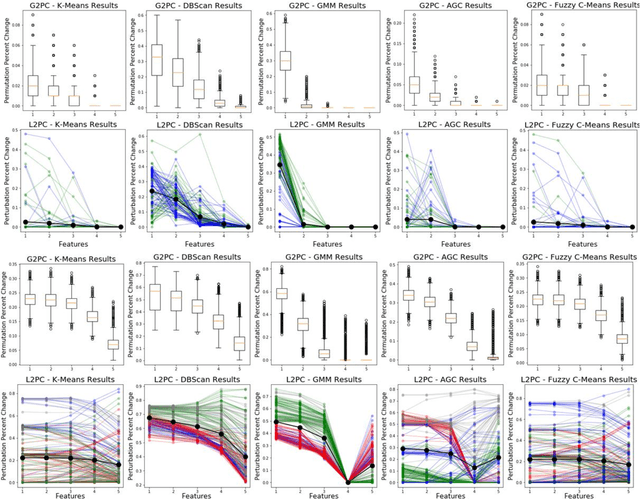

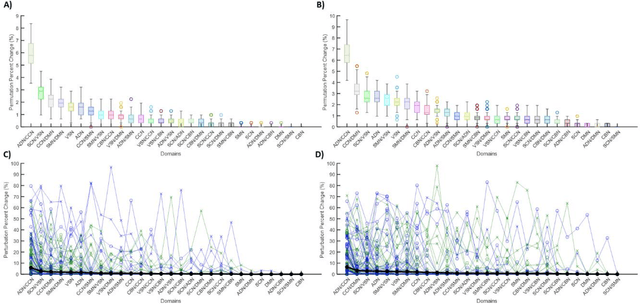

Algorithm-Agnostic Explainability for Unsupervised Clustering

May 17, 2021

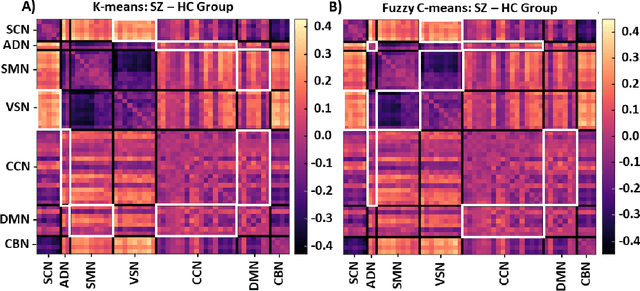

Abstract: Supervised machine learning explainability has greatly expanded in recent years. However, the field of unsupervised clustering explainability has lagged behind. Here, to the best of our knowledge, we demonstrate for the first time how model-agnostic methods for supervised machine learning explainability can be adapted to provide algorithm-agnostic unsupervised clustering explainability. We present two novel algorithm-agnostic explainability methods, global permutation percent change (G2PC) feature importance and local perturbation percent change (L2PC) feature importance. They can provide insight into many clustering methods on a global level, by identifying the relative importance of features to a clustering algorithm, and on a local level, by identifying the relative importance of features to the clustering of individual samples. We demonstrate the utility of the methods for explaining five popular clustering algorithms on low-dimensional, ground-truth synthetic datasets and on high-dimensional functional network connectivity (FNC) data extracted from a resting-state functional magnetic resonance imaging (rs-fMRI) dataset of 151 subjects with schizophrenia (SZ) and 160 healthy controls (HC). Our proposed explainability methods robustly identify the relative importance of features across multiple clustering methods and could facilitate new insights into many applications. We hope that this study will greatly accelerate the development of the field of clustering explainability.
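The idea behind a global permutation percent change score can be sketched as follows, reading the name literally: permute one feature across samples, reassign every sample to a cluster, and record the percentage of samples whose cluster changed. The abstract does not specify the exact procedure, so the number of repeats, the nearest-centroid assignment rule, and all function names here are assumptions, not the authors' implementation.

```python
import numpy as np

def assign_clusters(X, centroids):
    """Hypothetical stand-in for a fitted clustering model: nearest centroid."""
    dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)

def permutation_percent_change(X, assign, n_repeats=20, seed=0):
    """Percent of samples that switch clusters when each feature is permuted.

    `assign` is any callable mapping a data matrix to cluster labels, which
    is what keeps the score agnostic to the clustering algorithm.
    """
    rng = np.random.default_rng(seed)
    base = assign(X)
    importance = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        changed = []
        for _ in range(n_repeats):
            Xp = X.copy()
            Xp[:, j] = rng.permutation(Xp[:, j])  # break feature j's link to samples
            changed.append(np.mean(assign(Xp) != base))
        importance[j] = 100.0 * np.mean(changed)
    return importance

# Synthetic data: feature 0 separates the two clusters, feature 1 is noise.
rng = np.random.default_rng(1)
X = np.vstack([
    rng.normal([-3.0, 0.0], 1.0, size=(100, 2)),
    rng.normal([3.0, 0.0], 1.0, size=(100, 2)),
])
centroids = np.array([[-3.0, 0.0], [3.0, 0.0]])

scores = permutation_percent_change(X, lambda data: assign_clusters(data, centroids))
```

In this toy setup, permuting the separating feature moves many samples to the other cluster, while permuting the noise feature leaves assignments essentially unchanged, so the first score is much larger than the second.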
