Rizwan Ahmed Khan

ArrhythmiaVision: Resource-Conscious Deep Learning Models with Visual Explanations for ECG Arrhythmia Classification

Apr 30, 2025

Abstract:Cardiac arrhythmias are a leading cause of life-threatening cardiac events, highlighting the urgent need for accurate and timely detection. Electrocardiography (ECG) remains the clinical gold standard for arrhythmia diagnosis; however, manual interpretation is time-consuming, dependent on clinical expertise, and prone to human error. Although deep learning has advanced automated ECG analysis, many existing models abstract away the signal's intrinsic temporal and morphological features, lack interpretability, and are computationally intensive, hindering their deployment on resource-constrained platforms. In this work, we propose two novel lightweight 1D convolutional neural networks, ArrhythmiNet V1 and V2, optimized for efficient, real-time arrhythmia classification on edge devices. Inspired by MobileNet's depthwise separable convolutional design, these models maintain memory footprints of just 302.18 KB and 157.76 KB, respectively, while achieving classification accuracies of 0.99 (V1) and 0.98 (V2) on the MIT-BIH Arrhythmia Dataset across five classes: Normal Sinus Rhythm, Left Bundle Branch Block, Right Bundle Branch Block, Atrial Premature Contraction, and Premature Ventricular Contraction. To ensure clinical transparency and relevance, we integrate Shapley Additive Explanations and Gradient-weighted Class Activation Mapping, enabling both local and global interpretability. These techniques highlight physiologically meaningful patterns such as the QRS complex and T-wave that contribute to the model's predictions. We also discuss performance-efficiency trade-offs and address current limitations related to dataset diversity and generalizability. Overall, our findings demonstrate the feasibility of combining interpretability, predictive accuracy, and computational efficiency in practical, wearable, and embedded ECG monitoring systems.
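To illustrate why a depthwise separable design keeps a 1D network small, the following minimal sketch compares the parameter count of a standard 1D convolution with that of a depthwise convolution followed by a pointwise (kernel size 1) convolution. The channel counts and kernel size are hypothetical values chosen for illustration; they are not the ArrhythmiNet layer sizes, which the abstract does not state.

```python
def conv1d_params(c_in, c_out, kernel_size, bias=True):
    """Parameter count of a standard 1D convolution."""
    return c_in * c_out * kernel_size + (c_out if bias else 0)


def depthwise_separable_conv1d_params(c_in, c_out, kernel_size, bias=True):
    """Parameter count of a depthwise conv followed by a pointwise conv."""
    # Depthwise step: one kernel per input channel.
    depthwise = c_in * kernel_size + (c_in if bias else 0)
    # Pointwise step: kernel-size-1 convolution that mixes channels.
    pointwise = c_in * c_out + (c_out if bias else 0)
    return depthwise + pointwise


# Hypothetical layer: 64 input channels, 128 output channels, kernel size 5.
standard = conv1d_params(64, 128, 5)
separable = depthwise_separable_conv1d_params(64, 128, 5)
print(standard, separable, round(standard / separable, 2))
```

For this hypothetical layer the separable variant needs roughly a fifth of the parameters, which is the kind of saving that makes small memory footprints plausible on edge devices.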

A Comprehensive Framework for Reliable Legal AI: Combining Specialized Expert Systems and Adaptive Refinement

Dec 29, 2024

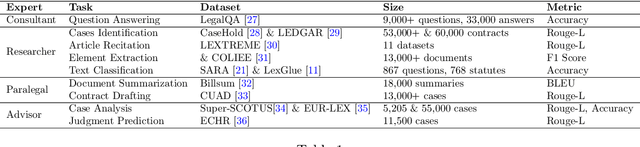

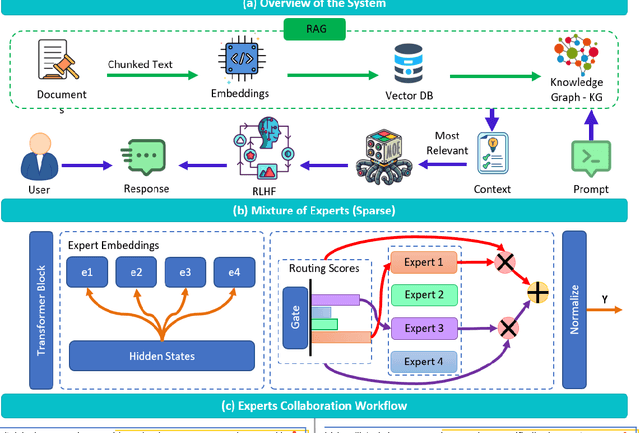

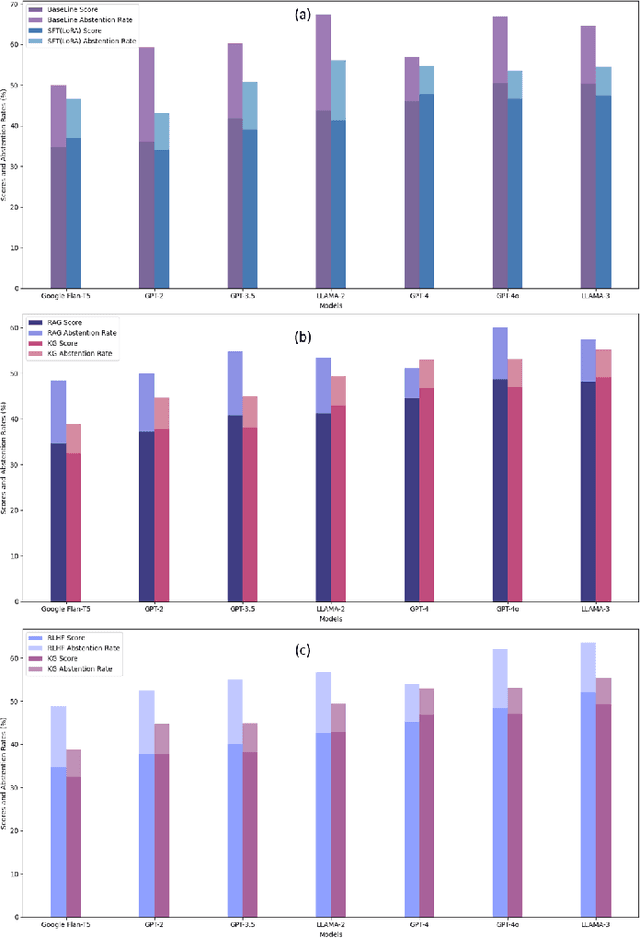

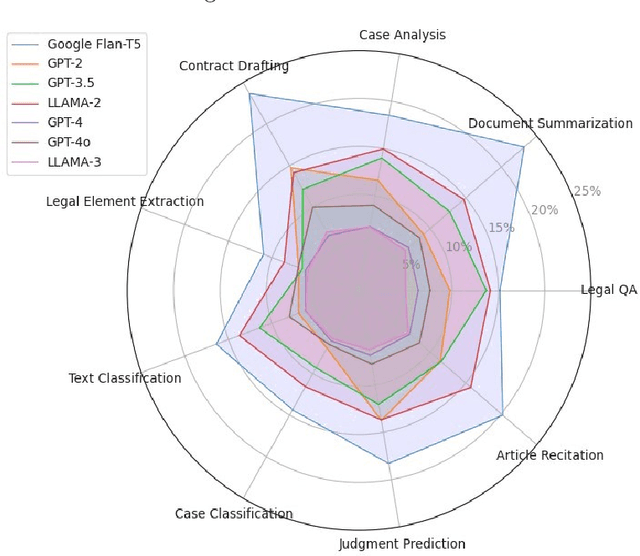

Abstract:This article discusses the evolving role of artificial intelligence (AI) in the legal profession, focusing on its potential to streamline tasks such as document review, research, and contract drafting. However, challenges persist, particularly the occurrence of "hallucinations" in AI models, where they generate inaccurate or misleading information, undermining their reliability in legal contexts. To address this, the article proposes a novel framework combining a mixture of expert systems with a knowledge-based architecture to improve the precision and contextual relevance of AI-driven legal services. This framework utilizes specialized modules, each focusing on specific legal areas, and incorporates structured operational guidelines to enhance decision-making. Additionally, it leverages advanced AI techniques like Retrieval-Augmented Generation (RAG), Knowledge Graphs (KG), and Reinforcement Learning from Human Feedback (RLHF) to improve the system's accuracy. The proposed approach demonstrates significant improvements over existing AI models, showcasing enhanced performance in legal tasks and offering a scalable solution to provide more accessible and affordable legal services. The article also outlines the methodology, system architecture, and promising directions for future research in AI applications for the legal sector.

Robust Feature Engineering Techniques for Designing Efficient Motor Imagery-Based BCI-Systems

Dec 10, 2024

Abstract:A multitude of individuals across the globe grapple with motor disabilities. Neural prosthetics utilizing Brain-Computer Interface (BCI) technology exhibit promise for improving motor rehabilitation outcomes. The intricate nature of EEG data poses a significant hurdle for current BCI systems. Recently, a qualitative repository of EEG signals tied to both upper and lower limb execution of motor and motor imagery tasks has been unveiled. Despite this, the performance of the machine learning (ML) models that were trained on this dataset was alarmingly deficient, and the evaluation framework seemed insufficient. To enhance outcomes, robust feature engineering (signal processing) methodologies are implemented. A collection of time domain, frequency domain, and wavelet-derived features was obtained from 16-channel EEG signals, and the Maximum Relevance Minimum Redundancy (MRMR) approach was employed to identify the four most significant features. For classification, K-Nearest Neighbors (KNN), Support Vector Machine (SVM), Decision Tree (DT), and Naïve Bayes (NB) models were implemented with these selected features, and their effectiveness was evaluated through metrics such as testing accuracy, precision, recall, and F1 score. By leveraging SVM with a Gaussian kernel, a maximum testing accuracy of 92.50% for motor activities and 95.48% for imagery activities is achieved. These results are notably more dependable than those of the previous study, where the peak accuracy was recorded at 74.36%. This research work provides an in-depth analysis of the MI Limb EEG dataset and will help in designing and developing simple, cost-effective and reliable BCI systems for neuro-rehabilitation.
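The evaluation metrics named above (accuracy, precision, recall, and F1 score) can be computed from predicted and true labels as in the sketch below. The label vectors are made-up toy values, not results from the study, and the function name `binary_metrics` is a hypothetical helper introduced here.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Toy labels for illustration only.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```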

Breast Cancer Diagnosis: A Comprehensive Exploration of Explainable Artificial Intelligence (XAI) Techniques

Jun 01, 2024

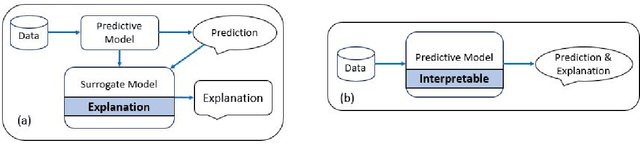

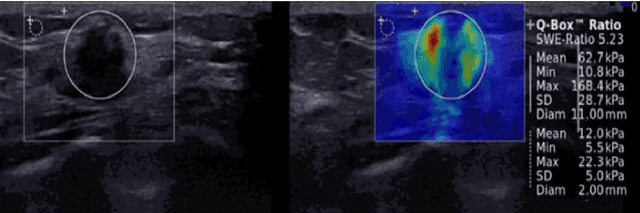

Abstract:Breast cancer (BC) stands as one of the most common malignancies affecting women worldwide, necessitating advancements in diagnostic methodologies for better clinical outcomes. This article provides a comprehensive exploration of the application of Explainable Artificial Intelligence (XAI) techniques in the detection and diagnosis of breast cancer. As Artificial Intelligence (AI) technologies continue to permeate the healthcare sector, particularly in oncology, the need for transparent and interpretable models becomes imperative to enhance clinical decision-making and patient care. This review discusses the integration of various XAI approaches, such as SHAP, LIME, Grad-CAM, and others, with machine learning and deep learning models utilized in breast cancer detection and classification. By investigating the modalities of breast cancer datasets, including mammograms, ultrasounds and their processing with AI, the paper highlights how XAI can lead to more accurate diagnoses and personalized treatment plans. It also examines the challenges in implementing these techniques and the importance of developing standardized metrics for evaluating XAI's effectiveness in clinical settings. Through detailed analysis and discussion, this article aims to highlight the potential of XAI in bridging the gap between complex AI models and practical healthcare applications, thereby fostering trust and understanding among medical professionals and improving patient outcomes.

Enhancing Breast Cancer Diagnosis in Mammography: Evaluation and Integration of Convolutional Neural Networks and Explainable AI

Apr 09, 2024

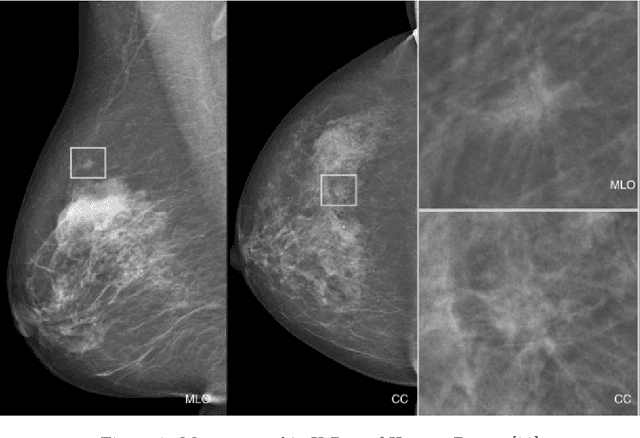

Abstract:The study introduces an integrated framework combining Convolutional Neural Networks (CNNs) and Explainable Artificial Intelligence (XAI) for the enhanced diagnosis of breast cancer using the CBIS-DDSM dataset. Utilizing a fine-tuned ResNet50 architecture, our investigation not only provides effective differentiation of mammographic images into benign and malignant categories but also addresses the opaque "black-box" nature of deep learning models by employing XAI methodologies, namely Grad-CAM, LIME, and SHAP, to interpret CNN decision-making processes for healthcare professionals. Our methodology encompasses an elaborate data preprocessing pipeline and advanced data augmentation techniques to counteract dataset limitations, as well as transfer learning with pre-trained networks such as VGG-16, DenseNet, and ResNet. A focal point of our study is the evaluation of XAI's effectiveness in interpreting model predictions, highlighted by utilising the Hausdorff measure to quantitatively assess the alignment between AI-generated explanations and expert annotations. This approach plays a critical role for XAI in promoting trustworthiness and ethical fairness in AI-assisted diagnostics. The findings from our research illustrate the effective collaboration between CNNs and XAI in advancing diagnostic methods for breast cancer, thereby facilitating a more seamless integration of advanced AI technologies within clinical settings. By enhancing the interpretability of AI-driven decisions, this work lays the groundwork for improved collaboration between AI systems and medical practitioners, ultimately enriching patient care. Furthermore, the implications of our research extend well beyond the current methodologies, advocating for subsequent inquiries into the integration of multimodal data and the refinement of AI explanations to satisfy the needs of clinical practice.
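As a rough illustration of the Hausdorff measure mentioned above, the sketch below computes the symmetric Hausdorff distance between two small 2D point sets, one standing in for the pixels highlighted by an explanation and one for an expert annotation. The coordinates are invented for the example, and how the study derives point sets from its heatmaps is not stated in the abstract, so that step is left out.

```python
import math


def directed_hausdorff(a, b):
    """Largest distance from a point in `a` to its nearest point in `b`."""
    return max(min(math.dist(p, q) for q in b) for p in a)


def hausdorff(a, b):
    """Symmetric Hausdorff distance between two non-empty point sets."""
    return max(directed_hausdorff(a, b), directed_hausdorff(b, a))


# Hypothetical pixel coordinates (row, column) for illustration only.
explanation_points = [(0, 0), (0, 1), (1, 0)]
annotation_points = [(0, 0), (0, 1), (4, 0)]

distance = hausdorff(explanation_points, annotation_points)
print(distance)
```

A smaller distance means the highlighted region lies closer to the annotated region; identical point sets give a distance of zero.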

Ethical Framework for Harnessing the Power of AI in Healthcare and Beyond

Aug 31, 2023

Abstract:In the past decade, the deployment of deep learning (Artificial Intelligence (AI)) methods has become pervasive across a spectrum of real-world applications, often in safety-critical contexts. This comprehensive research article rigorously investigates the ethical dimensions intricately linked to the rapid evolution of AI technologies, with a particular focus on the healthcare domain. Delving deeply, it explores a multitude of facets including transparency, adept data management, human oversight, educational imperatives, and international collaboration within the realm of AI advancement. Central to this article is the proposition of a conscientious AI framework, meticulously crafted to accentuate values of transparency, equity, answerability, and a human-centric orientation. The second contribution of the article is the in-depth and thorough discussion of the limitations inherent to AI systems. It astutely identifies potential biases and the intricate challenges of navigating multifaceted contexts. Lastly, the article unequivocally accentuates the pressing need for globally standardized AI ethics principles and frameworks. Simultaneously, it aptly illustrates the adaptability of the ethical framework proposed herein, positioned skillfully to surmount emergent challenges.

Pneumonia Detection in Chest X-Ray Images : Handling Class Imbalance

Jan 20, 2023

Abstract:People all over the globe are affected by pneumonia, but deaths due to it are highest in Sub-Saharan Africa and South Asia. In recent years, the overall incidence and mortality rate of pneumonia have escalated despite the availability of effective vaccines and antibiotics. Thus, pneumonia remains a disease that needs prompt prevention and treatment. The widespread prevalence of pneumonia has motivated the research community to develop frameworks that help detect, diagnose and analyze diseases accurately and promptly. One of the major hurdles faced by the Artificial Intelligence (AI) research community is the lack of publicly available datasets for chest diseases, including pneumonia. Secondly, the few available datasets are highly imbalanced (normal examples are over-represented, while samples with the ailment are in a severe minority), making the problem even more challenging. In this article we present a novel framework for the detection of pneumonia. The novelty of the proposed methodology lies in how it tackles the class imbalance problem. A Generative Adversarial Network (GAN), specifically a combination of Deep Convolutional Generative Adversarial Network (DCGAN) and Wasserstein GAN with gradient penalty (WGAN-GP), was applied to the minority class "Pneumonia" for augmentation, whereas Random Under-Sampling (RUS) was applied to the majority class "No Findings" to deal with the imbalance problem. The ChestX-Ray8 dataset, one of the biggest datasets, is used to validate the performance of the proposed framework. The learning phase is completed using transfer learning on state-of-the-art deep learning models, i.e., ResNet-50, Xception, and VGG-16. Results obtained exceed state-of-the-art.
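The Random Under-Sampling (RUS) step on the majority class can be sketched as follows. This covers only the under-sampling half of the approach; the GAN-based augmentation of the minority class is not shown. The class sizes, the target count, and the helper name `random_under_sample` are assumptions made for illustration, not values from the paper.

```python
import random
from collections import Counter


def random_under_sample(samples, labels, majority_label, target_count, seed=0):
    """Randomly keep at most `target_count` majority-class samples."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    majority_idx = [i for i, y in enumerate(labels) if y == majority_label]
    n_keep = min(target_count, len(majority_idx))
    keep_majority = set(rng.sample(majority_idx, n_keep))
    # Keep every non-majority sample plus the randomly chosen majority ones.
    kept = [i for i, y in enumerate(labels)
            if y != majority_label or i in keep_majority]
    return [samples[i] for i in kept], [labels[i] for i in kept]


# Hypothetical imbalanced dataset: 90 "No Findings" vs 10 "Pneumonia".
labels = ["No Findings"] * 90 + ["Pneumonia"] * 10
samples = list(range(100))  # stand-ins for image identifiers

balanced_samples, balanced_labels = random_under_sample(
    samples, labels, majority_label="No Findings", target_count=10)
counts = Counter(balanced_labels)
print(counts)
```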

Artificial Intelligence For Breast Cancer Detection: Trends & Directions

Oct 03, 2021

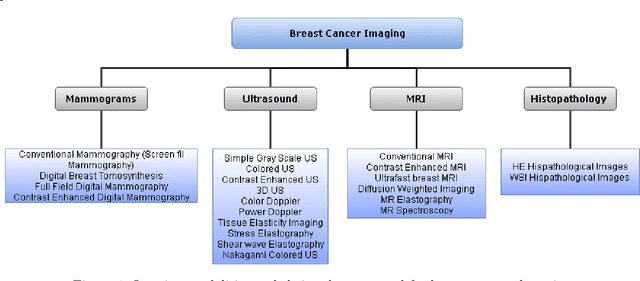

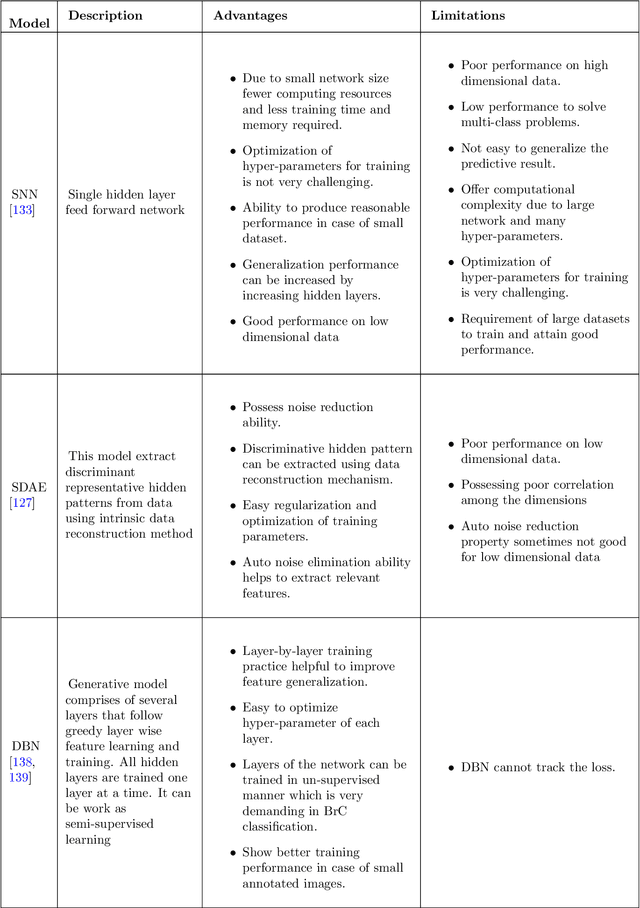

Abstract:In the last decade, researchers working in the domain of computer vision and Artificial Intelligence (AI) have stepped up their efforts to develop automated frameworks that not only detect but also identify the stage of breast cancer. This surge in research activity is mainly due to the advent of robust AI algorithms (deep learning), the availability of hardware that can train those robust and complex AI algorithms, and the accessibility of datasets large enough for training them. The imaging modalities that researchers have exploited to automate breast cancer detection are mammograms, ultrasound, magnetic resonance imaging, histopathological images, or any combination of them. This article analyzes these imaging modalities, presents their strengths and limitations, and lists resources from which their datasets can be accessed for research purposes. This article then summarizes state-of-the-art AI and computer vision methods proposed in the last decade to detect breast cancer using various imaging modalities. In general, we have focused on reviewing frameworks that report results using mammograms, as mammography is the most widely used breast imaging modality and serves as the first test that medical practitioners usually prescribe for the detection of breast cancer. The second reason for focusing on mammograms is the availability of labeled datasets. Dataset availability is one of the most important aspects of developing AI-based frameworks, as such algorithms are data hungry and the quality of the dataset generally affects their performance. In a nutshell, this research article will act as a primary resource for the research community working in the field of automated breast imaging analysis.

Handwritten Optical Character Recognition (OCR): A Comprehensive Systematic Literature Review (SLR)

Jan 01, 2020

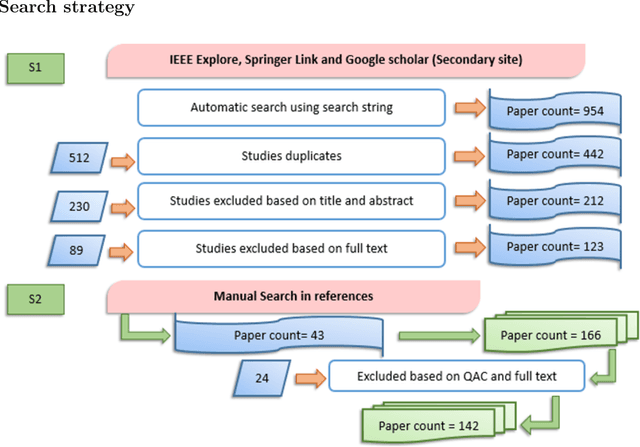

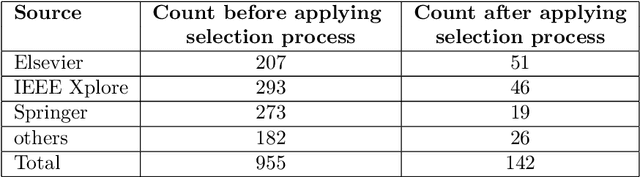

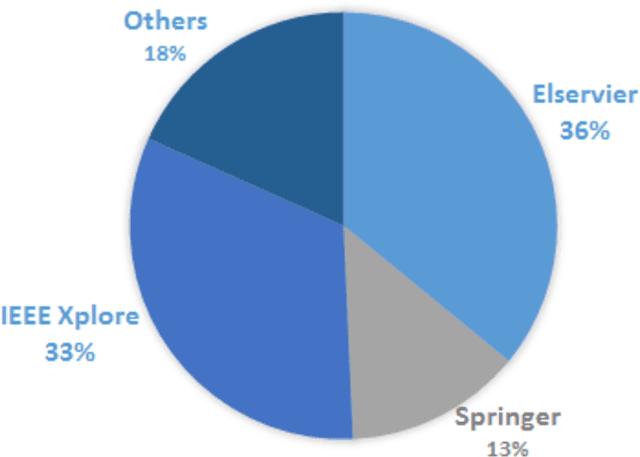

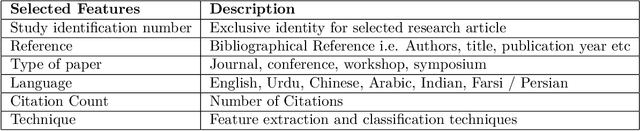

Abstract:Given the ubiquity of handwritten documents in human transactions, Optical Character Recognition (OCR) of documents has invaluable practical worth. Optical character recognition is a science that enables the translation of various types of documents or images into analyzable, editable and searchable data. During the last decade, researchers have used artificial intelligence / machine learning tools to automatically analyze handwritten and printed documents in order to convert them into electronic format. The objective of this review paper is to summarize research that has been conducted on character recognition of handwritten documents and to provide research directions. In this Systematic Literature Review (SLR) we collected, synthesized and analyzed research articles on the topic of handwritten OCR (and closely related topics) that were published between 2000 and 2018. We searched widely used electronic databases following a pre-defined review protocol. Articles were searched using keywords, forward reference searching and backward reference searching in order to find all the articles related to the topic. After carefully following the study selection process, 142 articles were selected for this SLR. This review article serves the purpose of presenting state-of-the-art results and techniques on OCR and also provides research directions by highlighting research gaps.

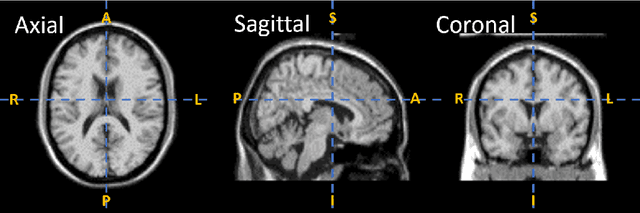

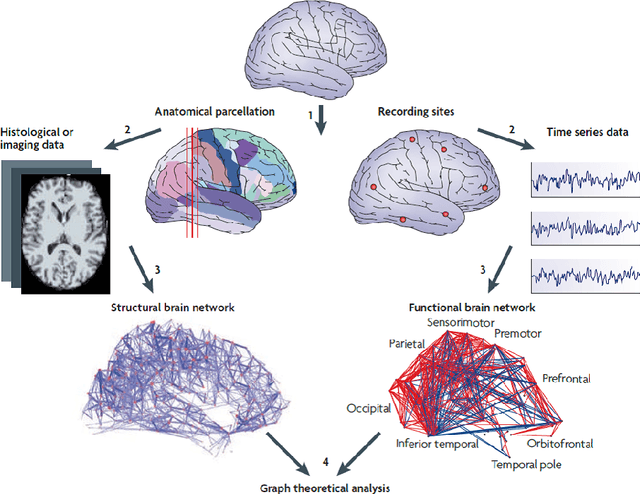

A novel framework for automatic detection of Autism: A study on Corpus Callosum and Intracranial Brain Volume

Mar 27, 2019

Abstract:Computer vision and machine learning are the linchpin of the field of automation. Medicine has adopted numerous methods to discover the root causes of many diseases in order to automate the detection process. However, the biomarkers of Autism Spectrum Disorder (ASD) are still unknown, let alone automating its detection, due to the intense connectivity of neurological patterns in the brain. Studies from the neuroscience domain have highlighted that the corpus callosum and intracranial brain volume hold significant information for the detection of ASD. Such results have not been tested and verified by scientists working in the domain of computer vision / machine learning. Thus, in this study we have applied machine learning algorithms to features extracted from corpus callosum and intracranial brain volume data. These data are obtained from the s-MRI (structural Magnetic Resonance Imaging) dataset known as ABIDE (Autism Brain Imaging Data Exchange). Our proposed framework for automatic detection of ASD shows the potential of machine learning algorithms for understanding neuroimaging data and detecting ASD. The proposed framework improved the achieved accuracy by calculating the weights / importance of features extracted from corpus callosum and intracranial brain volume data.
