Ripon Kumar Saha

From Hubs to Deserts: Urban Cultural Accessibility Patterns with Explainable AI

Nov 08, 2025

Abstract: Cultural infrastructures, such as libraries, museums, theaters, and galleries, support learning, civic life, health, and local economies, yet access is uneven across cities. We present a novel, scalable, and open-data framework to measure spatial equity in cultural access. We map cultural infrastructures and compute a metric called Cultural Infrastructure Accessibility Score (CIAS) using exponential distance decay at fine spatial resolution, then aggregate the score per capita and integrate socio-demographic indicators. Interpretable tree-ensemble models with SHapley Additive exPlanation (SHAP) are used to explain associations between accessibility, income, density, and tract-level racial/ethnic composition. Results show a pronounced core-periphery gradient, where non-library cultural infrastructures cluster near urban cores, while libraries track density and provide broader coverage. Non-library accessibility is modestly higher in higher-income tracts, and library accessibility is slightly higher in denser, lower-income areas.
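As a rough illustration of the exponential distance decay idea mentioned in the abstract, the sketch below sums `exp(-beta * d)` over facilities for each origin point and then divides by population. The function names, the decay rate `beta`, the use of planar Euclidean distance, and the per-capita normalization are all assumptions made for illustration; the abstract does not specify the exact CIAS formula.

```python
import numpy as np

def accessibility_score(origins, facilities, beta=1.0):
    """Hypothetical decay-weighted score: sum of exp(-beta * distance)
    from each origin to every facility (planar coordinates, e.g. km)."""
    origins = np.asarray(origins, dtype=float)
    facilities = np.asarray(facilities, dtype=float)
    # Pairwise Euclidean distances, shape (n_origins, n_facilities)
    diff = origins[:, None, :] - facilities[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    return np.exp(-beta * dist).sum(axis=1)

def per_capita(scores, population):
    """Divide scores by population; unpopulated cells become NaN."""
    scores = np.asarray(scores, dtype=float)
    population = np.asarray(population, dtype=float)
    out = np.full(scores.shape, np.nan)
    populated = population > 0
    out[populated] = scores[populated] / population[populated]
    return out

# Two origin cells and one facility located at the first cell.
demo_scores = accessibility_score([[0.0, 0.0], [3.0, 4.0]], [[0.0, 0.0]], beta=1.0)
demo_per_capita = per_capita(demo_scores, [2.0, 0.0])
```

Here the first cell sits on the facility (distance 0, contribution 1), while the second is 5 units away and contributes `exp(-5)`. A larger `beta` makes the score fall off faster with distance.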

Turbulence Strength $C_n^2$ Estimation from Video using Physics-based Deep Learning

Aug 29, 2024

Abstract: Images captured from a long distance suffer from dynamic image distortion caused by the turbulent flow of air cells with random temperatures, and thus random refractive indices. This phenomenon, known as image dancing, is commonly characterized by the refractive-index structure constant $C_n^2$, a measure of turbulence strength. For many applications, such as atmospheric forecast models, long-range and astronomical imaging, aviation safety, and optical communication technology, $C_n^2$ estimation is critical for accurately sensing the turbulent environment. Previous methods for $C_n^2$ estimation include estimation from meteorological data (temperature, relative humidity, wind shear, etc.) for single-point measurements, two-ended pathlength measurements from an optical scintillometer for path-averaged $C_n^2$, and, more recently, estimation from passive video cameras, which reduces cost and hardware complexity. In this paper, we present a comparative analysis of classical image gradient methods for $C_n^2$ estimation and modern deep learning-based methods leveraging convolutional neural networks. To enable this, we collect a dataset of video captures along with reference scintillometer measurements for ground truth, and we release this unique dataset to the scientific community. We observe that deep learning methods can achieve higher accuracy when trained on similar data, but generalize worse to other, unseen imagery than classical methods do. To overcome this trade-off, we present a novel physics-based network architecture that combines learned convolutional layers with a differentiable image gradient method, maintaining high accuracy while generalizing across image datasets.

* Code Available: https://github.com/Riponcs/Cn2Estimation

Turb-Seg-Res: A Segment-then-Restore Pipeline for Dynamic Videos with Atmospheric Turbulence

Apr 21, 2024

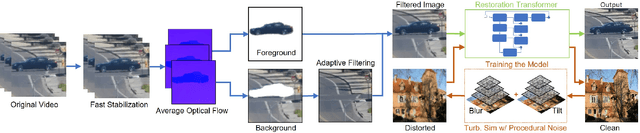

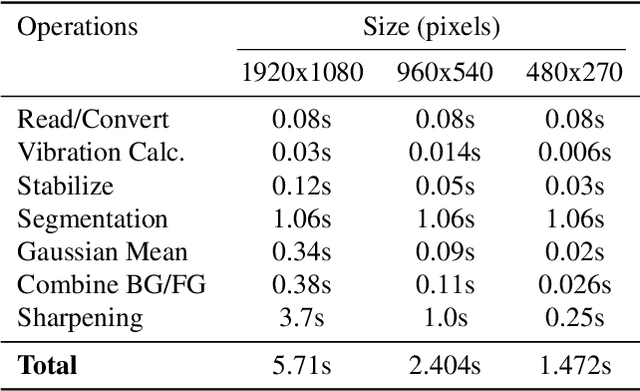

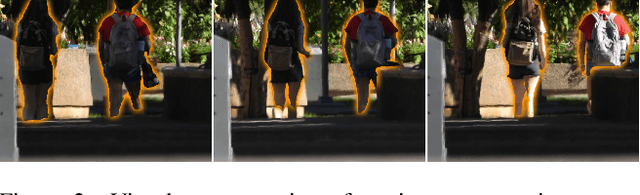

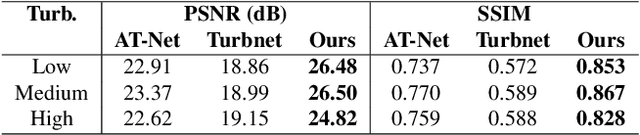

Abstract: Tackling image degradation due to atmospheric turbulence, particularly in dynamic environments, remains a challenge for long-range imaging systems. Existing techniques have been primarily designed for static scenes or scenes with small motion. This paper presents the first segment-then-restore pipeline for restoring videos of dynamic scenes in turbulent environments. We leverage mean optical flow with an unsupervised motion segmentation method to separate dynamic and static scene components prior to restoration. After camera shake compensation and segmentation, we introduce foreground/background enhancement leveraging the statistics of turbulence strength and a transformer model trained on a novel noise-based procedural turbulence generator for fast dataset augmentation. Benchmarked against existing restoration methods, our approach corrects most of the geometric distortion and enhances the sharpness of videos. We make our code, simulator, and data publicly available to advance the field of video restoration from turbulence: riponcs.github.io/TurbSegRes
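One way to picture the role of mean optical flow in separating dynamic from static regions: turbulence-induced displacement tends to jitter around zero over time, while genuine object motion is more persistent, so averaging flow across frames suppresses the former. The sketch below is a simplified stand-in, not the paper's unsupervised segmentation method. It assumes precomputed flow fields, and the function name and the fixed `threshold` are hypothetical.

```python
import numpy as np

def segment_by_mean_flow(flows, threshold=0.5):
    """Return a boolean (H, W) mask of pixels whose time-averaged
    flow magnitude exceeds `threshold` (assumed units: pixels/frame).

    flows: array of shape (T, H, W, 2) holding per-frame (dx, dy).
    """
    flows = np.asarray(flows, dtype=float)
    mean_flow = flows.mean(axis=0)                  # (H, W, 2)
    magnitude = np.linalg.norm(mean_flow, axis=-1)  # (H, W)
    return magnitude > threshold

# Synthetic example: the background jitters by +1/-1 on alternating
# frames (zero mean), while a 2x2 patch moves steadily to the right.
T, H, W = 4, 6, 6
flows = np.zeros((T, H, W, 2))
flows[0::2] += 1.0
flows[1::2] -= 1.0
flows[:, 2:4, 2:4, :] = [2.0, 0.0]
mask = segment_by_mean_flow(flows)
```

In this toy input only the 2x2 patch survives the averaging, so the mask marks it as dynamic and the jittering background as static.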

AI-based automated Meibomian gland segmentation, classification and reflection correction in infrared Meibography

May 31, 2022

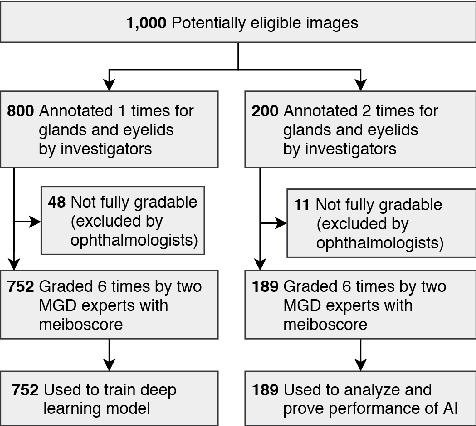

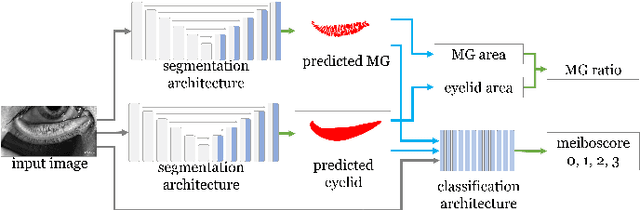

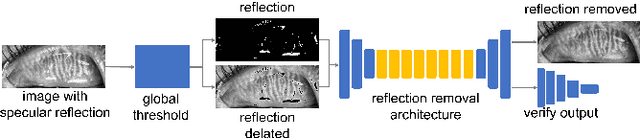

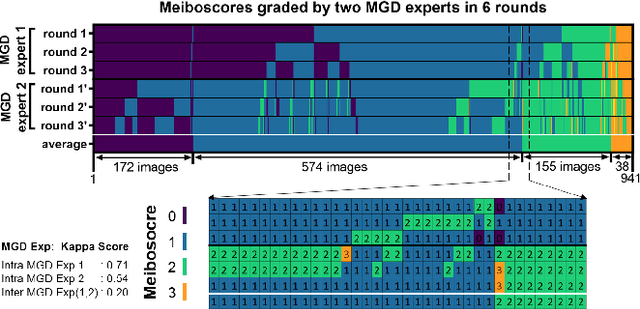

Abstract: Purpose: Develop a deep learning-based automated method to segment meibomian glands (MG) and eyelids, quantitatively analyze the MG area and MG ratio, estimate the meiboscore, and remove specular reflections from infrared images. Methods: A total of 1600 meibography images were captured in a clinical setting. 1000 images were precisely annotated with multiple revisions by investigators and graded 6 times by meibomian gland dysfunction (MGD) experts. Two deep learning (DL) models were trained separately to segment the MG and eyelid areas. These segmentations were used to estimate MG ratio and meiboscores using a classification-based DL model. A generative adversarial network was implemented to remove specular reflections from the original images. Results: The mean MG ratio calculated from investigator annotation and from DL segmentation was consistent: 26.23% vs. 25.12% in the upper eyelids and 32.34% vs. 32.29% in the lower eyelids, respectively. Our DL model achieved 73.01% accuracy for meiboscore classification on the validation set and 59.17% accuracy when tested on images from an independent center, compared to 53.44% validation accuracy by MGD experts. The DL-based approach successfully removes reflections from the original MG images without affecting meiboscore grading. Conclusions: DL with infrared meibography provides a fully automated, fast quantitative evaluation of MG morphology (MG segmentation, MG area, MG ratio, and meiboscore) that is sufficiently accurate for diagnosing dry eye disease. The DL approach also removes specular reflections from images, allowing ophthalmologists to assess them without distraction.
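To make the MG ratio concrete, the sketch below computes it from two binary segmentation masks. The abstract does not define the ratio explicitly, so this assumes it is the gland area inside the eyelid region divided by the eyelid area, expressed as a percentage. The function name and mask layout are hypothetical.

```python
import numpy as np

def mg_ratio(gland_mask, eyelid_mask):
    """Assumed definition: percentage of eyelid pixels that are also
    labeled as meibomian gland."""
    gland = np.asarray(gland_mask, dtype=bool)
    eyelid = np.asarray(eyelid_mask, dtype=bool)
    eyelid_area = int(eyelid.sum())
    if eyelid_area == 0:
        raise ValueError("eyelid mask is empty")
    # Count only gland pixels that fall inside the eyelid region.
    gland_area = int(np.logical_and(gland, eyelid).sum())
    return 100.0 * gland_area / eyelid_area

# Toy masks: a 4x4 eyelid region containing a 2x2 gland region.
eyelid = np.ones((4, 4), dtype=bool)
gland = np.zeros((4, 4), dtype=bool)
gland[1:3, 1:3] = True
ratio = mg_ratio(gland, eyelid)  # 4 of 16 pixels
```

With these toy masks the ratio is 25%, the same order of magnitude as the upper-eyelid values reported in the abstract, though the numbers here are purely illustrative.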
