Rasmus Adler

Assurance Cases as Foundation Stone for Auditing AI-enabled and Autonomous Systems: Workshop Results and Political Recommendations for Action from the ExamAI Project

Aug 17, 2022

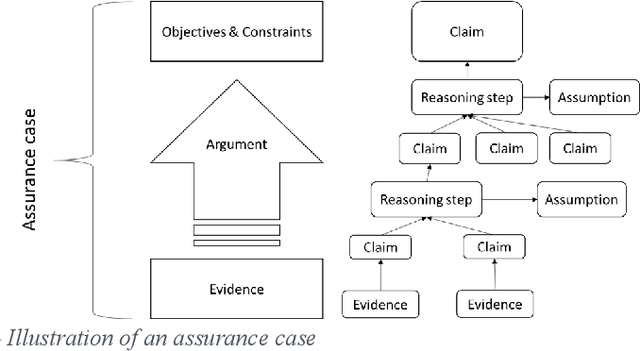

Abstract: The European Machinery Directive and related harmonized standards do consider that software is used to generate safety-relevant behavior of the machinery but do not consider all kinds of software. In particular, software based on machine learning (ML) is not considered for the realization of safety-relevant behavior. This limits the introduction of suitable safety concepts for autonomous mobile robots and other autonomous machinery, which commonly depend on ML-based functions. We investigated this issue and the way safety standards define safety measures to be implemented against software faults. Functional safety standards use Safety Integrity Levels (SILs) to define which safety measures shall be implemented. They provide rules for determining the SIL and rules for selecting safety measures depending on the SIL. In this paper, we argue that this approach can hardly be adopted with respect to ML and other kinds of Artificial Intelligence (AI). Instead of simple rules for determining an SIL and applying related measures against faults, we propose the use of assurance cases to argue that the individually selected and applied measures are sufficient in the given case. To get a first rating regarding the feasibility and usefulness of our proposal, we presented and discussed it in a workshop with experts from industry, German statutory accident insurance companies, work safety and standardization commissions, and representatives from various national, European, and international working groups dealing with safety and AI. In this paper, we summarize the proposal and the workshop discussion. Moreover, we check to what extent our proposal is in line with the European AI Act proposal and current safety standardization initiatives addressing AI and Autonomous Systems.

Architectural patterns for handling runtime uncertainty of data-driven models in safety-critical perception

Jun 14, 2022

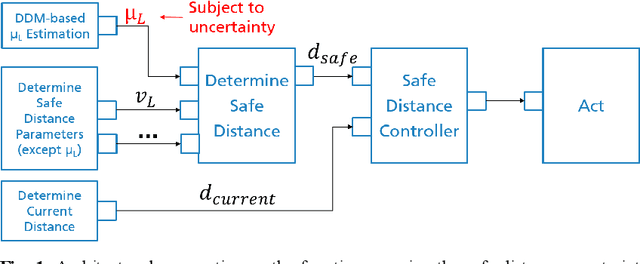

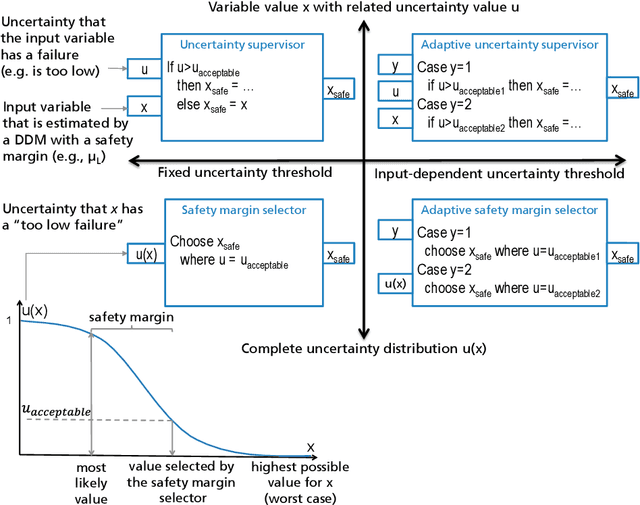

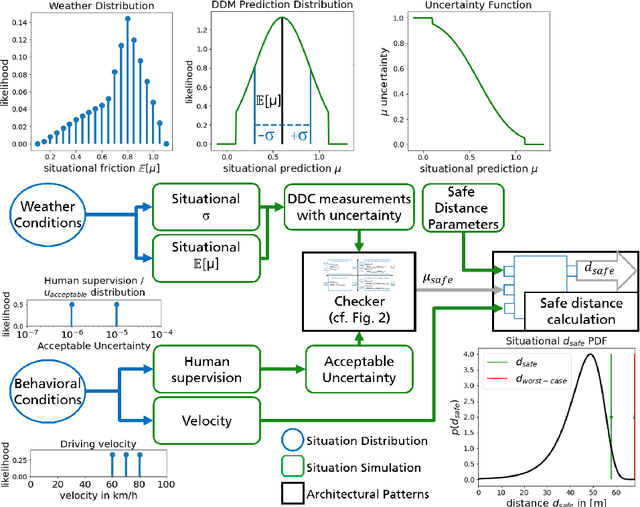

Abstract: Data-driven models (DDMs) based on machine learning and other AI techniques play an important role in the perception of increasingly autonomous systems. Due to the merely implicit definition of their behavior mainly based on the data used for training, DDM outputs are subject to uncertainty. This poses a challenge with respect to the realization of safety-critical perception tasks by means of DDMs. A promising approach to tackling this challenge is to estimate the uncertainty in the current situation during operation and adapt the system behavior accordingly. In previous work, we focused on runtime estimation of uncertainty and discussed approaches for handling uncertainty estimations. In this paper, we present additional architectural patterns for handling uncertainty. Furthermore, we evaluate the four patterns qualitatively and quantitatively with respect to safety and performance gains. For the quantitative evaluation, we consider a distance controller for vehicle platooning where performance gains are measured by considering how much the distance can be reduced in different operational situations. We conclude that the consideration of context information of the driving situation makes it possible to accept more or less uncertainty depending on the inherent risk of the situation, which results in performance gains.

Integrating Testing and Operation-related Quantitative Evidences in Assurance Cases to Argue Safety of Data-Driven AI/ML Components

Feb 10, 2022

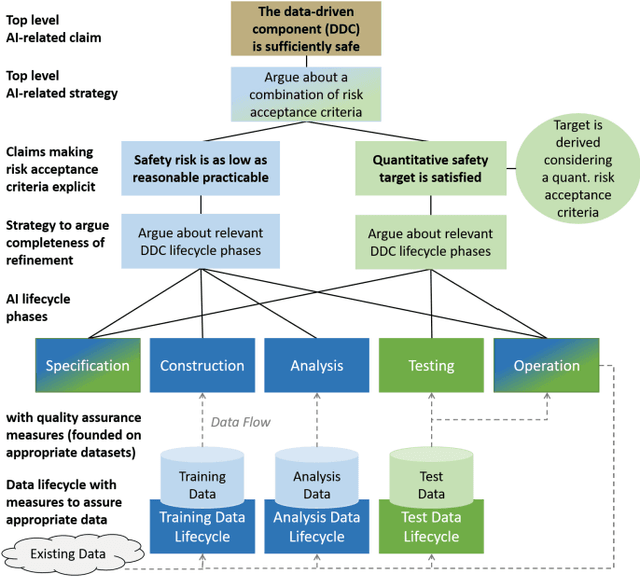

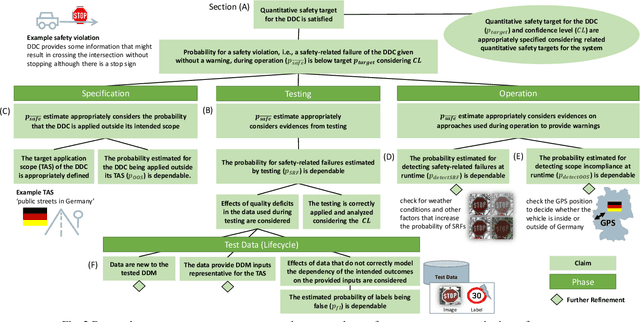

Abstract: In the future, AI will increasingly find its way into systems that can potentially cause physical harm to humans. For such safety-critical systems, it must be demonstrated that their residual risk does not exceed what is acceptable. This includes, in particular, the AI components that are part of such systems' safety-related functions. Assurance cases are an intensively discussed option today for specifying a sound and comprehensive safety argument to demonstrate a system's safety. In previous work, it has been suggested to argue safety for AI components by structuring assurance cases based on two complementary risk acceptance criteria. One of these criteria is used to derive quantitative targets regarding the AI. The argumentation structures commonly proposed to show the achievement of such quantitative targets, however, focus on failure rates from statistical testing. Further important aspects are only considered in a qualitative manner, if at all. In contrast, this paper proposes a more holistic argumentation structure for having achieved the target, namely a structure that integrates test results with runtime aspects and the impact of scope compliance and test data quality in a quantitative manner. We elaborate different argumentation options, present the underlying mathematical considerations, and discuss resulting implications for their practical application. Using the proposed argumentation structure might not only increase the integrity of assurance cases but may also allow claims on quantitative targets that would not be justifiable otherwise.
