Rae-Hong Park

SwinLip: An Efficient Visual Speech Encoder for Lip Reading Using Swin Transformer

May 07, 2025

Abstract:This paper presents an efficient visual speech encoder for lip reading. While most recent lip reading studies have been based on the ResNet architecture and have achieved significant success, such models are not well suited to capturing lip reading features efficiently because of the high computational complexity of modeling spatio-temporal information. Additionally, a complex visual model not only increases the complexity of lip reading models but also introduces delays into the overall network in multi-modal studies (e.g., audio-visual speech recognition, speech enhancement, and speech separation). To overcome the limitations of Convolutional Neural Network (CNN)-based models, we apply the hierarchical structure and window self-attention of the Swin Transformer to lip reading. We configure a new lightweight scale of the Swin Transformer suitable for processing lip reading data and present the SwinLip visual speech encoder, which efficiently reduces computational load by integrating modified Convolution-augmented Transformer (Conformer) temporal embeddings with conventional spatial embeddings in the hierarchical structure. Through extensive experiments, we validate that SwinLip improves both the performance and the inference speed of the lip reading network, while reducing computational load, when applied to various backbones for word and sentence recognition. In particular, SwinLip demonstrates robust performance on both the English LRW and the Mandarin LRW-1000 datasets and achieves state-of-the-art performance on LRW-1000 with less computation than the existing state-of-the-art model.
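Window self-attention is what keeps the Swin Transformer cheap: tokens attend only within fixed M×M windows, so the number of query-key scores grows linearly with the number of tokens instead of quadratically. A minimal, stdlib-only sketch of generic Swin-style window partitioning and the resulting score count follows. It assumes a single-channel feature map stored as nested lists, omits the shifted-window step, and does not reproduce SwinLip's actual scale, window size, or Conformer temporal embeddings; the names `window_partition` and `attention_pairs` are hypothetical.

```python
# Generic Swin-style window partitioning (no shifted windows), stdlib only.
# Assumption: a single-channel H x W feature map stored as nested lists;
# real encoders operate on (batch, H, W, channels) tensors.

def window_partition(feature, m):
    """Split an H x W grid into non-overlapping m x m windows, row-major order."""
    h, w = len(feature), len(feature[0])
    if h % m or w % m:
        raise ValueError("H and W must be divisible by the window size m")
    windows = []
    for top in range(0, h, m):
        for left in range(0, w, m):
            windows.append([row[left:left + m] for row in feature[top:top + m]])
    return windows

def attention_pairs(h, w, m=None):
    """Count query-key scores for global attention (m=None) or m x m windows.

    For windowed attention, H and W are assumed divisible by m.
    """
    n = h * w
    if m is None:
        return n * n                       # every token attends to every token
    return (n // (m * m)) * (m * m) ** 2   # equals n * m^2: linear in n for fixed m

# Usage on a toy 8 x 8 map whose entries encode their (row, col) position.
fmap = [[r * 8 + c for c in range(8)] for r in range(8)]
wins = window_partition(fmap, 4)
print(len(wins))                 # 4
print(wins[1][0])                # [4, 5, 6, 7]
print(attention_pairs(8, 8))     # 4096
print(attention_pairs(8, 8, 4))  # 1024
```

On this toy map, 4×4 windows need 1,024 scores versus 4,096 for global attention, and the ratio (HW/M²) widens as the input resolution grows.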

OLKAVS: An Open Large-Scale Korean Audio-Visual Speech Dataset

Jan 16, 2023

Abstract:Inspired by humans comprehending speech in a multi-modal manner, various audio-visual datasets have been constructed. However, most existing datasets focus on English, induce dependencies with various prediction models during dataset preparation, and have only a small number of multi-view videos. To mitigate these limitations, we recently developed the Open Large-scale Korean Audio-Visual Speech (OLKAVS) dataset, which is the largest among publicly available audio-visual speech datasets. The dataset contains 1,150 hours of transcribed audio from 1,107 Korean speakers in a studio setup with nine different viewpoints and various noise situations. We also provide pre-trained baseline models for two tasks, audio-visual speech recognition and lip reading. We conducted experiments based on these models to verify the effectiveness of multi-modal and multi-view training over uni-modal and frontal-view-only training. We expect the OLKAVS dataset to facilitate multi-modal research in broader areas such as Korean speech recognition, speaker recognition, pronunciation level classification, and mouth motion analysis.

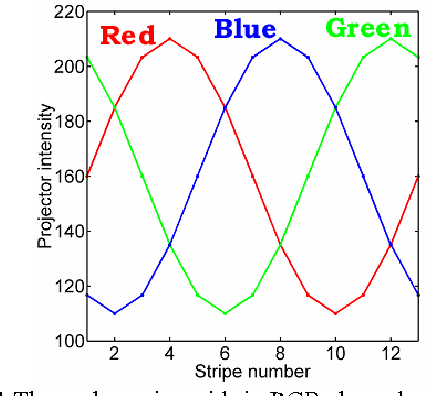

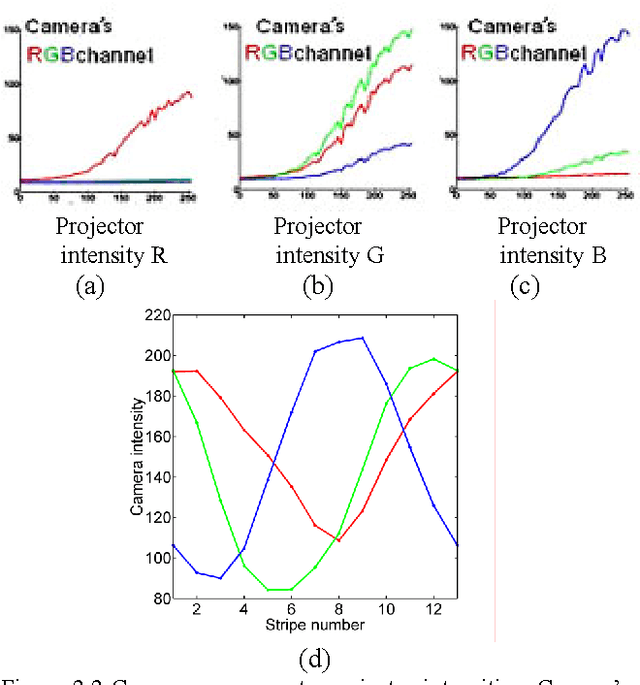

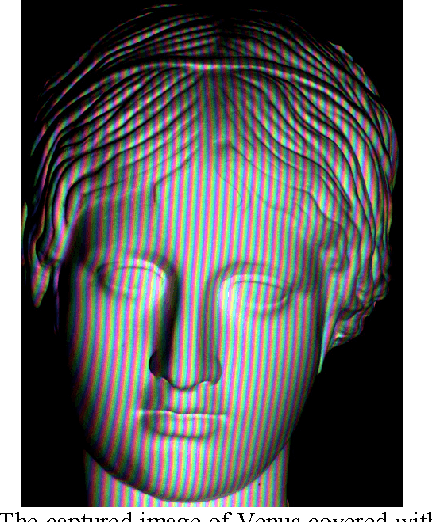

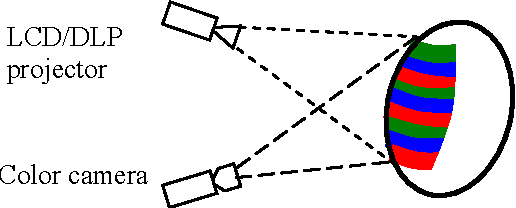

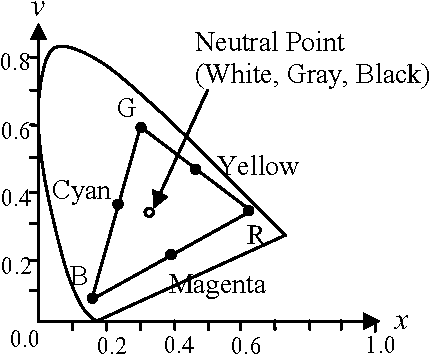

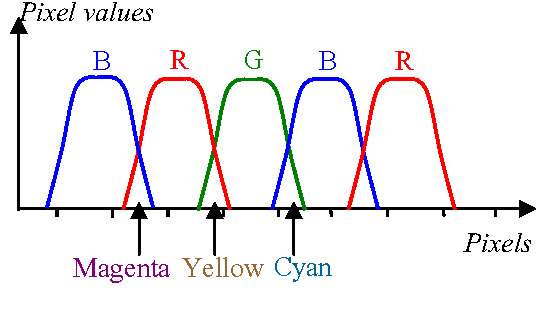

Color-Phase Analysis for Sinusoidal Structured Light in Rapid Range Imaging

Sep 14, 2015

Abstract:Active range sensing using structured light is the most accurate and reliable method for obtaining 3D information. However, most of this work has been limited to range sensing of static objects, and range sensing of dynamic (moving or deforming) objects has only recently been investigated, by a few researchers. Sinusoidal structured light is one of the well-known optical methods for 3D measurement. In this paper, we present a novel method for rapid high-resolution range imaging using a color sinusoidal pattern. We consider the real-world problems of nonlinearity and color-band crosstalk in the color light projector and color camera, and present methods for accurate recovery of the color phase. For high-resolution ranging, we use high-frequency patterns and describe new unwrapping algorithms for reliable range recovery. The experimental results demonstrate the effectiveness of our methods.

* 6 pages, 12 figures. 6th Asian Conference on Computer Vision (ACCV 2004)
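One common way to read a color sinusoidal pattern is as the three images of a standard three-step phase-shifting scheme, followed by phase unwrapping. The sketch below illustrates only that textbook baseline, under loudly stated assumptions: the R, G, B channels carry phase shifts of −2π/3, 0, and +2π/3, and the channels are ideal (no projector/camera nonlinearity or color-band crosstalk, which the paper explicitly corrects for). The unwrapping shown is the classic 1-D neighbor-difference method, not the paper's new unwrapping algorithms; `SHIFTS`, `wrapped_phase`, and `unwrap` are hypothetical names.

```python
import math

# Assumed phase shifts for the R, G, B channels (an illustrative choice).
SHIFTS = (-2.0 * math.pi / 3.0, 0.0, 2.0 * math.pi / 3.0)

def wrapped_phase(i1, i2, i3):
    """Wrapped phase in [-pi, pi] from samples I_k = A + B*cos(phi + SHIFTS[k]).

    Uses tan(phi) = sqrt(3)*(I1 - I3) / (2*I2 - I1 - I3); assumes ideal,
    linear, crosstalk-free channels.
    """
    return math.atan2(math.sqrt(3.0) * (i1 - i3), 2.0 * i2 - i1 - i3)

def unwrap(phases):
    """Classic 1-D unwrapping: undo 2*pi jumps between neighboring samples.

    Valid only while the true phase changes by less than pi per sample; the
    result matches the true phase up to a constant multiple of 2*pi.
    """
    out = [phases[0]]
    offset = 0.0
    for prev, cur in zip(phases, phases[1:]):
        step = cur - prev
        if step > math.pi:
            offset -= 2.0 * math.pi
        elif step < -math.pi:
            offset += 2.0 * math.pi
        out.append(cur + offset)
    return out

# Usage: synthesize one scanline whose true phase ramps well past 2*pi.
A, B = 0.5, 0.4                      # hypothetical offset and modulation
true_phi = [0.3 * x for x in range(40)]
pixels = [tuple(A + B * math.cos(p + s) for s in SHIFTS) for p in true_phi]
recovered = unwrap([wrapped_phase(*px) for px in pixels])
print(max(abs(r - t) for r, t in zip(recovered, true_phi)))  # near 0 (rounding only)
```

The 1-D neighbor rule breaks down exactly where high-frequency patterns are most useful (phase steps approaching π per pixel, or depth discontinuities), which is why more robust unwrapping is needed for reliable high-resolution ranging.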

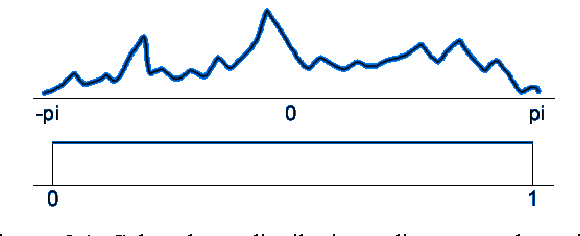

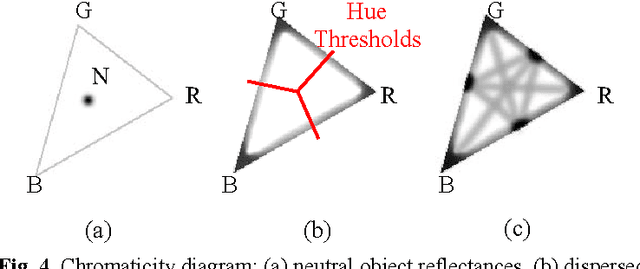

High-Contrast Color-Stripe Pattern for Rapid Structured-Light Range Imaging

Aug 20, 2015

Abstract:For structured-light range imaging, color stripes can be used to increase the number of distinguishable light patterns compared to binary black-and-white (BW) stripes. Therefore, an appropriate use of color patterns can reduce the number of light projections, so that range imaging is achievable in a single video frame, or in "one shot". On the other hand, the reliability and range resolution attainable from color stripes are generally lower than those from multiply projected binary BW patterns, since color contrast is affected by object color reflectance and ambient light. This paper presents new methods for selecting stripe colors and designing multiple-stripe patterns for "one-shot" and "two-shot" imaging. We show that maximizing the color contrast between the stripes in one-shot imaging reduces the ambiguities resulting from colored object surfaces and limitations in sensor/projector resolution. Two-shot imaging adds an extra video frame and maximizes the color contrast between the first and second video frames to reduce the ambiguities even further. Experimental results demonstrate the effectiveness of the presented one-shot and two-shot color-stripe imaging schemes.

* 13 pages, 12 figures, 8th European Conference on Computer Vision (ECCV), Prague, Czech Republic, May 2004, Proceedings, Part I
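To see why contrast between two frames helps against object color reflectance and ambient light, consider a hypothetical toy model, not the paper's pattern design: each projected channel is binary, the camera sees `reflectance * projected + ambient` per channel, and the second frame projects the per-channel complement of the first (one simple way to maximize binary inter-frame contrast). Under these assumptions the inter-frame difference equals `reflectance * (2 * bit - 1)`, so its sign recovers the projected bit whenever reflectance is positive, while a fixed single-frame threshold can fail on poorly reflecting channels. All function names are illustrative.

```python
# Hypothetical two-shot illustration; not the pattern design from the paper.
# Assumed camera model per channel: observed = reflectance * projected + ambient,
# with projected values in {0, 1} and reflectance/ambient identical in both frames.

def observe(projected, reflectance, ambient):
    """Simulated camera response for one stripe under the linear model above."""
    return tuple(r * p + a for p, r, a in zip(projected, reflectance, ambient))

def complement(color):
    """Second-frame color that flips every binary channel (maximal per-channel change)."""
    return tuple(1 - c for c in color)

def decode_one_shot(frame, threshold=0.5):
    """Naive single-frame decoding with a fixed per-channel threshold."""
    return tuple(1 if v > threshold else 0 for v in frame)

def decode_two_shot(frame1, frame2):
    """Decode each bit from the sign of the inter-frame difference.

    frame1 - frame2 = reflectance * (2 * bit - 1), so the sign recovers the bit
    whenever reflectance > 0, independent of ambient light.
    """
    return tuple(1 if v1 > v2 else 0 for v1, v2 in zip(frame1, frame2))

# Usage: a magenta stripe (R and B on) falling on a surface that reflects red poorly.
stripe = (1, 0, 1)
reflectance = (0.3, 0.9, 0.6)        # hypothetical surface color
ambient = (0.1, 0.1, 0.1)            # hypothetical ambient light
f1 = observe(stripe, reflectance, ambient)
f2 = observe(complement(stripe), reflectance, ambient)
print(decode_one_shot(f1))           # (0, 0, 1): red channel misread
print(decode_two_shot(f1, f2))       # (1, 0, 1): stripe recovered
```

The price is the extra video frame, so the scheme assumes the scene moves little between the two captures.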