Pouya Khani

IBO: Inpainting-Based Occlusion to Enhance Explainable Artificial Intelligence Evaluation in Histopathology

Sep 03, 2024Abstract:Histopathological image analysis is crucial for accurate cancer diagnosis and treatment planning. While deep learning models, especially convolutional neural networks, have advanced this field, their "black-box" nature raises concerns about interpretability and trustworthiness. Explainable Artificial Intelligence (XAI) techniques aim to address these concerns, but evaluating their effectiveness remains challenging. A significant issue with current occlusion-based XAI methods is that they often generate Out-of-Distribution (OoD) samples, leading to inaccurate evaluations. In this paper, we introduce Inpainting-Based Occlusion (IBO), a novel occlusion strategy that utilizes a Denoising Diffusion Probabilistic Model to inpaint occluded regions in histopathological images. By replacing cancerous areas with realistic, non-cancerous tissue, IBO minimizes OoD artifacts and preserves data integrity. We evaluate our method on the CAMELYON16 dataset through two phases: first, by assessing perceptual similarity using the Learned Perceptual Image Patch Similarity (LPIPS) metric, and second, by quantifying the impact on model predictions through Area Under the Curve (AUC) analysis. Our results demonstrate that IBO significantly improves perceptual fidelity, achieving nearly twice the improvement in LPIPS scores compared to the best existing occlusion strategy. Additionally, IBO increased the precision of XAI performance prediction from 42% to 71% compared to traditional methods. These results demonstrate IBO's potential to provide more reliable evaluations of XAI techniques, benefiting histopathology and other applications. The source code for this study is available at https://github.com/a-fsh-r/IBO.

TbExplain: A Text-based Explanation Method for Scene Classification Models with the Statistical Prediction Correction

Jul 19, 2023

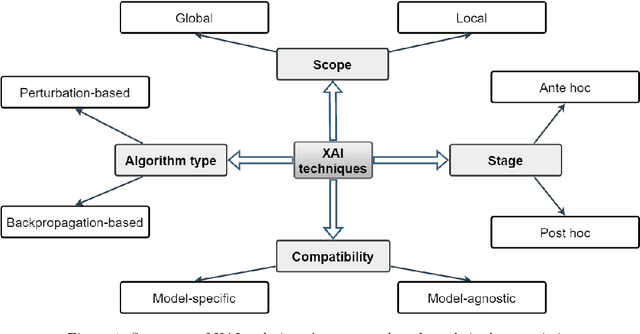

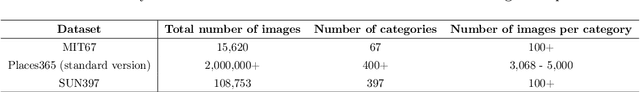

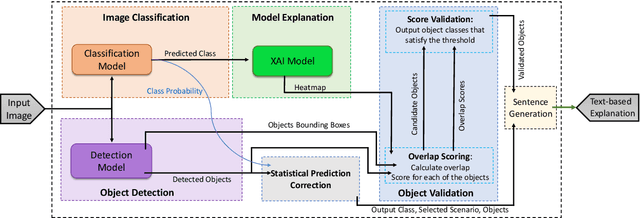

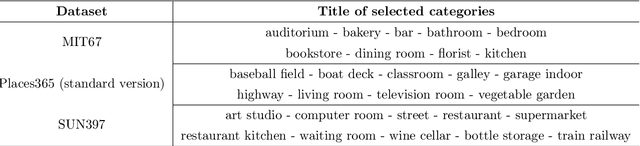

Abstract:The field of Explainable Artificial Intelligence (XAI) aims to improve the interpretability of black-box machine learning models. Building a heatmap based on the importance value of input features is a popular method for explaining the underlying functions of such models in producing their predictions. Heatmaps are almost understandable to humans, yet they are not without flaws. Non-expert users, for example, may not fully understand the logic of heatmaps (the logic in which relevant pixels to the model's prediction are highlighted with different intensities or colors). Additionally, objects and regions of the input image that are relevant to the model prediction are frequently not entirely differentiated by heatmaps. In this paper, we propose a framework called TbExplain that employs XAI techniques and a pre-trained object detector to present text-based explanations of scene classification models. Moreover, TbExplain incorporates a novel method to correct predictions and textually explain them based on the statistics of objects in the input image when the initial prediction is unreliable. To assess the trustworthiness and validity of the text-based explanations, we conducted a qualitative experiment, and the findings indicated that these explanations are sufficiently reliable. Furthermore, our quantitative and qualitative experiments on TbExplain with scene classification datasets reveal an improvement in classification accuracy over ResNet variants.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge