Peter A. Bobbert

Critical nonlinear aspects of hopping transport for reconfigurable logic in disordered dopant networks

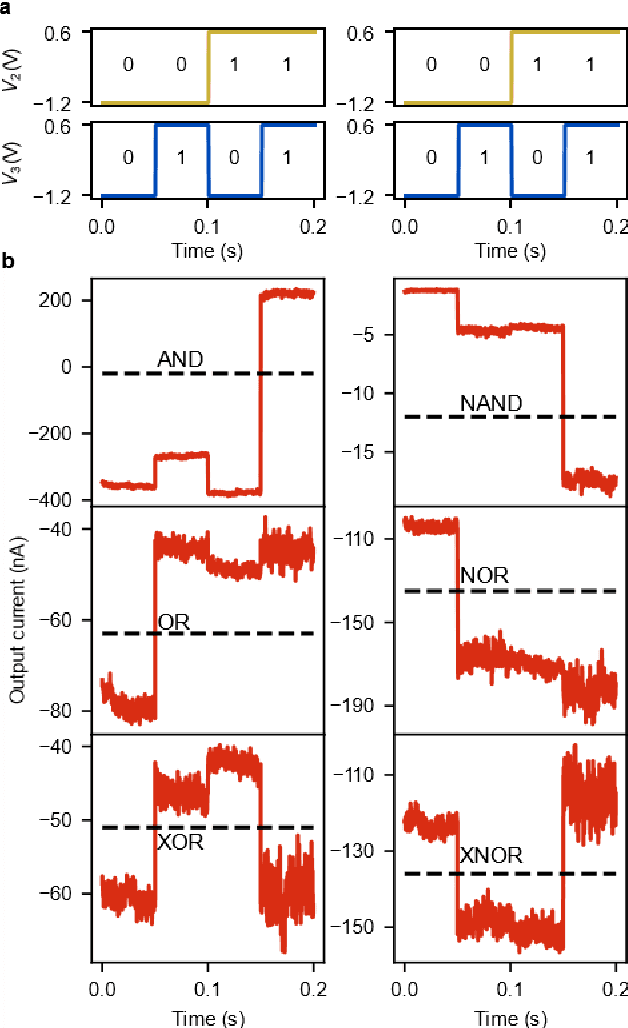

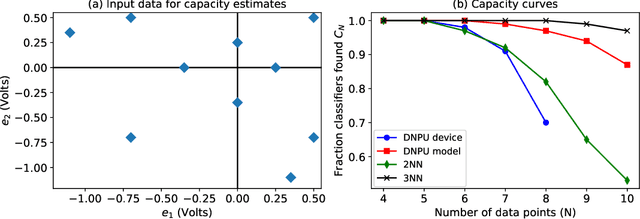

Dec 26, 2023Abstract:Nonlinear behavior in the hopping transport of interacting charges enables reconfigurable logic in disordered dopant network devices, where voltages applied at control electrodes tune the relation between voltages applied at input electrodes and the current measured at an output electrode. From kinetic Monte Carlo simulations we analyze the critical nonlinear aspects of variable-range hopping transport for realizing Boolean logic gates in these devices on three levels. First, we quantify the occurrence of individual gates for random choices of control voltages. We find that linearly inseparable gates such as the XOR gate are less likely to occur than linearly separable gates such as the AND gate, despite the fact that the number of different regions in the multidimensional control voltage space for which AND or XOR gates occur is comparable. Second, we use principal component analysis to characterize the distribution of the output current vectors for the (00,10,01,11) logic input combinations in terms of eigenvectors and eigenvalues of the output covariance matrix. This allows a simple and direct comparison of the behavior of different simulated devices and a comparison to experimental devices. Third, we quantify the nonlinearity in the distribution of the output current vectors necessary for realizing Boolean functionality by introducing three nonlinearity indicators. The analysis provides a physical interpretation of the effects of changing the hopping distance and temperature and is used in a comparison with data generated by a deep neural network trained on a physical device.

Gradient Descent in Materio

May 15, 2021

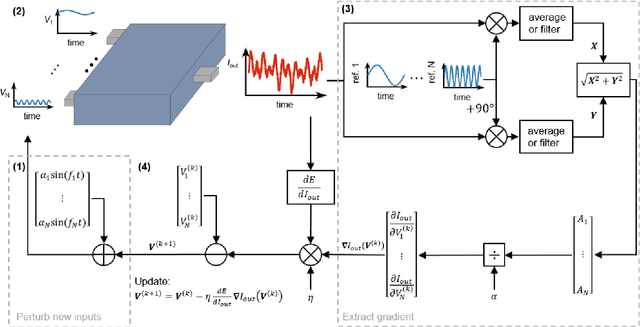

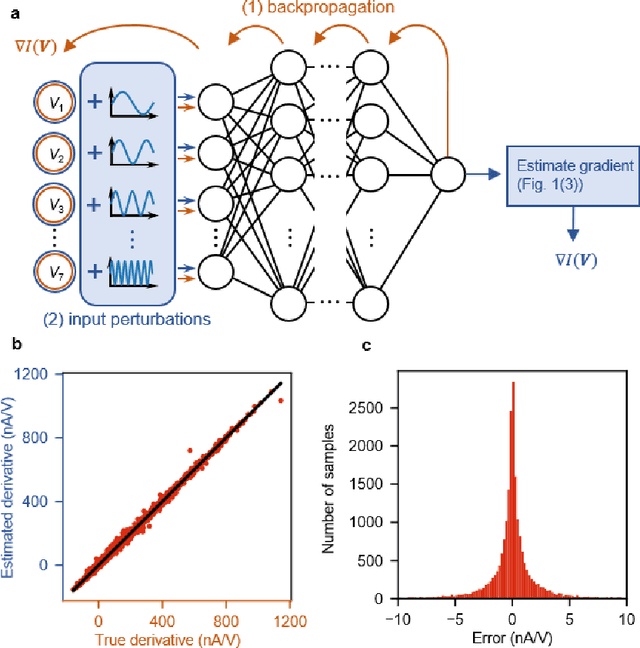

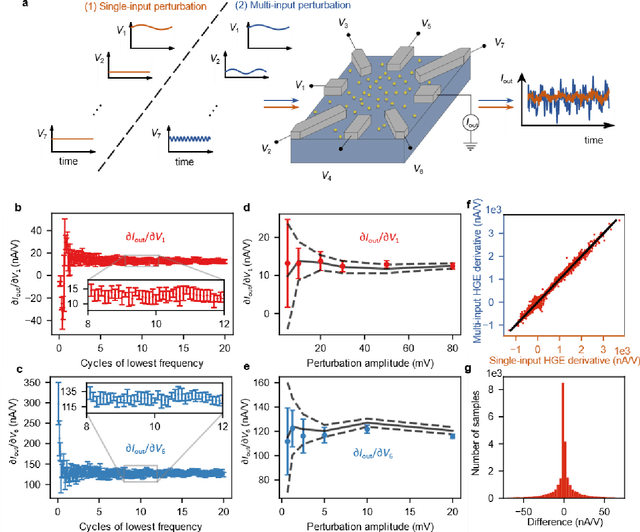

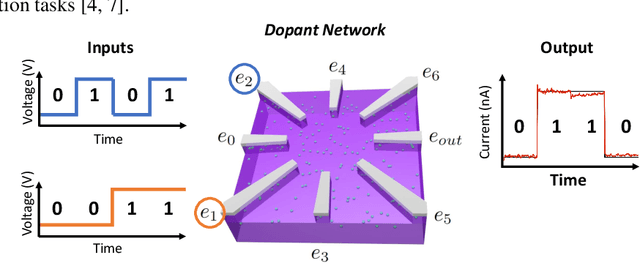

Abstract:Deep learning, a multi-layered neural network approach inspired by the brain, has revolutionized machine learning. One of its key enablers has been backpropagation, an algorithm that computes the gradient of a loss function with respect to the weights in the neural network model, in combination with its use in gradient descent. However, the implementation of deep learning in digital computers is intrinsically wasteful, with energy consumption becoming prohibitively high for many applications. This has stimulated the development of specialized hardware, ranging from neuromorphic CMOS integrated circuits and integrated photonic tensor cores to unconventional, material-based computing systems. The learning process in these material systems, taking place, e.g., by artificial evolution or surrogate neural network modelling, is still a complicated and time-consuming process. Here, we demonstrate an efficient and accurate homodyne gradient extraction method for performing gradient descent on the loss function directly in the material system. We demonstrate the method in our recently developed dopant network processing units, where we readily realize all Boolean gates. This shows that gradient descent can in principle be fully implemented in materio using simple electronics, opening up the way to autonomously learning material systems.

Dopant Network Processing Units: Towards Efficient Neural-network Emulators with High-capacity Nanoelectronic Nodes

Jul 24, 2020

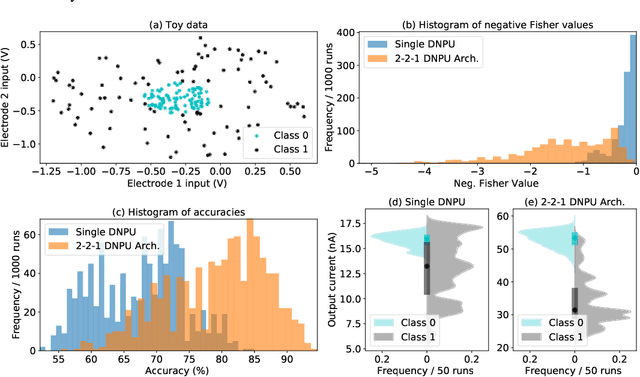

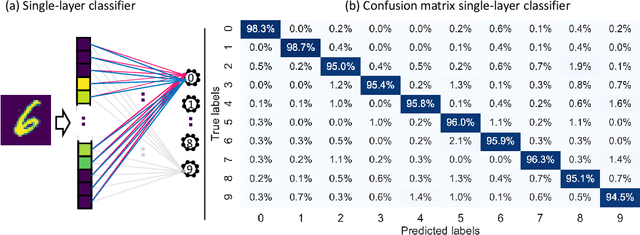

Abstract:The rapidly growing computational demands of deep neural networks require novel hardware designs. Recently, tunable nanoelectronic devices were developed based on hopping electrons through a network of dopant atoms in silicon. These "Dopant Network Processing Units" (DNPUs) are highly energy-efficient and have potentially very high throughput. By adapting the control voltages applied to its terminals, a single DNPU can solve a variety of linearly non-separable classification problems. However, using a single device has limitations due to the implicit single-node architecture. This paper presents a promising novel approach to neural information processing by introducing DNPUs as high-capacity neurons and moving from a single to a multi-neuron framework. By implementing and testing a small multi-DNPU classifier in hardware, we show that feed-forward DNPU networks improve the performance of a single DNPU from 77% to 94% test accuracy on a binary classification task with concentric classes on a plane. Furthermore, motivated by the integration of DNPUs with memristor arrays, we study the potential of using DNPUs in combination with linear layers. We show by simulation that a single-layer MNIST classifier with only 10 DNPUs achieves over 96% test accuracy. Our results pave the road towards hardware neural-network emulators that offer atomic-scale information processing with low latency and energy consumption.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge