Pavan Chakraborty

When Names Change Verdicts: Intervention Consistency Reveals Systematic Bias in LLM Decision-Making

Mar 19, 2026

Abstract: Large language models (LLMs) are increasingly used for high-stakes decisions, yet their susceptibility to spurious features remains poorly characterized. We introduce ICE-Guard, a framework applying intervention consistency testing to detect three types of spurious feature reliance: demographic (name/race swaps), authority (credential/prestige swaps), and framing (positive/negative restatements). Across 3,000 vignettes spanning 10 high-stakes domains, we evaluate 11 LLMs from 8 families and find that (1) authority bias (mean 5.8%) and framing bias (5.0%) substantially exceed demographic bias (2.2%), challenging the field's narrow focus on demographics; (2) bias concentrates in specific domains -- finance shows 22.6% authority bias while criminal justice shows only 2.8%; (3) structured decomposition, where the LLM extracts features and a deterministic rubric decides, reduces flip rates by up to 100% (median 49% across 9 models). We demonstrate an ICE-guided detect-diagnose-mitigate-verify loop achieving cumulative 78% bias reduction via iterative prompt patching. Validation against real COMPAS recidivism data shows COMPAS-derived flip rates exceed pooled synthetic rates, suggesting our benchmark provides a conservative estimate of real-world bias. Code and data are publicly available.
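
The flip rate mentioned in this abstract can be illustrated with a minimal sketch. It assumes a flip means the decision differs between an original vignette and its intervened counterpart; the abstract does not give the exact definition, and `flip_rate`, `toy_decide` and the example vignettes are hypothetical stand-ins rather than part of ICE-Guard.

```python
from typing import Callable, Sequence, Tuple


def flip_rate(decide: Callable[[str], str],
              pairs: Sequence[Tuple[str, str]]) -> float:
    """Fraction of (original, intervened) pairs whose decision changes."""
    if not pairs:
        raise ValueError("need at least one vignette pair")
    flips = sum(decide(original) != decide(intervened)
                for original, intervened in pairs)
    return flips / len(pairs)


def toy_decide(vignette: str) -> str:
    """Hypothetical stand-in for an LLM call; it keys on a prestige cue."""
    return "approve" if "Harvard" in vignette else "deny"


# Each pair differs only in a feature that should not affect the decision.
pairs = [
    ("Applicant from Harvard requests a loan.",
     "Applicant from a state college requests a loan."),
    ("Applicant requests a loan for a car.",
     "Applicant requests a loan for a vehicle."),
]

rate = flip_rate(toy_decide, pairs)
print(f"flip rate: {rate:.2f}")
```

In this toy run only the first pair flips, so half of the pairs change verdict. A real evaluation would replace `toy_decide` with a model call and use many pairs per intervention type.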

ICE: Intervention-Consistent Explanation Evaluation with Statistical Grounding for LLMs

Mar 19, 2026

Abstract: Evaluating whether explanations faithfully reflect a model's reasoning remains an open problem. Existing benchmarks use single interventions without statistical testing, making it impossible to distinguish genuine faithfulness from chance-level performance. We introduce ICE (Intervention-Consistent Explanation), a framework that compares explanations against matched random baselines via randomization tests under multiple intervention operators, yielding win rates with confidence intervals. Evaluating 7 LLMs across 4 English tasks, 6 non-English languages, and 2 attribution methods, we find that faithfulness is operator-dependent: operator gaps reach up to 44 percentage points, with deletion typically inflating estimates on short text but the pattern reversing on long text, suggesting that faithfulness should be interpreted comparatively across intervention operators rather than as a single score. Randomized baselines reveal anti-faithfulness in one-third of configurations, and faithfulness shows zero correlation with human plausibility (|r| < 0.04). Multilingual evaluation reveals dramatic model-language interactions not explained by tokenization alone. We release the ICE framework and ICEBench benchmark.
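
As a rough illustration of comparing an explanation against matched random baselines, the sketch below computes a win rate and a one-sided randomization p-value. The scoring quantity (the drop in model confidence after an intervention), the function names and the numbers are assumptions made for this example. This is not the ICE implementation, and confidence intervals are omitted.

```python
from typing import Sequence


def win_rate(explained_drop: float, random_drops: Sequence[float]) -> float:
    """Share of random baselines that the explanation strictly beats."""
    wins = sum(explained_drop > r for r in random_drops)
    return wins / len(random_drops)


def randomization_p_value(explained_drop: float,
                          random_drops: Sequence[float]) -> float:
    """One-sided p-value with the usual +1 correction, so it is never 0."""
    at_least = sum(r >= explained_drop for r in random_drops)
    return (1 + at_least) / (1 + len(random_drops))


# Assumed data: confidence drop when the tokens picked by the explanation
# are intervened on, versus drops for equally sized random token sets.
explained = 0.25
random_drops = [0.05, 0.10, 0.02, 0.30, 0.08, 0.12, 0.01, 0.07, 0.04]

wr = win_rate(explained, random_drops)
p = randomization_p_value(explained, random_drops)
print(f"win rate = {wr:.3f}, p = {p:.3f}")
```

Repeating this for each intervention operator (for example deletion versus another perturbation) would give one win rate per operator, which is the kind of comparative reading the abstract argues for.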

Proof-Carrying Materials: Falsifiable Safety Certificates for Machine-Learned Interatomic Potentials

Mar 12, 2026

Abstract: Machine-learned interatomic potentials (MLIPs) are deployed for high-throughput materials screening without formal reliability guarantees. We show that a single MLIP used as a stability filter misses 93% of density functional theory (DFT)-stable materials (recall 0.07) on a 25,000-material benchmark. Proof-Carrying Materials (PCM) closes this gap through three stages: adversarial falsification across compositional space, bootstrap envelope refinement with 95% confidence intervals, and Lean 4 formal certification. Auditing CHGNet, TensorNet and MACE reveals architecture-specific blind spots with near-zero pairwise error correlations (r <= 0.13; n = 5,000), confirmed by independent Quantum ESPRESSO validation (20/20 converged; median DFT/CHGNet force ratio 12x). A risk model trained on PCM-discovered features predicts failures on unseen materials (AUC-ROC = 0.938 +/- 0.004) and transfers across architectures (cross-MLIP AUC-ROC ~ 0.70; feature importance r = 0.877). In a thermoelectric screening case study, PCM-audited protocols discover 62 additional stable materials missed by single-MLIP screening - a 25% improvement in discovery yield.

Budget-Sensitive Discovery Scoring: A Formally Verified Framework for Evaluating AI-Guided Scientific Selection

Mar 12, 2026

Abstract: Scientific discovery increasingly relies on AI systems to select candidates for expensive experimental validation, yet no principled, budget-aware evaluation framework exists for comparing selection strategies -- a gap intensified by large language models (LLMs), which generate plausible scientific proposals without reliable downstream evaluation. We introduce the Budget-Sensitive Discovery Score (BSDS), a formally verified metric -- 20 theorems machine-checked by the Lean 4 proof assistant -- that jointly penalizes false discoveries (lambda-weighted FDR) and excessive abstention (gamma-weighted coverage gap) at each budget level. Its budget-averaged form, the Discovery Quality Score (DQS), provides a single summary statistic that no proposer can inflate by performing well at a cherry-picked budget. As a case study, we apply BSDS/DQS to the question: do LLMs add marginal value to an existing ML pipeline for drug discovery candidate selection? We evaluate 39 proposers -- 11 mechanistic variants, 14 zero-shot LLM configurations, and 14 few-shot LLM configurations -- using SMILES representations on MoleculeNet HIV (41,127 compounds, 3.5% active, 1,000 bootstrap replicates) under both random and scaffold splits. Three findings emerge. First, the simple RF-based Greedy-ML proposer achieves the best DQS (-0.046), outperforming all MLP variants and LLM configurations. Second, no LLM surpasses the Greedy-ML baseline under zero-shot or few-shot evaluation on HIV or Tox21, establishing that LLMs provide no marginal value over an existing trained classifier. Third, the proposer hierarchy generalizes across five MoleculeNet benchmarks spanning 0.18%-46.2% prevalence, a non-drug AV safety domain, and a 9x7 grid of penalty parameters (tau >= 0.636, mean tau = 0.863). The framework applies to any setting where candidates are selected under budget constraints and asymmetric error costs.

Contextual StereoSet: Stress-Testing Bias Alignment Robustness in Large Language Models

Jan 15, 2026

Abstract: A model that avoids stereotypes in a lab benchmark may not avoid them in deployment. We show that measured bias shifts dramatically when prompts mention different places, times, or audiences -- no adversarial prompting required. We introduce Contextual StereoSet, a benchmark that holds stereotype content fixed while systematically varying contextual framing. Testing 13 models across two protocols, we find striking patterns: anchoring to 1990 (vs. 2030) raises stereotype selection in all models tested on this contrast (p<0.05); gossip framing raises it in 5 of 6 full-grid models; out-group observer framing shifts it by up to 13 percentage points. These effects replicate in hiring, lending, and help-seeking vignettes. We propose Context Sensitivity Fingerprints (CSF): a compact profile of per-dimension dispersion and paired contrasts with bootstrap CIs and FDR correction. Two evaluation tracks support different use cases -- a 360-context diagnostic grid for deep analysis and a budgeted protocol covering 4,229 items for production screening. The implication is methodological: bias scores from fixed-condition tests may not generalize. This is not a claim about ground-truth bias rates; it is a stress test of evaluation robustness. CSF forces evaluators to ask, "Under what conditions does bias appear?" rather than "Is this model biased?" We release our benchmark, code, and results.

Polyp detection in colonoscopy images using YOLOv11

Jan 15, 2025

Abstract: Colorectal cancer (CRC) is one of the most commonly diagnosed cancers worldwide. It starts as a polyp in the inner lining of the colon, so early polyp detection is required to prevent CRC. Colonoscopy is used to inspect the colon. Generally, the images taken by the camera at the tip of the endoscope are analyzed manually by experts. With the rise of machine learning, various traditional machine learning models have been used for this task. Recently, deep learning models have proven more effective in polyp detection because they generalize better and learn small features. Deep learning models for object detection fall into two types: single-stage and two-stage. Generally, two-stage models have higher accuracy than single-stage ones, but single-stage models have lower inference time. Hence, single-stage models are well suited to quick object detection. YOLO is one of the single-stage models used successfully for polyp detection, and it has drawn researchers' attention because of its lower inference time. Researchers have used different versions of YOLO so far, and accuracy has increased with each newer version. This paper evaluates the effectiveness of the recently released YOLOv11 for polyp detection. We analyzed the performance of all five YOLOv11 models (YOLO11n, YOLO11s, YOLO11m, YOLO11l, YOLO11x), using the Kvasir dataset for training and testing. Two versions of the dataset were used: the original dataset and one created using augmentation techniques. The performance of all the models on these two versions of the dataset has been analysed.

Point-GR: Graph Residual Point Cloud Network for 3D Object Classification and Segmentation

Dec 04, 2024

Abstract: In recent years, the challenge of 3D shape analysis within point cloud data has gathered significant attention in computer vision. Addressing the complexities of effective 3D information representation and meaningful feature extraction for classification tasks remains crucial. This paper presents Point-GR, a novel deep learning architecture designed explicitly to transform unordered raw point clouds into higher dimensions while preserving local geometric features. It introduces residual-based learning within the network to mitigate the point permutation issues in point cloud data. The proposed Point-GR network significantly reduced the number of network parameters in Classification and Part-Segmentation compared to baseline graph-based networks. Notably, the Point-GR model achieves a state-of-the-art scene segmentation mean IoU of 73.47% on the S3DIS benchmark dataset, showcasing its effectiveness. Furthermore, the model shows competitive results in Classification and Part-Segmentation tasks.

Inverse kinematics learning of a continuum manipulator using limited real time data

Mar 27, 2024

Abstract: Data-driven control of a continuum manipulator requires a large amount of training data, but generating a sufficient amount of real-time data is not cost-efficient. Random actuation of the manipulator can also be unsafe. Meta-learning has been used successfully to adapt to new environments. Hence, this paper tries to solve the above-mentioned problem using meta-learning. We consider two cases. First, this paper proposes a method that trains the model on simulation data using MAML (Model-Agnostic Meta-Learning) and then adapts it to the real world using gradient steps. Second, if the simulation model is unavailable or difficult to formulate, we propose a CGAN (Conditional Generative Adversarial Network)-MAML based method. The model is trained using a small amount of real-time data and augmented data for different loading conditions, and adaptation is then done in the real environment. Experiments show that the relative positioning error in both cases is below 3%. The proposed models are experimentally verified on a real continuum manipulator.

Hybrid CNN Bi-LSTM neural network for Hyperspectral image classification

Feb 15, 2024

Abstract: Hyperspectral images have drawn the attention of researchers because they are complex to classify. There is a nonlinear relation between the materials and the spectral information provided by a hyperspectral image (HSI). Deep learning methods have shown superiority in learning this nonlinearity compared to traditional machine learning methods. The use of 3-D CNN along with 2-D CNN has shown great success in learning spatial and spectral features. However, this combination uses a comparatively large number of parameters and is not effective at learning inter-layer information. Hence, this paper proposes a neural network combining 3-D CNN, 2-D CNN and Bi-LSTM. The performance of this model has been tested on the Indian Pines (IP), University of Pavia (PU) and Salinas Scene (SA) data sets. The results are compared with state-of-the-art deep learning-based models. This model performed better on all three datasets. It achieved 99.83, 99.98 and 100 percent accuracy on the IP, PU and SA datasets respectively, using only 30 percent of the trainable parameters of the state-of-the-art model.

Guidance system for Visually Impaired Persons using Deep Learning and Optical flow

Oct 22, 2023

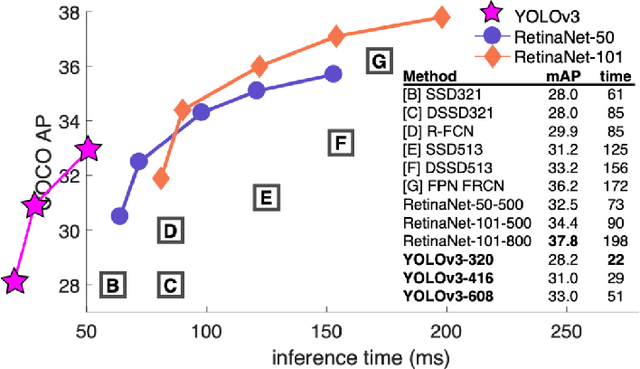

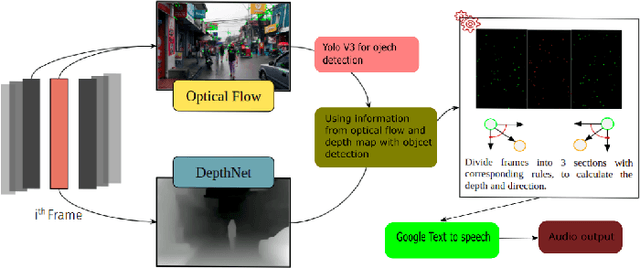

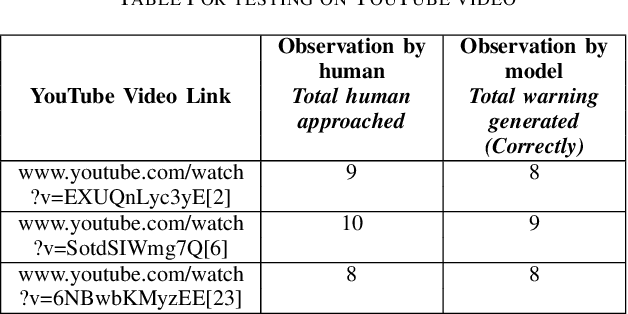

Abstract: Visually impaired persons find it difficult to know about their surroundings while walking on a road. A walking stick can only give them information about obstacles within the stick's reach, and it is mostly effective in static or very slow-paced environments. Hence, this paper introduces a method to guide them on a busy street. To create such a system, it is important to know about each approaching object and its direction of approach. To achieve this, we created a method in which each image frame received from the video is divided into three parts (center, left, and right) to determine the direction of approach. Object detection is done using YOLOv3. The Lucas-Kanade method is used for optical flow estimation, and Depth-net is used for depth estimation. Using the depth information, object motion trajectory, and object category information, the model provides the necessary information or warning to the person. This model has been tested in the real world to show its effectiveness.
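
The three-way frame split described in this abstract might look like the sketch below. It assumes equal-width thirds and assigns a detection to a region by the horizontal center of its bounding box. The abstract specifies neither choice, and `frame_region` is a hypothetical helper, not the authors' code.

```python
def frame_region(x_min: float, x_max: float, frame_width: float) -> str:
    """Map a detection's bounding box to 'left', 'center' or 'right'."""
    if frame_width <= 0:
        raise ValueError("frame_width must be positive")
    center_x = (x_min + x_max) / 2   # horizontal center of the box
    third = frame_width / 3          # assumed: three equal-width regions
    if center_x < third:
        return "left"
    if center_x < 2 * third:
        return "center"
    return "right"


# Example boxes on a 640-pixel-wide frame (values chosen for illustration).
for box in [(0, 100), (300, 340), (500, 640)]:
    print(box, "->", frame_region(*box, frame_width=640))
```

Tracking how this label changes across consecutive frames, together with the estimated depth, would indicate the direction from which an object is approaching.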
