Patrick Wambacq

On the long-term learning ability of LSTM LMs

Jun 16, 2021

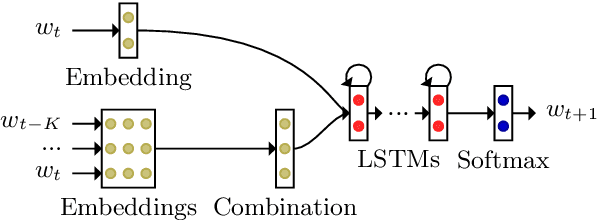

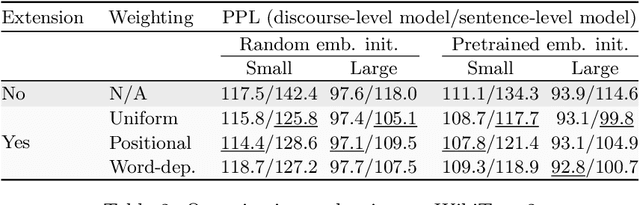

Abstract: We inspect the long-term learning ability of Long Short-Term Memory language models (LSTM LMs) by evaluating a contextual extension based on the Continuous Bag-of-Words (CBOW) model for both sentence- and discourse-level LSTM LMs and by analyzing its performance. We evaluate on text and speech. Sentence-level models using the long-term contextual module perform comparably to vanilla discourse-level LSTM LMs. On the other hand, the extension does not provide gains for discourse-level models. These findings indicate that discourse-level LSTM LMs already rely on contextual information to perform long-term learning.

Information-Weighted Neural Cache Language Models for ASR

Sep 24, 2018

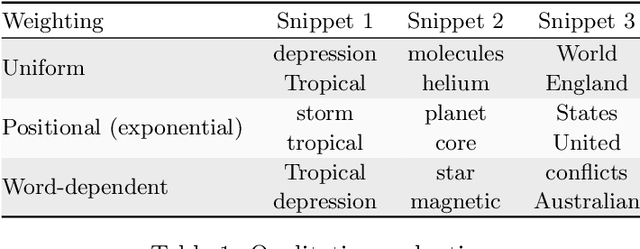

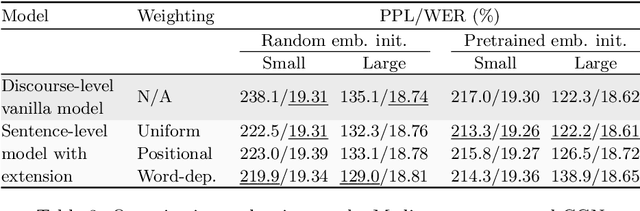

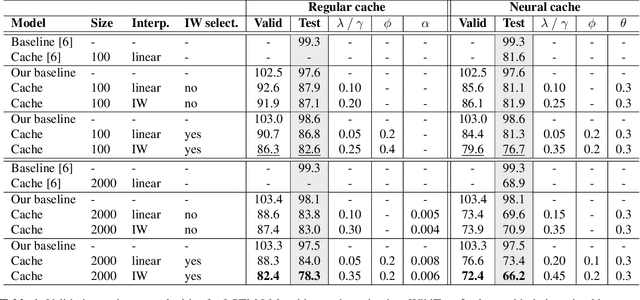

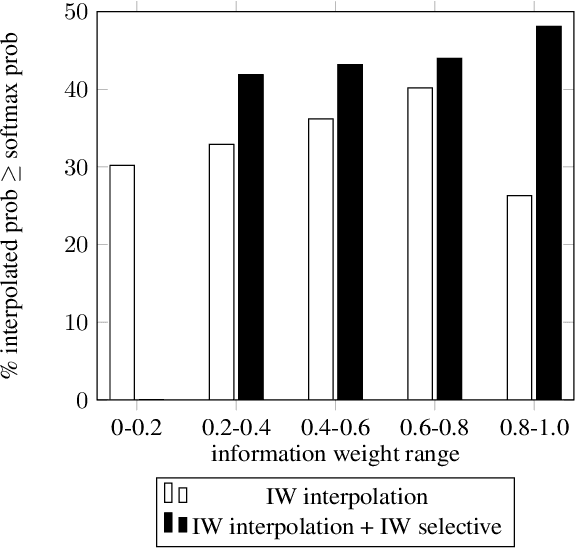

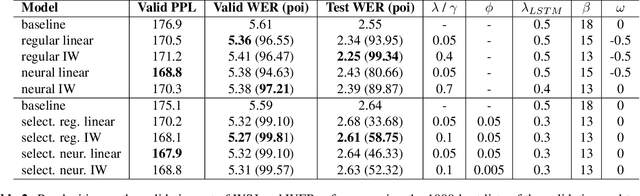

Abstract: Neural cache language models (LMs) extend the idea of regular cache language models by making the cache probability dependent on the similarity between the current context and the context of the words in the cache. We make an extensive comparison of 'regular' cache models with neural cache models, both in terms of perplexity and WER after rescoring first-pass ASR results. Furthermore, we propose two extensions to this neural cache model that make use of the content value/information weight of the word: firstly, combining the cache probability and LM probability with an information-weighted interpolation and secondly, selectively adding only content words to the cache. We obtain a 29.9%/32.1% (validation/test set) relative improvement in perplexity with respect to a baseline LSTM LM on the WikiText-2 dataset, outperforming previous work on neural cache LMs. Additionally, we observe significant WER reductions with respect to the baseline model on the WSJ ASR task.
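The cache mechanism described in this abstract can be sketched roughly as follows. This is a minimal illustration and not the authors' implementation: the dot-product similarity, the `theta` sharpness parameter, the fixed weight `lam`, and the `FUNCTION_WORDS` stop list are all assumptions made for the example. The information-weighted interpolation itself is not reproduced, because the abstract does not give its formula; a fixed weight stands in for it.

```python
import math

# Hypothetical stop list, used here only to illustrate "content words only".
FUNCTION_WORDS = {"the", "a", "of", "and", "to", "in"}

def add_to_cache(cache, context_vec, word, content_only=True):
    """Store (context vector, following word); optionally skip function words."""
    if content_only and word in FUNCTION_WORDS:
        return
    cache.append((context_vec, word))

def cache_probs(query, cache, theta=1.0):
    """Cache distribution: softmax over similarities between the current
    context vector and the stored context vectors, summed per word."""
    if not cache:
        return {}
    scores = [theta * sum(q * k for q, k in zip(query, key)) for key, _ in cache]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    probs = {}
    for (_, word), w in zip(cache, weights):
        probs[word] = probs.get(word, 0.0) + w / total
    return probs

def interpolate(lm_probs, cache_p, lam=0.2):
    """Fixed-weight linear interpolation of LM and cache probabilities."""
    vocab = set(lm_probs) | set(cache_p)
    return {w: (1 - lam) * lm_probs.get(w, 0.0) + lam * cache_p.get(w, 0.0)
            for w in vocab}
```

Words whose stored context resembles the current context receive more cache probability, and words absent from the cache keep only their down-weighted LM probability.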

State Gradients for RNN Memory Analysis

Jun 18, 2018

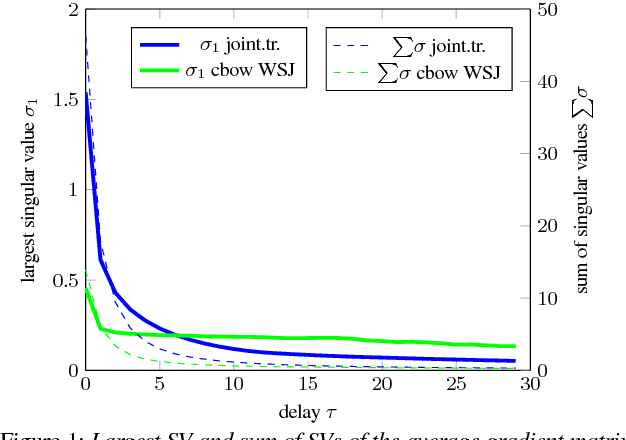

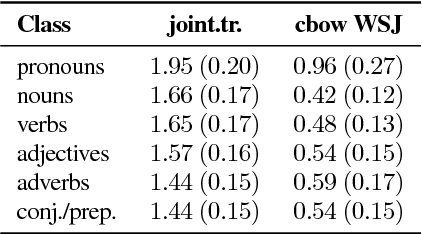

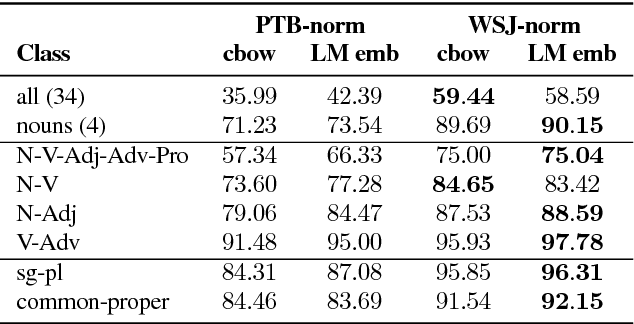

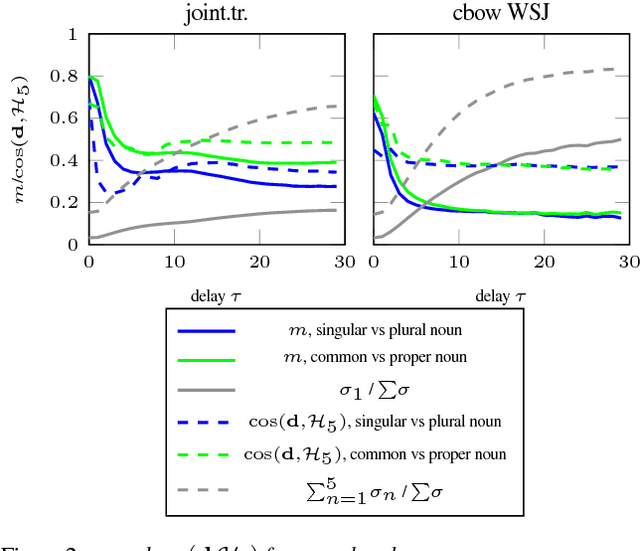

Abstract: We present a framework for analyzing what the state in RNNs remembers from its input embeddings. Our approach is inspired by backpropagation, in the sense that we compute the gradients of the states with respect to the input embeddings. The gradient matrix is decomposed with Singular Value Decomposition to analyze which directions in the embedding space are best transferred to the hidden state space, characterized by the largest singular values. We apply our approach to LSTM language models and investigate to what extent and for how long certain classes of words are remembered on average for a certain corpus. Additionally, the extent to which a specific property or relationship is remembered by the RNN can be tracked by comparing a vector characterizing that property with the direction(s) in embedding space that are best preserved in hidden state space.
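The idea of taking the gradient of the state with respect to the input embedding and inspecting its dominant singular direction can be illustrated on a toy model. The sketch below assumes a single-step tanh RNN, `h = tanh(W x + U h_prev)`, rather than the LSTMs studied in the paper, and it uses power iteration to recover only the largest singular value instead of a full SVD. The function names are hypothetical.

```python
import math

def matvec(M, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def transpose(M):
    return [list(col) for col in zip(*M)]

def state_jacobian(W, U, x, h_prev):
    """Jacobian dh/dx for h = tanh(W x + U h_prev): diag(1 - h^2) W."""
    pre = [a + b for a, b in zip(matvec(W, x), matvec(U, h_prev))]
    h = [math.tanh(p) for p in pre]
    return [[(1.0 - hi * hi) * w for w in row] for hi, row in zip(h, W)]

def top_singular(J, iters=200):
    """Largest singular value and right singular vector of J, found by
    power iteration on J^T J. Assumes J is non-zero and the uniform start
    vector is not orthogonal to the dominant direction."""
    Jt = transpose(J)
    n = len(J[0])
    v = [1.0 / math.sqrt(n)] * n
    for _ in range(iters):
        v = matvec(Jt, matvec(J, v))
        norm = math.sqrt(sum(c * c for c in v))
        v = [c / norm for c in v]
    sigma = math.sqrt(sum(c * c for c in matvec(J, v)))
    return sigma, v
```

The returned vector is the direction in embedding space that this toy state preserves best. Comparing it with a vector that characterizes some word property, for example by cosine similarity, mirrors the tracking idea in the abstract.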

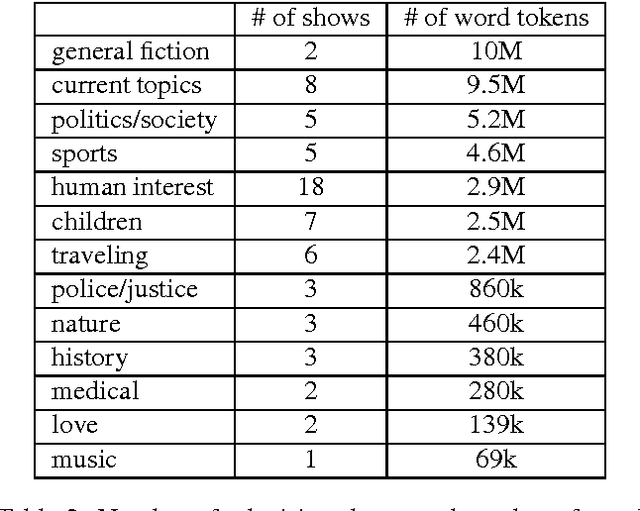

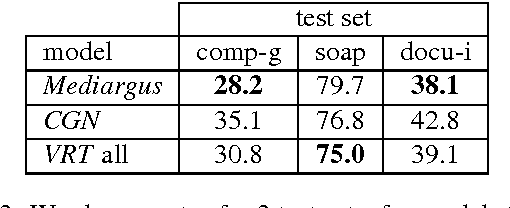

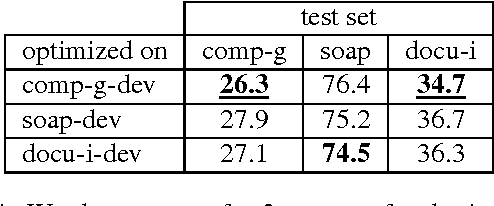

Language Models of Spoken Dutch

Sep 12, 2017

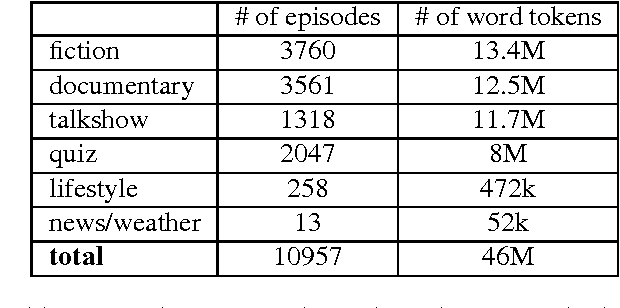

Abstract: In Flanders, all TV shows are subtitled. However, subtitling is very time-consuming and can be sped up by providing the output of a speech recognizer run on the audio of the TV show prior to subtitling. Naturally, this speech recognition will perform much better if the employed language model is adapted to the register and the topic of the program. We present several language models trained on subtitles of television shows provided by the Flemish public-service broadcaster VRT. This data was gathered in the context of the project STON, which aims to facilitate the process of subtitling TV shows. One model is trained on all available data (46M word tokens), but we also trained models on a specific type of TV show or domain/topic. Language models of spoken language are quite rare due to the lack of training data. This corpus is relatively large for a corpus of spoken language (compare with e.g. CGN, which has 9M words), but still rather small for training a language model. Thus, in practice it is advised to interpolate these models with a large background language model trained on written language. The models can be freely downloaded from http://www.esat.kuleuven.be/psi/spraak/downloads/.

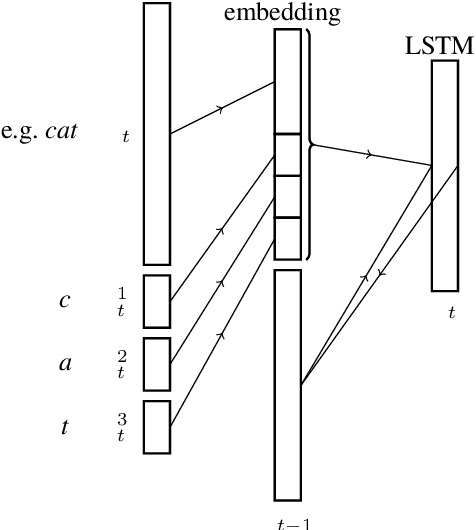

Character-Word LSTM Language Models

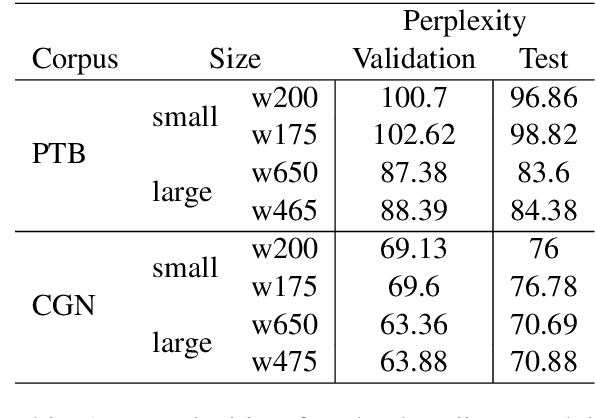

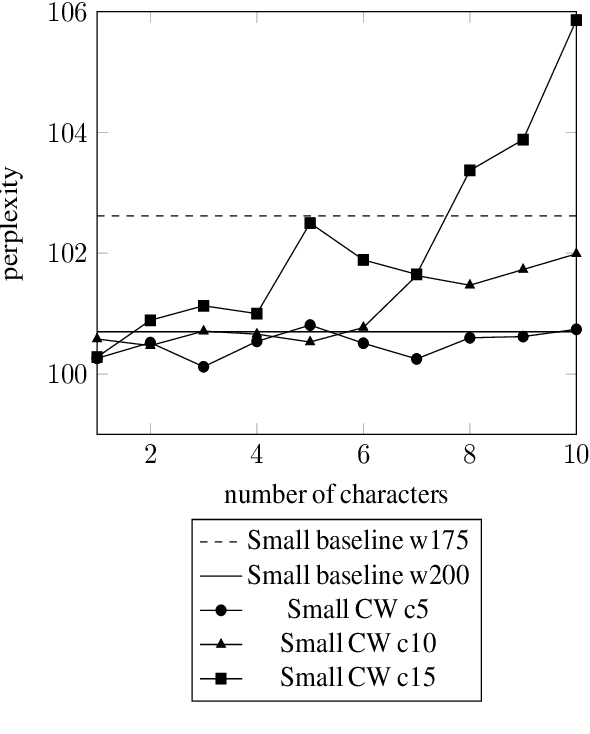

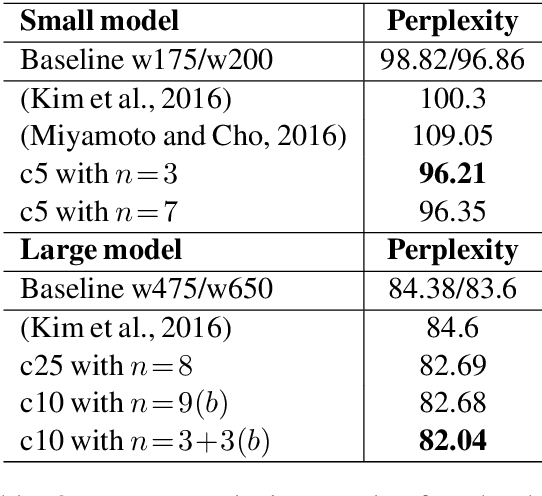

Apr 10, 2017

Abstract: We present a Character-Word Long Short-Term Memory Language Model which both reduces the perplexity with respect to a baseline word-level language model and reduces the number of parameters of the model. Character information can reveal structural (dis)similarities between words and can even be used when a word is out-of-vocabulary, thus improving the modeling of infrequent and unknown words. By concatenating word and character embeddings, we achieve up to 2.77% relative improvement on English compared to a baseline model with a similar amount of parameters and 4.57% on Dutch. Moreover, we also outperform baseline word-level models with a larger number of parameters.
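Concatenating word and character embeddings could look like the sketch below. It is a sketch under assumptions, not the paper's exact architecture: the lookup tables are plain dictionaries, only the first `n_chars` characters are used, shorter words are padded, unknown characters fall back to the padding vector, and the names `char_word_embedding`, `<unk>`, and `<pad>` are hypothetical.

```python
def char_word_embedding(word, word_table, char_table, n_chars,
                        unk="<unk>", pad="<pad>"):
    """Concatenate the word embedding with the embeddings of the first
    n_chars characters, so the result always has a fixed length."""
    # Out-of-vocabulary words share the <unk> word vector ...
    vector = list(word_table.get(word, word_table[unk]))
    # ... but their characters still carry word-specific information.
    chars = list(word[:n_chars]) + [pad] * (n_chars - len(word))
    for c in chars:
        vector.extend(char_table.get(c, char_table[pad]))
    return vector
```

In this sketch two out-of-vocabulary words receive the same word vector but different character parts, which is how character information can help with infrequent and unknown words.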
