Parami Wijesinghe

FALCON: Feature Driven Selective Classification for Energy-Efficient Image Recognition

Mar 08, 2017

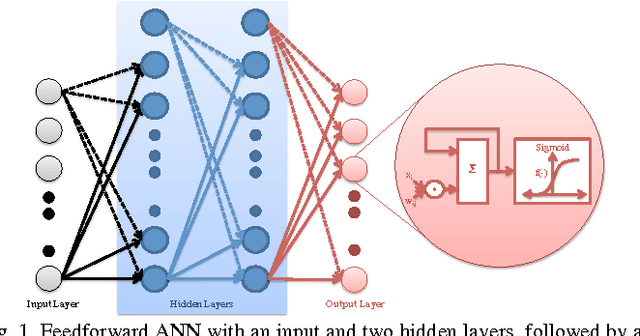

Abstract:Machine-learning algorithms have shown outstanding image recognition or classification performance for computer vision applications. However, the compute and energy requirement for implementing such classifier models for large-scale problems is quite high. In this paper, we propose Feature Driven Selective Classification (FALCON) inspired by the biological visual attention mechanism in the brain to optimize the energy-efficiency of machine-learning classifiers. We use the consensus in the characteristic features (color/texture) across images in a dataset to decompose the original classification problem and construct a tree of classifiers (nodes) with a generic-to-specific transition in the classification hierarchy. The initial nodes of the tree separate the instances based on feature information and selectively enable the latter nodes to perform object specific classification. The proposed methodology allows selective activation of only those branches and nodes of the classification tree that are relevant to the input while keeping the remaining nodes idle. Additionally, we propose a programmable and scalable Neuromorphic Engine (NeuE) that utilizes arrays of specialized neural computational elements to execute the FALCON based classifier models for diverse datasets. The structure of FALCON facilitates the reuse of nodes while scaling up from small classification problems to larger ones thus allowing us to construct classifier implementations that are significantly more efficient. We evaluate our approach for a 12-object classification task on the Caltech101 dataset and 10-object task on CIFAR-10 dataset by constructing FALCON models on the NeuE platform in 45nm technology. Our results demonstrate significant improvement in energy-efficiency and training time for minimal loss in output quality.

Significance Driven Hybrid 8T-6T SRAM for Energy-Efficient Synaptic Storage in Artificial Neural Networks

Feb 27, 2016

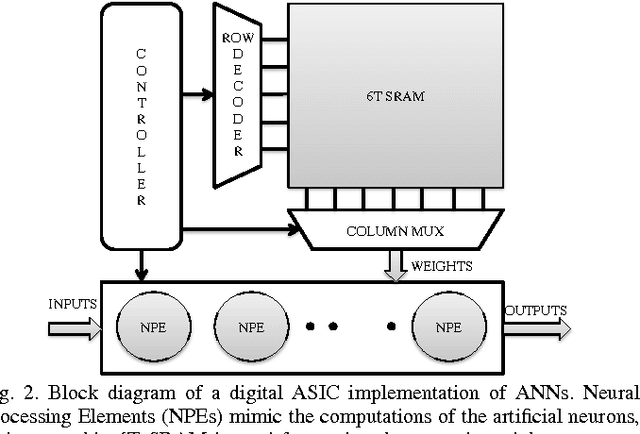

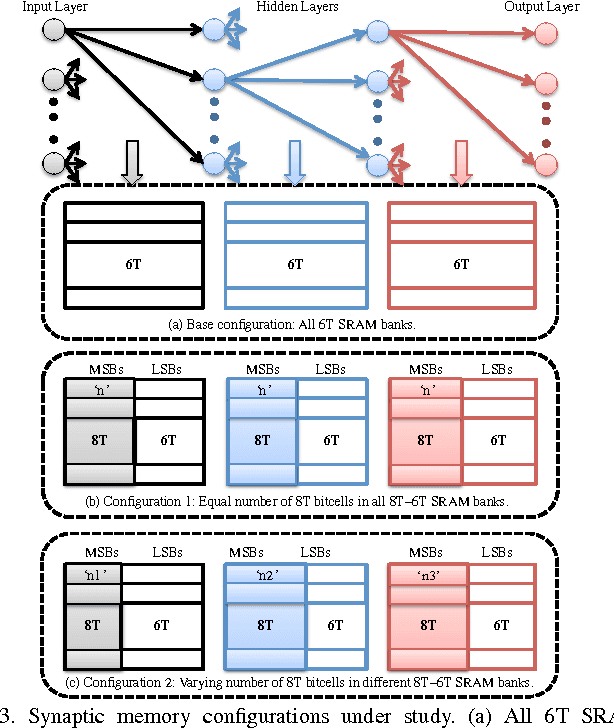

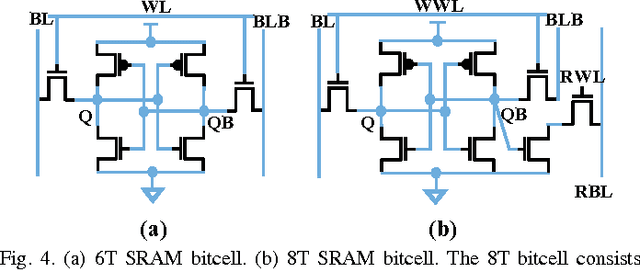

Abstract:Multilayered artificial neural networks (ANN) have found widespread utility in classification and recognition applications. The scale and complexity of such networks together with the inadequacies of general purpose computing platforms have led to a significant interest in the development of efficient hardware implementations. In this work, we focus on designing energy efficient on-chip storage for the synaptic weights. In order to minimize the power consumption of typical digital CMOS implementations of such large-scale networks, the digital neurons could be operated reliably at scaled voltages by reducing the clock frequency. On the contrary, the on-chip synaptic storage designed using a conventional 6T SRAM is susceptible to bitcell failures at reduced voltages. However, the intrinsic error resiliency of NNs to small synaptic weight perturbations enables us to scale the operating voltage of the 6TSRAM. Our analysis on a widely used digit recognition dataset indicates that the voltage can be scaled by 200mV from the nominal operating voltage (950mV) for practically no loss (less than 0.5%) in accuracy (22nm predictive technology). Scaling beyond that causes substantial performance degradation owing to increased probability of failures in the MSBs of the synaptic weights. We, therefore propose a significance driven hybrid 8T-6T SRAM, wherein the sensitive MSBs are stored in 8T bitcells that are robust at scaled voltages due to decoupled read and write paths. In an effort to further minimize the area penalty, we present a synaptic-sensitivity driven hybrid memory architecture consisting of multiple 8T-6T SRAM banks. Our circuit to system-level simulation framework shows that the proposed synaptic-sensitivity driven architecture provides a 30.91% reduction in the memory access power with a 10.41% area overhead, for less than 1% loss in the classification accuracy.

* Accepted in Design, Automation and Test in Europe 2016 conference (DATE-2016)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge