Oleksii Furman

DiCoFlex: Model-agnostic diverse counterfactuals with flexible control

May 29, 2025Abstract:Counterfactual explanations play a pivotal role in explainable artificial intelligence (XAI) by offering intuitive, human-understandable alternatives that elucidate machine learning model decisions. Despite their significance, existing methods for generating counterfactuals often require constant access to the predictive model, involve computationally intensive optimization for each instance and lack the flexibility to adapt to new user-defined constraints without retraining. In this paper, we propose DiCoFlex, a novel model-agnostic, conditional generative framework that produces multiple diverse counterfactuals in a single forward pass. Leveraging conditional normalizing flows trained solely on labeled data, DiCoFlex addresses key limitations by enabling real-time user-driven customization of constraints such as sparsity and actionability at inference time. Extensive experiments on standard benchmark datasets show that DiCoFlex outperforms existing methods in terms of validity, diversity, proximity, and constraint adherence, making it a practical and scalable solution for counterfactual generation in sensitive decision-making domains.

HyConEx: Hypernetwork classifier with counterfactual explanations

Mar 16, 2025Abstract:In recent years, there has been a growing interest in explainable AI methods. We want not only to make accurate predictions using sophisticated neural networks but also to understand what the model's decision is based on. One of the fundamental levels of interpretability is to provide counterfactual examples explaining the rationale behind the decision and identifying which features, and to what extent, must be modified to alter the model's outcome. To address these requirements, we introduce HyConEx, a classification model based on deep hypernetworks specifically designed for tabular data. Owing to its unique architecture, HyConEx not only provides class predictions but also delivers local interpretations for individual data samples in the form of counterfactual examples that steer a given sample toward an alternative class. While many explainable methods generated counterfactuals for external models, there have been no interpretable classifiers simultaneously producing counterfactual samples so far. HyConEx achieves competitive performance on several metrics assessing classification accuracy and fulfilling the criteria of a proper counterfactual attack. This makes HyConEx a distinctive deep learning model, which combines predictions and explainers as an all-in-one neural network. The code is available at https://github.com/gmum/HyConEx.

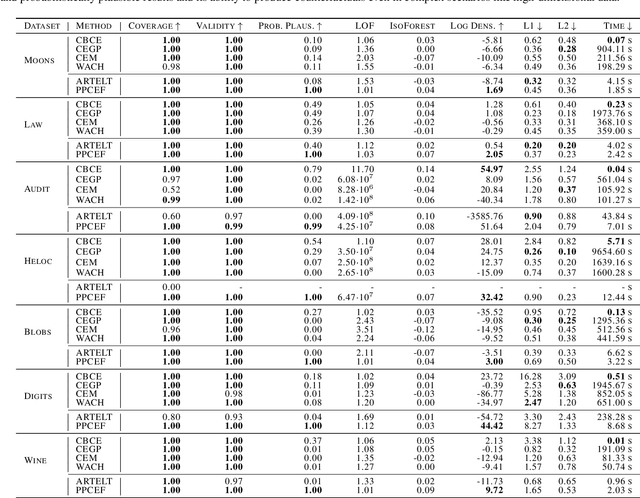

Unifying Perspectives: Plausible Counterfactual Explanations on Global, Group-wise, and Local Levels

May 27, 2024Abstract:Growing regulatory and societal pressures demand increased transparency in AI, particularly in understanding the decisions made by complex machine learning models. Counterfactual Explanations (CFs) have emerged as a promising technique within Explainable AI (xAI), offering insights into individual model predictions. However, to understand the systemic biases and disparate impacts of AI models, it is crucial to move beyond local CFs and embrace global explanations, which offer a~holistic view across diverse scenarios and populations. Unfortunately, generating Global Counterfactual Explanations (GCEs) faces challenges in computational complexity, defining the scope of "global," and ensuring the explanations are both globally representative and locally plausible. We introduce a novel unified approach for generating Local, Group-wise, and Global Counterfactual Explanations for differentiable classification models via gradient-based optimization to address these challenges. This framework aims to bridge the gap between individual and systemic insights, enabling a deeper understanding of model decisions and their potential impact on diverse populations. Our approach further innovates by incorporating a probabilistic plausibility criterion, enhancing actionability and trustworthiness. By offering a cohesive solution to the optimization and plausibility challenges in GCEs, our work significantly advances the interpretability and accountability of AI models, marking a step forward in the pursuit of transparent AI.

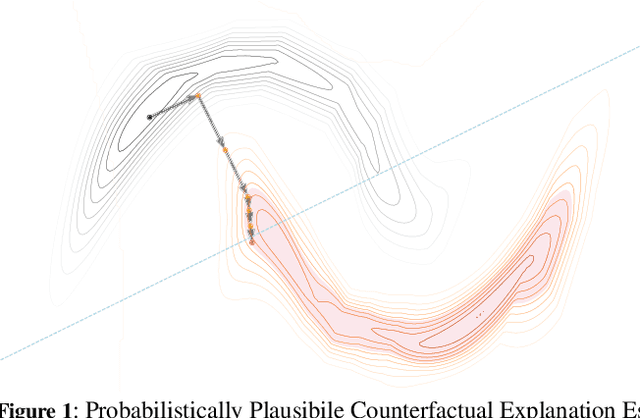

Probabilistically Plausible Counterfactual Explanations with Normalizing Flows

May 27, 2024

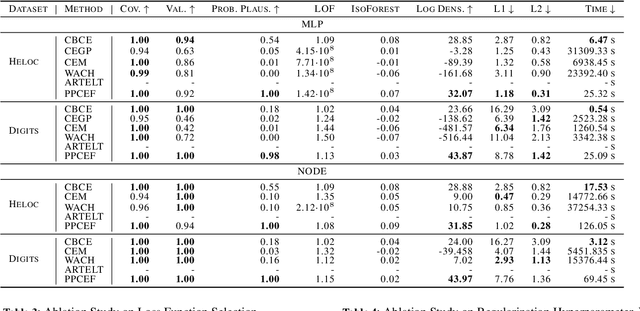

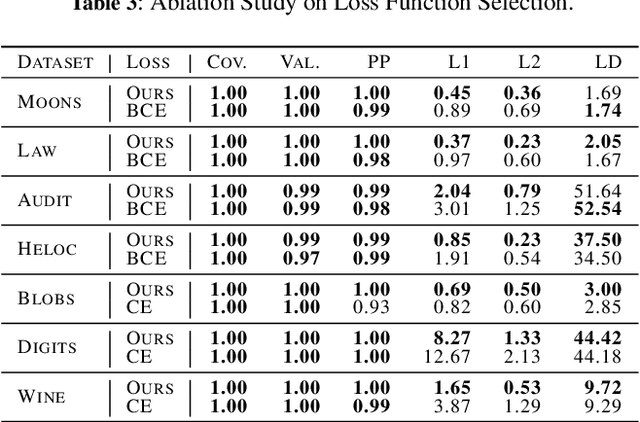

Abstract:We present PPCEF, a novel method for generating probabilistically plausible counterfactual explanations (CFs). PPCEF advances beyond existing methods by combining a probabilistic formulation that leverages the data distribution with the optimization of plausibility within a unified framework. Compared to reference approaches, our method enforces plausibility by directly optimizing the explicit density function without assuming a particular family of parametrized distributions. This ensures CFs are not only valid (i.e., achieve class change) but also align with the underlying data's probability density. For that purpose, our approach leverages normalizing flows as powerful density estimators to capture the complex high-dimensional data distribution. Furthermore, we introduce a novel loss that balances the trade-off between achieving class change and maintaining closeness to the original instance while also incorporating a probabilistic plausibility term. PPCEF's unconstrained formulation allows for efficient gradient-based optimization with batch processing, leading to orders of magnitude faster computation compared to prior methods. Moreover, the unconstrained formulation of PPCEF allows for the seamless integration of future constraints tailored to specific counterfactual properties. Finally, extensive evaluations demonstrate PPCEF's superiority in generating high-quality, probabilistically plausible counterfactual explanations in high-dimensional tabular settings. This makes PPCEF a powerful tool for not only interpreting complex machine learning models but also for improving fairness, accountability, and trust in AI systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge