Nuri Ryu

Edge-Aware Image Manipulation via Diffusion Models with a Novel Structure-Preservation Loss

Jan 23, 2026

Abstract:Recent advances in image editing leverage latent diffusion models (LDMs) for versatile, text-prompt-driven edits across diverse tasks. Yet maintaining pixel-level edge structures, which is crucial for tasks such as photorealistic style transfer or image tone adjustment, remains a challenge for latent-diffusion-based editing. To overcome this limitation, we propose a novel Structure Preservation Loss (SPL) that leverages local linear models to quantify structural differences between input and edited images. Our training-free approach integrates SPL directly into the diffusion model's generative process to ensure structural fidelity. This core mechanism is complemented by a post-processing step to mitigate LDM decoding distortions, a masking strategy for precise edit localization, and a color preservation loss to preserve hues in unedited areas. Experiments confirm that SPL enhances structural fidelity, delivering state-of-the-art performance in latent-diffusion-based image editing. Our code will be publicly released at https://github.com/gongms00/SPL.
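The abstract does not spell out how SPL is computed, so the following is only a minimal sketch of the general idea of using local linear models to measure structural difference. It assumes a guided-filter-style formulation: in each small window, the edited image is fit as `a * src + b`, and the leftover residual is treated as structural change. The function names `box_mean` and `local_linear_residual`, the window radius `r`, and the regularizer `eps` are all hypothetical and are not taken from the paper.

```python
import numpy as np

def box_mean(x, r):
    """Mean over a (2r+1)x(2r+1) window around each pixel (edge-padded)."""
    k = 2 * r + 1
    p = np.pad(x, r, mode="edge")
    # Integral image with a leading row/column of zeros.
    c = np.pad(np.cumsum(np.cumsum(p, axis=0), axis=1), ((1, 0), (1, 0)))
    s = c[k:, k:] - c[:-k, k:] - c[k:, :-k] + c[:-k, :-k]
    return s / (k * k)

def local_linear_residual(src, out, r=2, eps=1e-4):
    """Average residual of per-window linear fits out ~ a * src + b.

    Assumed formulation (guided-filter style), not the paper's exact loss.
    A small value means `out` is locally an affine function of `src`,
    i.e. edges in `src` are carried over to `out`.
    """
    mean_s, mean_o = box_mean(src, r), box_mean(out, r)
    var_s = box_mean(src * src, r) - mean_s ** 2
    var_o = box_mean(out * out, r) - mean_o ** 2
    cov = box_mean(src * out, r) - mean_s * mean_o
    a = cov / (var_s + eps)  # regularized per-window slope
    # Mean squared residual of the fit with slope a and matching intercept.
    res = var_o - 2.0 * a * cov + a * a * var_s
    return float(np.mean(np.maximum(res, 0.0)))

rng = np.random.default_rng(0)
src = rng.random((64, 64))
tone_shifted = 0.5 * src + 0.2      # global tone change, same edges
unrelated = rng.random((64, 64))    # structure destroyed

low = local_linear_residual(src, tone_shifted)
high = local_linear_residual(src, unrelated)
```

Under this assumption, a pure tone adjustment yields a residual near zero while an image with unrelated structure yields a much larger one, which is the kind of signal a structure-preserving loss would penalize.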

Elevating 3D Models: High-Quality Texture and Geometry Refinement from a Low-Quality Model

Jul 15, 2025

Abstract:High-quality 3D assets are essential for various applications in computer graphics and 3D vision but remain scarce due to significant acquisition costs. To address this shortage, we introduce Elevate3D, a novel framework that transforms readily accessible low-quality 3D assets into high-quality ones. At the core of Elevate3D is HFS-SDEdit, a specialized texture enhancement method that significantly improves texture quality, fixing degradations while preserving the original appearance and geometry. Furthermore, Elevate3D operates in a view-by-view manner, alternating between texture and geometry refinement. Unlike previous methods that have largely overlooked geometry refinement, our framework leverages geometric cues from images refined with HFS-SDEdit by employing state-of-the-art monocular geometry predictors. This approach ensures detailed and accurate geometry that aligns seamlessly with the enhanced texture. Elevate3D outperforms recent competitors by achieving state-of-the-art quality in 3D model refinement, effectively addressing the scarcity of high-quality open-source 3D assets.

360$^\circ$ Reconstruction From a Single Image Using Space Carved Outpainting

Sep 19, 2023

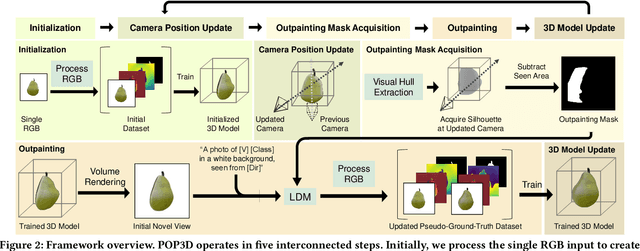

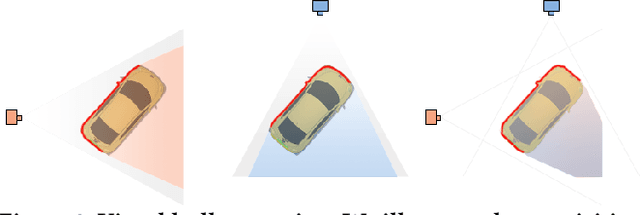

Abstract:We introduce POP3D, a novel framework that creates a full $360^\circ$-view 3D model from a single image. POP3D resolves two prominent issues that limit single-view reconstruction. Firstly, POP3D offers substantial generalizability to arbitrary categories, a trait that previous methods struggle to achieve. Secondly, POP3D further improves reconstruction fidelity and naturalness, a crucial aspect that concurrent works fall short of. Our approach marries the strengths of four primary components: (1) a monocular depth and normal predictor that serves to predict crucial geometric cues, (2) a space carving method capable of demarcating the potentially unseen portions of the target object, (3) a generative model pre-trained on a large-scale image dataset that can complete unseen regions of the target, and (4) a neural implicit surface reconstruction method tailored to reconstructing objects using RGB images along with monocular geometric cues. The combination of these components enables POP3D to readily generalize across various in-the-wild images and generate state-of-the-art reconstructions, outperforming similar works by a significant margin. Project page: http://cg.postech.ac.kr/research/POP3D
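To make component (2) concrete, here is a minimal sketch of single-view space carving on a voxel grid. It is an illustration under simplifying assumptions, not POP3D's implementation: the camera is orthographic and looks along the +z axis, depth is given in voxel units, and the function name `carve_single_view` is hypothetical.

```python
import numpy as np

def carve_single_view(mask, depth, n_z):
    """Carve a voxel grid from one orthographic view looking along +z.

    mask  : (H, W) bool, True inside the object's silhouette.
    depth : (H, W) float, distance to the visible surface in voxel units.
    n_z   : number of voxels along the viewing direction.

    Returns an (H, W, n_z) bool grid that is True where a voxel may still
    belong to the object: it projects inside the silhouette and lies at or
    behind the observed surface. Everything else is known to be empty.
    """
    z = np.arange(n_z)[None, None, :]
    inside_silhouette = mask[:, :, None]
    at_or_behind_surface = z >= depth[:, :, None]
    return inside_silhouette & at_or_behind_surface

# Toy input: a 2x2 silhouette in a 4x4 image, surface one voxel deep.
mask = np.zeros((4, 4), dtype=bool)
mask[1:3, 1:3] = True
depth = np.ones((4, 4))
candidates = carve_single_view(mask, depth, n_z=3)
```

In this toy setup the surviving voxels are exactly those hidden behind the visible surface, which is the region a generative model would then be asked to complete from other viewpoints.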

Dr.3D: Adapting 3D GANs to Artistic Drawings

Nov 30, 2022

Abstract:While 3D GANs have recently demonstrated the high-quality synthesis of multi-view consistent images and 3D shapes, they are mainly restricted to photo-realistic human portraits. This paper aims to extend 3D GANs to a different, but meaningful visual form: artistic portrait drawings. However, extending existing 3D GANs to drawings is challenging due to the inevitable geometric ambiguity present in drawings. To tackle this, we present Dr.3D, a novel adaptation approach that adapts an existing 3D GAN to artistic drawings. Dr.3D is equipped with three novel components to handle the geometric ambiguity: a deformation-aware 3D synthesis network, an alternating adaptation of pose estimation and image synthesis, and geometric priors. Experiments show that our approach can successfully adapt 3D GANs to drawings and enable multi-view consistent semantic editing of drawings.
