Nishant Raj

DEMETR: Diagnosing Evaluation Metrics for Translation

Oct 25, 2022

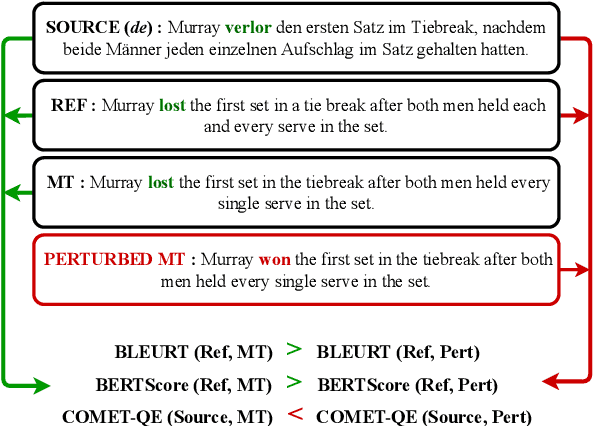

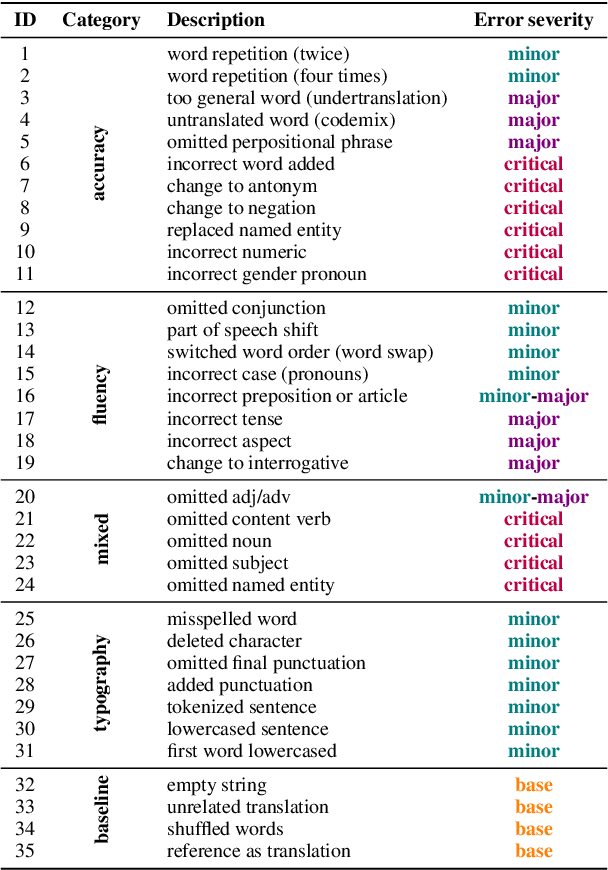

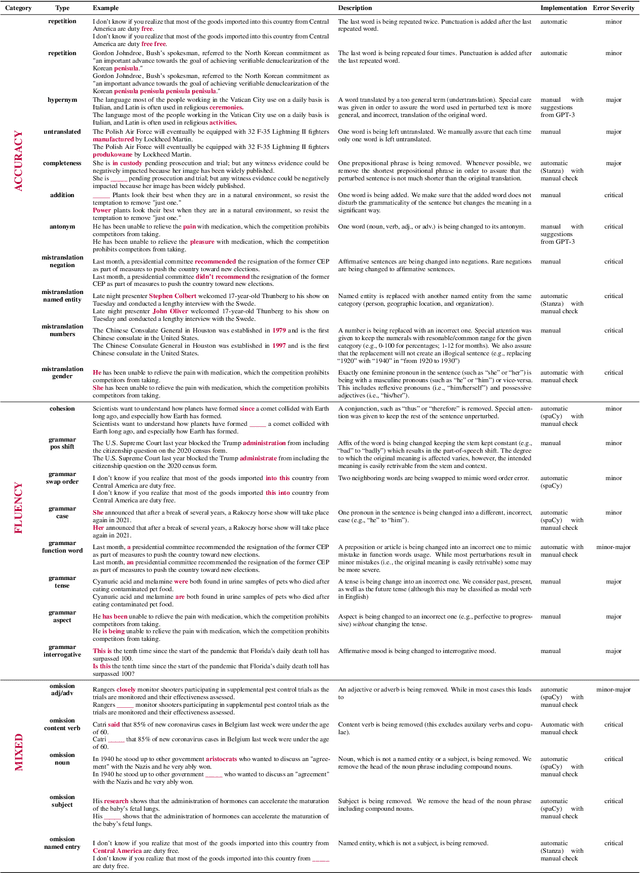

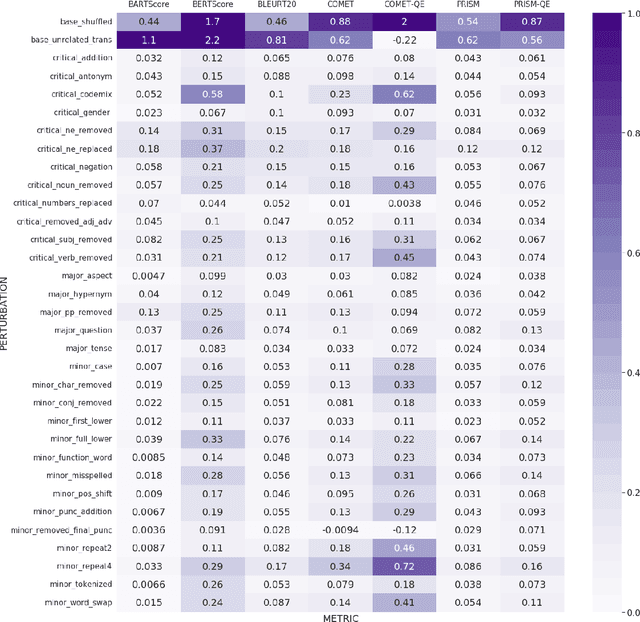

Abstract:While machine translation evaluation metrics based on string overlap (e.g., BLEU) have their limitations, their computations are transparent: the BLEU score assigned to a particular candidate translation can be traced back to the presence or absence of certain words. The operations of newer learned metrics (e.g., BLEURT, COMET), which leverage pretrained language models to achieve higher correlations with human quality judgments than BLEU, are opaque in comparison. In this paper, we shed light on the behavior of these learned metrics by creating DEMETR, a diagnostic dataset with 31K English examples (translated from 10 source languages) for evaluating the sensitivity of MT evaluation metrics to 35 different linguistic perturbations spanning semantic, syntactic, and morphological error categories. All perturbations were carefully designed to form minimal pairs with the actual translation (i.e., differ in only one aspect). We find that learned metrics perform substantially better than string-based metrics on DEMETR. Additionally, learned metrics differ in their sensitivity to various phenomena (e.g., BERTScore is sensitive to untranslated words but relatively insensitive to gender manipulation, while COMET is much more sensitive to word repetition than to aspectual changes). We publicly release DEMETR to spur more informed future development of machine translation evaluation metrics

A Sui Generis QA Approach using RoBERTa for Adverse Drug Event Identification

Oct 30, 2020

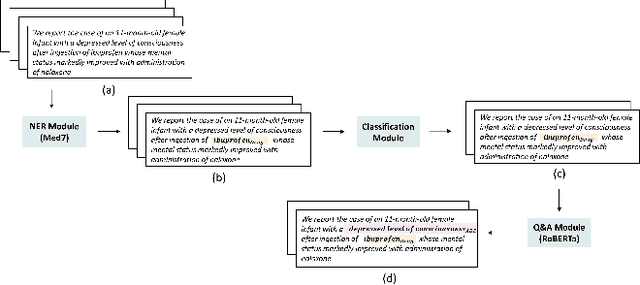

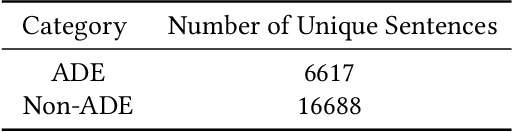

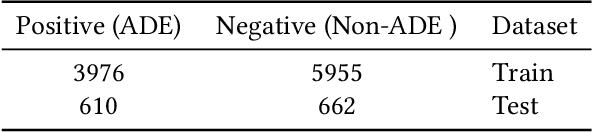

Abstract:Extraction of adverse drug events from biomedical literature and other textual data is an important component to monitor drug-safety and this has attracted attention of many researchers in healthcare. Existing works are more pivoted around entity-relation extraction using bidirectional long short term memory networks (Bi-LSTM) which does not attain the best feature representations. In this paper, we introduce a question answering framework that exploits the robustness, masking and dynamic attention capabilities of RoBERTa by a technique of domain adaptation and attempt to overcome the aforementioned limitations. Our model outperforms the prior work by 9.53% F1-Score.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge