Nima Najafzadeh

Deep Boosting Multi-Modal Ensemble Face Recognition with Sample-Level Weighting

Aug 18, 2023

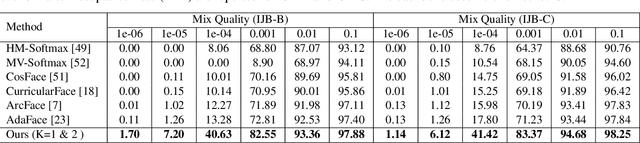

Abstract: Deep convolutional neural networks have achieved remarkable success in face recognition (FR), partly due to the abundant data availability. However, the current training benchmarks exhibit an imbalanced quality distribution; most images are of high quality. This poses issues for generalization on hard samples since they are underrepresented during training. In this work, we employ the multi-model boosting technique to deal with this issue. Inspired by the well-known AdaBoost, we propose a sample-level weighting approach to incorporate the importance of different samples into the FR loss. Individual models of the proposed framework are experts at distinct levels of sample hardness. Therefore, the combination of models leads to a robust feature extractor without losing the discriminability on the easy samples. Also, for incorporating the sample hardness into the training criterion, we analytically show the effect of sample mining on the important aspects of current angular margin loss functions, i.e., margin and scale. The proposed method shows superior performance in comparison with the state-of-the-art algorithms in extensive experiments on the CFP-FP, LFW, CPLFW, CALFW, AgeDB, TinyFace, IJB-B, and IJB-C evaluation datasets.
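The abstract gives no formulas, so the following is only a minimal NumPy sketch of the general idea: per-sample weights folded into an angular-margin softmax loss, followed by an AdaBoost-style reweighting that raises the weight of hard samples for the next model. It is not the authors' formulation. The function names, the ArcFace-style additive margin, the default `margin` and `scale` values, and the exponential update rule are all assumptions chosen for illustration.

```python
import numpy as np


def angular_margin_logits(embeddings, class_centers, labels, margin=0.5, scale=64.0):
    """ArcFace-style logits: scale * cos(theta + margin) on the target class.

    Assumption: an additive angular margin; the paper may use a different form.
    """
    e = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    c = class_centers / np.linalg.norm(class_centers, axis=1, keepdims=True)
    cos = np.clip(e @ c.T, -1.0, 1.0)
    theta = np.arccos(cos)
    rows = np.arange(len(labels))
    theta[rows, labels] += margin  # penalize only the ground-truth class angle
    return scale * np.cos(theta)


def weighted_loss(logits, labels, sample_weights):
    """Cross-entropy where each sample contributes in proportion to its weight."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    per_sample = -log_probs[np.arange(len(labels)), labels]
    w = sample_weights / sample_weights.sum()
    return float((w * per_sample).sum()), per_sample


def adaboost_style_update(sample_weights, is_hard, alpha):
    """Hypothetical update: multiply weights of hard samples by exp(alpha), renormalize."""
    w = sample_weights * np.exp(alpha * is_hard.astype(float))
    return w / w.sum()
```

In a boosting loop of this kind, each successive model would be trained with the updated weights, so later models concentrate on the samples earlier models found hard; how the paper defines hardness and combines the models is not stated in the abstract.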

AAFACE: Attribute-aware Attentional Network for Face Recognition

Aug 14, 2023

Abstract: In this paper, we present a new multi-branch neural network that simultaneously performs soft biometric (SB) prediction as an auxiliary modality and face recognition (FR) as the main task. Our proposed network named AAFace utilizes SB attributes to enhance the discriminative ability of FR representation. To achieve this goal, we propose an attribute-aware attentional integration (AAI) module to perform weighted integration of FR with SB feature maps. Our proposed AAI module is not only fully context-aware but also capable of learning complex relationships between input features by means of the sequential multi-scale channel and spatial sub-modules. Experimental results verify the superiority of our proposed network compared with the state-of-the-art (SoTA) SB prediction and FR methods.
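As a rough illustration of what a "weighted integration" of two feature maps through sequential channel and spatial gates could look like, here is a small NumPy sketch. It is not the AAI module itself: the abstract does not specify the architecture, the multi-scale aspect is omitted, and the function names, the single linear layer `w_channel`, and the convex-combination form are assumptions made purely for illustration.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def attentional_integration(fr, sb, w_channel):
    """Fuse FR and SB feature maps of shape (C, H, W) with a learned-style gate.

    w_channel is a hypothetical (C, C) weight matrix standing in for the
    channel sub-module; a real module would use trained layers.
    """
    u = fr + sb                                     # joint context of both inputs
    g_c = sigmoid(w_channel @ u.mean(axis=(1, 2)))  # channel gate, shape (C,)
    u_c = u * g_c[:, None, None]                    # channel-refined features
    g_s = sigmoid(u_c.mean(axis=0))                 # spatial gate, shape (H, W)
    g = g_c[:, None, None] * g_s[None, :, :]        # combined gate in (0, 1)
    return g * fr + (1.0 - g) * sb                  # per-element convex combination


rng = np.random.default_rng(0)
fr_map = rng.normal(size=(4, 5, 5))
sb_map = rng.normal(size=(4, 5, 5))
fused = attentional_integration(fr_map, sb_map, rng.normal(size=(4, 4)))
```

Because the gate lies strictly between 0 and 1, every fused value falls between the corresponding FR and SB values, which is one simple way to read "weighted integration"; the actual module may combine the inputs differently.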
