Niki Loppi

Towards Efficient and Scalable Training of Differentially Private Deep Learning

Jun 25, 2024

Abstract:Differentially private stochastic gradient descent (DP-SGD) is the standard algorithm for training machine learning models under differential privacy (DP). The major drawback of DP-SGD is the drop in utility which prior work has comprehensively studied. However, in practice another major drawback that hinders the large-scale deployment is the significantly higher computational cost. We conduct a comprehensive empirical study to quantify the computational cost of training deep learning models under DP and benchmark methods that aim at reducing the cost. Among these are more efficient implementations of DP-SGD and training with lower precision. Finally, we study the scaling behaviour using up to 80 GPUs.

Expansion of Visual Hints for Improved Generalization in Stereo Matching

Nov 01, 2022

Abstract:We introduce visual hints expansion for guiding stereo matching to improve generalization. Our work is motivated by the robustness of Visual Inertial Odometry (VIO) in computer vision and robotics, where a sparse and unevenly distributed set of feature points characterizes a scene. To improve stereo matching, we propose to elevate 2D hints to 3D points. These sparse and unevenly distributed 3D visual hints are expanded using a 3D random geometric graph, which enhances the learning and inference process. We evaluate our proposal on multiple widely adopted benchmarks and show improved performance without access to additional sensors other than the image sequence. To highlight practical applicability and symbiosis with visual odometry, we demonstrate how our methods run on embedded hardware.

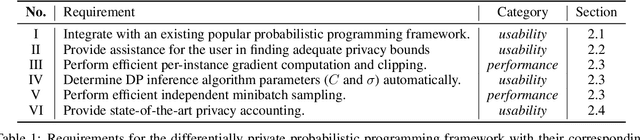

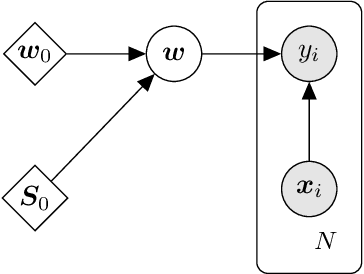

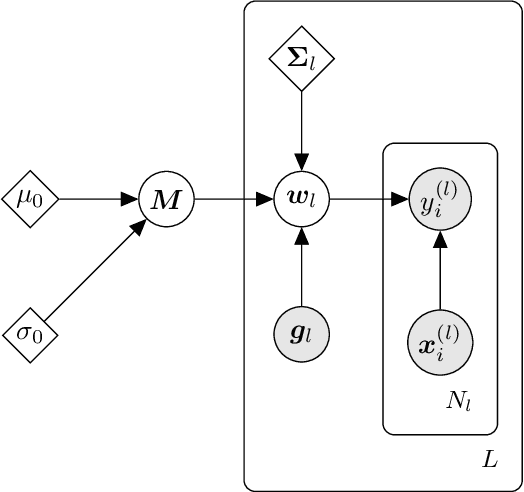

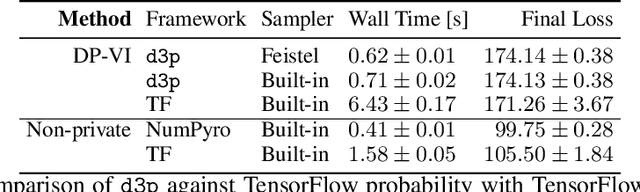

d3p -- A Python Package for Differentially-Private Probabilistic Programming

Mar 22, 2021

Abstract:We present d3p, a software package designed to help fielding runtime efficient widely-applicable Bayesian inference under differential privacy guarantees. d3p achieves general applicability to a wide range of probabilistic modelling problems by implementing the differentially private variational inference algorithm, allowing users to fit any parametric probabilistic model with a differentiable density function. d3p adopts the probabilistic programming paradigm as a powerful way for the user to flexibly define such models. We demonstrate the use of our software on a hierarchical logistic regression example, showing the expressiveness of the modelling approach as well as the ease of running the parameter inference. We also perform an empirical evaluation of the runtime of the private inference on a complex model and find an $\sim$10 fold speed-up compared to an implementation using TensorFlow Privacy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge