Nayyar Hussain

Heart Sound Segmentation using Bidirectional LSTMs with Attention

Apr 02, 2020

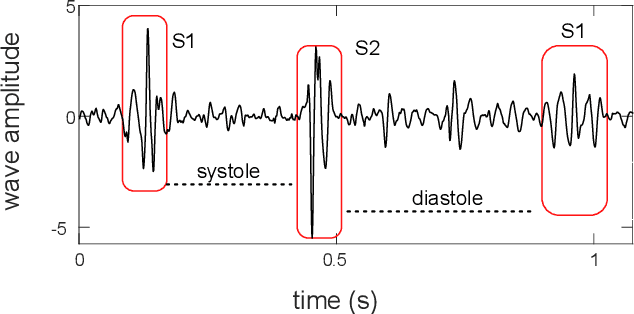

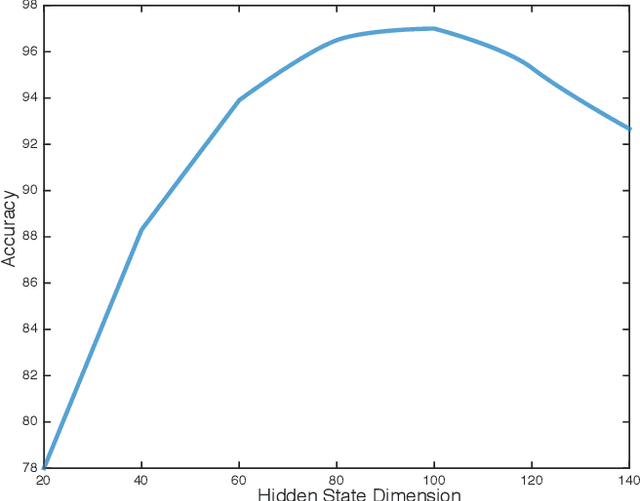

Abstract:This paper proposes a novel framework for the segmentation of phonocardiogram (PCG) signals into heart states, exploiting the temporal evolution of the PCG as well as considering the salient information that it provides for the detection of the heart state. We propose the use of recurrent neural networks and exploit recent advancements in attention based learning to segment the PCG signal. This allows the network to identify the most salient aspects of the signal and disregard uninformative information. The proposed method attains state-of-the-art performance on multiple benchmarks including both human and animal heart recordings. Furthermore, we empirically analyse different feature combinations including envelop features, wavelet and Mel Frequency Cepstral Coefficients (MFCC), and provide quantitative measurements that explore the importance of different features in the proposed approach. We demonstrate that a recurrent neural network coupled with attention mechanisms can effectively learn from irregular and noisy PCG recordings. Our analysis of different feature combinations shows that MFCC features and their derivatives offer the best performance compared to classical wavelet and envelop features. Heart sound segmentation is a crucial pre-processing step for many diagnostic applications. The proposed method provides a cost effective alternative to labour extensive manual segmentation, and provides a more accurate segmentation than existing methods. As such, it can improve the performance of further analysis including the detection of murmurs and ejection clicks. The proposed method is also applicable for detection and segmentation of other one dimensional biomedical signals.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge