Nannapas Banluesombatkul

EEG-BBNet: a Hybrid Framework for Brain Biometric using Graph Connectivity

Aug 17, 2022

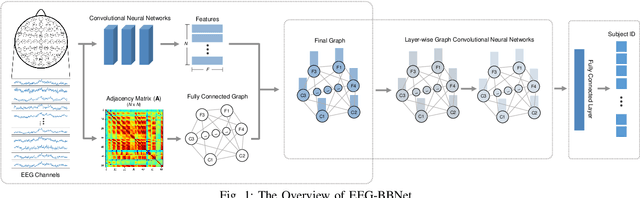

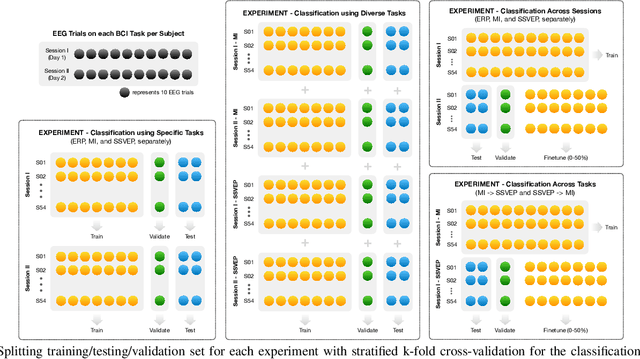

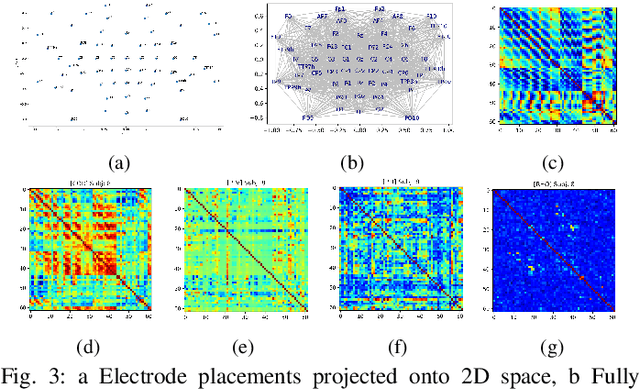

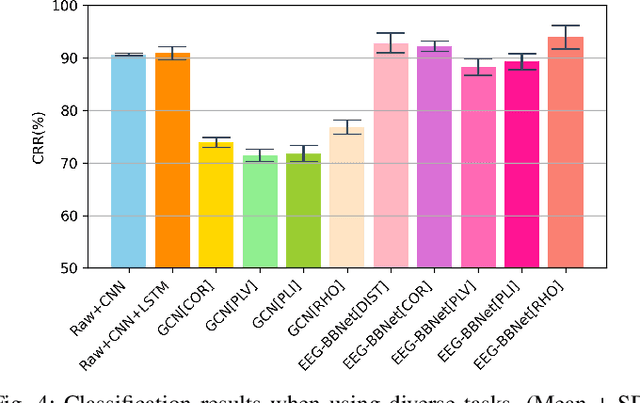

Abstract: Brain biometrics based on electroencephalography (EEG) have been used increasingly for personal identification. Traditional machine learning techniques as well as modern-day deep learning methods have been applied with promising results. In this paper we present EEG-BBNet, a hybrid network that integrates convolutional neural networks (CNN) with graph convolutional neural networks (GCNN). The model jointly exploits the ability of the CNN to extract features automatically and the capability of the GCNN to learn connectivity between EEG electrodes through a graph representation. We examine various connectivity measures, namely the Euclidean distance, Pearson's correlation coefficient, phase locking value, phase lag index, and Rho index. The performance of the proposed method is assessed on a benchmark dataset consisting of various brain-computer interface (BCI) tasks and compared with other state-of-the-art approaches. We found that our models outperform all baselines in the event-related potential (ERP) task, with average correct recognition rates of up to 99.26% using intra-session data. EEG-BBNet with Pearson's correlation and the Rho index provides the best classification results. In addition, our model demonstrates greater adaptability on inter-session and inter-task data. We also investigate the practicality of our proposed model with a smaller number of electrodes. Electrode placement over the frontal lobe region appears to be most appropriate, with minimal loss in performance.
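The abstract names several electrode-connectivity measures that become the graph fed to the GCNN. A minimal sketch, assuming one EEG epoch stored as an `(n_channels, n_samples)` NumPy array, of how three of them (Pearson's correlation, phase locking value, phase lag index) could be turned into adjacency matrices, followed by one standard Kipf-and-Welling-style graph-convolution step. The function names, the use of absolute correlation, and the GCN normalisation are illustrative assumptions, not EEG-BBNet's published implementation; the Euclidean distance and Rho index are omitted.

```python
import numpy as np
from scipy.signal import hilbert


def pearson_adjacency(eeg):
    """Absolute Pearson correlation between every pair of channels.

    eeg has shape (n_channels, n_samples). Taking the absolute value keeps
    edge weights non-negative for the normalisation in gcn_layer (assumption).
    """
    return np.abs(np.corrcoef(eeg))


def phase_adjacencies(eeg):
    """Phase locking value (PLV) and phase lag index (PLI) from Hilbert phases."""
    phase = np.angle(hilbert(eeg, axis=-1))           # instantaneous phase, (C, T)
    diff = phase[:, None, :] - phase[None, :, :]      # pairwise phase differences, (C, C, T)
    plv = np.abs(np.mean(np.exp(1j * diff), axis=-1))  # consistency of the phase difference
    pli = np.abs(np.mean(np.sign(np.sin(diff)), axis=-1))  # asymmetry of the phase difference
    return plv, pli


def gcn_layer(features, adjacency, weights):
    """One graph convolution: ReLU(D^-1/2 (A + I) D^-1/2 H W).

    features: (n_channels, n_in) node features, weights: (n_in, n_out).
    """
    a = adjacency.copy()
    np.fill_diagonal(a, 0.0)                          # drop existing self-edges ...
    a_hat = a + np.eye(a.shape[0])                    # ... then add unit self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))     # symmetric degree normalisation
    a_norm = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ features @ weights, 0.0)
```

PLI counts only whether one channel's phase consistently leads or lags another's, so zero-lag coupling (for example, volume conduction) contributes nothing, whereas PLV and Pearson's correlation treat it as maximal. That is one reason the choice of connectivity measure can change which electrode pairs the graph emphasises.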

MetaSleepLearner: Fast Adaptation of Bio-signals-Based Sleep Stage Classifier to New Individual Subject Using Meta-Learning

Apr 14, 2020

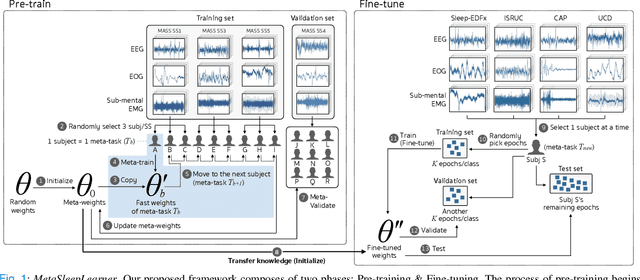

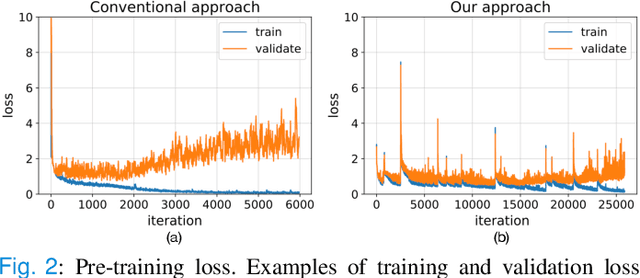

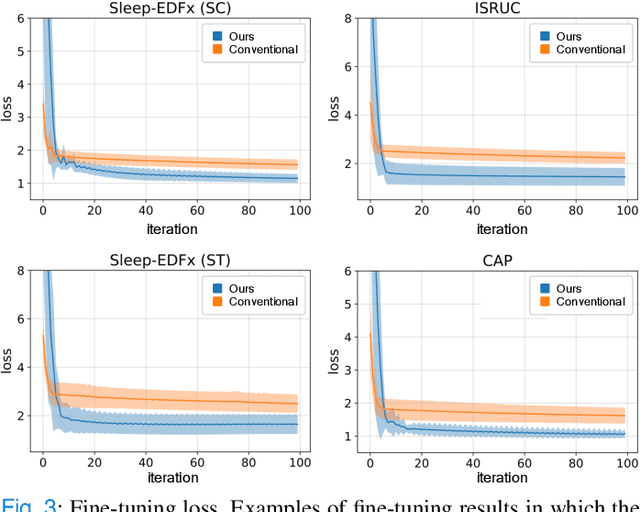

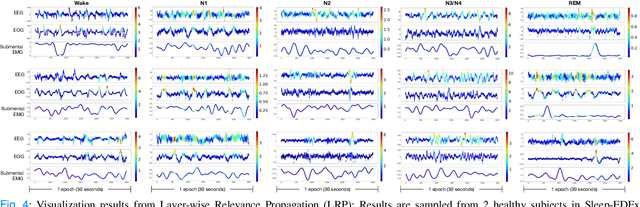

Abstract: Objective: Identifying sleep stages from bio-signals requires time-consuming, tedious labor by skilled clinicians. Deep learning approaches have been introduced to tackle the problem of automatic sleep stage classification. However, fully replacing clinicians with an automatic system has drawbacks. We therefore aim to develop a framework that assists clinicians and lessens their workload. Methods: We propose a transfer learning framework, MetaSleepLearner, based on Model-Agnostic Meta-Learning (MAML), to transfer sleep staging knowledge acquired from a large dataset to a new individual subject. MAML makes this possible by letting clinicians label only a few samples and leaving the rest to the system. Layer-wise Relevance Propagation (LRP) was also applied to understand what our approach learns. Results: On all acquired datasets, MetaSleepLearner achieved improvements of 6.15% to 12.12% over the conventional approach, with a statistically significant difference between the means of the two approaches. Visualizations of the model interpretation after adaptation to each subject also indicated that the model learned reasonable features. Conclusion: MetaSleepLearner outperformed the conventional approach as a result of fine-tuning on recordings of both healthy subjects and patients. Significance: This is the first paper to investigate a non-conventional pre-training method, MAML, for this task, resulting in a framework for human-machine collaboration in sleep stage classification that eases clinicians' burden by requiring them to label only a few epochs rather than an entire recording.
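The core MAML idea in the abstract is to learn an initialisation that adapts well after a few gradient steps on a handful of labelled samples from a new subject. A minimal sketch of that loop, assuming toy linear-regression "tasks" (each a hypothetical subject whose weights perturb a shared vector), a single inner gradient step, and hand-picked learning rates; MetaSleepLearner's actual network, number of inner steps, and hyperparameters are not reproduced here, and every name below is illustrative.

```python
import numpy as np


def mse(w, X, y):
    """Mean squared error of the linear model X @ w."""
    return float(np.mean((X @ w - y) ** 2))


def mse_grad_hess(w, X, y):
    """Exact gradient and Hessian of the mean squared error for X @ w."""
    n = X.shape[0]
    grad = 2.0 / n * X.T @ (X @ w - y)
    hess = 2.0 / n * X.T @ X
    return grad, hess


def adapt(w, X_s, y_s, inner_lr):
    """Inner loop: one gradient step on the few labelled support samples."""
    g, _ = mse_grad_hess(w, X_s, y_s)
    return w - inner_lr * g


def sample_task(rng, w_shared, dim, n_support, n_query, spread=0.1):
    """Hypothetical task family: each 'subject' perturbs a shared weight vector."""
    w_task = w_shared + spread * rng.standard_normal(dim)
    X = rng.standard_normal((n_support + n_query, dim))
    y = X @ w_task
    return X[:n_support], y[:n_support], X[n_support:], y[n_support:]


def maml(rng, w_shared, dim=5, meta_steps=500, tasks_per_batch=8,
         n_support=3, n_query=20, inner_lr=0.05, outer_lr=0.05):
    """Outer loop: update the initialisation through the inner adaptation step."""
    w = np.zeros(dim)
    for _ in range(meta_steps):
        meta_grad = np.zeros(dim)
        for _ in range(tasks_per_batch):
            X_s, y_s, X_q, y_q = sample_task(rng, w_shared, dim, n_support, n_query)
            g_s, H_s = mse_grad_hess(w, X_s, y_s)
            w_adapted = w - inner_lr * g_s
            g_q, _ = mse_grad_hess(w_adapted, X_q, y_q)
            # Chain rule through the inner step: d(w_adapted)/dw = I - inner_lr * H_s
            meta_grad += (np.eye(dim) - inner_lr * H_s) @ g_q
        w -= outer_lr * meta_grad / tasks_per_batch
    return w
```

The `(I - inner_lr * H_s)` factor is the second-order term that distinguishes full MAML from first-order variants; for a linear model it can be computed exactly, which keeps the sketch short. In use, the meta-learned `w` is passed to `adapt` with the few epochs a clinician has labelled for the new subject.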

Affective EEG-Based Person Identification Using the Deep Learning Approach

Jul 15, 2018

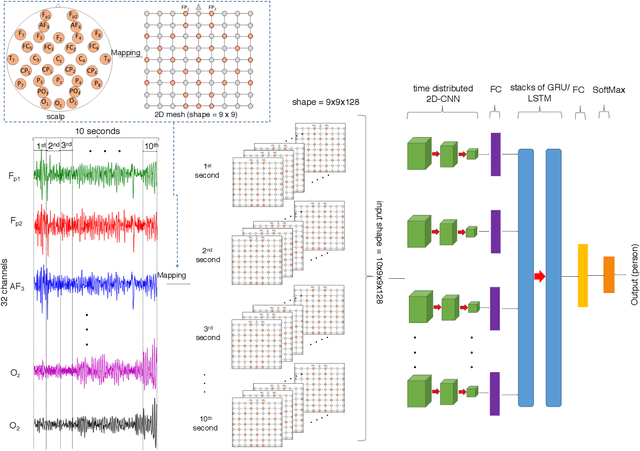

Abstract: There are several reports on affective electroencephalography-based personal identification (affective EEG-based PI), one of which uses a small dataset, while another reaches a mean correct recognition rate (CRR) below 90%. Thus, the aim of this paper is to improve and evaluate the performance of affective EEG-based PI using a deep learning approach. The state-of-the-art EEG dataset DEAP was used as the standard for affect recognition. Thirty-two healthy participants took part in the experiment. They were asked to watch affect-eliciting music videos and give subjective ratings for forty video clips during the EEG measurement. An EEG amplifier with thirty-two electrodes was used to record affective EEG from the participants. To identify individuals from their EEG, a cascade of deep learning architectures combining Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) was proposed: the CNNs handle the spatial information in the EEG, while the RNNs extract the temporal information. Two cascades were compared: CNNs followed by Long Short-Term Memory units (CNN-LSTM) and CNNs followed by Gated Recurrent Units (CNN-GRU). Experimental results indicate that CNN-GRU and CNN-LSTM can handle EEG (4–40 Hz) from different affective states and reach mean CRRs of up to 99.90–100%. In contrast, a traditional machine learning approach such as a support vector machine (SVM) using power spectral density (PSD) features does not reach a 50% mean CRR. To make the approach more practical, the number of electrodes was reduced from thirty-two to five; F3, F4, Fz, F7, and F8 were found to be the best five electrodes for scenarios similar to those in this study. With these five electrodes, CNN-GRU and CNN-LSTM reached mean CRRs of up to 99.17% and 98.23%, respectively.
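The abstract compares the deep models against an SVM on power spectral density features of 4–40 Hz EEG and scores everything by mean CRR. A minimal sketch of that preprocessing and of the CRR metric, assuming a 128 Hz sampling rate (the rate of DEAP's preprocessed release; check it against your data), a 4th-order zero-phase Butterworth band-pass, and 1 s Welch segments. These parameters and all function names are illustrative assumptions, not the settings reported in the paper.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, welch

FS = 128  # assumed sampling rate in Hz


def bandpass_4_40(eeg, fs=FS, order=4):
    """Zero-phase 4-40 Hz Butterworth band-pass along the last (time) axis."""
    sos = butter(order, [4.0, 40.0], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, eeg, axis=-1)


def band_psd(eeg, fs=FS):
    """Welch PSD per channel, keeping only the 4-40 Hz bins (1 Hz resolution)."""
    freqs, pxx = welch(eeg, fs=fs, nperseg=fs, axis=-1)
    band = (freqs >= 4.0) & (freqs <= 40.0)
    return freqs[band], pxx[..., band]


def psd_feature_vector(eeg, fs=FS):
    """Log band power of every channel, flattened into one feature vector per trial."""
    _, pxx = band_psd(eeg, fs)
    return np.log(pxx + 1e-12).reshape(*eeg.shape[:-2], -1)


def crr(y_true, y_pred):
    """Correct recognition rate: fraction of trials assigned to the right person."""
    return float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))
```

Features from `psd_feature_vector` could be fed to any off-the-shelf SVM classifier, with `crr` averaged across folds or subjects to obtain a mean CRR.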
