Nabila Abraham

Explaining the Effectiveness of Multi-Task Learning for Efficient Knowledge Extraction from Spine MRI Reports

May 06, 2022

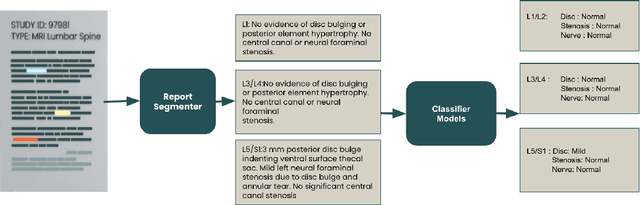

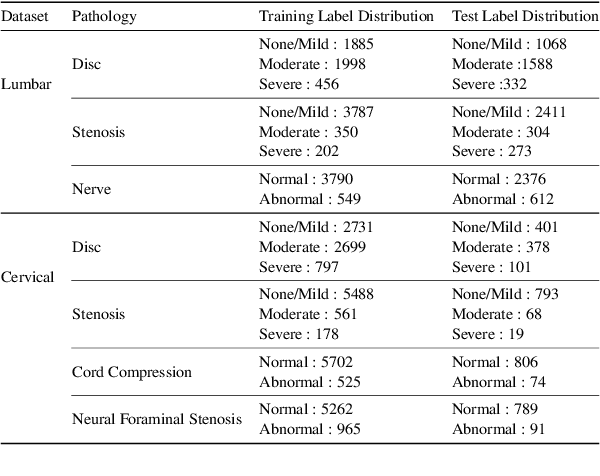

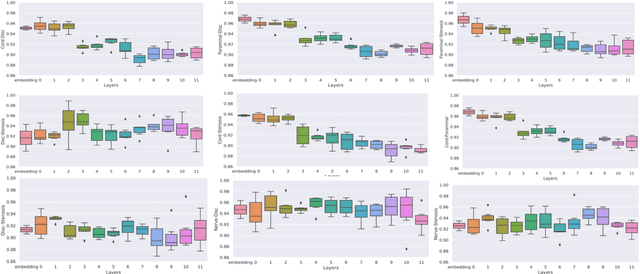

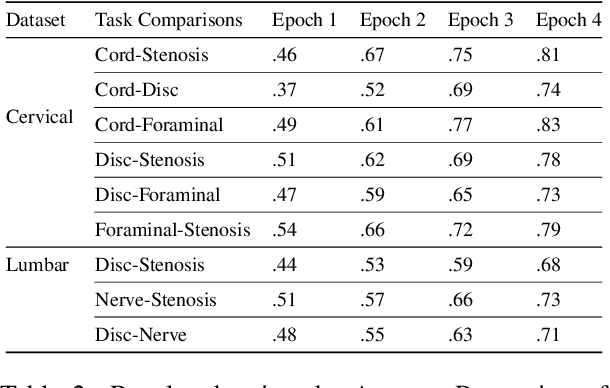

Abstract:Pretrained Transformer based models finetuned on domain specific corpora have changed the landscape of NLP. However, training or fine-tuning these models for individual tasks can be time consuming and resource intensive. Thus, a lot of current research is focused on using transformers for multi-task learning (Raffel et al.,2020) and how to group the tasks to help a multi-task model to learn effective representations that can be shared across tasks (Standley et al., 2020; Fifty et al., 2021). In this work, we show that a single multi-tasking model can match the performance of task specific models when the task specific models show similar representations across all of their hidden layers and their gradients are aligned, i.e. their gradients follow the same direction. We hypothesize that the above observations explain the effectiveness of multi-task learning. We validate our observations on our internal radiologist-annotated datasets on the cervical and lumbar spine. Our method is simple and intuitive, and can be used in a wide range of NLP problems.

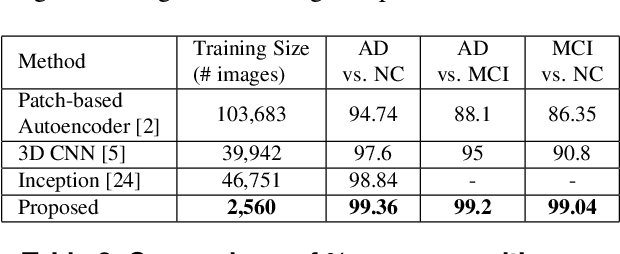

Transfer Learning with intelligent training data selection for prediction of Alzheimer's Disease

Jun 04, 2019

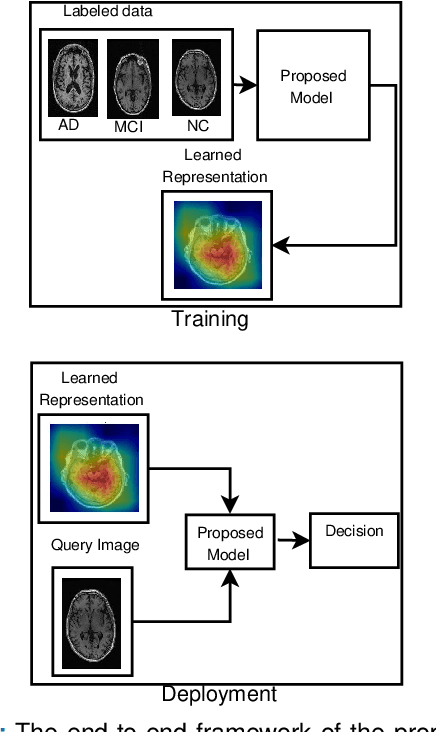

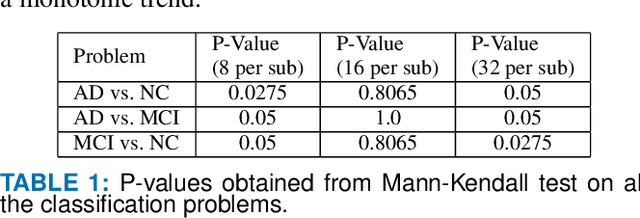

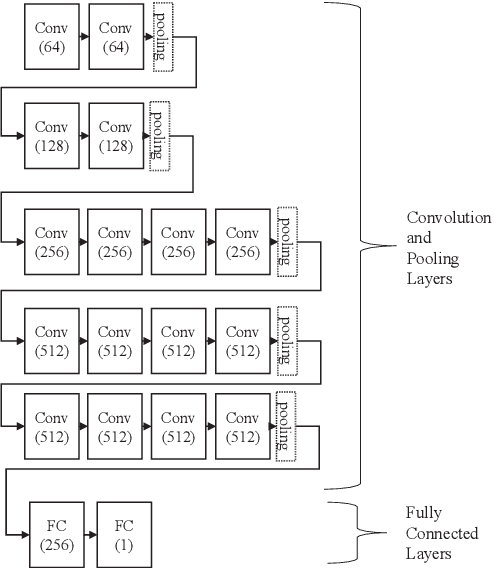

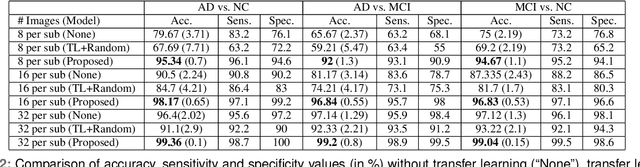

Abstract:Detection of Alzheimer's Disease (AD) from neuroimaging data such as MRI through machine learning has been a subject of intense research in recent years. Recent success of deep learning in computer vision has progressed such research further. However, common limitations with such algorithms are reliance on a large number of training images, and requirement of careful optimization of the architecture of deep networks. In this paper, we attempt solving these issues with transfer learning, where the state-of-the-art VGG architecture is initialized with pre-trained weights from large benchmark datasets consisting of natural images. The network is then fine-tuned with layer-wise tuning, where only a pre-defined group of layers are trained on MRI images. To shrink the training data size, we employ image entropy to select the most informative slices. Through experimentation on the ADNI dataset, we show that with training size of 10 to 20 times smaller than the other contemporary methods, we reach state-of-the-art performance in AD vs. NC, AD vs. MCI, and MCI vs. NC classification problems, with a 4% and a 7% increase in accuracy over the state-of-the-art for AD vs. MCI and MCI vs. NC, respectively. We also provide detailed analysis of the effect of the intelligent training data selection method, changing the training size, and changing the number of layers to be fine-tuned. Finally, we provide Class Activation Maps (CAM) that demonstrate how the proposed model focuses on discriminative image regions that are neuropathologically relevant, and can help the healthcare practitioner in interpreting the model's decision making process.

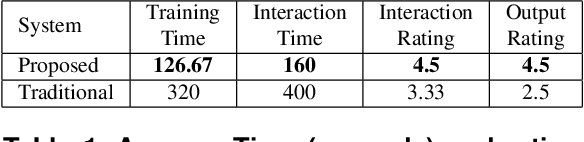

Machine Learning on Biomedical Images: Interactive Learning, Transfer Learning, Class Imbalance, and Beyond

Feb 13, 2019

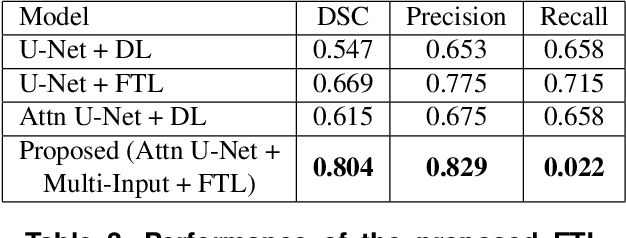

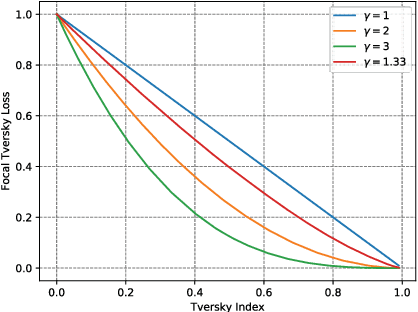

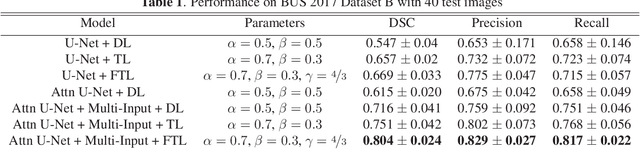

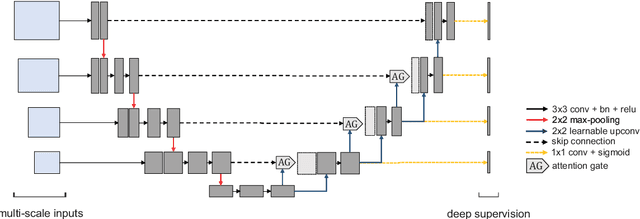

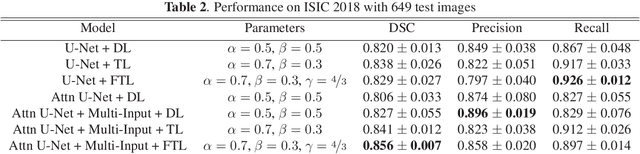

Abstract:In this paper, we highlight three issues that limit performance of machine learning on biomedical images, and tackle them through 3 case studies: 1) Interactive Machine Learning (IML): we show how IML can drastically improve exploration time and quality of direct volume rendering. 2) transfer learning: we show how transfer learning along with intelligent pre-processing can result in better Alzheimer's diagnosis using a much smaller training set 3) data imbalance: we show how our novel focal Tversky loss function can provide better segmentation results taking into account the imbalanced nature of segmentation datasets. The case studies are accompanied by in-depth analytical discussion of results with possible future directions.

A Novel Focal Tversky loss function with improved Attention U-Net for lesion segmentation

Oct 18, 2018

Abstract:We propose a generalized focal loss function based on the Tversky index to address the issue of data imbalance in medical image segmentation. Compared to the commonly used Dice loss, our loss function achieves a better trade off between precision and recall when training on small structures such as lesions. To evaluate our loss function, we improve the attention U-Net model by incorporating an image pyramid to preserve contextual features. We experiment on the BUS 2017 dataset and ISIC 2018 dataset where lesions occupy 4.84% and 21.4% of the images area and improve segmentation accuracy when compared to the standard U-Net by 25.7% and 3.6%, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge