Muhammad Nazrul Islam

Bengali Sign Language Recognition through Hand Pose Estimation using Multi-Branch Spatial-Temporal Attention Model

Aug 26, 2024

Abstract: Hand gesture-based sign language recognition (SLR) is one of the most advanced applications of machine learning and computer vision that relies on hand gestures. Although many researchers have explored Bengali Sign Language (BSL) recognition in the past few years, specific issues remain unaddressed, such as skeleton-based and transformer-based BSL recognition. In addition, BSL models have rarely been evaluated under varied environmental conditions, so there is little evidence of how well existing models generalise to signs encountered in daily life. As a consequence, existing BSL recognition systems provide a limited perspective on their generalisation ability, as they are tested on datasets containing a few BSL alphabets whose gestures differ widely and are easy to differentiate. To overcome these limitations, we propose a spatial-temporal attention-based BSL recognition model that uses hand joint skeletons extracted from image sequences. The main aim of using hand skeleton-based BSL data is to preserve privacy and to work with low-resolution image sequences, which require minimal computational cost and modest hardware. Our model captures discriminative structural displacements and short-range dependencies based on unified joint features projected onto a high-dimensional feature space. Specifically, combining a Separable TCN with a multi-head spatial-temporal attention architecture yielded high accuracy. Extensive experiments on a proposed dataset and two benchmark BSL datasets, covering intra- and inter-dataset evaluation settings, demonstrated that our proposed models achieve competitive performance with extremely low computational complexity and run faster than existing models.

ExplainableDetector: Exploring Transformer-based Language Modeling Approach for SMS Spam Detection with Explainability Analysis

May 12, 2024

Abstract: SMS, or short messaging service, is a widely used and cost-effective communication medium that has unfortunately become a haven for unwanted messages, commonly known as SMS spam. With the rapid adoption of smartphones and Internet connectivity, SMS spam has emerged as a prevalent threat. Spammers have taken notice of the significance of SMS for mobile phone users. Consequently, with the emergence of new cybersecurity threats, the volume of SMS spam has grown significantly in recent years. The unstructured format of SMS data creates significant challenges for SMS spam detection, making it more difficult to fight spam attacks in the cybersecurity domain. In this work, we employ optimized and fine-tuned transformer-based Large Language Models (LLMs) to detect spam messages. We use a benchmark SMS spam dataset, apply several preprocessing techniques to obtain clean, noise-free data, and address the class imbalance problem using text augmentation. The experiments showed that RoBERTa, our optimized and fine-tuned variant of BERT (Bidirectional Encoder Representations from Transformers), achieved an accuracy of 99.84%. We also apply Explainable Artificial Intelligence (XAI) techniques to calculate positive and negative coefficient scores, which explore and explain the transparency of the fine-tuned model in this text-based SMS spam detection task. In addition, traditional Machine Learning (ML) models were examined to compare their performance with the transformer-based models. This analysis describes how LLMs can have a positive impact on complex text-based spam data in the cybersecurity field.

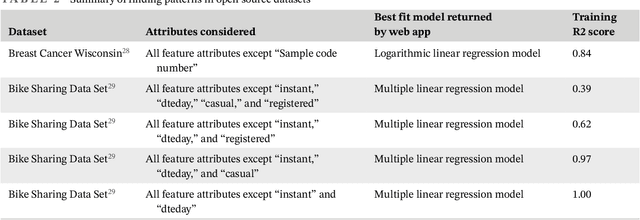

CurFi: An automated tool to find the best regression analysis model using curve fitting

May 16, 2022

Abstract: Regression analysis is a well-known quantitative research method that primarily explores the relationship between one or more independent variables and a dependent variable. Conducting regression analysis manually on large datasets with multiple independent variables can be tedious. An automated system for regression analysis would be of great help to researchers as well as non-expert users. Thus, the objective of this research is to design and develop an automated curve fitting system. As an outcome, a curve fitting system named "CurFi" was developed that uses linear regression models to fit a curve to a dataset and to find the best-fit model. The system lets users upload a dataset, split it into a training set and a test set, and select the relevant features and label; it then returns the best-fit linear regression model once training is completed. The tool should be a useful resource for users with limited technical knowledge, who will be able to find the best-fit regression model for a dataset using "CurFi".
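The workflow described in this abstract (split the data, fit several linear regression models, return the best fit) can be illustrated with a minimal sketch. It is not the CurFi implementation: the choice of polynomial candidates, the use of test-set mean squared error as the selection criterion, and every function name below (`fit_polynomial`, `best_fit`, and so on) are assumptions made for illustration.

```python
import random


def solve_linear_system(a, b):
    """Solve a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b_i] for row, b_i in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * w[j] for j in range(i + 1, n))
        w[i] = (m[i][n] - s) / m[i][i]
    return w


def fit_polynomial(xs, ys, degree):
    """Least-squares fit of a model that is linear in its coefficients."""
    rows = [[x ** k for k in range(degree + 1)] for x in xs]
    n = degree + 1
    # Normal equations: (A^T A) w = A^T y
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    aty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(n)]
    return solve_linear_system(ata, aty)


def predict(w, x):
    return sum(c * x ** k for k, c in enumerate(w))


def mse(w, xs, ys):
    return sum((predict(w, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def best_fit(xs, ys, degrees=(1, 2, 3), test_fraction=0.25, seed=0):
    """Split into train/test, fit each candidate, return the lowest test MSE."""
    idx = list(range(len(xs)))
    random.Random(seed).shuffle(idx)
    n_test = max(1, int(len(idx) * test_fraction))
    test, train = idx[:n_test], idx[n_test:]
    scores = {}
    for d in degrees:
        w = fit_polynomial([xs[i] for i in train], [ys[i] for i in train], d)
        scores[d] = mse(w, [xs[i] for i in test], [ys[i] for i in test])
    return min(scores, key=scores.get), scores


if __name__ == "__main__":
    # Synthetic data (hypothetical): an exact quadratic relationship.
    xs = [i / 2 for i in range(20)]
    ys = [2 * x * x + 1 for x in xs]
    print(best_fit(xs, ys, degrees=(1, 2)))
```

Scoring on a held-out test set rather than the training set keeps the comparison from always favouring the most flexible candidate, which is presumably why the abstract mentions the split.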

A Dynamic Topic Identification and Labeling Approach of COVID-19 Tweets

Aug 13, 2021

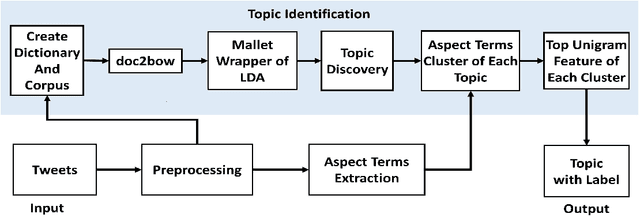

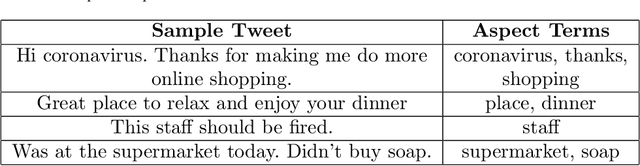

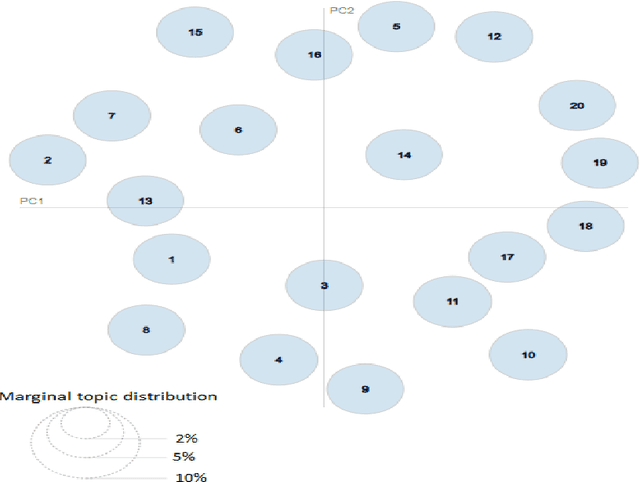

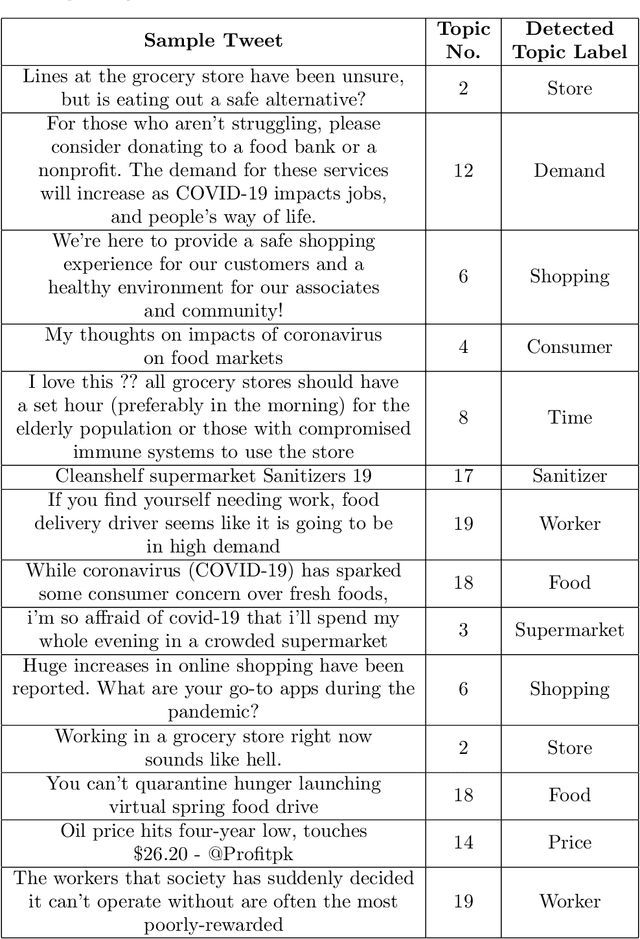

Abstract: This paper formulates the problem of dynamically identifying key topics with proper labels from COVID-19 tweets to provide an overview of wider public opinion. Social media is now one of the main ways to connect people through Internet technology and is considered an essential part of daily life. In late December 2019, an outbreak of the novel coronavirus, COVID-19, was reported, and the World Health Organization declared an emergency due to its rapid spread all over the world. The COVID-19 epidemic has affected how many people across the globe use social media. Twitter is one of the most influential social media services and has seen a dramatic increase in use since the epidemic began. Dynamically extracting specific topics with labels from COVID-19 tweets, rather than labeling topics manually, is therefore a challenging problem for highlighting conversations. In this paper, we propose a framework that automatically identifies key topics with labels from tweets using the top unigram feature of the aspect-term clusters drawn from topics generated by Latent Dirichlet Allocation (LDA). Our experimental results show that this dynamic topic identification and labeling approach is effective, with an accuracy of 85.48% with respect to the manual static approach.
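The labeling step described in this abstract can be sketched as follows. This is only an illustration under stated assumptions, not the paper's framework: it assumes the LDA topics are already available as term-to-weight mappings, interprets "top unigram" as the highest-weighted single-word term in each topic, and scores agreement with manual labels by exact match. The function names and the sample weights are hypothetical.

```python
def label_topics(topic_term_weights, stopwords=frozenset()):
    """Pick the highest-weighted unigram of each topic as its label.

    topic_term_weights: {topic_id: {term: weight}}, assumed to come from an
    already-trained LDA model. Multi-word terms and stopwords are skipped.
    """
    labels = {}
    for topic_id, weights in topic_term_weights.items():
        candidates = {
            term: w
            for term, w in weights.items()
            if " " not in term and term not in stopwords
        }
        labels[topic_id] = max(candidates, key=candidates.get) if candidates else None
    return labels


def labeling_accuracy(auto_labels, manual_labels):
    """Fraction of topics whose automatic label equals the manual label."""
    matches = sum(auto_labels.get(t) == m for t, m in manual_labels.items())
    return matches / len(manual_labels)


if __name__ == "__main__":
    # Hypothetical topic-term weights, for illustration only.
    topics = {
        0: {"vaccine": 0.12, "dose": 0.08, "covid": 0.20},
        1: {"lockdown": 0.15, "home": 0.10, "stay home": 0.30},
    }
    auto = label_topics(topics, stopwords={"covid"})
    print(auto)
    print(labeling_accuracy(auto, {0: "vaccine", 1: "quarantine"}))
```

Filtering corpus-wide terms such as "covid" through the stopword set is a design choice of this sketch; without it, every topic in a single-subject corpus would tend to receive the same label.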

A Survey on the Use of AI and ML for Fighting the COVID-19 Pandemic

Aug 03, 2020

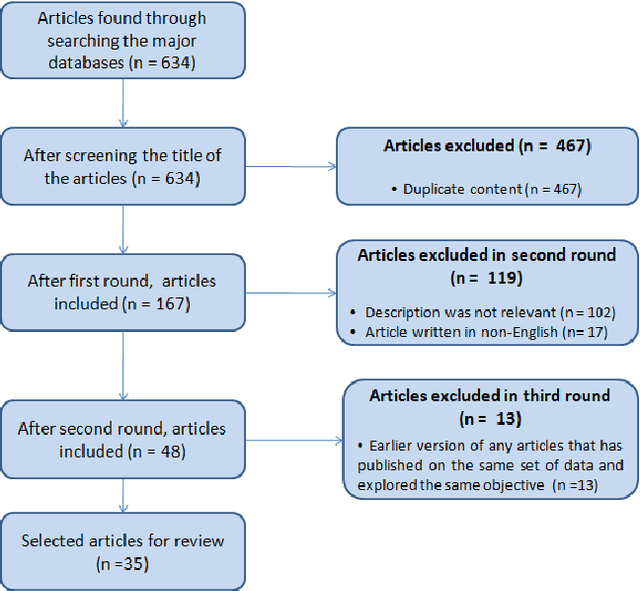

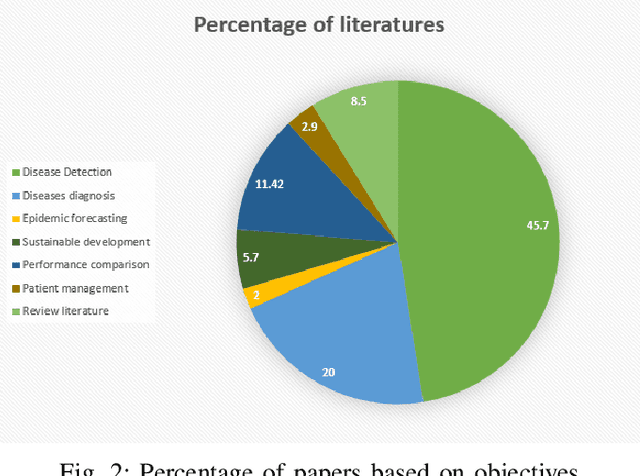

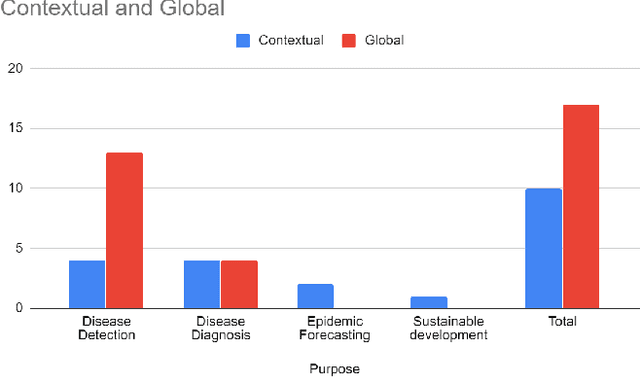

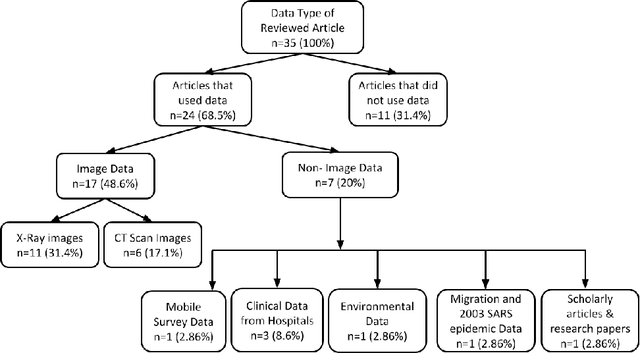

Abstract: Artificial intelligence (AI) and machine learning (ML) have brought about a paradigm shift in health care and can be used for decision support and forecasting by exploring medical data. Recent studies have shown that AI and ML can be used to fight the COVID-19 pandemic. The objective of this review is therefore to summarize recent AI- and ML-based studies that have focused on fighting the COVID-19 pandemic. From an initial set of 634 articles, a total of 35 articles were finally selected through an extensive inclusion-exclusion process. In our review, we explored the objectives of the existing studies (i.e., the role of AI/ML in fighting the COVID-19 pandemic); the context of each study (i.e., whether it focused on a specific country or took a global perspective); the type and volume of the dataset; the methodology, algorithms, or techniques adopted in the prediction or diagnosis processes; and a mapping of the algorithms and techniques to the data types, highlighting their prediction and classification accuracy. We particularly focused on the uses of AI/ML in analyzing pandemic data in order to depict the most recent progress of AI in fighting COVID-19, and we pointed out the potential scope for further research.
