Moran Barenboim

Value Gradients with Action Adaptive Search Trees in Continuous (PO)MDPs

Mar 15, 2025

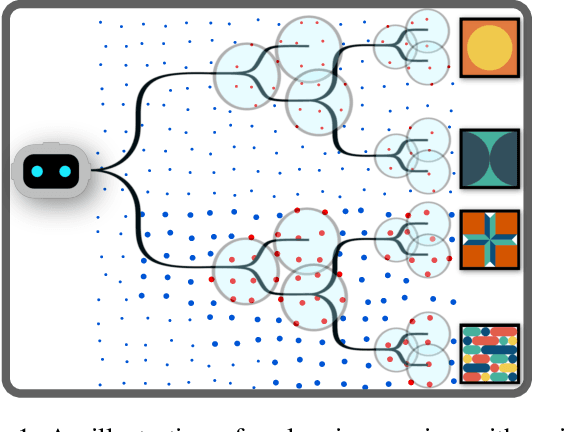

Abstract:Solving Partially Observable Markov Decision Processes (POMDPs) in continuous state, action and observation spaces is key for autonomous planning in many real-world mobility and robotics applications. Current approaches are mostly sample based, and cannot hope to reach near-optimal solutions in reasonable time. We propose two complementary theoretical contributions. First, we formulate a novel Multiple Importance Sampling (MIS) tree for value estimation, that allows to share value information between sibling action branches. The novel MIS tree supports action updates during search time, such as gradient-based updates. Second, we propose a novel methodology to compute value gradients with online sampling based on transition likelihoods. It is applicable to MDPs, and we extend it to POMDPs via particle beliefs with the application of the propagated belief trick. The gradient estimator is computed in practice using the MIS tree with efficient Monte Carlo sampling. These two parts are combined into a new planning algorithm Action Gradient Monte Carlo Tree Search (AGMCTS). We demonstrate in a simulated environment its applicability, advantages over continuous online POMDP solvers that rely solely on sampling, and we discuss further implications.

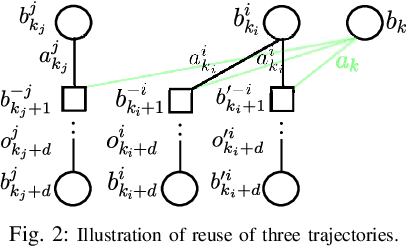

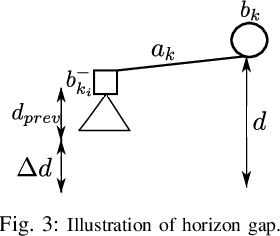

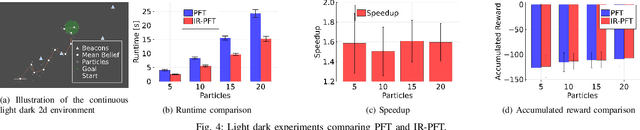

Previous Knowledge Utilization In Online Anytime Belief Space Planning

Dec 17, 2024

Abstract:Online planning under uncertainty remains a critical challenge in robotics and autonomous systems. While tree search techniques are commonly employed to construct partial future trajectories within computational constraints, most existing methods discard information from previous planning sessions considering continuous spaces. This study presents a novel, computationally efficient approach that leverages historical planning data in current decision-making processes. We provide theoretical foundations for our information reuse strategy and introduce an algorithm based on Monte Carlo Tree Search (MCTS) that implements this approach. Experimental results demonstrate that our method significantly reduces computation time while maintaining high performance levels. Our findings suggest that integrating historical planning information can substantially improve the efficiency of online decision-making in uncertain environments, paving the way for more responsive and adaptive autonomous systems.

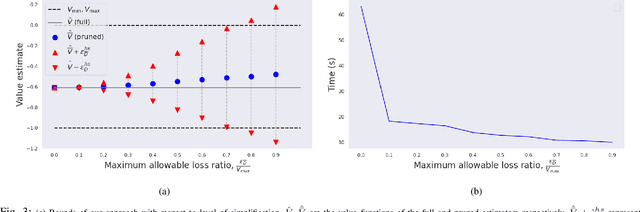

Simplifying Complex Observation Models in Continuous POMDP Planning with Probabilistic Guarantees and Practice

Nov 13, 2023

Abstract:Solving partially observable Markov decision processes (POMDPs) with high dimensional and continuous observations, such as camera images, is required for many real life robotics and planning problems. Recent researches suggested machine learned probabilistic models as observation models, but their use is currently too computationally expensive for online deployment. We deal with the question of what would be the implication of using simplified observation models for planning, while retaining formal guarantees on the quality of the solution. Our main contribution is a novel probabilistic bound based on a statistical total variation distance of the simplified model. We show that it bounds the theoretical POMDP value w.r.t. original model, from the empirical planned value with the simplified model, by generalizing recent results of particle-belief MDP concentration bounds. Our calculations can be separated into offline and online parts, and we arrive at formal guarantees without having to access the costly model at all during planning, which is also a novel result. Finally, we demonstrate in simulation how to integrate the bound into the routine of an existing continuous online POMDP solver.

Online POMDP Planning with Anytime Deterministic Guarantees

Oct 04, 2023

Abstract:Autonomous agents operating in real-world scenarios frequently encounter uncertainty and make decisions based on incomplete information. Planning under uncertainty can be mathematically formalized using partially observable Markov decision processes (POMDPs). However, finding an optimal plan for POMDPs can be computationally expensive and is feasible only for small tasks. In recent years, approximate algorithms, such as tree search and sample-based methodologies, have emerged as state-of-the-art POMDP solvers for larger problems. Despite their effectiveness, these algorithms offer only probabilistic and often asymptotic guarantees toward the optimal solution due to their dependence on sampling. To address these limitations, we derive a deterministic relationship between a simplified solution that is easier to obtain and the theoretically optimal one. First, we derive bounds for selecting a subset of the observations to branch from while computing a complete belief at each posterior node. Then, since a complete belief update may be computationally demanding, we extend the bounds to support reduction of both the state and the observation spaces. We demonstrate how our guarantees can be integrated with existing state-of-the-art solvers that sample a subset of states and observations. As a result, the returned solution holds deterministic bounds relative to the optimal policy. Lastly, we substantiate our findings with supporting experimental results.

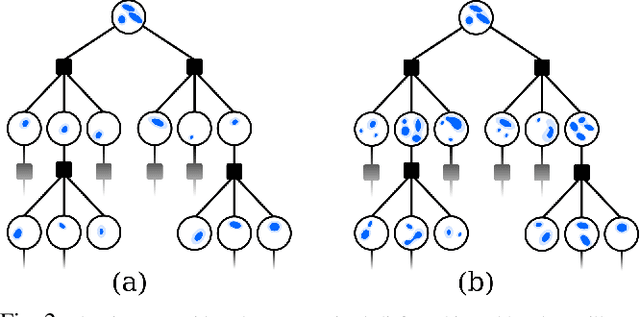

Data Association Aware POMDP Planning with Hypothesis Pruning Performance Guarantees

Mar 03, 2023

Abstract:Autonomous agents that operate in the real world must often deal with partial observability, which is commonly modeled as partially observable Markov decision processes (POMDPs). However, traditional POMDP models rely on the assumption of complete knowledge of the observation source, known as fully observable data association. To address this limitation, we propose a planning algorithm that maintains multiple data association hypotheses, represented as a belief mixture, where each component corresponds to a different data association hypothesis. However, this method can lead to an exponential growth in the number of hypotheses, resulting in significant computational overhead. To overcome this challenge, we introduce a pruning-based approach for planning with ambiguous data associations. Our key contribution is to derive bounds between the value function based on the complete set of hypotheses and the value function based on a pruned-subset of the hypotheses, enabling us to establish a trade-off between computational efficiency and performance. We demonstrate how these bounds can both be used to certify any pruning heuristic in retrospect and propose a novel approach to determine which hypotheses to prune in order to ensure a predefined limit on the loss. We evaluate our approach in simulated environments and demonstrate its efficacy in handling multi-modal belief hypotheses with ambiguous data associations.

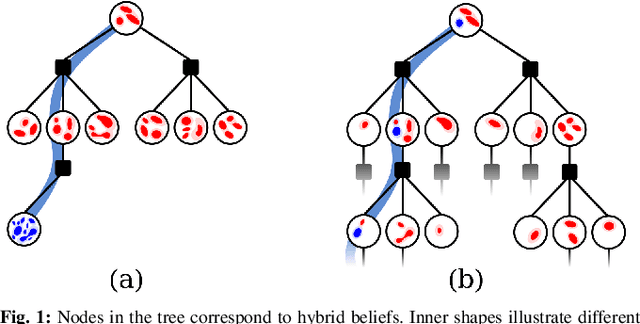

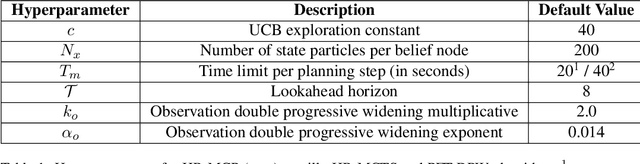

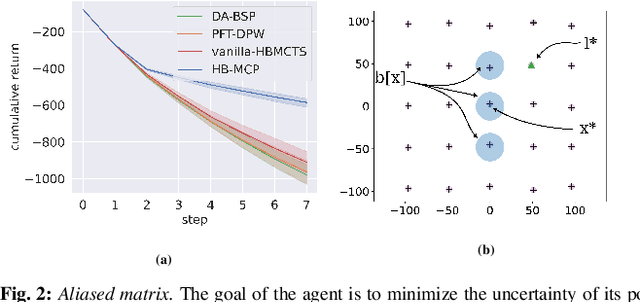

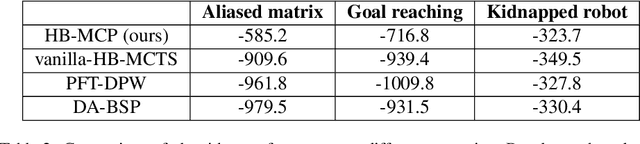

Monte Carlo Planning in Hybrid Belief POMDPs

Nov 14, 2022

Abstract:Real-world problems often require reasoning about hybrid beliefs, over both discrete and continuous random variables. Yet, such a setting has hardly been investigated in the context of planning. Moreover, existing online Partially Observable Markov Decision Processes (POMDPs) solvers do not support hybrid beliefs directly. In particular, these solvers do not address the added computational burden due to an increasing number of hypotheses with the planning horizon, which can grow exponentially. As part of this work, we present a novel algorithm, Hybrid Belief Monte Carlo Planning (HB-MCP) that utilizes the Monte Carlo Tree Search (MCTS) algorithm to solve a POMDP while maintaining a hybrid belief. We illustrate how the upper confidence bound (UCB) exploration bonus can be leveraged to guide the growth of hypotheses trees alongside the belief trees. We then evaluate our approach in highly aliased simulated environments where unresolved data association leads to multi-modal belief hypotheses.

Adaptive Information Belief Space Planning

Jan 28, 2022

Abstract:Reasoning about uncertainty is vital in many real-life autonomous systems. However, current state-of-the-art planning algorithms cannot either reason about uncertainty explicitly, or do so with a high computational burden. Here, we focus on making informed decisions efficiently, using reward functions that explicitly deal with uncertainty. We formulate an approximation, namely an abstract observation model, that uses an aggregation scheme to alleviate computational costs. We derive bounds on the expected information-theoretic reward function and, as a consequence, on the value function. We then propose a method to refine aggregation to achieve identical action selection with a fraction of the computational time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge