Miriam Jäger

FeatureGS: Eigenvalue-Feature Optimization in 3D Gaussian Splatting for Geometrically Accurate and Artifact-Reduced Reconstruction

Jan 29, 2025Abstract:3D Gaussian Splatting (3DGS) has emerged as a powerful approach for 3D scene reconstruction using 3D Gaussians. However, neither the centers nor surfaces of the Gaussians are accurately aligned to the object surface, complicating their direct use in point cloud and mesh reconstruction. Additionally, 3DGS typically produces floater artifacts, increasing the number of Gaussians and storage requirements. To address these issues, we present FeatureGS, which incorporates an additional geometric loss term based on an eigenvalue-derived 3D shape feature into the optimization process of 3DGS. The goal is to improve geometric accuracy and enhance properties of planar surfaces with reduced structural entropy in local 3D neighborhoods.We present four alternative formulations for the geometric loss term based on 'planarity' of Gaussians, as well as 'planarity', 'omnivariance', and 'eigenentropy' of Gaussian neighborhoods. We provide quantitative and qualitative evaluations on 15 scenes of the DTU benchmark dataset focusing on following key aspects: Geometric accuracy and artifact-reduction, measured by the Chamfer distance, and memory efficiency, evaluated by the total number of Gaussians. Additionally, rendering quality is monitored by Peak Signal-to-Noise Ratio. FeatureGS achieves a 30 % improvement in geometric accuracy, reduces the number of Gaussians by 90 %, and suppresses floater artifacts, while maintaining comparable photometric rendering quality. The geometric loss with 'planarity' from Gaussians provides the highest geometric accuracy, while 'omnivariance' in Gaussian neighborhoods reduces floater artifacts and number of Gaussians the most. This makes FeatureGS a strong method for geometrically accurate, artifact-reduced and memory-efficient 3D scene reconstruction, enabling the direct use of Gaussian centers for geometric representation.

HoloGS: Instant Depth-based 3D Gaussian Splatting with Microsoft HoloLens 2

May 03, 2024

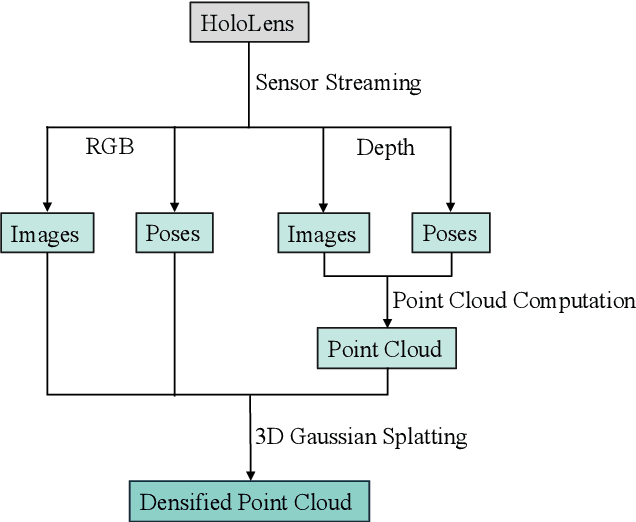

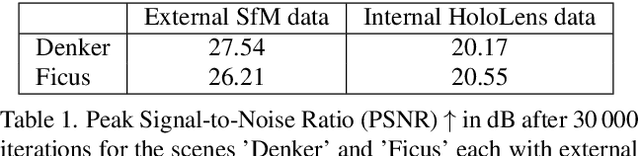

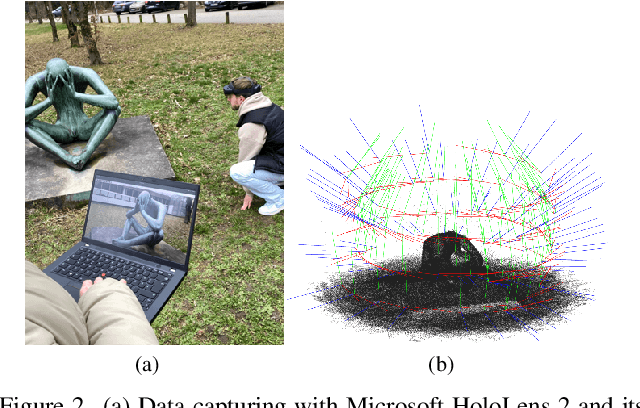

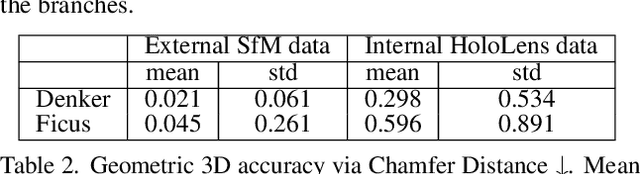

Abstract:In the fields of photogrammetry, computer vision and computer graphics, the task of neural 3D scene reconstruction has led to the exploration of various techniques. Among these, 3D Gaussian Splatting stands out for its explicit representation of scenes using 3D Gaussians, making it appealing for tasks like 3D point cloud extraction and surface reconstruction. Motivated by its potential, we address the domain of 3D scene reconstruction, aiming to leverage the capabilities of the Microsoft HoloLens 2 for instant 3D Gaussian Splatting. We present HoloGS, a novel workflow utilizing HoloLens sensor data, which bypasses the need for pre-processing steps like Structure from Motion by instantly accessing the required input data i.e. the images, camera poses and the point cloud from depth sensing. We provide comprehensive investigations, including the training process and the rendering quality, assessed through the Peak Signal-to-Noise Ratio, and the geometric 3D accuracy of the densified point cloud from Gaussian centers, measured by Chamfer Distance. We evaluate our approach on two self-captured scenes: An outdoor scene of a cultural heritage statue and an indoor scene of a fine-structured plant. Our results show that the HoloLens data, including RGB images, corresponding camera poses, and depth sensing based point clouds to initialize the Gaussians, are suitable as input for 3D Gaussian Splatting.

Density Uncertainty Quantification with NeRF-Ensembles: Impact of Data and Scene Constraints

Dec 22, 2023Abstract:In the fields of computer graphics, computer vision and photogrammetry, Neural Radiance Fields (NeRFs) are a major topic driving current research and development. However, the quality of NeRF-generated 3D scene reconstructions and subsequent surface reconstructions, heavily relies on the network output, particularly the density. Regarding this critical aspect, we propose to utilize NeRF-Ensembles that provide a density uncertainty estimate alongside the mean density. We demonstrate that data constraints such as low-quality images and poses lead to a degradation of the training process, increased density uncertainty and decreased predicted density. Even with high-quality input data, the density uncertainty varies based on scene constraints such as acquisition constellations, occlusions and material properties. NeRF-Ensembles not only provide a tool for quantifying the uncertainty but exhibit two promising advantages: Enhanced robustness and artifact removal. Through the utilization of NeRF-Ensembles instead of single NeRFs, small outliers are removed, yielding a smoother output with improved completeness of structures. Furthermore, applying percentile-based thresholds on density uncertainty outliers proves to be effective for the removal of large (foggy) artifacts in post-processing. We conduct our methodology on 3 different datasets: (i) synthetic benchmark dataset, (ii) real benchmark dataset, (iii) real data under realistic recording conditions and sensors.

3D Density-Gradient based Edge Detection on Neural Radiance Fields for Geometric Reconstruction

Sep 26, 2023

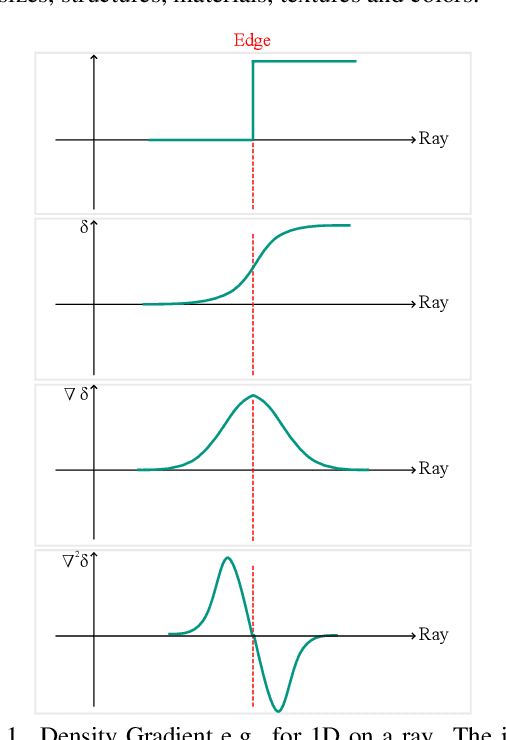

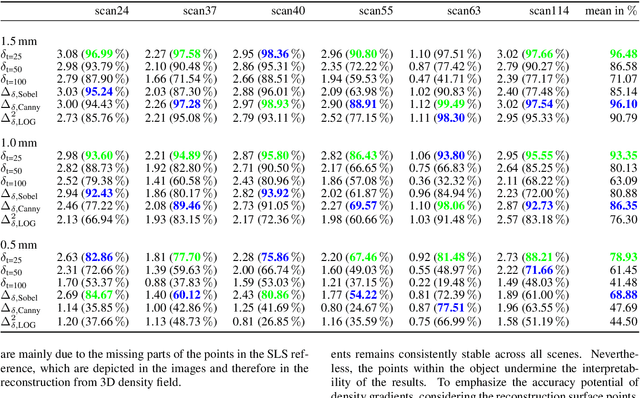

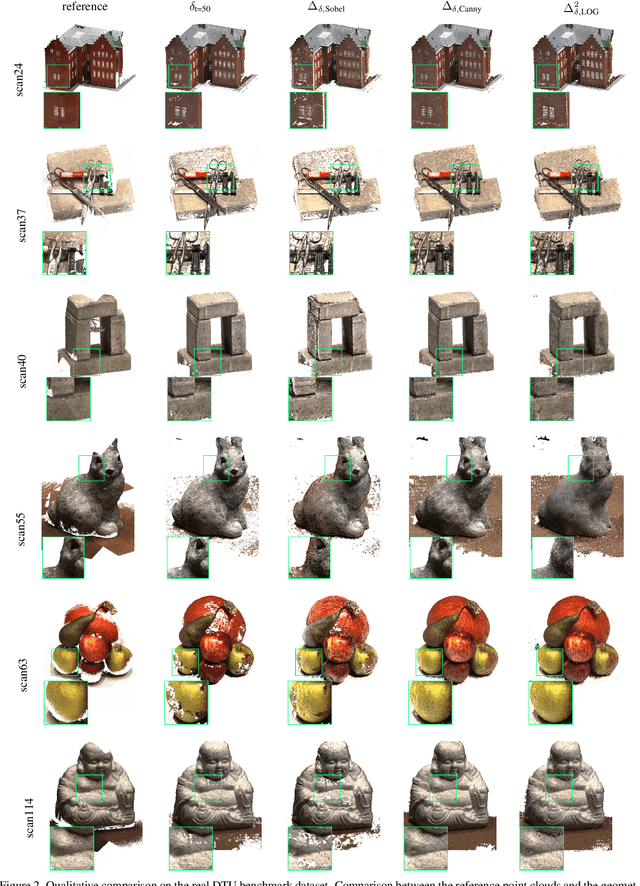

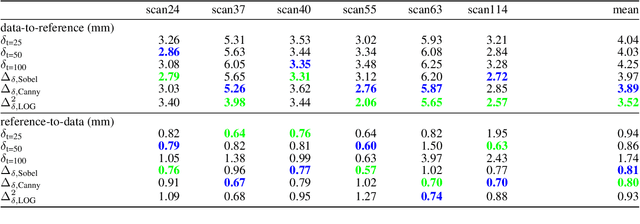

Abstract:Generating geometric 3D reconstructions from Neural Radiance Fields (NeRFs) is of great interest. However, accurate and complete reconstructions based on the density values are challenging. The network output depends on input data, NeRF network configuration and hyperparameter. As a result, the direct usage of density values, e.g. via filtering with global density thresholds, usually requires empirical investigations. Under the assumption that the density increases from non-object to object area, the utilization of density gradients from relative values is evident. As the density represents a position-dependent parameter it can be handled anisotropically, therefore processing of the voxelized 3D density field is justified. In this regard, we address geometric 3D reconstructions based on density gradients, whereas the gradients result from 3D edge detection filters of the first and second derivatives, namely Sobel, Canny and Laplacian of Gaussian. The gradients rely on relative neighboring density values in all directions, thus are independent from absolute magnitudes. Consequently, gradient filters are able to extract edges along a wide density range, almost independent from assumptions and empirical investigations. Our approach demonstrates the capability to achieve geometric 3D reconstructions with high geometric accuracy on object surfaces and remarkable object completeness. Notably, Canny filter effectively eliminates gaps, delivers a uniform point density, and strikes a favorable balance between correctness and completeness across the scenes.

A Comparative Neural Radiance Field (NeRF) 3D Analysis of Camera Poses from HoloLens Trajectories and Structure from Motion

Apr 20, 2023

Abstract:Neural Radiance Fields (NeRFs) are trained using a set of camera poses and associated images as input to estimate density and color values for each position. The position-dependent density learning is of particular interest for photogrammetry, enabling 3D reconstruction by querying and filtering the NeRF coordinate system based on the object density. While traditional methods like Structure from Motion are commonly used for camera pose calculation in pre-processing for NeRFs, the HoloLens offers an interesting interface for extracting the required input data directly. We present a workflow for high-resolution 3D reconstructions almost directly from HoloLens data using NeRFs. Thereby, different investigations are considered: Internal camera poses from the HoloLens trajectory via a server application, and external camera poses from Structure from Motion, both with an enhanced variant applied through pose refinement. Results show that the internal camera poses lead to NeRF convergence with a PSNR of 25\,dB with a simple rotation around the x-axis and enable a 3D reconstruction. Pose refinement enables comparable quality compared to external camera poses, resulting in improved training process with a PSNR of 27\,dB and a better 3D reconstruction. Overall, NeRF reconstructions outperform the conventional photogrammetric dense reconstruction using Multi-View Stereo in terms of completeness and level of detail.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge