Milan Horacek

Improving Generalization of Deep Networks for Inverse Reconstruction of Image Sequences

Mar 05, 2019

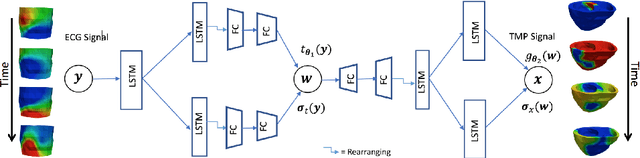

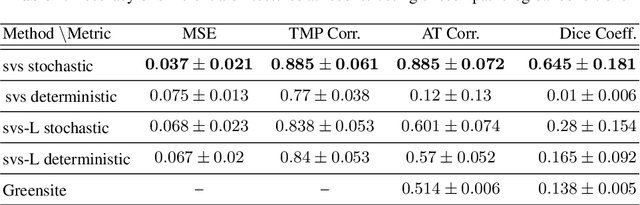

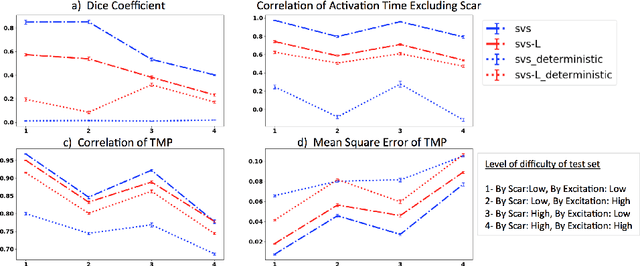

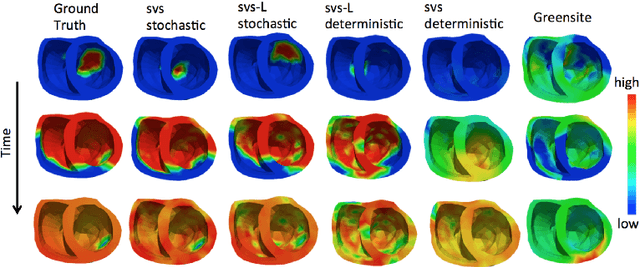

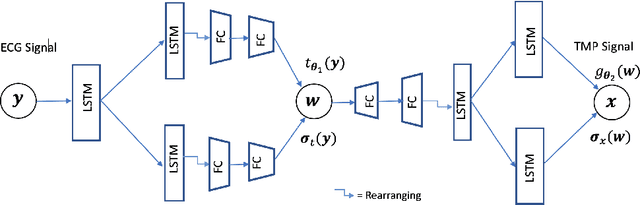

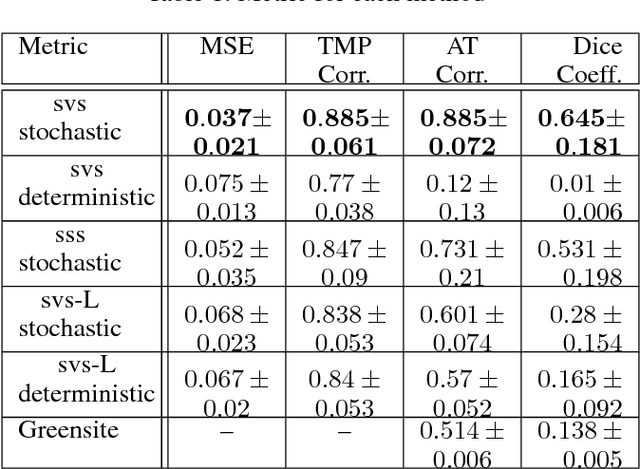

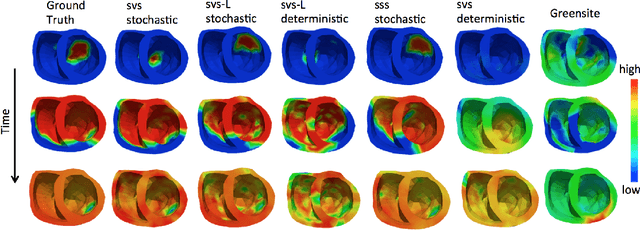

Abstract: Deep learning networks have shown state-of-the-art performance in many image reconstruction problems. However, it is not well understood what properties of representation and learning may improve the generalization ability of the network. In this paper, we propose that the generalization ability of an encoder-decoder network for inverse reconstruction can be improved in two ways. First, drawing from analytical learning theory, we theoretically show that a stochastic latent space will improve the ability of a network to generalize to test data outside the training distribution. Second, following the information bottleneck principle, we show that a latent representation minimally informative of the input data will help a network generalize to unseen input variations that are irrelevant to the output reconstruction. Therefore, we present an image-sequence reconstruction network with a stochastic latent space, optimized by a variational approximation of the information bottleneck principle. In the application setting of reconstructing the sequence of cardiac transmembrane potential from body-surface potential, we assess the two types of generalization abilities of the presented network against its deterministic counterpart. The results demonstrate that the generalization ability of an inverse reconstruction network can be improved by stochasticity as well as the information bottleneck.
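To make the two ingredients named in the abstract concrete, the following is a minimal NumPy sketch of a stochastic latent draw and a variational information-bottleneck style objective. It is not the paper's implementation: the Gaussian latent with a standard-normal prior, the squared-error reconstruction term, the weight `beta`, and all function names are assumptions chosen for illustration.

```python
import numpy as np


def sample_latent(mu, log_var, rng):
    """Reparameterized draw z = mu + sigma * eps, with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dims, averaged over the batch."""
    kl_per_sample = 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=-1)
    return float(np.mean(kl_per_sample))


def vib_loss(x_true, x_recon, mu, log_var, beta=1e-3):
    """Reconstruction error plus a beta-weighted compression penalty on the latent code.

    The squared-error term and the value of beta are illustrative assumptions.
    """
    recon = float(np.mean(np.sum((x_true - x_recon) ** 2, axis=-1)))
    return recon + beta * kl_to_standard_normal(mu, log_var)
```

In this sketch, a larger `beta` pushes the latent code toward the prior, so it carries less information about the input; setting `beta` to zero and replacing the sample with `mu` recovers a deterministic encoder-decoder objective.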

Improving Generalization of Sequence Encoder-Decoder Networks for Inverse Imaging of Cardiac Transmembrane Potential

Oct 12, 2018

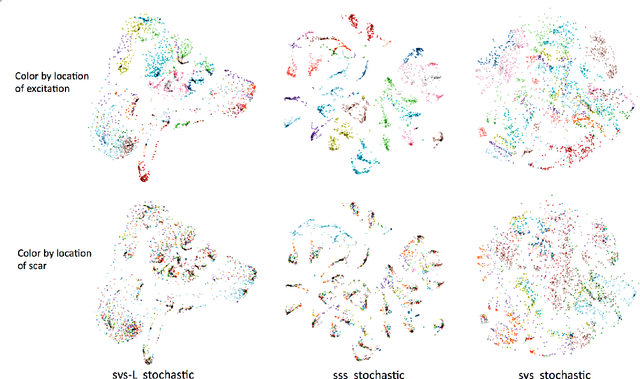

Abstract: Deep learning models have shown state-of-the-art performance in many inverse reconstruction problems. However, it is not well understood what properties of the latent representation may improve the generalization ability of the network. Furthermore, few models have been presented for inverse reconstructions over time sequences. In this paper, we study the generalization ability of a sequence encoder-decoder model for solving inverse reconstructions on time sequences. Our central hypothesis is that the generalization ability of the network can be improved by 1) constrained stochasticity and 2) global aggregation of temporal information in the latent space. First, drawing from analytical learning theory, we theoretically show that a stochastic latent space will lead to an improved generalization ability. Second, we consider an LSTM encoder-decoder architecture that compresses a global latent vector from all last-layer units in the LSTM encoder. This model is compared with alternative LSTM encoder-decoder architectures, each in deterministic and stochastic versions. The results demonstrate that the generalization ability of an inverse reconstruction network can be improved by constrained stochasticity combined with global aggregation of temporal information in the latent space.
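The contrast between a global latent vector and a latent vector taken from a single time step can be sketched as follows. This is one plausible reading of "global aggregation", not the paper's architecture: the single linear map over the flattened hidden states, the array shapes, and the function names are all hypothetical.

```python
import numpy as np


def last_step_latent(hidden_states, weights):
    """Latent code computed from the final time step only.

    hidden_states: (T, d) last-layer encoder outputs; weights: (latent_dim, d).
    """
    return weights @ hidden_states[-1]


def global_latent(hidden_states, weights):
    """Latent code computed from every time step's last-layer units.

    hidden_states: (T, d); weights: (latent_dim, T * d), acting on the flattened sequence.
    """
    return weights @ hidden_states.reshape(-1)
```

Under these assumptions, a change in the hidden state at an early time step reaches the global latent vector directly through the linear map, whereas the last-step variant sees it only through whatever the recurrent encoder has carried forward.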
