Miguel A. González-Santamarta

Lessons Learned in Quadruped Deployment in Livestock Farming

Apr 24, 2024

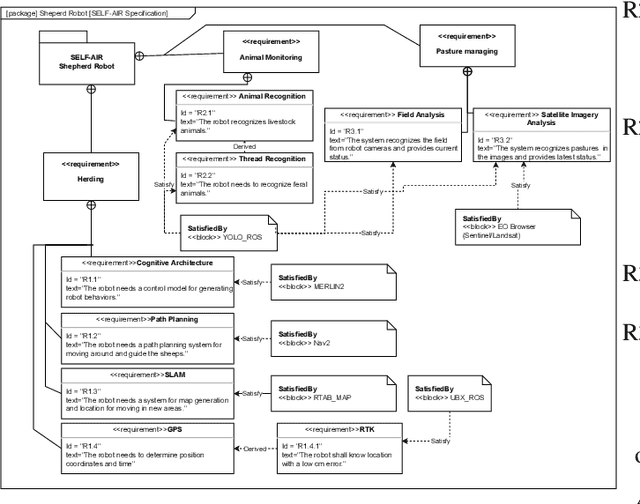

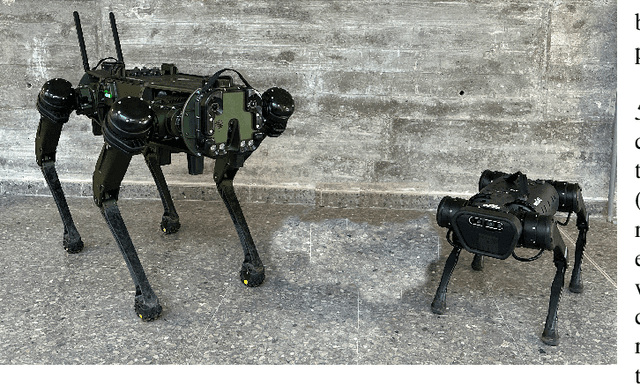

Abstract: The livestock industry faces several challenges, including labor-intensive management, the threat of predators, and environmental sustainability concerns. This paper therefore explores the integration of quadruped robots into extensive livestock farming as a novel application of field robotics. The SELF-AIR project (Supporting Extensive Livestock Farming with the use of Autonomous Intelligent Robots) exemplifies this approach. Equipped with advanced sensors, artificial intelligence, and autonomous navigation systems, these robots can navigate diverse terrains, monitor large herds, and assist with various farming tasks. This work provides insight into the SELF-AIR project and presents the lessons learned.

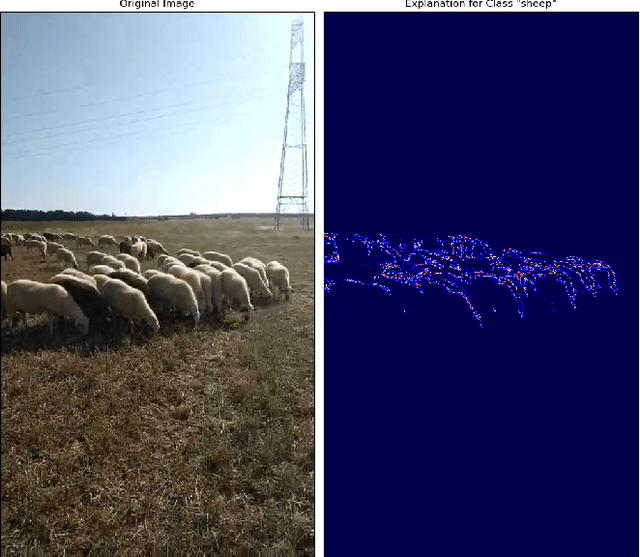

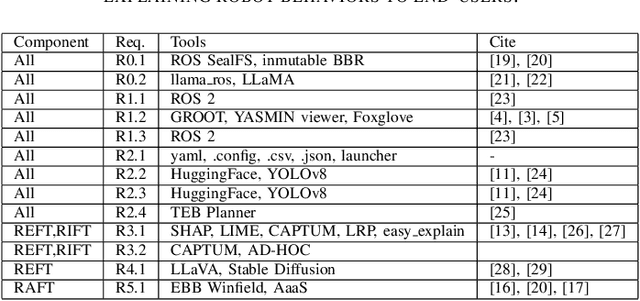

ROXIE: Defining a Robotic eXplanation and Interpretability Engine

Mar 25, 2024

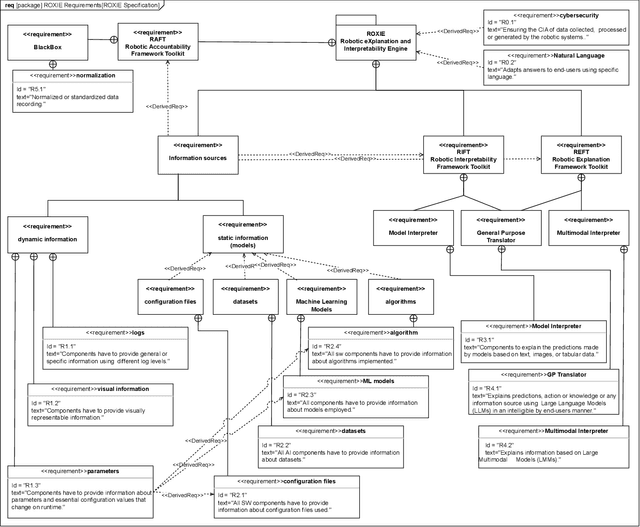

Abstract: As autonomous robots increasingly inhabit public spaces, transparency and interpretability in their decision-making processes become paramount. This paper presents an overview of the Robotic eXplanation and Interpretability Engine (ROXIE), which addresses this need by aiming to demystify the opaque nature of complex robotic behaviors. The paper describes the key features and requirements for providing information and explanations about robot decision-making processes. It also outlines the suite of software components and libraries available for deployment with ROS 2, which enable users to provide comprehensive explanations and interpretations of robot processes and behaviors, thereby fostering trust and collaboration in human-robot interaction.

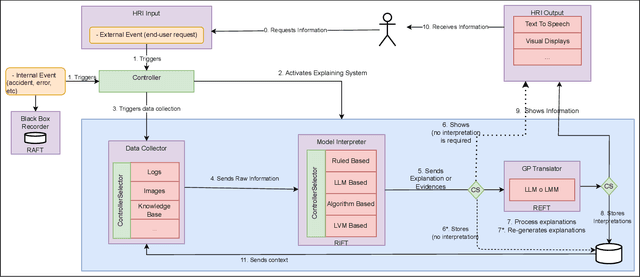

Explaining Autonomy: Enhancing Human-Robot Interaction through Explanation Generation with Large Language Models

Feb 06, 2024

Abstract: This paper introduces a system designed to generate explanations for the actions performed by an autonomous robot in Human-Robot Interaction (HRI). Explainability in robotics, encapsulated in the concept of an eXplainable Autonomous Robot (XAR), is a growing research area. The work described in this paper aims to take advantage of the capabilities of Large Language Models (LLMs) in natural language processing tasks. The study focuses on generating explanations with such models in combination with a Retrieval Augmented Generation (RAG) method that interprets data gathered from the logs of autonomous systems. In addition, this work presents a formalization of the proposed explanation system. The system has been evaluated through a navigation test from the European Robotics League (ERL), a Europe-wide social robotics competition. To assess the results, a validation questionnaire was conducted to measure the quality of the explanations from the perspective of technical users. The results obtained during the experiment highlight the potential utility of LLMs in achieving explanatory capabilities in robots.
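The retrieval step of a RAG pipeline over robot logs can be illustrated with a short sketch. This is a minimal example that assumes plain-text log lines. It uses simple bag-of-words cosine similarity in place of the embedding model a real system would likely use. The functions `retrieve` and `build_prompt` and the prompt wording are hypothetical and do not reproduce the paper's implementation. The call to the LLM itself is omitted.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase the text and split it into alphanumeric tokens.
    return re.findall(r"[a-z0-9_]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two bag-of-words count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, log_lines: list[str], k: int = 3) -> list[str]:
    # Hypothetical retriever: rank log lines by similarity to the question,
    # keep the top k, and drop lines that share no tokens with it.
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(line))), i, line) for i, line in enumerate(log_lines)]
    scored.sort(key=lambda s: (-s[0], s[1]))  # best score first, ties by log order
    return [line for score, _, line in scored[:k] if score > 0]


def build_prompt(question: str, log_lines: list[str], k: int = 3) -> str:
    # Hypothetical prompt template: the retrieved log entries are the context
    # that would augment the question sent to an LLM.
    context = "\n".join(retrieve(question, log_lines, k))
    return (
        "You explain the behavior of an autonomous robot using its logs.\n"
        f"Relevant log entries:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

For example, given the log lines `"[WARN] [controller]: Obstacle detected, replanning path"` and `"[INFO] [battery]: Level 80%"`, the question "Why did the robot replan its path?" retrieves only the warning line, because it is the only line that shares a token ("path") with the question. Because the retriever narrows the context before generation, the LLM only has to interpret the relevant log entries rather than the full log.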

Using Large Language Models for Interpreting Autonomous Robots Behaviors

Apr 28, 2023

Abstract: The deployment of autonomous robots in various domains has raised significant concerns about their trustworthiness and accountability. This study explores the potential of Large Language Models (LLMs) for analyzing ROS 2 logs generated by autonomous robots and proposes a log-analysis framework that categorizes log files into different aspects. The study evaluates the performance of three language models in answering questions about StartUp, Warning, and PDDL logs. The results suggest that GPT-4, a transformer-based model, outperforms the other models; however, the verbosity of the models' answers is not sufficient to address why or how questions for all kinds of actors involved in the interaction.
