Michael Gormish

Enhancing Worldwide Image Geolocation by Ensembling Satellite-Based Ground-Level Attribute Predictors

Jul 18, 2024

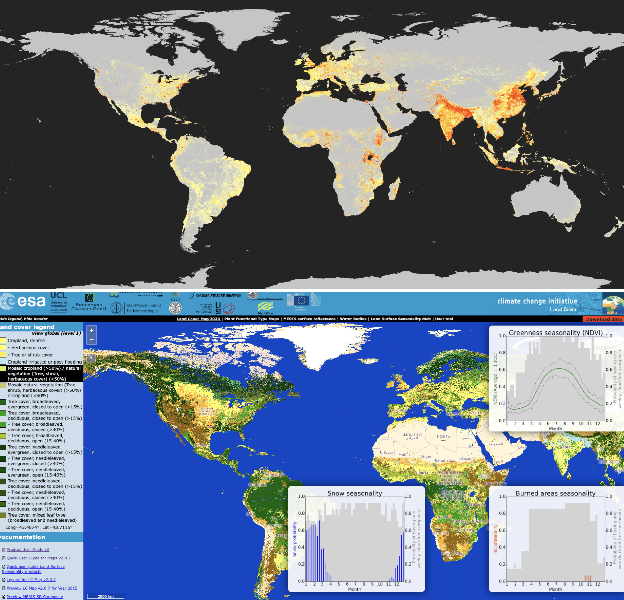

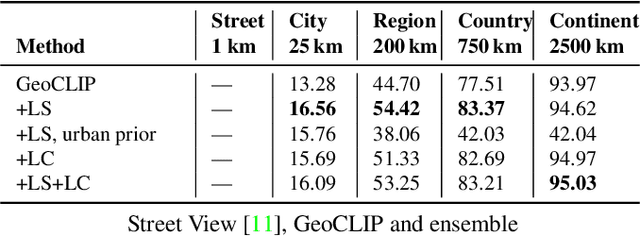

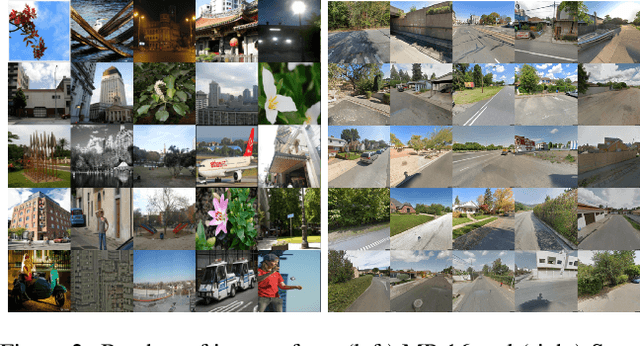

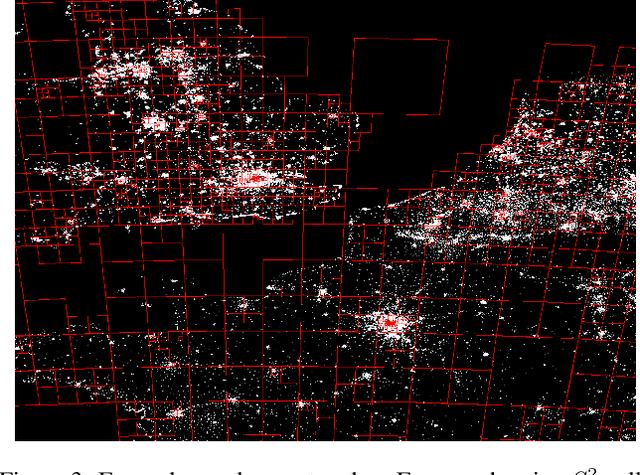

Abstract: Geolocating images of a ground-level scene entails estimating the location on Earth where the picture was taken, in the absence of GPS or other location metadata. Typically, methods are evaluated by measuring the Great Circle Distance (GCD) between a predicted location and ground truth. However, this measurement is limited because it only evaluates a single point, not estimates of regions or score heatmaps. This is especially important in applications to rural, wilderness and under-sampled areas, where finding the exact location may not be possible, and when used in aggregate systems that progressively narrow down locations. In this paper, we introduce a novel metric, Recall vs Area (RvA), which measures the accuracy of estimated distributions of locations. RvA treats image geolocation results similarly to document retrieval, measuring recall as a function of area: for a ranked list of (possibly non-contiguous) predicted regions, we measure the accumulated area required for the regions to contain the ground truth coordinate. This produces a curve similar to a precision-recall curve, where "precision" is replaced by area in square kilometers, allowing evaluation of performance for different downstream search area budgets. Following directly from this view of the problem, we then examine a simple ensembling approach to global-scale image geolocation, which incorporates information from multiple sources to help address domain shift, and can readily incorporate multiple models, attribute predictors, and data sources. We study its effectiveness by combining the geolocation models GeoEstimation and the current SOTA GeoCLIP with attribute predictors based on ORNL LandScan and ESA-CCI Land Cover. We find significant improvements in image geolocation for areas that are under-represented in the training set, particularly non-urban areas, on both Im2GPS3k and Street View images.
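The Recall vs Area idea described in the abstract can be illustrated with a minimal Python sketch. This is not the authors' implementation: it assumes each query's prediction has already been discretized into a ranked list of `(region_id, area_km2)` pairs, and the function names `area_to_recall` and `recall_vs_area` are hypothetical, chosen only for this illustration.

```python
from typing import Hashable, List, Optional, Sequence, Tuple

# Assumption: a prediction is a ranked list of (region_id, area_km2) pairs,
# best-scoring region first. The paper's actual region representation may differ.
RankedRegions = Sequence[Tuple[Hashable, float]]


def area_to_recall(ranked: RankedRegions, truth_region: Hashable) -> Optional[float]:
    """Accumulate area down the ranking until the ground-truth region is included.

    Returns the accumulated area in km^2, or None if the ground-truth
    region never appears in the ranked list.
    """
    total = 0.0
    for region_id, area_km2 in ranked:
        total += area_km2
        if region_id == truth_region:
            return total
    return None


def recall_vs_area(areas_needed: Sequence[Optional[float]],
                   budgets_km2: Sequence[float]) -> List[float]:
    """For each area budget, return the fraction of queries whose ground truth
    was reached within that budget (queries with None count as misses)."""
    n = len(areas_needed)
    return [
        sum(1 for a in areas_needed if a is not None and a <= budget) / n
        for budget in budgets_km2
    ]


# Three toy queries with made-up regions and areas.
queries = [
    ([("a", 100.0), ("b", 50.0), ("c", 200.0)], "b"),  # truth reached after 150 km^2
    ([("c", 200.0), ("a", 100.0)], "c"),               # truth reached after 200 km^2
    ([("a", 100.0), ("b", 50.0)], "z"),                # truth never reached
]
needed = [area_to_recall(ranked, truth) for ranked, truth in queries]
curve = recall_vs_area(needed, budgets_km2=[100.0, 150.0, 1000.0])
```

Plotting `curve` against the budgets gives recall as a function of search area, which is the kind of curve the abstract compares to a precision-recall curve.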

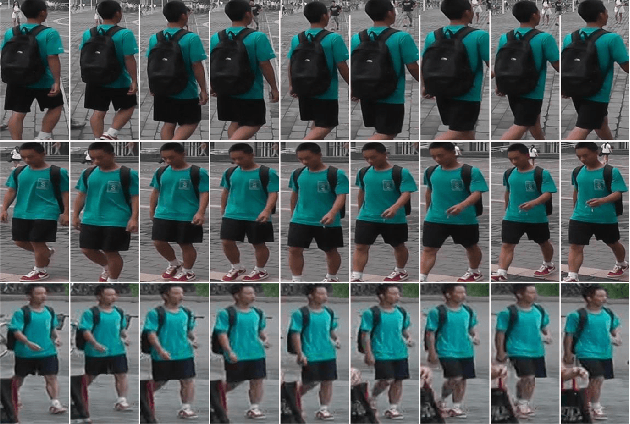

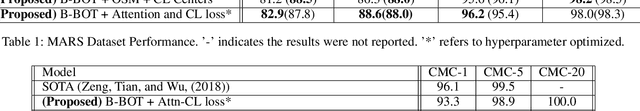

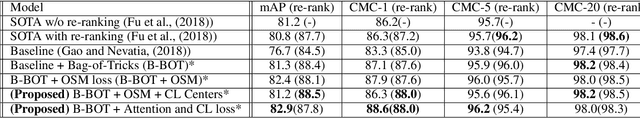

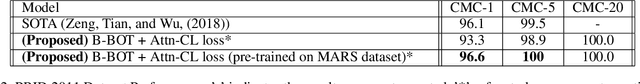

Video Person Re-ID: Fantastic Techniques and Where to Find Them

Nov 21, 2019

Abstract: The ability to identify the same person from multiple camera views without the explicit use of facial recognition is receiving commercial and academic interest. Current status-quo solutions are based on neural attention models. In this paper, we propose Attention and CL loss, a hybrid of center loss and Online Soft Mining (OSM) loss added to the attention loss on top of a temporal attention-based neural network. The proposed loss function, applied with a bag of tricks for training, surpasses the state of the art on the common person Re-ID datasets MARS and PRID 2011. Our source code is publicly available on GitHub.

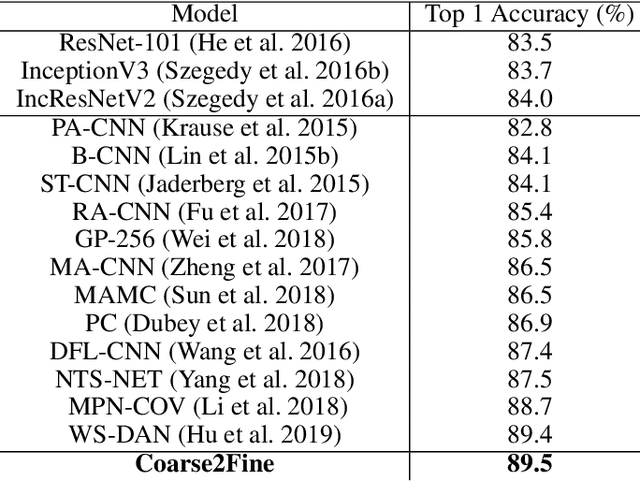

Coarse2Fine: A Two-stage Training Method for Fine-grained Visual Classification

Sep 06, 2019

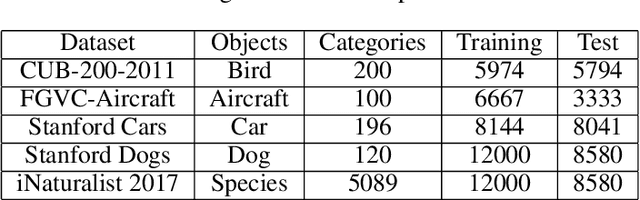

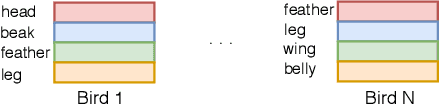

Abstract: Small inter-class and large intra-class variations are the main challenges in fine-grained visual classification. Objects from different classes share visually similar structures, and objects in the same class can have different poses and viewpoints. Therefore, the proper extraction of discriminative local features (e.g. a bird's beak or a car's headlight) is crucial. Most of the recent successes on this problem are based on attention models that can localize and attend to the locally discriminative object parts. In this work, we propose a training method for visual attention networks, Coarse2Fine, which creates a differentiable path from the input space to the attended feature maps. Coarse2Fine learns an inverse mapping function from the attended feature maps to the informative regions in the raw image, which guides the attention maps to better attend to the fine-grained features. We show that Coarse2Fine, combined with orthogonal initialization of the attention weights, can surpass the state-of-the-art accuracies on common fine-grained classification tasks.