Michael Buro

Subgoal-Guided Policy Heuristic Search with Learned Subgoals

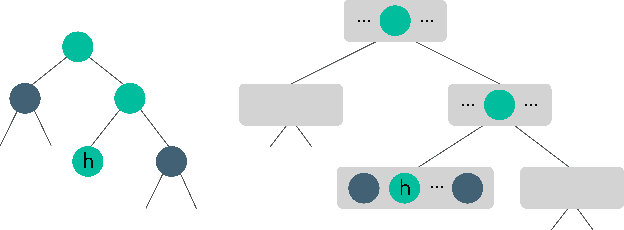

Jun 08, 2025Abstract:Policy tree search is a family of tree search algorithms that use a policy to guide the search. These algorithms provide guarantees on the number of expansions required to solve a given problem that are based on the quality of the policy. While these algorithms have shown promising results, the process in which they are trained requires complete solution trajectories to train the policy. Search trajectories are obtained during a trial-and-error search process. When the training problem instances are hard, learning can be prohibitively costly, especially when starting from a randomly initialized policy. As a result, search samples are wasted in failed attempts to solve these hard instances. This paper introduces a novel method for learning subgoal-based policies for policy tree search algorithms. The subgoals and policies conditioned on subgoals are learned from the trees that the search expands while attempting to solve problems, including the search trees of failed attempts. We empirically show that our policy formulation and training method improve the sample efficiency of learning a policy and heuristic function in this online setting.

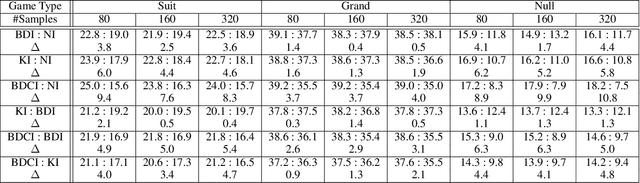

Transformer Based Planning in the Observation Space with Applications to Trick Taking Card Games

Apr 19, 2024Abstract:Traditional search algorithms have issues when applied to games of imperfect information where the number of possible underlying states and trajectories are very large. This challenge is particularly evident in trick-taking card games. While state sampling techniques such as Perfect Information Monte Carlo (PIMC) search has shown success in these contexts, they still have major limitations. We present Generative Observation Monte Carlo Tree Search (GO-MCTS), which utilizes MCTS on observation sequences generated by a game specific model. This method performs the search within the observation space and advances the search using a model that depends solely on the agent's observations. Additionally, we demonstrate that transformers are well-suited as the generative model in this context, and we demonstrate a process for iteratively training the transformer via population-based self-play. The efficacy of GO-MCTS is demonstrated in various games of imperfect information, such as Hearts, Skat, and "The Crew: The Quest for Planet Nine," with promising results.

History Filtering in Imperfect Information Games: Algorithms and Complexity

Nov 24, 2023

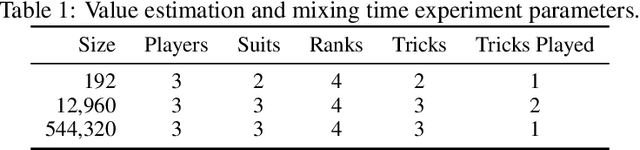

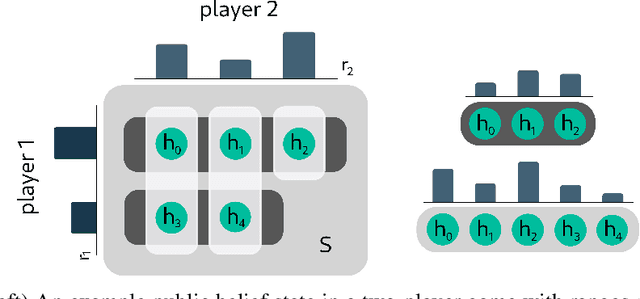

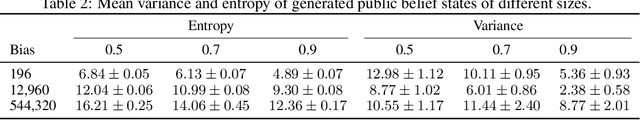

Abstract:Historically applied exclusively to perfect information games, depth-limited search with value functions has been key to recent advances in AI for imperfect information games. Most prominent approaches with strong theoretical guarantees require subgame decomposition - a process in which a subgame is computed from public information and player beliefs. However, subgame decomposition can itself require non-trivial computations, and its tractability depends on the existence of efficient algorithms for either full enumeration or generation of the histories that form the root of the subgame. Despite this, no formal analysis of the tractability of such computations has been established in prior work, and application domains have often consisted of games, such as poker, for which enumeration is trivial on modern hardware. Applying these ideas to more complex domains requires understanding their cost. In this work, we introduce and analyze the computational aspects and tractability of filtering histories for subgame decomposition. We show that constructing a single history from the root of the subgame is generally intractable, and then provide a necessary and sufficient condition for efficient enumeration. We also introduce a novel Markov Chain Monte Carlo-based generation algorithm for trick-taking card games - a domain where enumeration is often prohibitively expensive. Our experiments demonstrate its improved scalability in the trick-taking card game Oh Hell. These contributions clarify when and how depth-limited search via subgame decomposition can be an effective tool for sequential decision-making in imperfect information settings.

Inference-Based Deterministic Messaging For Multi-Agent Communication

Mar 03, 2021

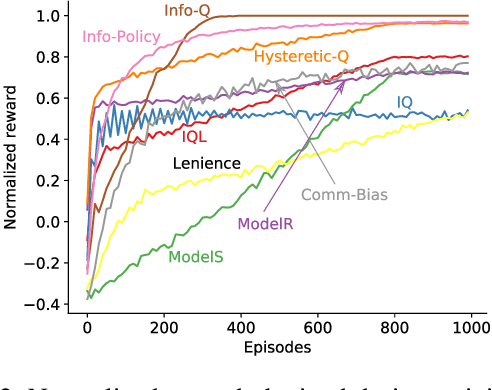

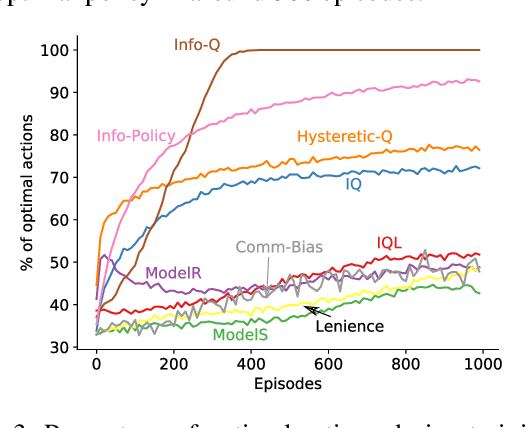

Abstract:Communication is essential for coordination among humans and animals. Therefore, with the introduction of intelligent agents into the world, agent-to-agent and agent-to-human communication becomes necessary. In this paper, we first study learning in matrix-based signaling games to empirically show that decentralized methods can converge to a suboptimal policy. We then propose a modification to the messaging policy, in which the sender deterministically chooses the best message that helps the receiver to infer the sender's observation. Using this modification, we see, empirically, that the agents converge to the optimal policy in nearly all the runs. We then apply this method to a partially observable gridworld environment which requires cooperation between two agents and show that, with appropriate approximation methods, the proposed sender modification can enhance existing decentralized training methods for more complex domains as well.

Bayes DistNet -- A Robust Neural Network for Algorithm Runtime Distribution Predictions

Dec 25, 2020

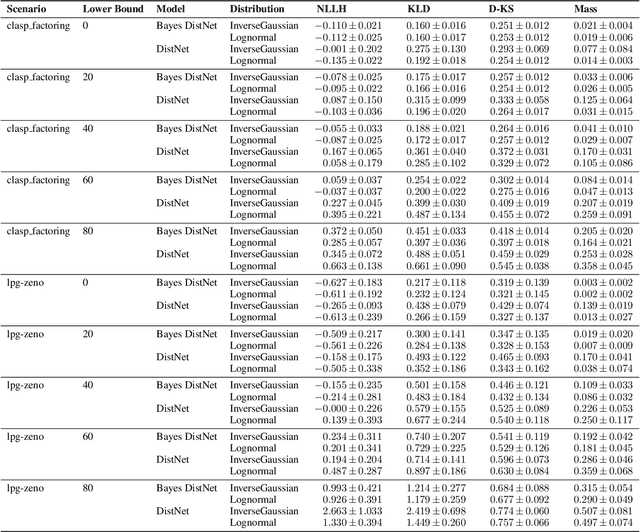

Abstract:Randomized algorithms are used in many state-of-the-art solvers for constraint satisfaction problems (CSP) and Boolean satisfiability (SAT) problems. For many of these problems, there is no single solver which will dominate others. Having access to the underlying runtime distributions (RTD) of these solvers can allow for better use of algorithm selection, algorithm portfolios, and restart strategies. Previous state-of-the-art methods directly try to predict a fixed parametric distribution that the input instance follows. In this paper, we extend RTD prediction models into the Bayesian setting for the first time. This new model achieves robust predictive performance in the low observation setting, as well as handling censored observations. This technique also allows for richer representations which cannot be achieved by the classical models which restrict their output representations. Our model outperforms the previous state-of-the-art model in settings in which data is scarce, and can make use of censored data such as lower bound time estimates, where that type of data would otherwise be discarded. It can also quantify its uncertainty in its predictions, allowing for algorithm portfolio models to make better informed decisions about which algorithm to run on a particular instance.

Single-Agent Optimization Through Policy Iteration Using Monte-Carlo Tree Search

May 22, 2020

Abstract:The combination of Monte-Carlo Tree Search (MCTS) and deep reinforcement learning is state-of-the-art in two-player perfect-information games. In this paper, we describe a search algorithm that uses a variant of MCTS which we enhanced by 1) a novel action value normalization mechanism for games with potentially unbounded rewards (which is the case in many optimization problems), 2) defining a virtual loss function that enables effective search parallelization, and 3) a policy network, trained by generations of self-play, to guide the search. We gauge the effectiveness of our method in "SameGame"---a popular single-player test domain. Our experimental results indicate that our method outperforms baseline algorithms on several board sizes. Additionally, it is competitive with state-of-the-art search algorithms on a public set of positions.

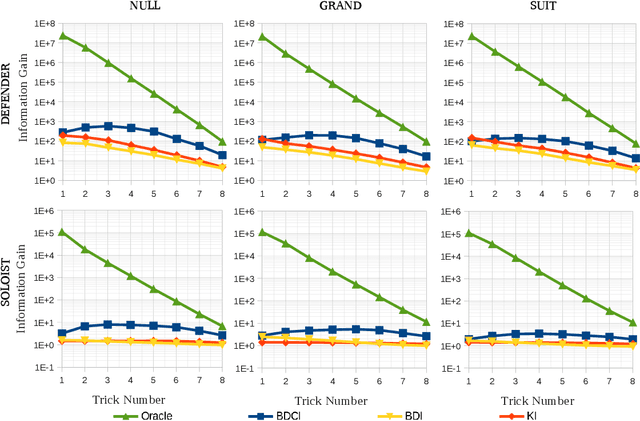

Policy Based Inference in Trick-Taking Card Games

May 27, 2019

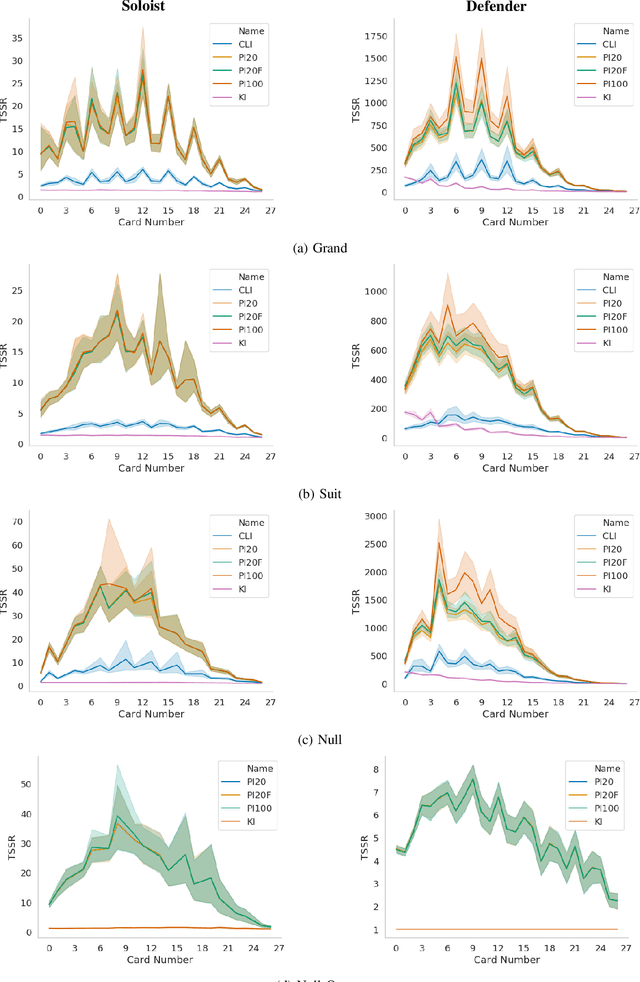

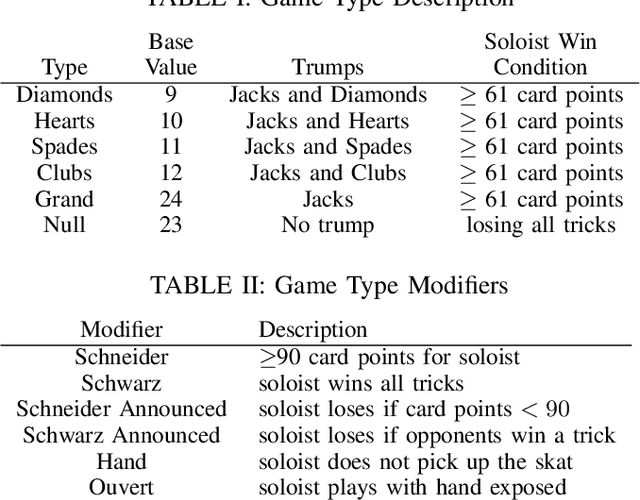

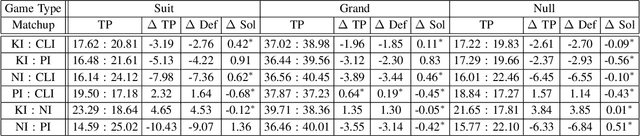

Abstract:Trick-taking card games feature a large amount of private information that slowly gets revealed through a long sequence of actions. This makes the number of histories exponentially large in the action sequence length, as well as creating extremely large information sets. As a result, these games become too large to solve. To deal with these issues many algorithms employ inference, the estimation of the probability of states within an information set. In this paper, we demonstrate a Policy Based Inference (PI) algorithm that uses player modelling to infer the probability we are in a given state. We perform experiments in the German trick-taking card game Skat, in which we show that this method vastly improves the inference as compared to previous work, and increases the performance of the state-of-the-art Skat AI system Kermit when it is employed into its determinized search algorithm.

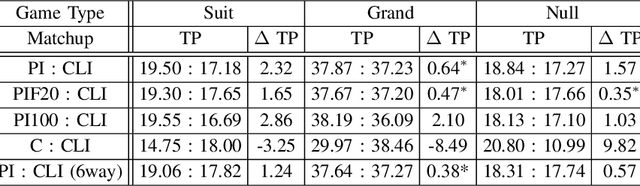

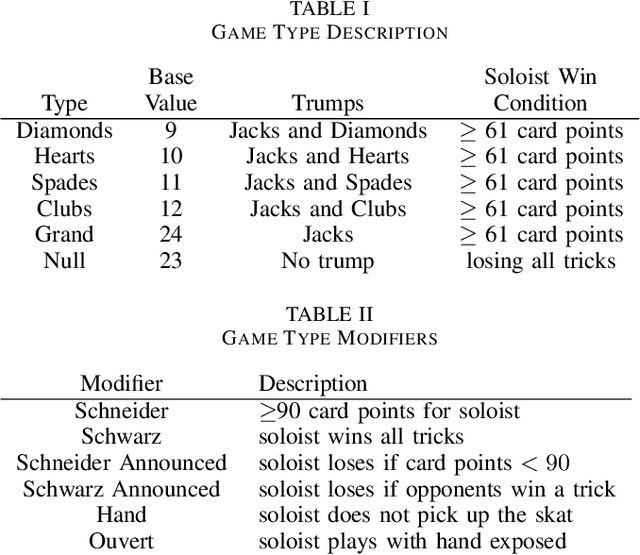

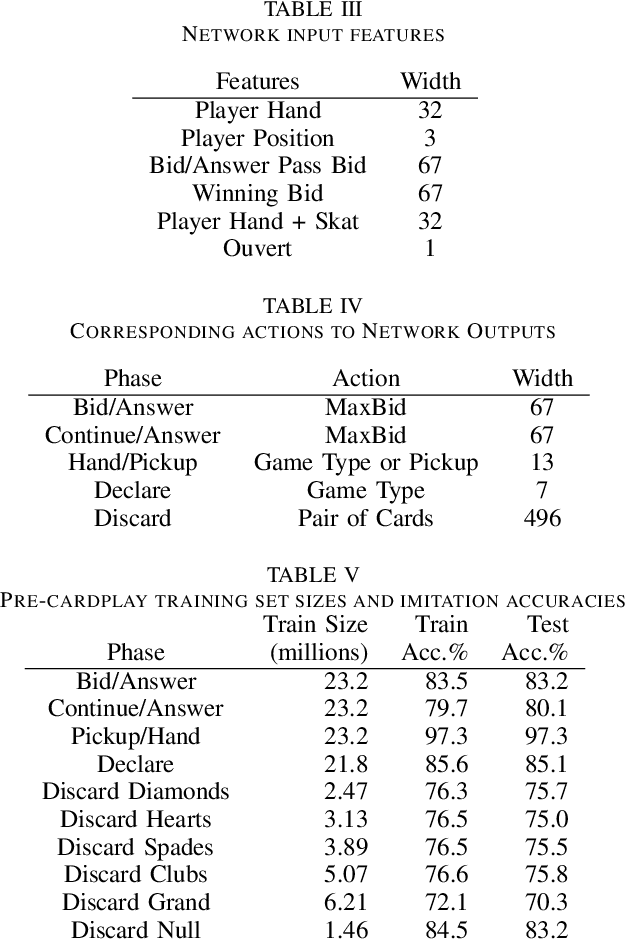

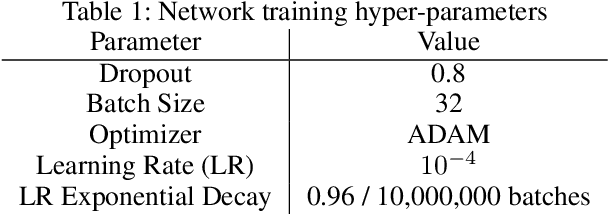

Learning Policies from Human Data for Skat

May 27, 2019

Abstract:Decision-making in large imperfect information games is difficult. Thanks to recent success in Poker, Counterfactual Regret Minimization (CFR) methods have been at the forefront of research in these games. However, most of the success in large games comes with the use of a forward model and powerful state abstractions. In trick-taking card games like Bridge or Skat, large information sets and an inability to advance the simulation without fully determinizing the state make forward search problematic. Furthermore, state abstractions can be especially difficult to construct because the precise holdings of each player directly impact move values. In this paper we explore learning model-free policies for Skat from human game data using deep neural networks (DNN). We produce a new state-of-the-art system for bidding and game declaration by introducing methods to a) directly vary the aggressiveness of the bidder and b) declare games based on expected value while mitigating issues with rarely observed state-action pairs. Although cardplay policies learned through imitation are slightly weaker than the current best search-based method, they run orders of magnitude faster. We also explore how these policies could be learned directly from experience in a reinforcement learning setting and discuss the value of incorporating human data for this task.

Improving Search with Supervised Learning in Trick-Based Card Games

Mar 22, 2019

Abstract:In trick-taking card games, a two-step process of state sampling and evaluation is widely used to approximate move values. While the evaluation component is vital, the accuracy of move value estimates is also fundamentally linked to how well the sampling distribution corresponds the true distribution. Despite this, recent work in trick-taking card game AI has mainly focused on improving evaluation algorithms with limited work on improving sampling. In this paper, we focus on the effect of sampling on the strength of a player and propose a novel method of sampling more realistic states given move history. In particular, we use predictions about locations of individual cards made by a deep neural network --- trained on data from human gameplay - in order to sample likely worlds for evaluation. This technique, used in conjunction with Perfect Information Monte Carlo (PIMC) search, provides a substantial increase in cardplay strength in the popular trick-taking card game of Skat.

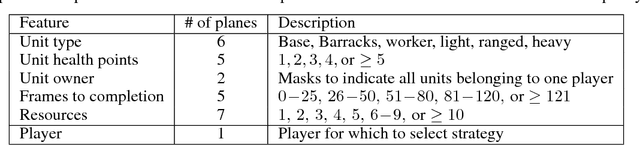

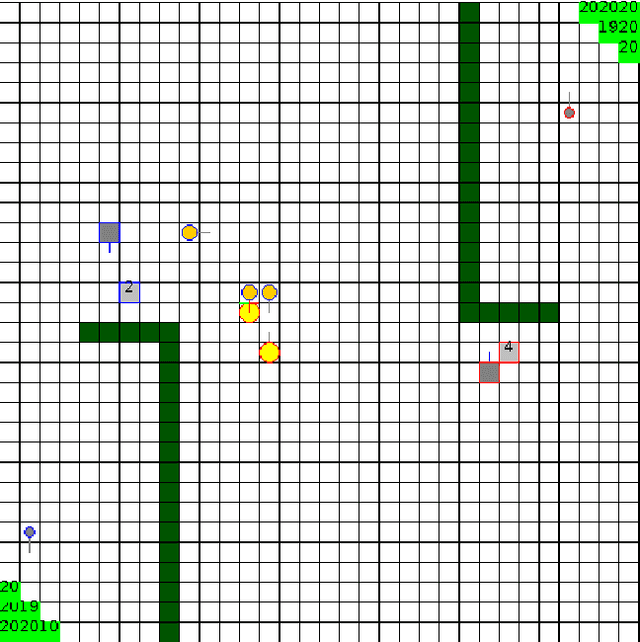

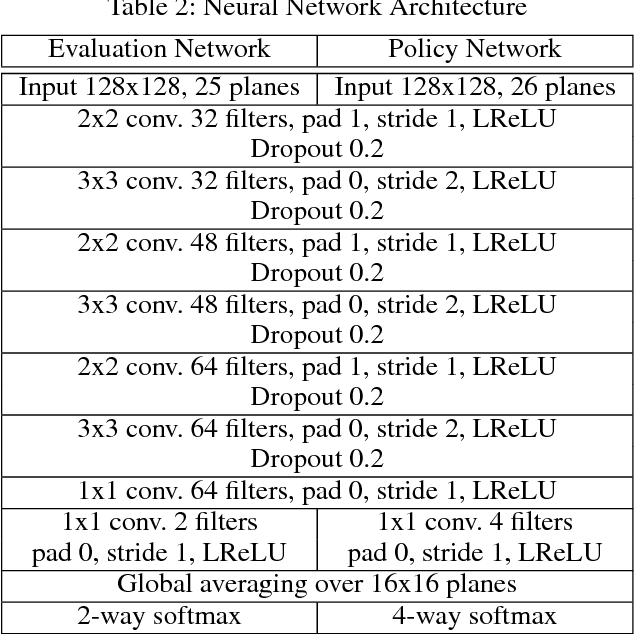

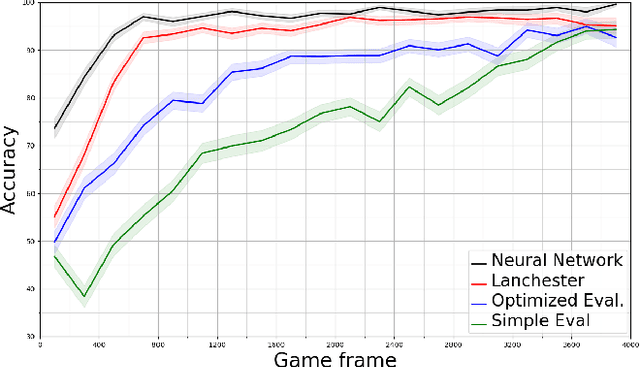

Combining Strategic Learning and Tactical Search in Real-Time Strategy Games

Sep 11, 2017

Abstract:A commonly used technique for managing AI complexity in real-time strategy (RTS) games is to use action and/or state abstractions. High-level abstractions can often lead to good strategic decision making, but tactical decision quality may suffer due to lost details. A competing method is to sample the search space which often leads to good tactical performance in simple scenarios, but poor high-level planning. We propose to use a deep convolutional neural network (CNN) to select among a limited set of abstract action choices, and to utilize the remaining computation time for game tree search to improve low level tactics. The CNN is trained by supervised learning on game states labelled by Puppet Search, a strategic search algorithm that uses action abstractions. The network is then used to select a script --- an abstract action --- to produce low level actions for all units. Subsequently, the game tree search algorithm improves the tactical actions of a subset of units using a limited view of the game state only considering units close to opponent units. Experiments in the microRTS game show that the combined algorithm results in higher win-rates than either of its two independent components and other state-of-the-art microRTS agents. To the best of our knowledge, this is the first successful application of a convolutional network to play a full RTS game on standard game maps, as previous work has focused on sub-problems, such as combat, or on very small maps.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge