Mengyang Chen

Are Large Language Models Good Fact Checkers: A Preliminary Study

Nov 29, 2023

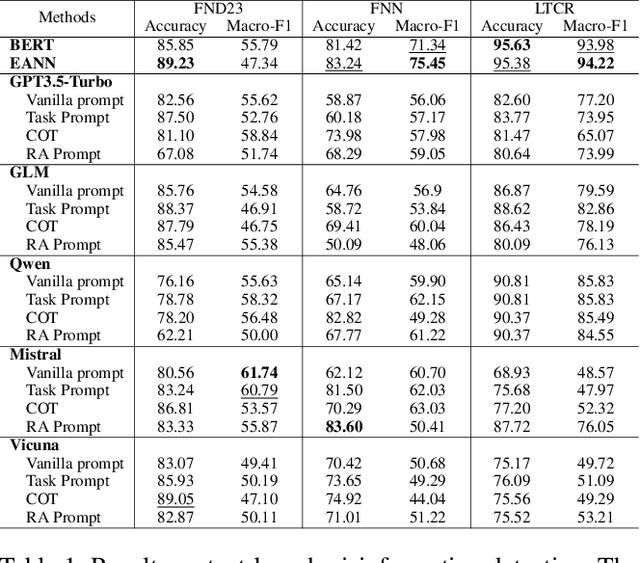

Abstract:Recently, Large Language Models (LLMs) have drawn significant attention due to their outstanding reasoning capabilities and extensive knowledge repository, positioning them as superior in handling various natural language processing tasks compared to other language models. In this paper, we present a preliminary investigation into the potential of LLMs in fact-checking. This study aims to comprehensively evaluate various LLMs in tackling specific fact-checking subtasks, systematically evaluating their capabilities, and conducting a comparative analysis of their performance against pre-trained and state-of-the-art low-parameter models. Experiments demonstrate that LLMs achieve competitive performance compared to other small models in most scenarios. However, they encounter challenges in effectively handling Chinese fact verification and the entirety of the fact-checking pipeline due to language inconsistencies and hallucinations. These findings underscore the need for further exploration and research to enhance the proficiency of LLMs as reliable fact-checkers, unveiling the potential capability of LLMs and the possible challenges in fact-checking tasks.

Can Large Language Models Understand Content and Propagation for Misinformation Detection: An Empirical Study

Nov 21, 2023

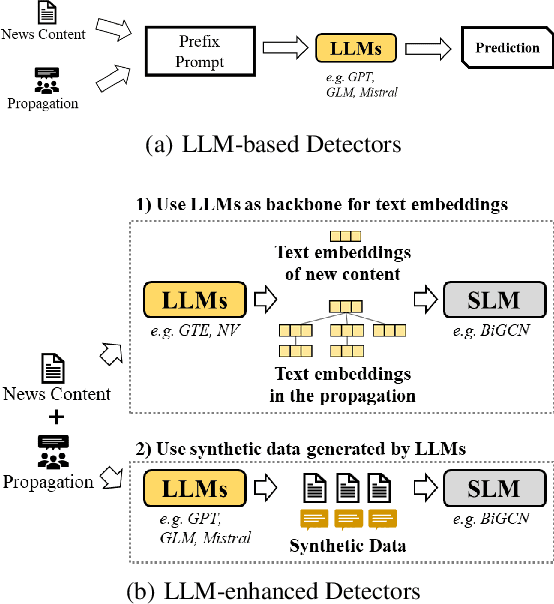

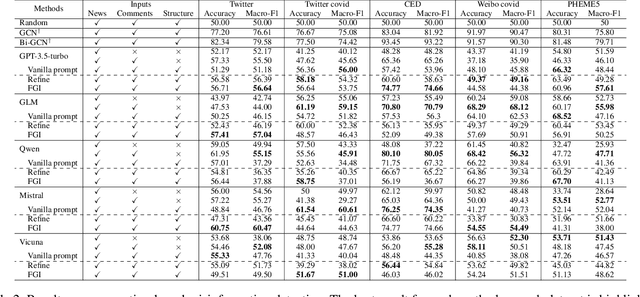

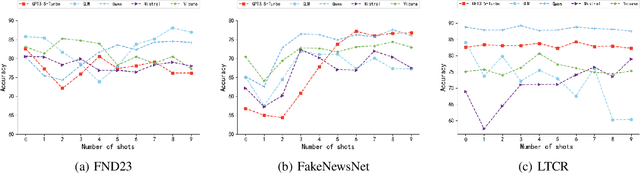

Abstract:Large Language Models (LLMs) have garnered significant attention for their powerful ability in natural language understanding and reasoning. In this paper, we present a comprehensive empirical study to explore the performance of LLMs on misinformation detection tasks. This study stands as the pioneering investigation into the understanding capabilities of multiple LLMs regarding both content and propagation across social media platforms. Our empirical studies on five misinformation detection datasets show that LLMs with diverse prompts achieve comparable performance in text-based misinformation detection but exhibit notably constrained capabilities in comprehending propagation structure compared to existing models in propagation-based misinformation detection. Besides, we further design four instruction-tuned strategies to enhance LLMs for both content and propagation-based misinformation detection. These strategies boost LLMs to actively learn effective features from multiple instances or hard instances, and eliminate irrelevant propagation structures, thereby achieving better detection performance. Extensive experiments further demonstrate LLMs would play a better capacity in content and propagation structure under these proposed strategies and achieve promising detection performance. These findings highlight the potential ability of LLMs to detect misinformation.

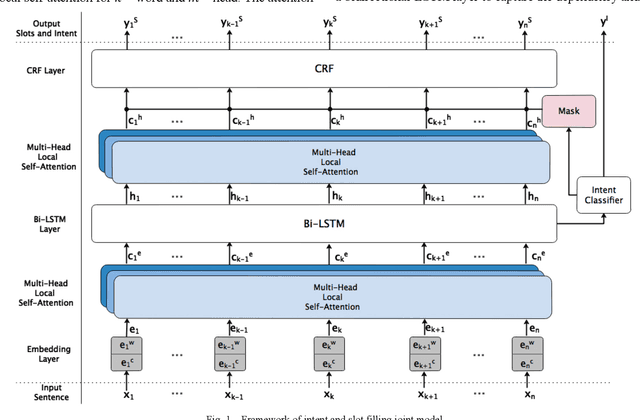

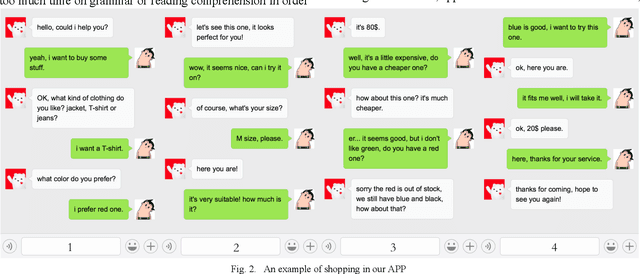

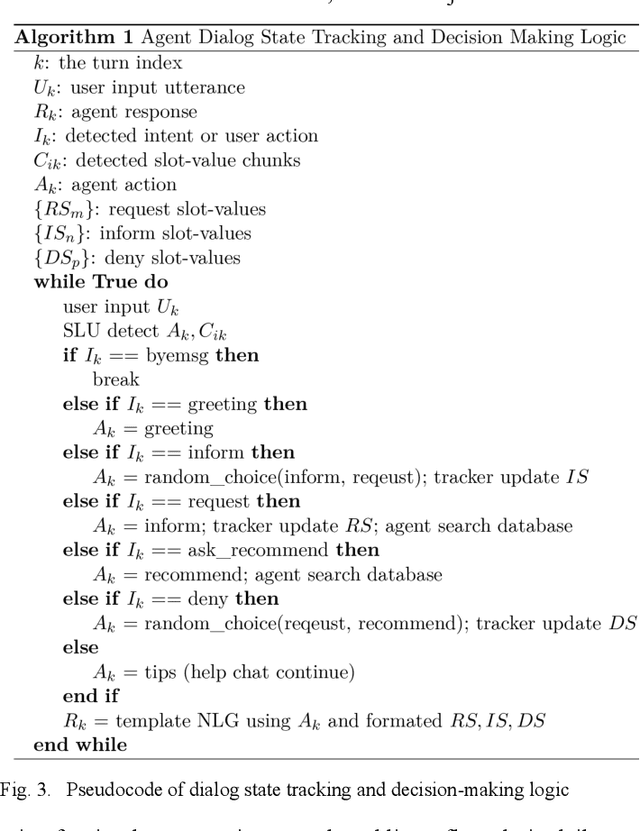

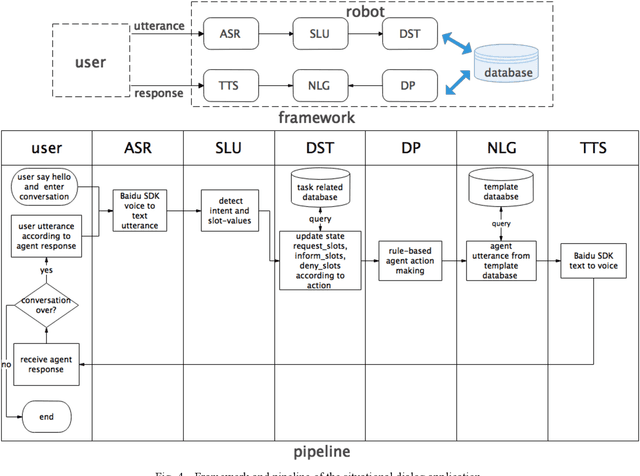

A Self-Attention Joint Model for Spoken Language Understanding in Situational Dialog Applications

May 27, 2019

Abstract:Spoken language understanding (SLU) acts as a critical component in goal-oriented dialog systems. It typically involves identifying the speakers intent and extracting semantic slots from user utterances, which are known as intent detection (ID) and slot filling (SF). SLU problem has been intensively investigated in recent years. However, these methods just constrain SF results grammatically, solve ID and SF independently, or do not fully utilize the mutual impact of the two tasks. This paper proposes a multi-head self-attention joint model with a conditional random field (CRF) layer and a prior mask. The experiments show the effectiveness of our model, as compared with state-of-the-art models. Meanwhile, online education in China has made great progress in the last few years. But there are few intelligent educational dialog applications for students to learn foreign languages. Hence, we design an intelligent dialog robot equipped with different scenario settings to help students learn communication skills.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge