Meng-Fan Chang

Clo-HDnn: A 4.66 TFLOPS/W and 3.78 TOPS/W Continual On-Device Learning Accelerator with Energy-efficient Hyperdimensional Computing via Progressive Search

Jul 23, 2025

Abstract: Clo-HDnn is an on-device learning (ODL) accelerator designed for emerging continual learning (CL) tasks. It integrates hyperdimensional computing (HDC) with a low-cost Kronecker HD Encoder and weight clustering feature extraction (WCFE) to optimize accuracy and efficiency. Clo-HDnn adopts gradient-free CL to efficiently update and store learned knowledge in the form of class hypervectors. Its dual-mode operation allows costly feature extraction to be bypassed for simpler datasets, while progressive search reduces complexity by up to 61% by encoding and comparing only partial query hypervectors. Achieving 4.66 TFLOPS/W (FE) and 3.78 TOPS/W (classifier), Clo-HDnn delivers 7.77x and 4.85x higher energy efficiency than state-of-the-art (SOTA) ODL accelerators.
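As a rough illustration of how the pieces named in this abstract could fit together, here is a minimal pure-Python sketch. Everything in it is an assumption rather than Clo-HDnn's actual design: the encoder uses a random bipolar projection factored as a Kronecker product A ⊗ B (so only two small matrices are stored), class hypervectors are integer accumulators updated by addition (a common gradient-free HDC training rule), and progressive search stops once the leading class beats the runner-up by a hypothetical `threshold`. All class and function names are invented for illustration.

```python
import random


def bipolar(v):
    # Map a projection value to {-1, +1}; zero is sent to +1 by convention.
    return 1 if v >= 0 else -1


class KroneckerEncoder:
    """Hypothetical HD encoder whose projection matrix is A kron B (never built).

    A is d1 x n1 and B is d2 x n2, so a feature vector of length n1*n2 maps to a
    hypervector of dimension D = d1*d2 while storing only d1*n1 + d2*n2 weights.
    """

    def __init__(self, n1, n2, d1, d2, seed=0):
        rng = random.Random(seed)
        self.n1, self.n2, self.d1, self.d2 = n1, n2, d1, d2
        self.A = [[rng.choice((-1, 1)) for _ in range(n1)] for _ in range(d1)]
        self.B = [[rng.choice((-1, 1)) for _ in range(n2)] for _ in range(d2)]

    def prepare(self, x):
        # Reshape x row-major into an n1 x n2 matrix X and return Z = X @ B^T.
        X = [x[i * self.n2:(i + 1) * self.n2] for i in range(self.n1)]
        return [[sum(X[i][j] * self.B[q][j] for j in range(self.n2))
                 for q in range(self.d2)] for i in range(self.n1)]

    def chunk(self, z, p):
        # Row p of A @ Z gives hypervector dimensions p*d2 .. (p+1)*d2 - 1.
        return [bipolar(sum(self.A[p][i] * z[i][q] for i in range(self.n1)))
                for q in range(self.d2)]

    def encode(self, x):
        # Full encoding: concatenate every chunk.
        z = self.prepare(x)
        return [v for p in range(self.d1) for v in self.chunk(z, p)]


class ProgressiveHDClassifier:
    def __init__(self, encoder):
        self.enc = encoder
        self.class_hvs = {}  # label -> integer accumulator (class hypervector)

    def learn(self, x, label):
        # Gradient-free update: bundle (add) the sample hypervector into its
        # class hypervector. A previously unseen label simply adds a new class.
        hv = self.enc.encode(x)
        acc = self.class_hvs.setdefault(label, [0] * len(hv))
        for k, v in enumerate(hv):
            acc[k] += v

    def predict(self, x, threshold=float("inf")):
        # Progressive search: encode and compare one chunk of the query at a
        # time, stopping once the best class leads the runner-up by `threshold`.
        z = self.enc.prepare(x)
        d2 = self.enc.d2
        scores = {label: 0 for label in self.class_hvs}
        used = 0
        for p in range(self.enc.d1):
            q = self.enc.chunk(z, p)
            used += d2
            for label, acc in self.class_hvs.items():
                seg = acc[p * d2:(p + 1) * d2]
                scores[label] += sum(a * b for a, b in zip(seg, q))
            ranked = sorted(scores.values(), reverse=True)
            if len(ranked) > 1 and ranked[0] - ranked[1] >= threshold:
                break
        best = max(scores, key=scores.get)
        return best, used
```

The row-major identity (A ⊗ B)vec(X) = vec(A X Bᵀ) is what lets each chunk of the query be encoded on demand, so stopping early skips both encoding and comparison work. For instance, with an encoder built as `KroneckerEncoder(4, 4, 8, 8)`, taking `x = [2 ** k for k in range(16)]` (no ±1 combination of its entries can be zero), learning `x` as class `"a"` and its negation as class `"b"`, then calling `predict(x, threshold=10)` returns `("a", 8)`: the search stops after 8 of the 64 dimensions.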

MARS: Multi-macro Architecture SRAM CIM-Based Accelerator with Co-designed Compressed Neural Networks

Oct 24, 2020

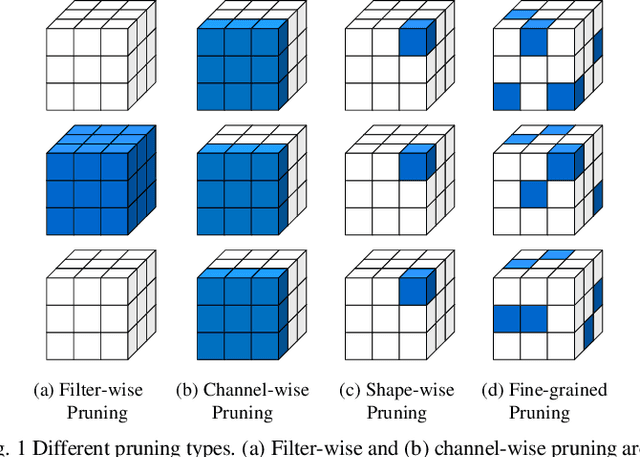

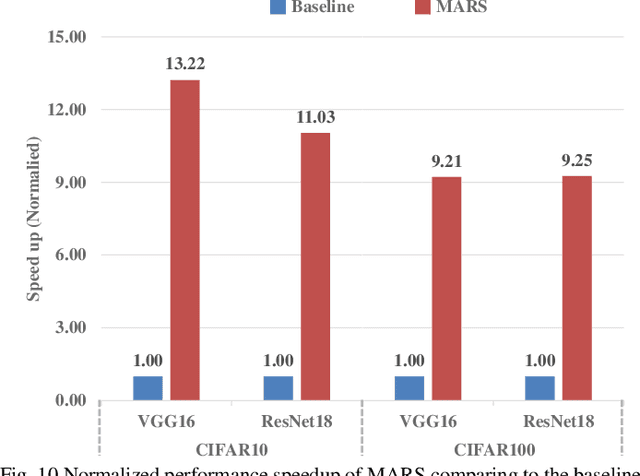

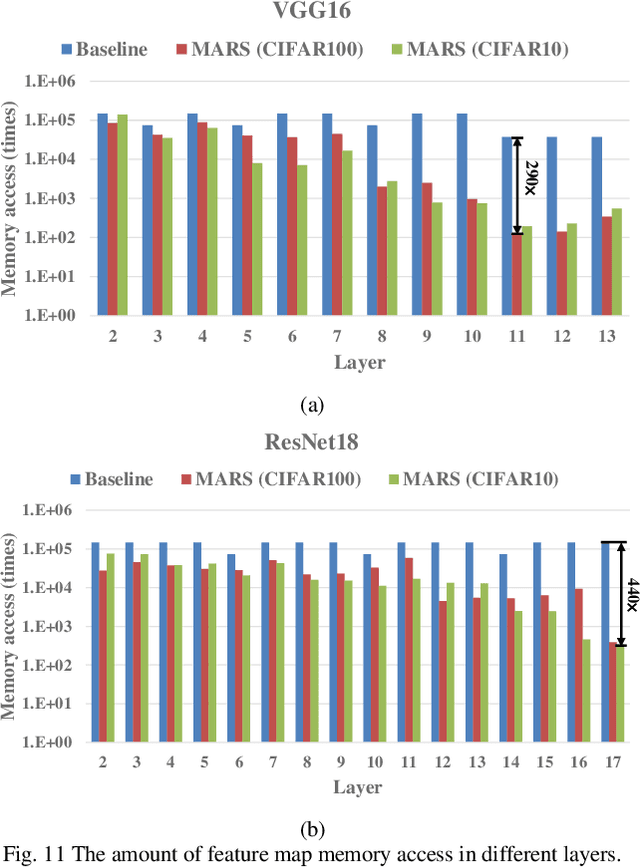

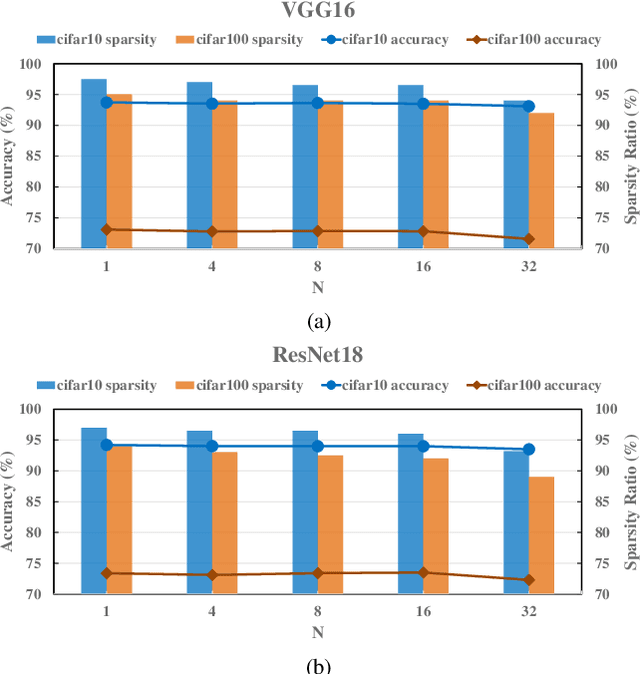

Abstract: Convolutional neural networks (CNNs) play a key role in deep learning applications. However, the large storage overhead and substantial computation cost of CNNs are problematic for hardware accelerators. Computing-in-memory (CIM) architectures have shown great potential for efficiently computing large-scale matrix-vector multiplications. However, the intensive multiply-and-accumulate (MAC) operations executed on the crossbar array and the limited capacity of CIM macros remain bottlenecks to further improving energy efficiency and throughput. To reduce computation costs, network pruning and quantization are two widely studied compression methods for shrinking model size. However, most model compression algorithms can only be implemented in digital CNN accelerators. For implementation in a static random access memory (SRAM) CIM-based accelerator, the model compression algorithm must consider the hardware limitations of CIM macros, such as the number of word lines and bit lines that can be turned on at the same time, as well as how the weights are mapped onto the SRAM CIM macro. In this study, a software and hardware co-design approach is proposed to design an SRAM CIM-based CNN accelerator and an SRAM CIM-aware model compression algorithm. To reduce the high-precision MAC operations required by batch normalization (BN), a quantization algorithm that fuses BN into the weights is proposed. Furthermore, to reduce the number of network parameters, a sparsity algorithm that accounts for the CIM architecture is proposed. Finally, MARS, a CIM-based CNN accelerator that can use multiple SRAM CIM macros as processing units and supports sparse neural networks, is proposed.
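Folding BN into the preceding layer is a standard transformation: for a per-channel BN with scale γ, shift β, running mean μ and variance σ², the folded weights are W' = γW/√(σ² + ε) and the folded bias is b' = γ(b − μ)/√(σ² + ε) + β, so inference needs no separate high-precision BN step. The minimal sketch below shows that folding followed by a generic per-channel symmetric uniform quantizer. The quantizer, the 4-bit default, and the function names are assumptions for illustration, not the quantization algorithm used in MARS.

```python
import math


def fold_bn(weights, bias, gamma, beta, mean, var, eps=1e-5):
    """Fold per-output-channel batch normalization into weights and bias.

    weights: one row of input weights per output channel; the other arguments
    hold one value per output channel.
    """
    folded_w, folded_b = [], []
    for row, b, g, bt, m, v in zip(weights, bias, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)
        folded_w.append([w * scale for w in row])
        folded_b.append((b - m) * scale + bt)
    return folded_w, folded_b


def quantize_symmetric(row, bits=4):
    """Hypothetical per-channel symmetric quantizer for already-folded weights.

    Returns signed integer codes in [-qmax, qmax] and the step size, so that
    code * step approximates the original weight.
    """
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in row) or 1.0  # avoid a zero step size
    step = max_abs / qmax
    codes = [max(-qmax, min(qmax, round(w / step))) for w in row]
    return codes, step
```

Because the folded layer computes exactly the same function as the original layer followed by BN, quantizing after folding means the integer weights already carry the BN scale.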

Conditional Activation for Diverse Neurons in Heterogeneous Networks

Mar 13, 2018

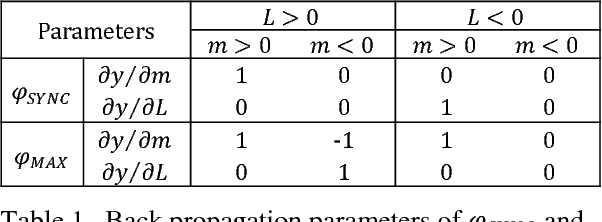

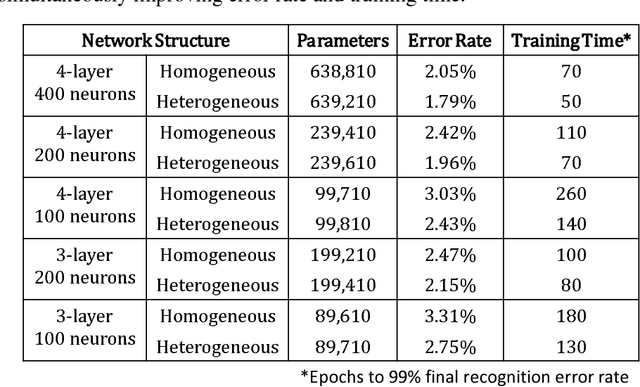

Abstract: In this paper, we propose a new scheme for modelling the diverse behavior of neurons. We introduce conditional activation, in which a neuron's activation function is dynamically modified by a control signal. We apply this method to recreate the behavior of special neurons found in the human auditory and visual systems. A heterogeneous multilayer perceptron (MLP) incorporating the developed models shows simultaneous improvements in learning speed and performance across various numbers of hidden units and layers, compared with a homogeneous network composed of conventional neurons. For similar performance, the proposed model significantly reduces the memory needed to store network parameters.
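The abstract does not give the functional form of the conditional activation. Purely as an illustration, one simple way a control signal `c` could modify a neuron's activation is to rescale the slope and shift the threshold of a logistic function. The parameterization and the parameter names (`base_gain`, `base_threshold`, `k_gain`, `k_shift`) below are hypothetical, not the model proposed in the paper.

```python
import math


def conditional_sigmoid(x, c, base_gain=1.0, base_threshold=0.0,
                        k_gain=1.0, k_shift=1.0):
    """Hypothetical conditional activation: the control signal c changes the
    gain (slope) and the threshold of a logistic activation."""
    gain = base_gain * (1.0 + k_gain * c)
    threshold = base_threshold - k_shift * c
    z = gain * (x - threshold)
    # Numerically stable logistic function for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)
```

With `c = 0` this reduces to an ordinary sigmoid; a positive `c` makes the neuron both more sensitive (steeper) and easier to activate (lower threshold), which is one way a single control input could switch a neuron between behaviors.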