Meng Pan

Compare learning: bi-attention network for few-shot learning

Mar 25, 2022

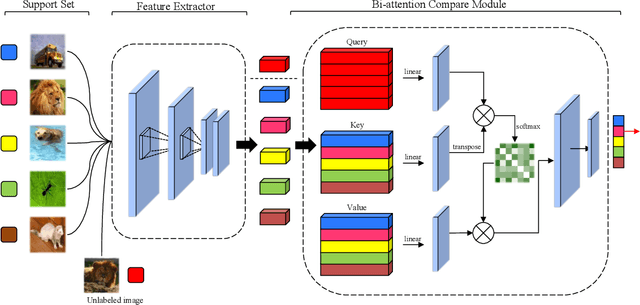

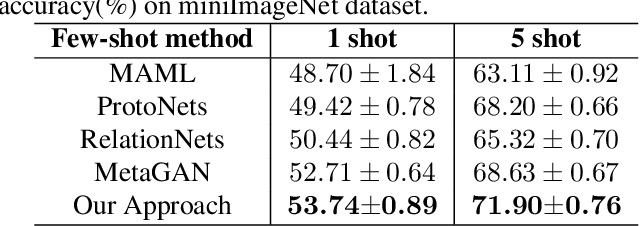

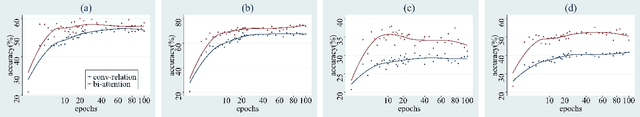

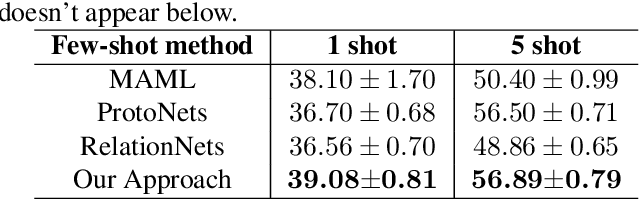

Abstract: Learning from few labeled examples is a key challenge for visual recognition, as deep neural networks tend to overfit when trained on only a few samples. Metric learning, one family of few-shot learning methods, addresses this challenge by first learning a deep distance metric that determines whether a pair of images belongs to the same category, then applying the trained metric to instances from a separate test set with limited labels. This approach makes the most of the few available samples and effectively limits overfitting. However, existing metric networks usually employ linear classifiers or convolutional neural networks (CNNs), which are not precise enough to globally capture the subtle differences between vectors. In this paper, we propose a novel approach, named Bi-attention network, to compare instances; it measures the similarity between instance embeddings precisely, globally, and efficiently. We verify the effectiveness of our model on two benchmarks. Experiments show that our approach achieves improved accuracy and convergence speed over baseline models.

Automated Augmentation with Reinforcement Learning and GANs for Robust Identification of Traffic Signs using Front Camera Images

Nov 15, 2019

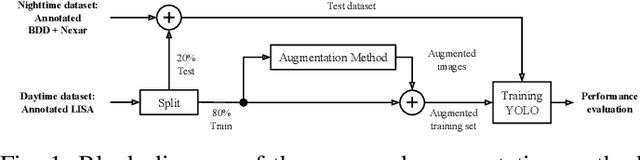

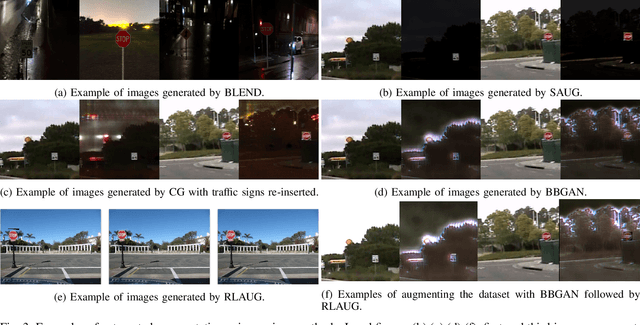

Abstract: Traffic sign identification using camera images from vehicles plays a critical role in autonomous driving and path planning. However, front camera images can be distorted by blurriness, lighting variations, and vandalism, which can degrade detection performance. As a solution, machine learning models must be trained with data from multiple domains, but collecting and labeling more data in each new domain is time-consuming and expensive. In this work, we present an end-to-end framework to augment traffic sign training data using optimal reinforcement learning policies and a variety of Generative Adversarial Network (GAN) models; the augmented data can then be used to train traffic sign detector modules. Our automated augmenter enables learning from transformed nighttime, poor-lighting, and variably occluded images using the LISA Traffic Sign and BDD-Nexar datasets. The proposed method enables mapping training data from one domain to another, thereby improving traffic sign detection precision/recall from 0.70/0.66 to 0.83/0.71 for nighttime images.
