Mehrdad Khatir

Concept Formation and Alignment in Language Models: Bridging Statistical Patterns in Latent Space to Concept Taxonomy

Jun 08, 2024

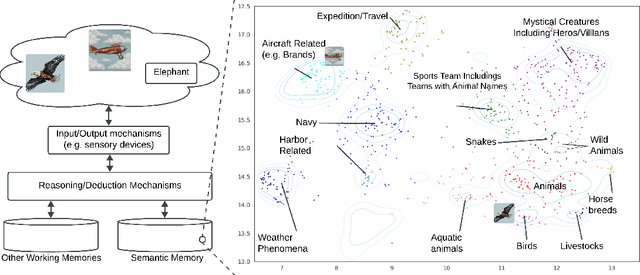

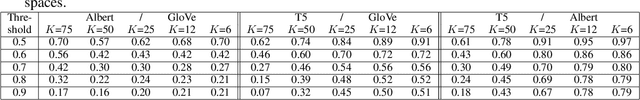

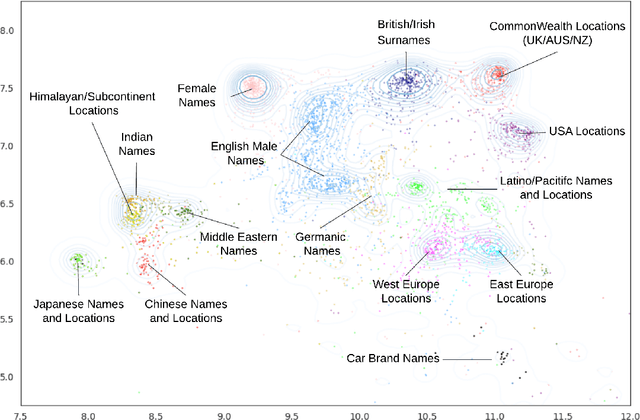

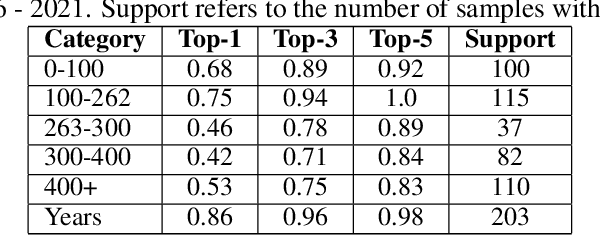

Abstract: This paper explores concept formation and alignment in language models (LMs). We propose a mechanism for identifying concepts and their hierarchical organization within the semantic representations learned by various LMs, ranging from early models such as GloVe to transformer-based language models such as ALBERT and T5. Our approach leverages the inherent structure of the semantic embeddings generated by these models to extract a taxonomy of concepts and their hierarchical relationships. This investigation sheds light on how LMs develop conceptual understanding and opens the door to further research on improving their ability to reason and leverage real-world knowledge. We further conducted experiments and observed that it is possible to isolate these extracted conceptual representations from the reasoning modules of transformer-based LMs. The observed concept formation, together with this isolation, can enable targeted token engineering, with potential applications in knowledge transfer, explainable AI, and the development of more modular and conceptually grounded language models.

Pseudo-Poincaré: A Unification Framework for Euclidean and Hyperbolic Graph Neural Networks

Jun 09, 2022

Abstract: Hyperbolic neural networks have recently gained significant attention due to their promising results on several graph problems, including node classification and link prediction. The primary reason for this success is the effectiveness of hyperbolic space in capturing the inherent hierarchy of graph datasets. However, these networks are limited in generalization and scalability, and they perform worse on non-hierarchical datasets. In this paper, we take a completely orthogonal perspective on modeling hyperbolic networks. We use the Poincaré disk to model the hyperbolic geometry and also treat the disk itself as if it were a tangent space at the origin. This enables us to replace non-scalable Möbius gyrovector operations with a Euclidean approximation, and thus simplify the entire hyperbolic model to a Euclidean model cascaded with a hyperbolic normalization function. Our approach does not adhere to Möbius math, yet it still works in the Riemannian manifold; hence we call it the Pseudo-Poincaré framework. We applied our non-linear hyperbolic normalization to current state-of-the-art homogeneous and multi-relational graph networks and demonstrate significant improvements in performance compared to both Euclidean and hyperbolic counterparts. The primary impact of this work lies in its ability to capture hierarchical features in Euclidean space; it can thus replace hyperbolic networks without loss in performance metrics while retaining the advantages of Euclidean networks, such as interpretability and efficient execution of various model components.
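The idea of "a Euclidean model cascaded with a hyperbolic normalization function" can be illustrated with a minimal sketch. The abstract does not give the exact normalization, so the code below assumes the standard exponential map at the origin of the Poincaré ball, exp_0(v) = tanh(sqrt(c)·||v||) · v / (sqrt(c)·||v||). The function names `hyperbolic_normalize`, `linear`, and `pseudo_poincare_layer` and the curvature parameter `c` are hypothetical and are not taken from the paper.

```python
import math


def hyperbolic_normalize(v, c=1.0, eps=1e-9):
    """Rescale a Euclidean vector so it lies inside the Poincaré ball.

    Assumption: this uses the exponential map at the origin for a ball
    of curvature -c; the paper's exact normalization may differ.
    """
    norm = math.sqrt(sum(x * x for x in v))
    if norm < eps:
        # The origin maps to itself; also avoids division by zero.
        return [0.0 for _ in v]
    sqrt_c = math.sqrt(c)
    scale = math.tanh(sqrt_c * norm) / (sqrt_c * norm)
    return [scale * x for x in v]


def linear(v, weights, bias):
    """Ordinary Euclidean linear layer; `weights` is a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + b
            for row, b in zip(weights, bias)]


def pseudo_poincare_layer(v, weights, bias, c=1.0):
    """Euclidean transform followed by hyperbolic normalization.

    No Möbius addition or Möbius matrix-vector product is used.
    """
    return hyperbolic_normalize(linear(v, weights, bias), c)


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    print(pseudo_poincare_layer([3.0, 4.0], identity, [0.0, 0.0]))
```

In this sketch the direction of the vector is preserved and only its length is squashed by `tanh`, so all of the learnable computation stays in ordinary Euclidean operations.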
