Mats Persson

PPFM: Image denoising in photon-counting CT using single-step posterior sampling Poisson flow generative models

Dec 19, 2023Abstract:Diffusion and Poisson flow models have shown impressive performance in a wide range of generative tasks, including low-dose CT image denoising. However, one limitation in general, and for clinical applications in particular, is slow sampling. Due to their iterative nature, the number of function evaluations (NFE) required is usually on the order of $10-10^3$, both for conditional and unconditional generation. In this paper, we present posterior sampling Poisson flow generative models (PPFM), a novel image denoising technique for low-dose and photon-counting CT that produces excellent image quality whilst keeping NFE=1. Updating the training and sampling processes of Poisson flow generative models (PFGM)++, we learn a conditional generator which defines a trajectory between the prior noise distribution and the posterior distribution of interest. We additionally hijack and regularize the sampling process to achieve NFE=1. Our results shed light on the benefits of the PFGM++ framework compared to diffusion models. In addition, PPFM is shown to perform favorably compared to current state-of-the-art diffusion-style models with NFE=1, consistency models, as well as popular deep learning and non-deep learning-based image denoising techniques, on clinical low-dose CT images and clinical images from a prototype photon-counting CT system.

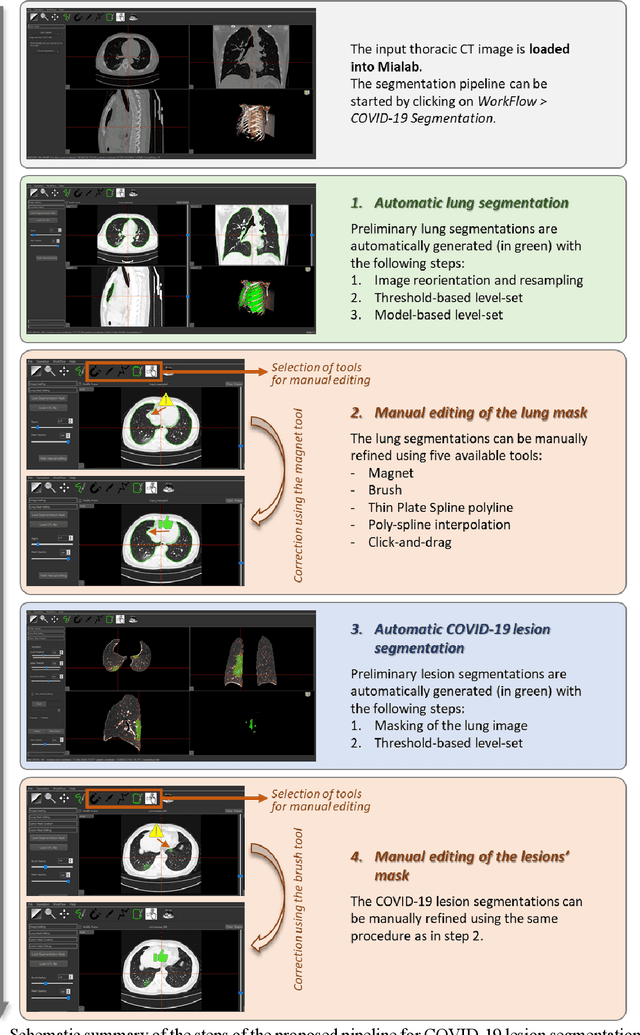

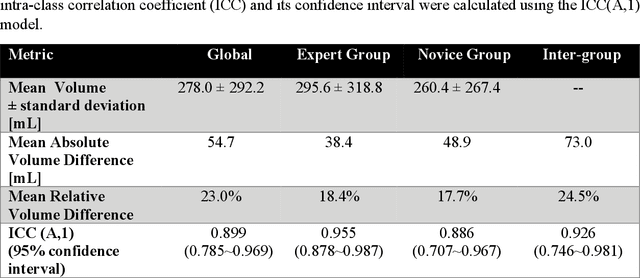

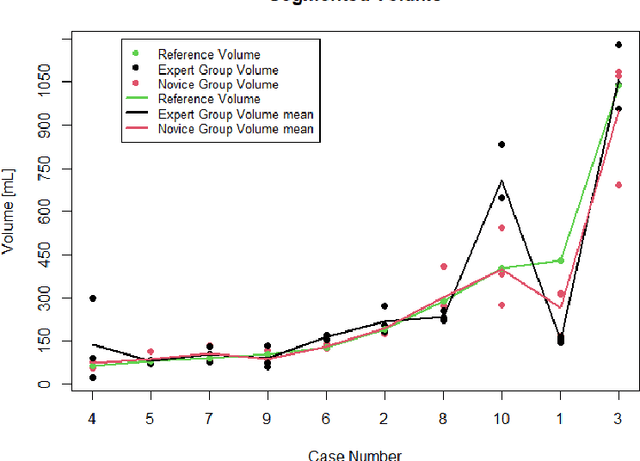

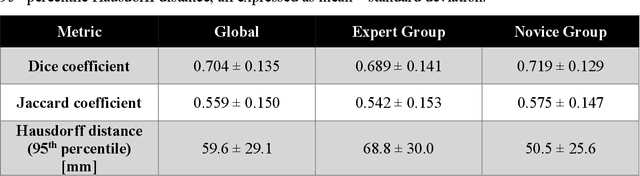

Development and evaluation of a 3D annotation software for interactive COVID-19 lesion segmentation in chest CT

Jan 13, 2021

Abstract:Segmentation of COVID-19 lesions from chest CT scans is of great importance for better diagnosing the disease and investigating its extent. However, manual segmentation can be very time consuming and subjective, given the lesions' large variation in shape, size and position. On the other hand, we still lack large manually segmented datasets that could be used for training machine learning-based models for fully automatic segmentation. In this work, we propose a new interactive and user-friendly tool for COVID-19 lesion segmentation, which works by alternating automatic steps (based on level-set segmentation and statistical shape modeling) with manual correction steps. The present software was tested by two different expertise groups: one group of three radiologists and one of three users with an engineering background. Promising segmentation results were obtained by both groups, which achieved satisfactory agreement both between- and within-group. Moreover, our interactive tool was shown to significantly speed up the lesion segmentation process, when compared to fully manual segmentation. Finally, we investigated inter-observer variability and how it is strongly influenced by several subjective factors, showing the importance for AI researchers and clinical doctors to be aware of the uncertainty in lesion segmentation results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge