Martin F. Kraus

Efficient and high accuracy 3-D OCT angiography motion correction in pathology

Oct 14, 2020

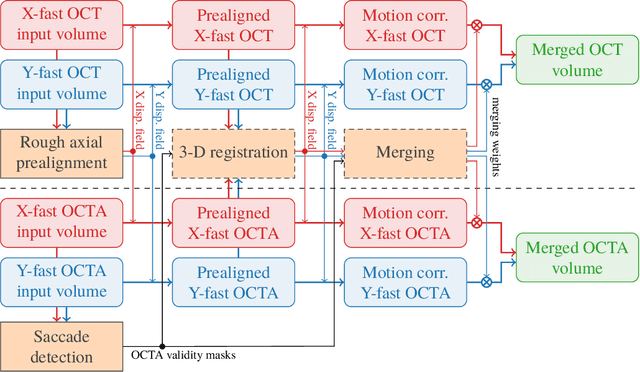

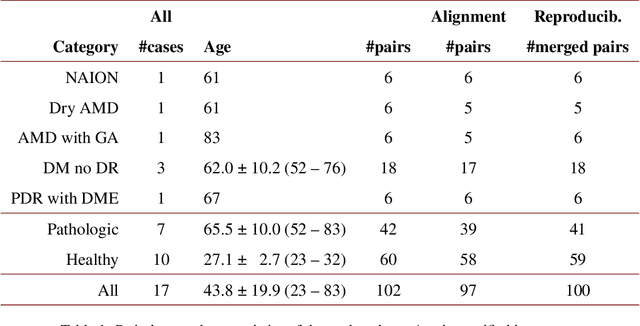

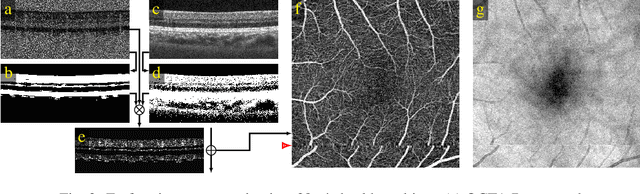

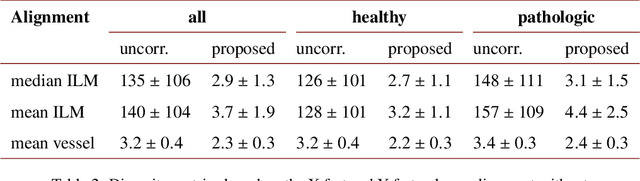

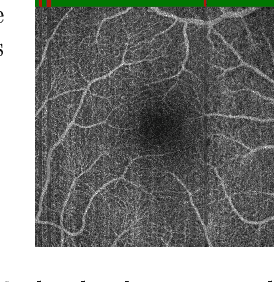

Abstract: We propose a novel method for non-rigid 3-D motion correction of orthogonally raster-scanned optical coherence tomography angiography volumes. This is the first approach that aligns predominantly axial structural features, such as retinal layers, and transverse angiographic vascular features in a joint optimization. Combined with the use of orthogonal scans and a preference for kinematically more plausible displacements, the approach allows subpixel alignment and micrometer-scale distortion correction in all three dimensions. As no specific structures or layers are segmented, the approach is by design robust to pathologic changes. It is furthermore designed for highly parallel implementation and short runtime, allowing its integration into clinical routine even for high-density or wide-field scans. We evaluated the algorithm with metrics related to clinically relevant features in a large-scale quantitative evaluation based on 204 volumetric scans of 17 subjects, including both a wide range of pathologies and healthy controls. Using this method, we achieve state-of-the-art axial performance and show significant advances in both transverse co-alignment and distortion correction, especially in the pathologic subgroup.

Deep OCT Angiography Image Generation for Motion Artifact Suppression

Jan 08, 2020

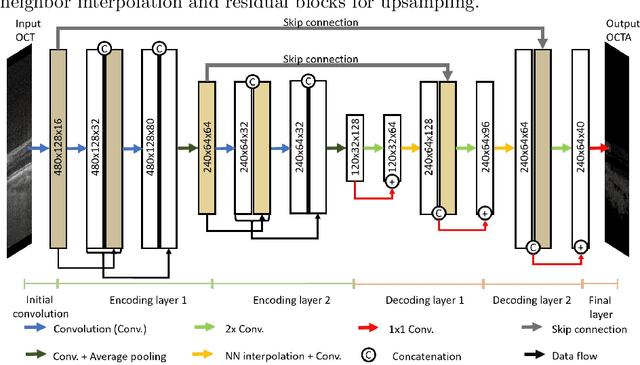

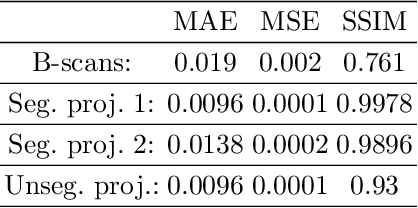

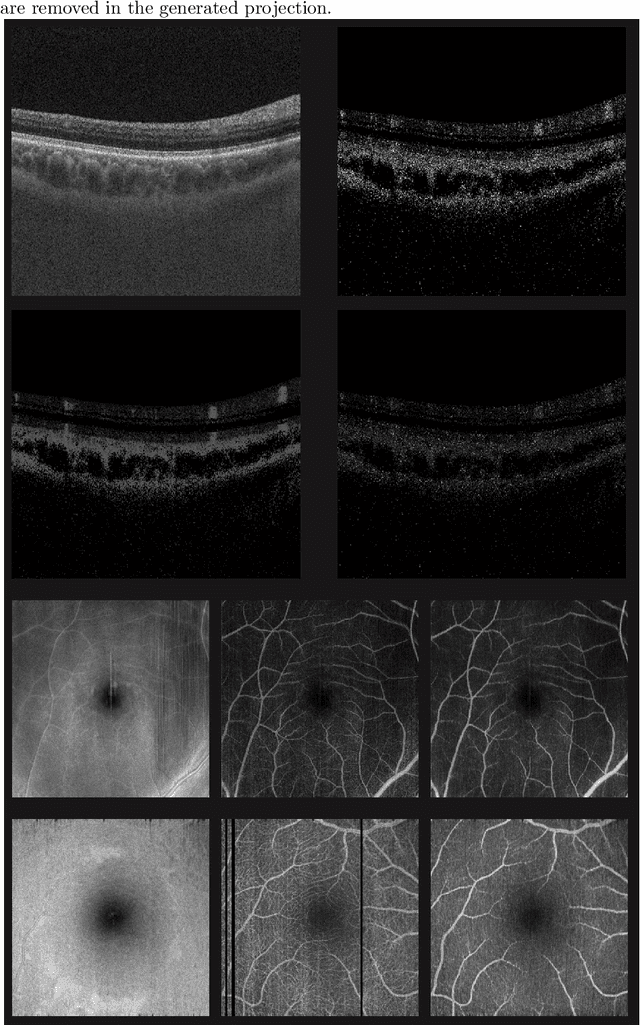

Abstract: Eye movements, blinking and other motion during the acquisition of optical coherence tomography (OCT) can lead to artifacts when the data are processed into OCT angiography (OCTA) images. Affected scans appear as high-intensity (white) or missing (black) regions, resulting in lost information. The aim of this research is to fill these gaps using a deep generative model for OCT-to-OCTA image translation relying on a single intact OCT scan. To this end, a U-Net is trained to extract the angiographic information from OCT patches. At inference, a detection algorithm finds outlier OCTA scans based on their surroundings, which are then replaced by the output of the trained network. We show that generative models can augment the missing scans. The augmented volumes could then be used for 3-D segmentation or to increase the diagnostic value.
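The inference step described in this abstract (flag outlier OCTA scans by comparing them with their surroundings, then replace them with generated ones) could look roughly like the sketch below. The abstract does not state the detection criterion, so everything here is an assumption for illustration: the per-B-scan mean intensity as the feature, the sliding-median comparison, the `window` and `threshold` parameters, and the `generate` callable that stands in for the trained U-Net.

```python
import numpy as np


def find_outlier_bscans(octa, window=5, threshold=5.0):
    """Flag B-scans whose mean intensity deviates from their neighbourhood.

    octa: array of shape (n_bscans, depth, width).
    Returns a boolean mask of length n_bscans.
    The criterion (median/MAD on mean intensity) is an assumed stand-in,
    not the detection algorithm of the paper.
    """
    means = octa.reshape(octa.shape[0], -1).mean(axis=1)
    half = window // 2
    # Reflect-pad so that every B-scan has a full neighbourhood.
    padded = np.pad(means, half, mode="reflect")
    windows = np.lib.stride_tricks.sliding_window_view(padded, window)
    local_median = np.median(windows, axis=1)
    residual = means - local_median
    # Robust scale estimate from the median absolute deviation.
    scale = 1.4826 * np.median(np.abs(residual)) + 1e-12
    return np.abs(residual) > threshold * scale


def replace_outliers(octa, oct_structural, mask, generate):
    """Replace flagged OCTA B-scans with scans generated from structural OCT.

    generate: hypothetical callable mapping one OCT B-scan to one OCTA
    B-scan (a placeholder for the trained network).
    """
    repaired = octa.copy()
    for i in np.flatnonzero(mask):
        repaired[i] = generate(oct_structural[i])
    return repaired
```

With a white (saturated) and a black (missing) B-scan inserted into an otherwise uniform volume, `find_outlier_bscans` flags exactly those two scans, and `replace_outliers` leaves all other scans untouched. On real data the feature and thresholds would need tuning.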
