Markus Miettinen

Technical University Darmstadt

ARGUS: Context-Based Detection of Stealthy IoT Infiltration Attacks

Feb 16, 2023Abstract:IoT application domains, device diversity and connectivity are rapidly growing. IoT devices control various functions in smart homes and buildings, smart cities, and smart factories, making these devices an attractive target for attackers. On the other hand, the large variability of different application scenarios and inherent heterogeneity of devices make it very challenging to reliably detect abnormal IoT device behaviors and distinguish these from benign behaviors. Existing approaches for detecting attacks are mostly limited to attacks directly compromising individual IoT devices, or, require predefined detection policies. They cannot detect attacks that utilize the control plane of the IoT system to trigger actions in an unintended/malicious context, e.g., opening a smart lock while the smart home residents are absent. In this paper, we tackle this problem and propose ARGUS, the first self-learning intrusion detection system for detecting contextual attacks on IoT environments, in which the attacker maliciously invokes IoT device actions to reach its goals. ARGUS monitors the contextual setting based on the state and actions of IoT devices in the environment. An unsupervised Deep Neural Network (DNN) is used for modeling the typical contextual device behavior and detecting actions taking place in abnormal contextual settings. This unsupervised approach ensures that ARGUS is not restricted to detecting previously known attacks but is also able to detect new attacks. We evaluated ARGUS on heterogeneous real-world smart-home settings and achieve at least an F1-Score of 99.64% for each setup, with a false positive rate (FPR) of at most 0.03%.

Close the Gate: Detecting Backdoored Models in Federated Learning based on Client-Side Deep Layer Output Analysis

Oct 14, 2022

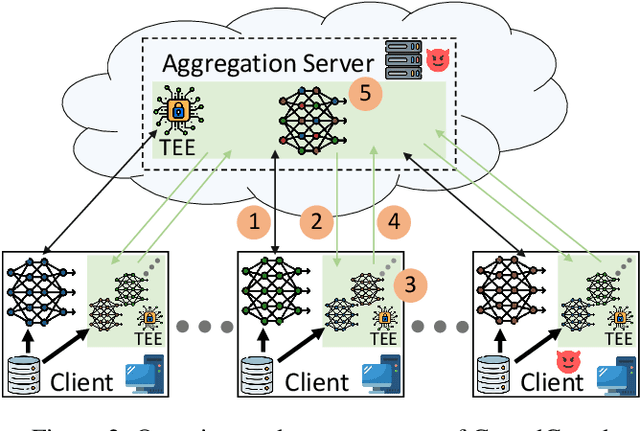

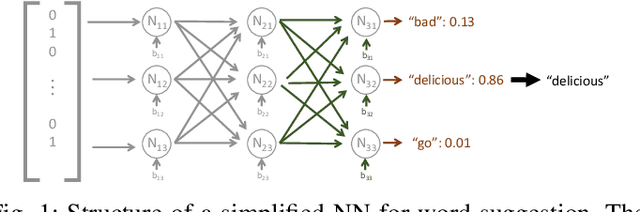

Abstract:Federated Learning (FL) is a scheme for collaboratively training Deep Neural Networks (DNNs) with multiple data sources from different clients. Instead of sharing the data, each client trains the model locally, resulting in improved privacy. However, recently so-called targeted poisoning attacks have been proposed that allow individual clients to inject a backdoor into the trained model. Existing defenses against these backdoor attacks either rely on techniques like Differential Privacy to mitigate the backdoor, or analyze the weights of the individual models and apply outlier detection methods that restricts these defenses to certain data distributions. However, adding noise to the models' parameters or excluding benign outliers might also reduce the accuracy of the collaboratively trained model. Additionally, allowing the server to inspect the clients' models creates a privacy risk due to existing knowledge extraction methods. We propose \textit{CrowdGuard}, a model filtering defense, that mitigates backdoor attacks by leveraging the clients' data to analyze the individual models before the aggregation. To prevent data leaks, the server sends the individual models to secure enclaves, running in client-located Trusted Execution Environments. To effectively distinguish benign and poisoned models, even if the data of different clients are not independently and identically distributed (non-IID), we introduce a novel metric called \textit{HLBIM} to analyze the outputs of the DNN's hidden layers. We show that the applied significance-based detection algorithm combined can effectively detect poisoned models, even in non-IID scenarios.

DeepSight: Mitigating Backdoor Attacks in Federated Learning Through Deep Model Inspection

Jan 03, 2022

Abstract:Federated Learning (FL) allows multiple clients to collaboratively train a Neural Network (NN) model on their private data without revealing the data. Recently, several targeted poisoning attacks against FL have been introduced. These attacks inject a backdoor into the resulting model that allows adversary-controlled inputs to be misclassified. Existing countermeasures against backdoor attacks are inefficient and often merely aim to exclude deviating models from the aggregation. However, this approach also removes benign models of clients with deviating data distributions, causing the aggregated model to perform poorly for such clients. To address this problem, we propose DeepSight, a novel model filtering approach for mitigating backdoor attacks. It is based on three novel techniques that allow to characterize the distribution of data used to train model updates and seek to measure fine-grained differences in the internal structure and outputs of NNs. Using these techniques, DeepSight can identify suspicious model updates. We also develop a scheme that can accurately cluster model updates. Combining the results of both components, DeepSight is able to identify and eliminate model clusters containing poisoned models with high attack impact. We also show that the backdoor contributions of possibly undetected poisoned models can be effectively mitigated with existing weight clipping-based defenses. We evaluate the performance and effectiveness of DeepSight and show that it can mitigate state-of-the-art backdoor attacks with a negligible impact on the model's performance on benign data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge