Marian Bittner

Controlled Face Manipulation and Synthesis for Data Augmentation

Feb 22, 2026

Abstract:Deep learning vision models excel with abundant supervision, but many applications face label scarcity and class imbalance. Controllable image editing can augment scarce labeled data, yet edits often introduce artifacts and entangle non-target attributes. We study this in facial expression analysis, targeting Action Unit (AU) manipulation where annotation is costly and AU co-activation drives entanglement. We present a facial manipulation method that operates in the semantic latent space of a pre-trained face generator (Diffusion Autoencoder). Using lightweight linear models, we reduce entanglement of semantic features via (i) dependency-aware conditioning that accounts for AU co-activation, and (ii) orthogonal projection that removes nuisance attribute directions (e.g., glasses), together with an expression neutralization step to enable absolute AU edits. We use these edits to balance AU occurrence by editing labeled faces and to diversify identities/demographics via controlled synthesis. Augmenting AU detector training with the generated data improves accuracy and yields more disentangled predictions with fewer co-activation shortcuts, outperforming alternative data-efficient training strategies and suggesting improvements similar to what would require substantially more labeled data in our learning-curve analysis. Compared to prior methods, our edits are stronger, produce fewer artifacts, and preserve identity better.
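The orthogonal projection step mentioned in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `remove_nuisance`, the toy 3-dimensional latent vectors, and the use of a least-squares solve are assumptions made for illustration only. The idea shown is generic: subtract from an edit direction its component inside the subspace spanned by nuisance directions, so that moving along the result no longer changes those attributes in a linear model.

```python
import numpy as np


def remove_nuisance(direction, nuisance_directions):
    """Project `direction` onto the orthogonal complement of the nuisance subspace.

    direction:           shape (d,), a hypothetical edit direction in latent space.
    nuisance_directions: shape (k, d) or (d,), hypothetical attribute directions
                         (e.g. "glasses") that the edit should not move along.
    """
    v = np.asarray(direction, dtype=float)
    n = np.atleast_2d(np.asarray(nuisance_directions, dtype=float))
    # Least-squares coefficients of v in the span of the nuisance rows.
    # lstsq also copes with linearly dependent nuisance directions.
    coeffs, *_ = np.linalg.lstsq(n.T, v, rcond=None)
    # Remove the part of v that lies inside the nuisance subspace.
    return v - n.T @ coeffs


# Toy example: the raw AU direction is entangled with a "glasses" axis.
au_direction = np.array([1.0, 1.0, 0.0])
glasses_direction = np.array([0.0, 1.0, 0.0])
clean_direction = remove_nuisance(au_direction, glasses_direction)
```

After projection, `clean_direction` has zero inner product with `glasses_direction`, which is the property the projection is meant to guarantee.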

Towards Single Camera Human 3D-Kinematics

Jan 13, 2023

Abstract:Markerless estimation of 3D kinematics has great potential to clinically diagnose and monitor movement disorders without referrals to expensive motion capture labs; however, current approaches are limited by performing multiple decoupled steps to estimate the kinematics of a person from videos. Most current techniques work in a multi-step approach by first detecting the pose of the body and then fitting a musculoskeletal model to the data for accurate kinematic estimation. Errors in the training data of the pose detection algorithms, model scaling, as well as the requirement of multiple cameras limit the use of these techniques in a clinical setting. Our goal is to pave the way toward fast, easily applicable and accurate 3D kinematic estimation. To this end, we propose a novel approach for direct 3D human kinematic estimation (D3KE) from videos using deep neural networks. Our experiments demonstrate that the proposed end-to-end training is robust and outperforms 2D and 3D markerless motion capture based kinematic estimation pipelines in terms of joint angle error by a large margin (35%, from 5.44 to 3.54 degrees). We show that D3KE is superior to the multi-step approach and can run at video framerate speeds. This technology shows the potential for clinical analysis from mobile devices in the future.

* Published in the MDPI Sensors Special Issue "Sensors and Musculoskeletal Dynamics to Evaluate Human Movement" on December 28, 2022

Real-time Webcam Heart-Rate and Variability Estimation with Clean Ground Truth for Evaluation

Dec 31, 2020

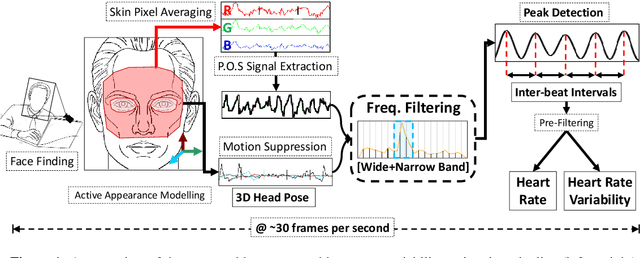

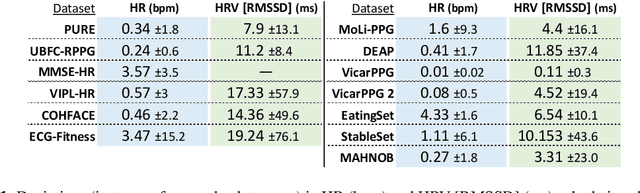

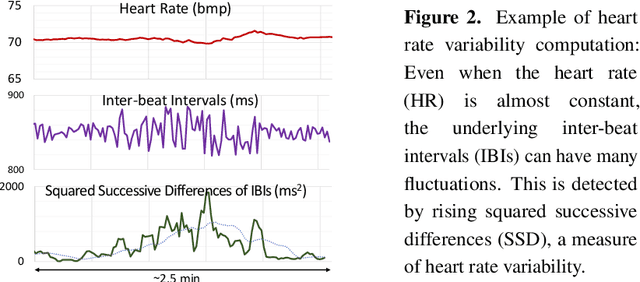

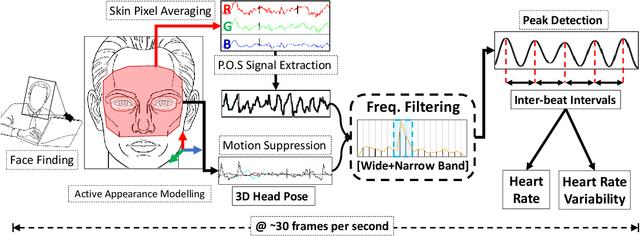

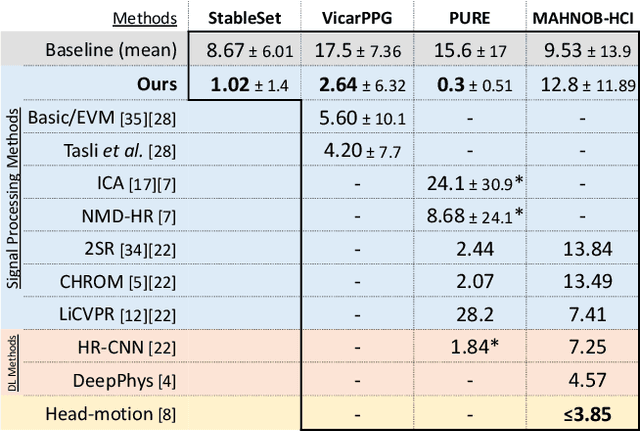

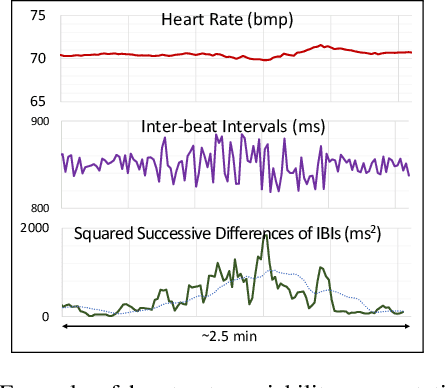

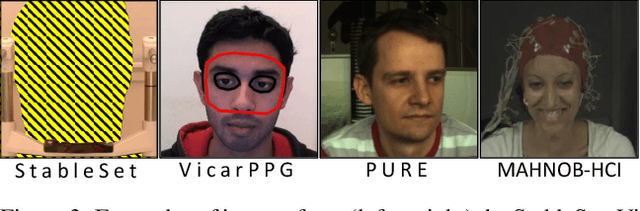

Abstract:Remote photo-plethysmography (rPPG) uses a camera to estimate a person's heart rate (HR). Similar to how heart rate can provide useful information about a person's vital signs, insights about the underlying physio/psychological conditions can be obtained from heart rate variability (HRV). HRV is a measure of the fine fluctuations in the intervals between heart beats. However, this measure requires temporally locating heart beats with a high degree of precision. We introduce a refined and efficient real-time rPPG pipeline with novel filtering and motion suppression that not only estimates heart rates, but also extracts the pulse waveform to time heart beats and measure heart rate variability. This unsupervised method requires no rPPG specific training and is able to operate in real-time. We also introduce a new multi-modal video dataset, VicarPPG 2, specifically designed to evaluate rPPG algorithms on HR and HRV estimation. We validate and study our method under various conditions on a comprehensive range of public and self-recorded datasets, showing state-of-the-art results and providing useful insights into some unique aspects. Lastly, we make available CleanerPPG, a collection of human-verified ground truth peak/heart-beat annotations for existing rPPG datasets. These verified annotations should make future evaluations and benchmarking of rPPG algorithms more accurate, standardized and fair.
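To make concrete why HRV depends on precise beat timing, the following minimal sketch derives heart rate and two widely used time-domain HRV statistics (SDNN and RMSSD) from a list of beat timestamps. It is an illustration only: the abstract does not state which HRV metrics the pipeline reports, and the function name `hrv_from_beats` and the sample timestamps are hypothetical.

```python
import numpy as np


def hrv_from_beats(beat_times_s):
    """Compute mean heart rate and basic HRV statistics from beat timestamps.

    beat_times_s: increasing beat times in seconds (e.g. detected pulse peaks).
    Returns mean HR in beats per minute, and SDNN and RMSSD in milliseconds.
    """
    t = np.asarray(beat_times_s, dtype=float)
    ibi_ms = np.diff(t) * 1000.0  # inter-beat intervals in milliseconds
    if ibi_ms.size < 2:
        raise ValueError("need at least three beats to measure variability")
    successive = np.diff(ibi_ms)  # change from one interval to the next
    return {
        "mean_hr_bpm": 60000.0 / ibi_ms.mean(),
        "sdnn_ms": ibi_ms.std(ddof=1),  # sample standard deviation of intervals
        "rmssd_ms": np.sqrt(np.mean(successive ** 2)),
    }


# Hypothetical beat times: intervals of 800 ms, 1000 ms, 800 ms.
stats = hrv_from_beats([0.0, 0.8, 1.8, 2.6])
```

Because RMSSD is built from differences between consecutive intervals, a timing error of a few tens of milliseconds in a single detected beat shifts two adjacent intervals in opposite directions and can change the result substantially, while the mean heart rate barely moves.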

* Published in the MDPI Applied Sciences journal special issue Video Analysis for Health Monitoring on December 2, 2020. arXiv admin note: text overlap with arXiv:1909.01206

Efficient Real-Time Camera Based Estimation of Heart Rate and Its Variability

Sep 03, 2019

Abstract:Remote photo-plethysmography (rPPG) uses a remotely placed camera to estimate a person's heart rate (HR). Similar to how heart rate can provide useful information about a person's vital signs, insights about the underlying physio/psychological conditions can be obtained from heart rate variability (HRV). HRV is a measure of the fine fluctuations in the intervals between heart beats. However, this measure requires temporally locating heart beats with a high degree of precision. We introduce a refined and efficient real-time rPPG pipeline with novel filtering and motion suppression that not only estimates heart rate more accurately, but also extracts the pulse waveform to time heart beats and measure heart rate variability. This method requires no rPPG specific training and is able to operate in real-time. We validate our method on a self-recorded dataset under an idealized lab setting, and show state-of-the-art results on two public datasets with realistic conditions (VicarPPG and PURE).
