Marco Cristoforetti

IT-DPC-SRI: A Cloud-Optimized Archive of Italian Radar Precipitation (2010-2025)

Feb 16, 2026

Abstract:We present IT-DPC-SRI, the first publicly available long-term archive of Italian weather radar precipitation estimates, spanning 16 years (2010-2025). The dataset contains Surface Rainfall Intensity (SRI) observations from the Italian Civil Protection Department's national radar mosaic, harmonized into a coherent Analysis-Ready Cloud-Optimized (ARCO) Zarr datacube. The archive comprises over one million timesteps at temporal resolutions from 15 to 5 minutes, covering a $1200\times1400$ kilometer domain at 1 kilometer spatial resolution, compressed from 7TB to 51GB on disk. We address the historical fragmentation of Italian radar data (previously scattered across heterogeneous formats such as OPERA BUFR, HDF5, and GeoTIFF, with varying spatial domains and projections) by reprocessing the entire record into a unified store. The dataset is accessible as a static versioned snapshot on Zenodo, via cloud-native access on the ECMWF European Weather Cloud, and as a continuously updated live version on the ArcoDataHub platform. This release fills a significant gap in European radar data availability, as Italy does not participate in the EUMETNET OPERA pan-European radar composite. The dataset is released under a CC BY-SA 4.0 license.
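
The size figures quoted in this abstract can be sanity-checked with a short back-of-envelope calculation. The sketch below is only illustrative: the grid dimensions and the 7TB/51GB figures come from the abstract, while the 4-byte (float32) value size, the rounding of "over one million" to exactly one million timesteps, and the use of decimal units are assumptions made here, not details stated by the authors.

```python
# Back-of-envelope check of the archive size figures quoted in the abstract.
NX, NY = 1200, 1400        # grid cells at 1 km spacing (from the abstract)
N_TIMESTEPS = 1_000_000    # "over one million", rounded down (assumption)
BYTES_PER_VALUE = 4        # assumption: float32 values before compression

cells_per_step = NX * NY
raw_bytes = cells_per_step * N_TIMESTEPS * BYTES_PER_VALUE
raw_tb = raw_bytes / 1e12  # decimal terabytes (assumption)

# Ratio implied by the quoted 7 TB -> 51 GB figures, in decimal units.
compression_ratio = 7e12 / 51e9

print(f"cells per timestep: {cells_per_step:,}")
print(f"raw size estimate:  {raw_tb:.2f} TB")
print(f"compression ratio:  {compression_ratio:.0f}x")
```

Under these assumptions the uncompressed estimate comes out at roughly 6.7 TB, which is in line with the quoted 7TB, and the quoted figures imply a compression ratio of about 137 to 1.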

GPTCast: a weather language model for precipitation nowcasting

Jul 02, 2024

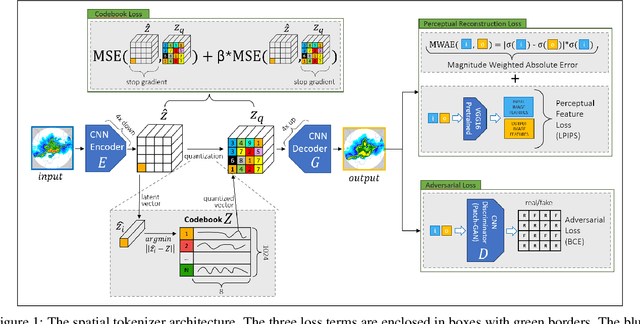

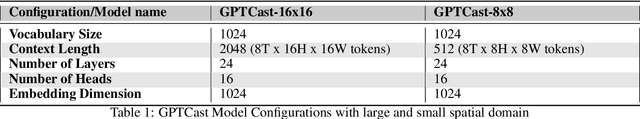

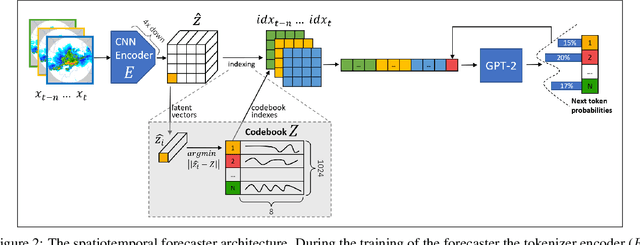

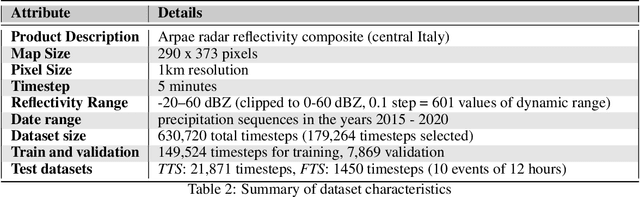

Abstract:This work introduces GPTCast, a generative deep-learning method for ensemble nowcasting of radar-based precipitation, inspired by advancements in large language models (LLMs). We employ a GPT model as a forecaster to learn spatiotemporal precipitation dynamics using tokenized radar images. The tokenizer is based on a Quantized Variational Autoencoder featuring a novel reconstruction loss tailored for the skewed distribution of precipitation that promotes faithful reconstruction of high rainfall rates. The approach produces realistic ensemble forecasts and provides probabilistic outputs with accurate uncertainty estimation. The model is trained without resorting to randomness: all variability is learned solely from the data and exposed by the model at inference for ensemble generation. We train and test GPTCast using a 6-year radar dataset over the Emilia-Romagna region in Northern Italy, showing superior results compared to state-of-the-art ensemble extrapolation methods.

Can AI be enabled to dynamical downscaling? Training a Latent Diffusion Model to mimic km-scale COSMO-CLM downscaling of ERA5 over Italy

Jun 19, 2024

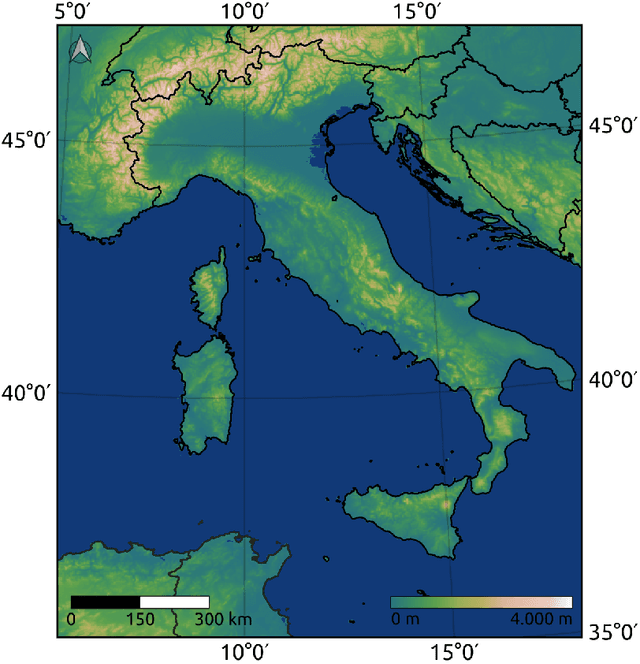

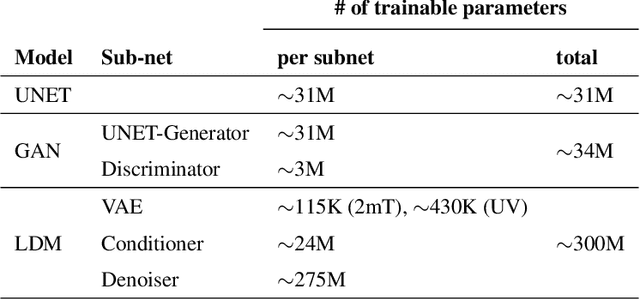

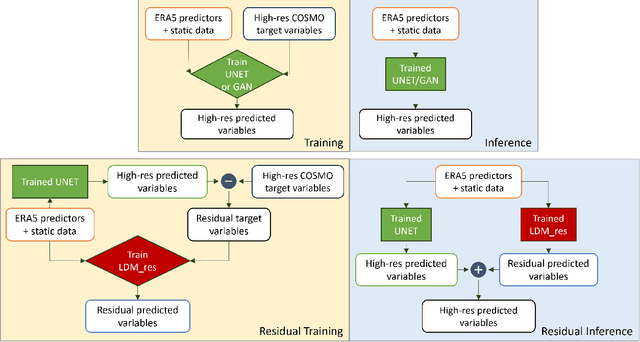

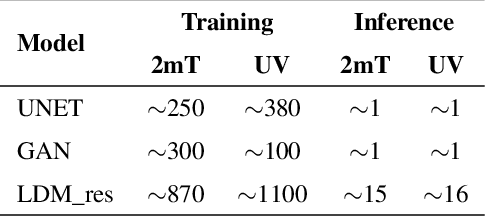

Abstract:Downscaling techniques are one of the most prominent applications of Deep Learning (DL) in Earth System Modeling. A robust DL downscaling model can generate high-resolution fields from coarse-scale numerical model simulations, avoiding the time-consuming and resource-intensive application of regional/local models. Additionally, generative DL models have the potential to provide uncertainty information by generating ensemble-like scenario pools, a task that is computationally prohibitive for traditional numerical simulations. In this study, we apply a Latent Diffusion Model (LDM) to downscale ERA5 data over Italy to a resolution of 2 km. The high-resolution target data consist of results from a high-resolution dynamical downscaling performed with COSMO-CLM. Our goal is to demonstrate that recent advancements in generative modeling enable DL-based models to deliver results comparable to those of numerical dynamical downscaling models, given the same input data (i.e., ERA5 data), preserving the realism of fine-scale features and flow characteristics. The training and testing database consists of hourly data from 2000 to 2020. The target variables of this study are 2-m temperature and 10-m horizontal wind components. A selection of predictors from ERA5 is used as input to the LDM, and a residual approach against a reference UNET is leveraged in applying the LDM. The performance of the generative LDM is compared with reference baselines of increasing complexity: quadratic interpolation of ERA5, a UNET, and a Generative Adversarial Network (GAN) built on the same reference UNET. Results highlight the improvements introduced by the LDM architecture and the residual approach over these baselines. The models are evaluated on a yearly test dataset, assessing their performance through deterministic metrics, spatial distribution of errors, and reconstruction of frequency and power spectra distributions.

High Resolution Forecasting of Heat Waves impacts on Leaf Area Index by Multiscale Multitemporal Deep Learning

Sep 13, 2019

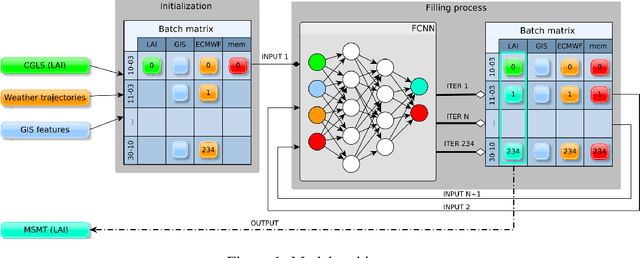

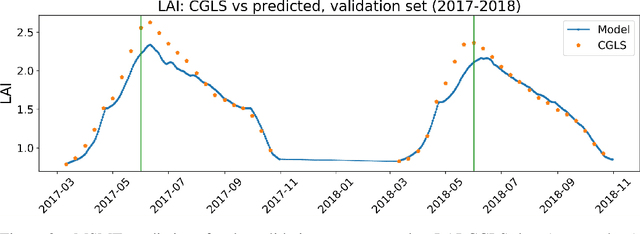

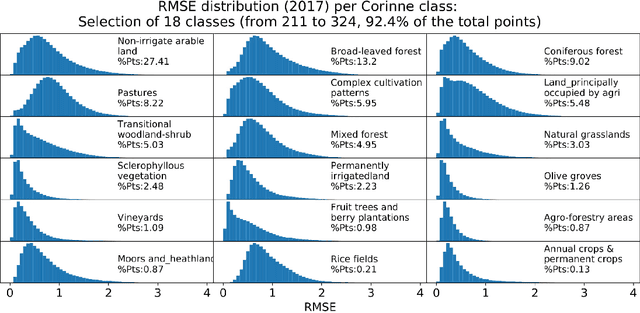

Abstract:Climate change impacts could cause a progressive decrease of crop quality and yield, up to harvest failures. In particular, heat waves and other climate extremes can lead to localized food shortages and even threaten the food security of communities worldwide. In this study, we apply a deep learning architecture for high resolution forecasting (300 m, 10 days) of the Leaf Area Index (LAI), whose dynamics have been widely used to model the growth phase of crops and the impact of heat waves. LAI models can be computed at 0.1 degree spatial resolution with an autoregressive component adjusted with weather conditions, validated with remote sensing measurements. However, model actionability is poor in regions of varying terrain morphology at this scale (about 8 km at the latitude of the Alps). Our deep learning model aims instead at forecasting LAI by training on multiscale multitemporal (MSMT) data from the Copernicus Global Land Service (CGLS) project for all of Europe at 300 m resolution and on medium-resolution historical weather data. Further, the deep learning model inputs integrate high-resolution land surface features, known to improve forecasts of agricultural productivity. The historical weather data are then replaced with forecast values to predict LAI values at a 10-day horizon over Europe. We propose the MSMT model to develop a high resolution crop-specific warning system for mitigating damage due to heat waves and other extreme events.

Towards meaningful physics from generative models

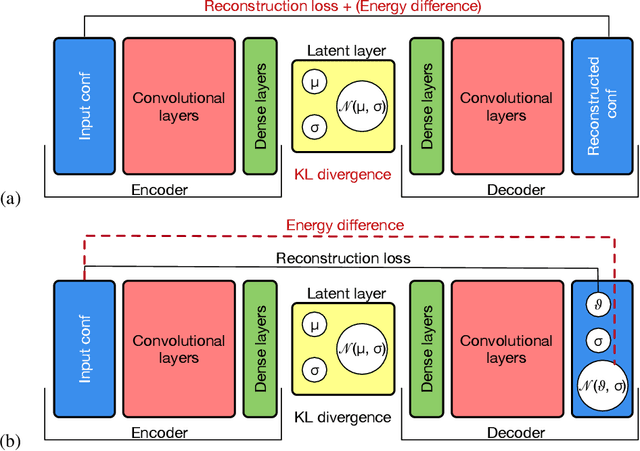

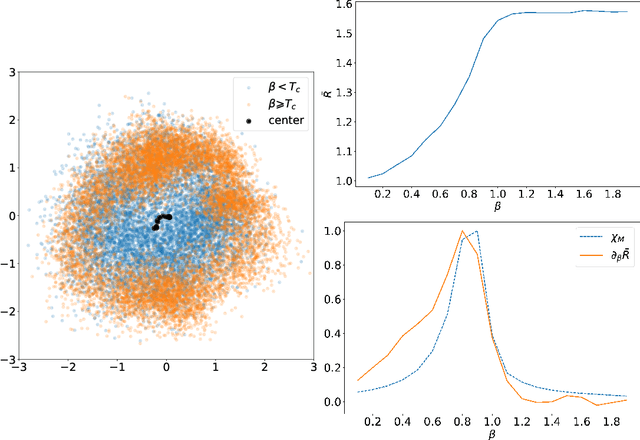

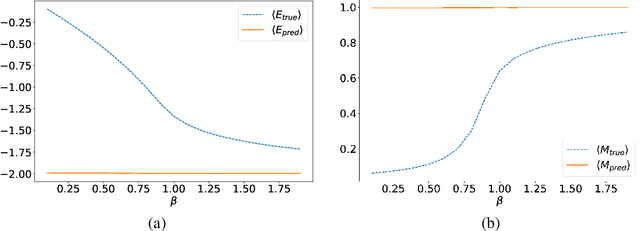

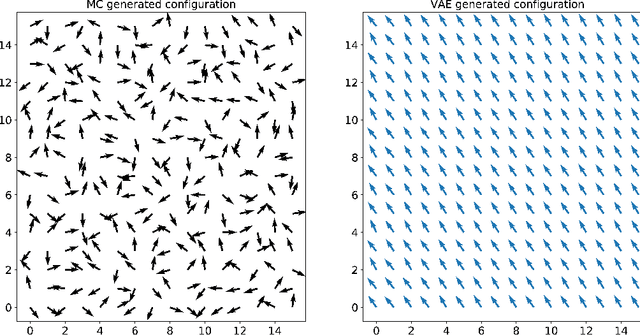

May 26, 2017

Abstract:In several physical systems, important properties characterizing the system itself are theoretically related to specific degrees of freedom. Although standard Monte Carlo (MC) simulations provide an effective tool to accurately reconstruct the physical configurations of the system, they are unable to isolate the different contributions corresponding to different degrees of freedom. Here we show that unsupervised deep learning can become a valid support to MC simulation, coupling useful insights in the phase detection task with good reconstruction performance. As a testbed we consider the 2D XY model, showing that a deep neural network based on variational autoencoders can detect the continuous Kosterlitz-Thouless (KT) transitions, and that, if endowed with the appropriate constraints, it generates configurations with meaningful physical content.

Sparse Predictive Structure of Deconvolved Functional Brain Networks

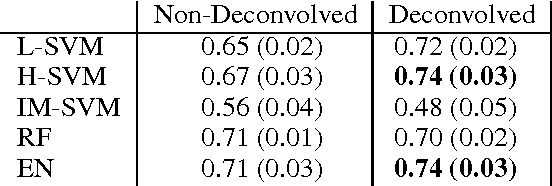

Oct 24, 2013

Abstract:The functional and structural representation of the brain as a complex network is marked by the fact that the comparison of noisy and intrinsically correlated high-dimensional structures between experimental conditions or groups shuns typical mass univariate methods. Furthermore, most network estimation methods cannot distinguish between real and spurious correlation arising from the convolution due to nodes' interaction, which thus introduces additional noise in the data. We propose a machine learning pipeline aimed at identifying multivariate differences between brain networks associated with different experimental conditions. The pipeline (1) leverages the deconvolved individual contribution of each edge and (2) maps the task into a sparse classification problem in order to construct the associated "sparse deconvolved predictive network", i.e., a graph with the same nodes as those compared but whose edge weights are defined by their relevance for out-of-sample predictions in classification. We present an application of the proposed method by decoding the covert attention direction (left or right) based on the single-trial functional connectivity matrix extracted from high-frequency magnetoencephalography (MEG) data. Our results demonstrate how network deconvolution matched with sparse classification methods outperforms typical approaches for MEG decoding.
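
To make the idea of separating direct from spurious (indirect) edge contributions concrete, here is a minimal sketch of one common closed-form formulation of network deconvolution. The abstract does not state which deconvolution the pipeline uses, so this is an assumption for illustration only: it models the observed network as the sum of the direct network and all of its powers, and inverts that series. The function name `deconvolve` and the toy 3-node weights are hypothetical.

```python
import numpy as np

def deconvolve(g_obs):
    """Invert g_obs = g_dir + g_dir^2 + g_dir^3 + ... in closed form.

    The series sums to g_obs = g_dir (I - g_dir)^-1, which rearranges to
    g_dir = g_obs (I + g_obs)^-1.
    """
    n = g_obs.shape[0]
    return g_obs @ np.linalg.inv(np.eye(n) + g_obs)

# Toy chain 0 - 1 - 2 with no direct 0 - 2 edge (hypothetical weights).
g_dir = np.array([[0.0, 0.4, 0.0],
                  [0.4, 0.0, 0.4],
                  [0.0, 0.4, 0.0]])

# Observed network: direct effects plus every indirect path.
# The series converges here because the spectral radius of g_dir is below 1.
g_obs = g_dir @ np.linalg.inv(np.eye(3) - g_dir)

g_rec = deconvolve(g_obs)

print("observed 0-2 weight: ", round(float(g_obs[0, 2]), 3))
print("recovered 0-2 weight:", round(float(abs(g_rec[0, 2])), 3))
```

In this toy case the observed matrix shows a nonzero weight between nodes 0 and 2 even though they are only connected through node 1, and the deconvolved matrix recovers the original direct weights up to floating-point error. Real connectivity matrices would typically need rescaling first so that the series assumption holds.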
