Mahsa Shakeri

Polytechnique Montreal, Canada, CHU Sainte-Justine Research Center, Montreal, Canada

Polarization-Based Eye Tracking with Personalized Siamese Architectures

Mar 26, 2026

Abstract: Head-mounted devices integrated with eye tracking promise a solution for natural human-computer interaction. However, they typically require per-user calibration for optimal performance due to inter-person variability. A differential personalization approach using Siamese architectures learns relative gaze displacements and reconstructs absolute gaze from a small set of calibration frames. In this paper, we benchmark Siamese personalization on polarization-enabled eye tracking. For benchmarking, we use a 338-subject dataset captured with a polarization-sensitive camera and 850 nm illumination. We achieve performance comparable to linear calibration with 10-fold fewer samples. Using polarization inputs for Siamese personalization reduces gaze error by up to 12% compared to near-infrared (NIR)-based inputs. Combining Siamese personalization with linear calibration yields further improvements of up to 13% over a linearly calibrated baseline. These results establish Siamese personalization as a practical approach enabling accurate eye tracking.
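The differential idea in this abstract, predicting a gaze displacement relative to each calibration frame and then recovering absolute gaze, can be illustrated with a minimal sketch. Everything named here is hypothetical: `predict_displacement` stands in for a trained Siamese network, and averaging the per-frame estimates is an assumed aggregation rule that the abstract does not specify.

```python
from statistics import fmean


def reconstruct_gaze(predict_displacement, query_frame, calibration):
    """Estimate absolute gaze for query_frame.

    calibration is a list of (frame, (gx, gy)) pairs with known gaze.
    predict_displacement(calib_frame, query_frame) is a hypothetical model
    returning the (dx, dy) gaze offset from the calibration frame to the query.
    """
    estimates = []
    for calib_frame, (gx, gy) in calibration:
        dx, dy = predict_displacement(calib_frame, query_frame)
        # Known calibration gaze plus predicted relative displacement.
        estimates.append((gx + dx, gy + dy))
    # Assumed aggregation: average the estimates from all calibration frames.
    return (fmean(e[0] for e in estimates), fmean(e[1] for e in estimates))


def toy_predictor(calib_frame, query_frame):
    # Toy stand-in: each "frame" is simply its true gaze, so the displacement is exact.
    return (query_frame[0] - calib_frame[0], query_frame[1] - calib_frame[1])


calibration_set = [((0.0, 0.0), (0.0, 0.0)), ((1.0, 2.0), (1.0, 2.0))]
gaze = reconstruct_gaze(toy_predictor, (3.0, -1.0), calibration_set)
```

With a real model each per-frame estimate would carry its own error, which is one reason to combine several calibration frames rather than rely on one.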

Prior-based Coregistration and Cosegmentation

Jul 22, 2016

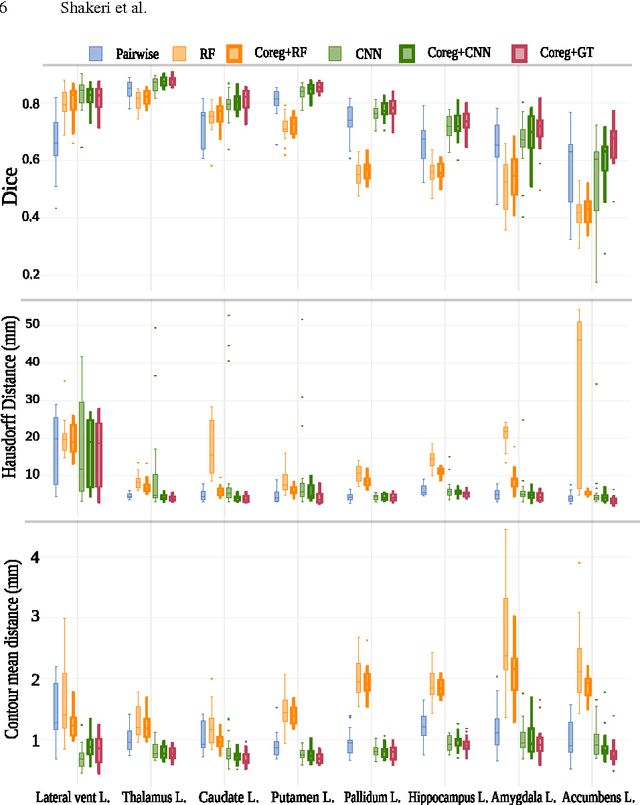

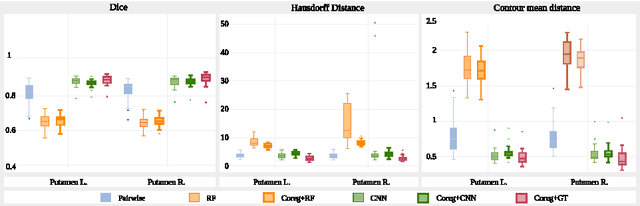

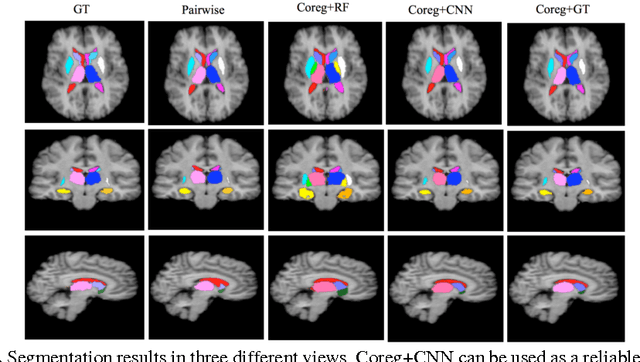

Abstract: We propose a modular and scalable framework for dense coregistration and cosegmentation with two key characteristics: first, we substitute ground truth data with the semantic map output of a classifier; second, we combine this output with population deformable registration to improve both alignment and segmentation. Our approach deforms all volumes towards consensus, taking into account image similarities and label consistency. Our pipeline can incorporate any classifier and similarity metric. Results on two datasets, containing annotations of challenging brain structures, demonstrate the potential of our method.

* The first two authors contributed equally

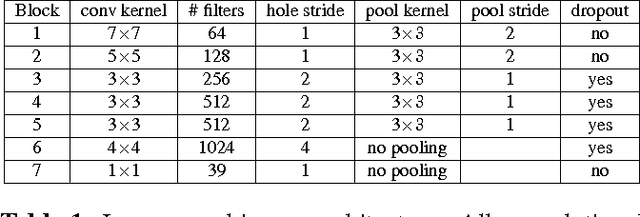

Sub-cortical brain structure segmentation using F-CNN's

Feb 05, 2016

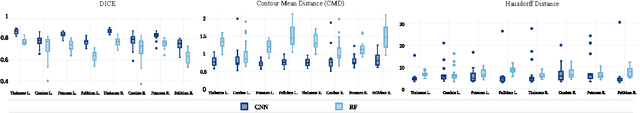

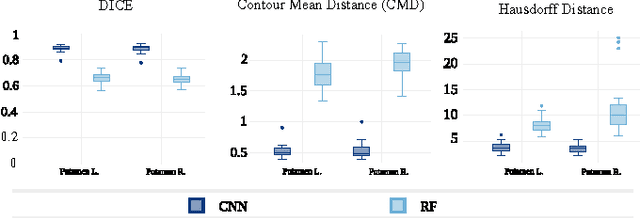

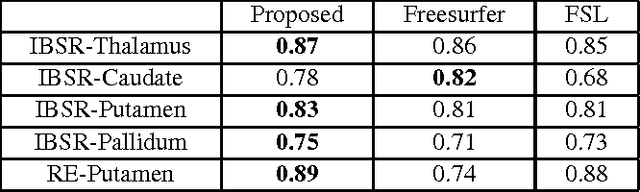

Abstract: In this paper we propose a deep learning approach for segmenting sub-cortical structures of the human brain in Magnetic Resonance (MR) image data. We draw inspiration from a state-of-the-art Fully-Convolutional Neural Network (F-CNN) architecture for semantic segmentation of objects in natural images, and adapt it to our task. Unlike previous CNN-based methods that operate on image patches, our model is applied to a full 2D image, without any alignment or registration steps at test time. We further improve segmentation results by interpreting the CNN output as potentials of a Markov Random Field (MRF), whose topology corresponds to a volumetric grid. Alpha-expansion is used to perform approximate inference, imposing spatial volumetric homogeneity on the CNN priors. We compare the performance of the proposed pipeline with a similar system using Random Forest-based priors, as well as state-of-the-art segmentation algorithms, and show promising results on two different brain MRI datasets.
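To make the MRF formulation concrete, the following minimal sketch evaluates the energy of a labelling on a volumetric grid. It rests on assumptions the abstract does not confirm: the unary costs are taken to be derived from the CNN output (for example negative log-probabilities), the pairwise term is a Potts penalty over 6-connected neighbours, and `smoothness` is a hypothetical weight. It only scores a labelling; alpha-expansion, which would search for a low-energy labelling, is not implemented here.

```python
def mrf_energy(unary, labels, smoothness=1.0):
    """Energy of a labelling on a 3D grid.

    unary[z][y][x][l] is the cost of assigning label l to a voxel
    (assumed to come from the CNN output); labels[z][y][x] is the chosen label.
    """
    depth, height, width = len(labels), len(labels[0]), len(labels[0][0])
    energy = 0.0
    for z in range(depth):
        for y in range(height):
            for x in range(width):
                lab = labels[z][y][x]
                energy += unary[z][y][x][lab]
                # Visit forward neighbours only, so each grid edge is counted once.
                for dz, dy, dx in ((1, 0, 0), (0, 1, 0), (0, 0, 1)):
                    nz, ny, nx = z + dz, y + dy, x + dx
                    if nz < depth and ny < height and nx < width:
                        # Assumed Potts penalty: pay `smoothness` when labels differ.
                        if labels[nz][ny][nx] != lab:
                            energy += smoothness
    return energy


# Toy 1x1x2 volume with two labels per voxel.
toy_unary = [[[[0.1, 0.9], [0.8, 0.2]]]]
mixed_energy = mrf_energy(toy_unary, [[[0, 1]]])    # best unary per voxel, one label change
uniform_energy = mrf_energy(toy_unary, [[[0, 0]]])  # homogeneous labelling
```

In this toy case the homogeneous labelling has lower energy than the per-voxel best choice, which is the kind of spatial smoothing the pairwise term is meant to encourage.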
