Luke Robinson

Select2Plan: Training-Free ICL-Based Planning through VQA and Memory Retrieval

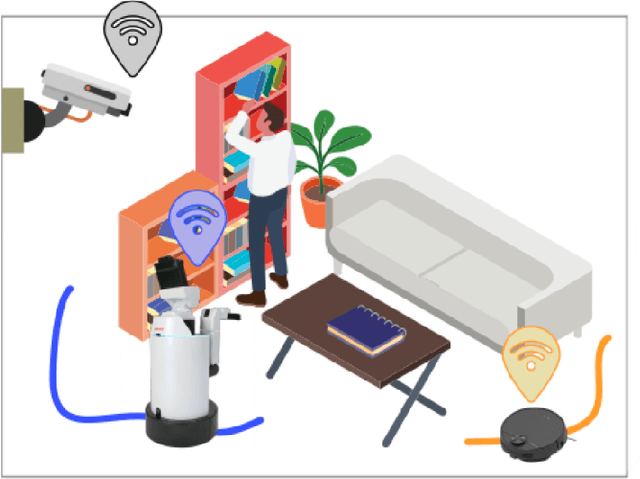

Nov 06, 2024

Abstract:This study explores the potential of off-the-shelf Vision-Language Models (VLMs) for high-level robot planning in the context of autonomous navigation. While most existing learning-based approaches to path planning require extensive task-specific training or fine-tuning, we demonstrate how such training can be avoided in most practical cases. To do this, we introduce Select2Plan (S2P), a novel training-free framework for high-level robot planning which completely eliminates the need for fine-tuning or specialised training. By leveraging structured Visual Question-Answering (VQA) and In-Context Learning (ICL), our approach drastically reduces the need for data collection, requiring a fraction of the task-specific data typically used by trained models, or even relying only on online data. Our method facilitates the effective use of a generally trained VLM in a flexible and cost-efficient way, and requires no sensing beyond a simple monocular camera. We demonstrate its adaptability across various scene types, context sources, and sensing setups. We evaluate our approach in two distinct scenarios: traditional First-Person View (FPV) and infrastructure-driven Third-Person View (TPV) navigation, demonstrating the flexibility and simplicity of our method. Our technique improves the navigational capabilities of a baseline VLM by approximately 50% in the TPV scenario, and is comparable to trained models in the FPV one, with as few as 20 demonstrations.

NeRFoot: Robot-Footprint Estimation for Image-Based Visual Servoing

Aug 02, 2024

Abstract:This paper investigates the utility of Neural Radiance Fields (NeRF) models in extending the regions of operation of a mobile robot controlled by Image-Based Visual Servoing (IBVS) via static CCTV cameras. Using NeRF as a 3D-representation prior, the robot's footprint may be extrapolated geometrically and used to train a CNN-based network to extract it online from the robot's appearance alone. The resulting footprint gives a tighter bound than a robot-wide bounding box, allowing the robot's controller to prescribe more optimal trajectories and expand its safe operational floor area.

OORD: The Oxford Offroad Radar Dataset

Mar 05, 2024

Abstract:There is growing academic interest in, as well as commercial exploitation of, millimetre-wave scanning radar for autonomous vehicle localisation and scene understanding. Although several datasets to support this research area have been released, they are primarily focused on urban or semi-urban environments. Nevertheless, rugged offroad deployments are important application areas which also present unique challenges and opportunities for this sensor technology. Therefore, the Oxford Offroad Radar Dataset (OORD) presents data collected in the rugged Scottish highlands in extreme weather. The radar data we offer to the community are accompanied by GPS/INS reference to further stimulate research in radar place recognition. In total we release over 90 GiB of radar scans as well as GPS and IMU readings by driving a diverse set of four routes over 11 forays, totalling approximately 154 km of rugged driving. This is an area increasingly explored in the literature, and we therefore present and release examples of recent open-sourced radar place recognition systems and their performance on our dataset. This includes a learned neural network, the weights of which we also release. The data and tools are made freely available to the community at https://oxford-robotics-institute.github.io/oord-dataset.

Robot-Relay: Building-Wide, Calibration-Less Visual Servoing with Learned Sensor Handover Network

Oct 24, 2023

Abstract:We present a system which grows and manages a network of remote viewpoints during the natural installation cycle for a newly installed camera network or a newly deployed robot fleet. No explicit notion of camera position or orientation is required, neither global - i.e. relative to a building plan - nor local - i.e. relative to an interesting point in a room. Furthermore, no metric relationship between viewpoints is required. Instead, we leverage our prior work in effective remote control without extrinsic or intrinsic calibration and extend it to the multi-camera setting. In this, we memorise, from simultaneous robot detections in the tracker thread, soft pixel-wise topological connections between viewpoints. We demonstrate our system with repeated autonomous traversals of workspaces connected by a network of six cameras across a productive office environment.

Visual Servoing on Wheels: Robust Robot Orientation Estimation in Remote Viewpoint Control

Jun 26, 2023

Abstract:This work proposes a fast deployment pipeline for visually-servoed robots which does not assume anything about either the robot - e.g. size, colour or the presence of markers - or the deployment environment. In this, accurate estimation of robot orientation is crucial for successful navigation in complex environments; manual labelling of angular values is, though, time-consuming and possibly hard to perform. For this reason, we propose a weakly supervised pipeline that can produce a vast amount of data in a small amount of time. We evaluate our approach on a dataset of remote camera images captured in various indoor environments, demonstrating high tracking performance when integrated into a fully-autonomous pipeline with a simple controller. With this, we then analyse the data requirements of our approach, showing how it is possible to deploy a new robot in a new environment in less than 30 min.
