Luke Breitfeller

Multi-Scale Contrastive Co-Training for Event Temporal Relation Extraction

Sep 01, 2022

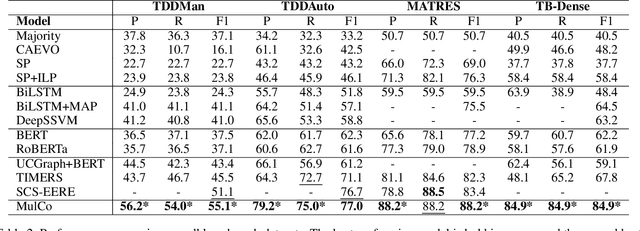

Abstract: Extracting temporal relationships between pairs of events in texts is a crucial yet challenging problem for natural language understanding. Depending on the distance between the events, models must learn to differently balance information from local and global contexts surrounding the event pair for temporal relation prediction. Learning how to fuse this information has proved challenging for transformer-based language models. Therefore, we present MulCo: Multi-Scale Contrastive Co-Training, a technique for the better fusion of local and global contextualized features. Our model uses a BERT-based language model to encode local context and a Graph Neural Network (GNN) to represent global document-level syntactic and temporal characteristics. Unlike previous state-of-the-art methods, which use simple concatenation on multi-view features or select optimal sentences using sophisticated reinforcement learning approaches, our model co-trains GNN and BERT modules using a multi-scale contrastive learning objective. The GNN and BERT modules learn a synergistic parameterization by contrasting GNN multi-layer multi-hop subgraphs (i.e., global context embeddings) and BERT outputs (i.e., local context embeddings) through end-to-end back-propagation. We empirically demonstrate that MulCo provides improved ability to fuse local and global contexts encoded using BERT and GNN compared to the current state-of-the-art. Our experimental results show that MulCo achieves new state-of-the-art results on several temporal relation extraction datasets.

STAGE: Tool for Automated Extraction of Semantic Time Cues to Enrich Neural Temporal Ordering Models

May 15, 2021

Abstract: Despite achieving state-of-the-art accuracy on temporal ordering of events, neural models showcase significant gaps in performance. Our work seeks to fill one of these gaps by leveraging an under-explored dimension of textual semantics: rich semantic information provided by explicit textual time cues. We develop STAGE, a system that consists of a novel temporal framework and a parser that can automatically extract time cues and convert them into representations suitable for integration with neural models. We demonstrate the utility of extracted cues by integrating them with an event ordering model using a joint BiLSTM and ILP constraint architecture. We outline the functionality of the 3-part STAGE processing approach, and show two methods of integrating its representations with the BiLSTM-ILP model: (i) incorporating semantic cues as additional features, and (ii) generating new constraints from semantic cues to be enforced in the ILP. We demonstrate promising results on two event ordering datasets, and highlight important issues in semantic cue representation and integration for future research.

Time-series Insights into the Process of Passing or Failing Online University Courses using Neural-Induced Interpretable Student States

May 01, 2019

Abstract: This paper addresses a key challenge in Educational Data Mining, namely to model student behavioral trajectories in order to provide a means for identifying students most at-risk, with the goal of providing supportive interventions. While many forms of data including clickstream data or data from sensors have been used extensively in time series models for such purposes, in this paper we explore the use of textual data, which is sometimes available in the records of students at large, online universities. We propose a time series model that constructs an evolving student state representation using both clickstream data and a signal extracted from the textual notes recorded by human mentors assigned to each student. We explore how the addition of this textual data improves both the predictive power of student states for the purpose of identifying students at risk for course failure as well as for providing interpretable insights about student course engagement processes.
