Luis Baumela

Universidad Politécnica de Madrid

Pose-guided multi-task video transformer for driver action recognition

Jul 18, 2024Abstract:We investigate the task of identifying situations of distracted driving through analysis of in-car videos. To tackle this challenge we introduce a multi-task video transformer that predicts both distracted actions and driver pose. Leveraging VideoMAEv2, a large pre-trained architecture, our approach incorporates semantic information from human keypoint locations to enhance action recognition and decrease computational overhead by minimizing the number of spatio-temporal tokens. By guiding token selection with pose and class information, we notably reduce the model's computational requirements while preserving the baseline accuracy. Our model surpasses existing state-of-the art results in driver action recognition while exhibiting superior efficiency compared to current video transformer-based approaches.

BEBLID: Boosted efficient binary local image descriptor

Feb 07, 2024Abstract:Efficient matching of local image features is a fundamental task in many computer vision applications. However, the real-time performance of top matching algorithms is compromised in computationally limited devices, such as mobile phones or drones, due to the simplicity of their hardware and their finite energy supply. In this paper we introduce BEBLID, an efficient learned binary image descriptor. It improves our previous real-valued descriptor, BELID, making it both more efficient for matching and more accurate. To this end we use AdaBoost with an improved weak-learner training scheme that produces better local descriptions. Further, we binarize our descriptor by forcing all weak-learners to have the same weight in the strong learner combination and train it in an unbalanced data set to address the asymmetries arising in matching and retrieval tasks. In our experiments BEBLID achieves an accuracy close to SIFT and better computational efficiency than ORB, the fastest algorithm in the literature.

BAdaCost: Multi-class Boosting with Costs

Feb 06, 2024

Abstract:We present BAdaCost, a multi-class cost-sensitive classification algorithm. It combines a set of cost-sensitive multi-class weak learners to obtain a strong classification rule within the Boosting framework. To derive the algorithm we introduce CMEL, a Cost-sensitive Multi-class Exponential Loss that generalizes the losses optimized in various classification algorithms such as AdaBoost, SAMME, Cost-sensitive AdaBoost and PIBoost. Hence unifying them under a common theoretical framework. In the experiments performed we prove that BAdaCost achieves significant gains in performance when compared to previous multi-class cost-sensitive approaches. The advantages of the proposed algorithm in asymmetric multi-class classification are also evaluated in practical multi-view face and car detection problems.

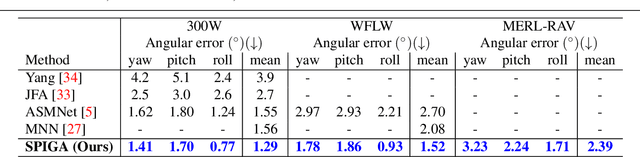

On the representation and methodology for wide and short range head pose estimation

Jan 11, 2024Abstract:Head pose estimation (HPE) is a problem of interest in computer vision to improve the performance of face processing tasks in semi-frontal or profile settings. Recent applications require the analysis of faces in the full 360{\deg} rotation range. Traditional approaches to solve the semi-frontal and profile cases are not directly amenable for the full rotation case. In this paper we analyze the methodology for short- and wide-range HPE and discuss which representations and metrics are adequate for each case. We show that the popular Euler angles representation is a good choice for short-range HPE, but not at extreme rotations. However, the Euler angles' gimbal lock problem prevents them from being used as a valid metric in any setting. We also revisit the current cross-data set evaluation methodology and note that the lack of alignment between the reference systems of the training and test data sets negatively biases the results of all articles in the literature. We introduce a procedure to quantify this misalignment and a new methodology for cross-data set HPE that establishes new, more accurate, SOTA for the 300W-LP|Biwi benchmark. We also propose a generalization of the geodesic angular distance metric that enables the construction of a loss that controls the contribution of each training sample to the optimization of the model. Finally, we introduce a wide range HPE benchmark based on the CMU Panoptic data set.

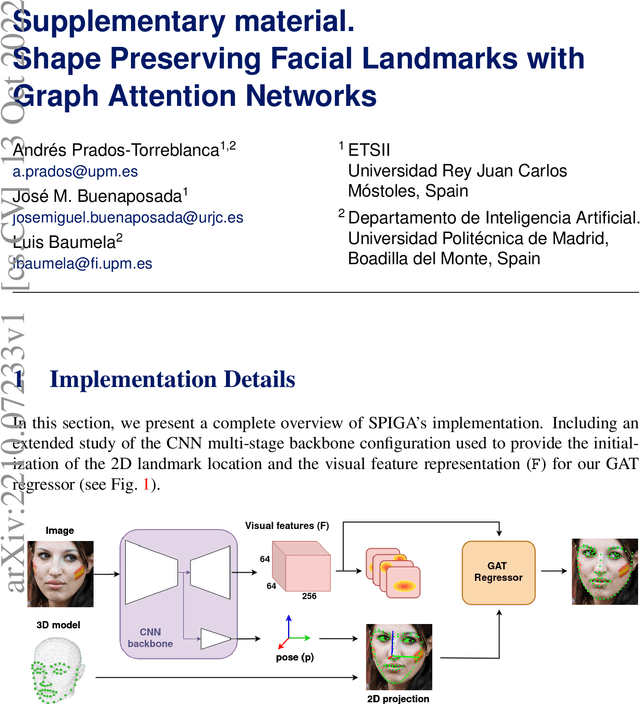

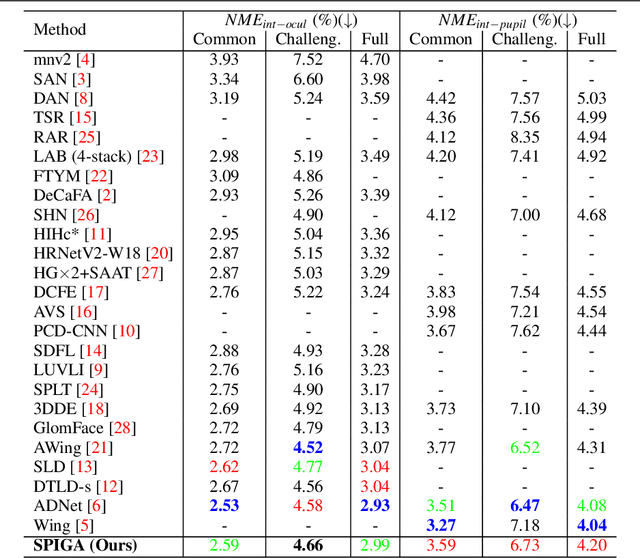

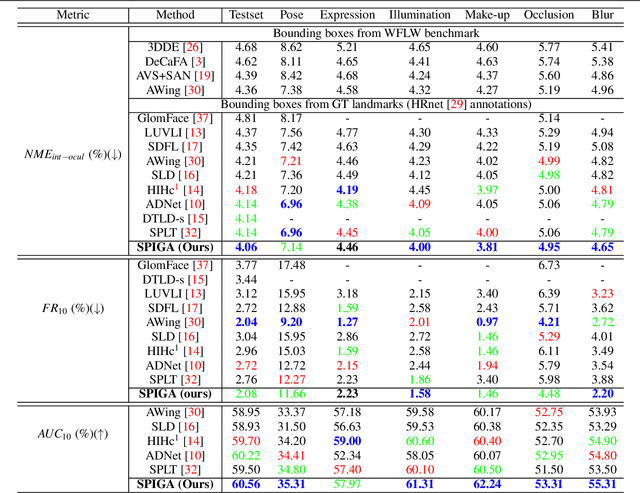

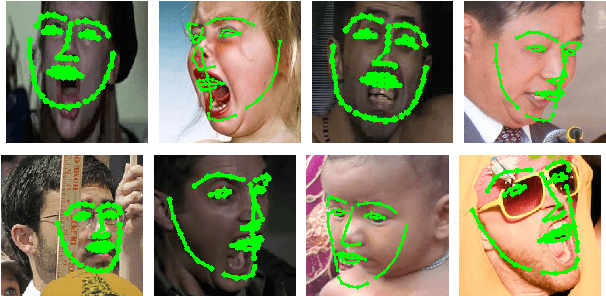

Shape Preserving Facial Landmarks with Graph Attention Networks

Oct 13, 2022

Abstract:Top-performing landmark estimation algorithms are based on exploiting the excellent ability of large convolutional neural networks (CNNs) to represent local appearance. However, it is well known that they can only learn weak spatial relationships. To address this problem, we propose a model based on the combination of a CNN with a cascade of Graph Attention Network regressors. To this end, we introduce an encoding that jointly represents the appearance and location of facial landmarks and an attention mechanism to weigh the information according to its reliability. This is combined with a multi-task approach to initialize the location of graph nodes and a coarse-to-fine landmark description scheme. Our experiments confirm that the proposed model learns a global representation of the structure of the face, achieving top performance in popular benchmarks on head pose and landmark estimation. The improvement provided by our model is most significant in situations involving large changes in the local appearance of landmarks.

Multi-task head pose estimation in-the-wild

Feb 04, 2022

Abstract:We present a deep learning-based multi-task approach for head pose estimation in images. We contribute with a network architecture and training strategy that harness the strong dependencies among face pose, alignment and visibility, to produce a top performing model for all three tasks. Our architecture is an encoder-decoder CNN with residual blocks and lateral skip connections. We show that the combination of head pose estimation and landmark-based face alignment significantly improve the performance of the former task. Further, the location of the pose task at the bottleneck layer, at the end of the encoder, and that of tasks depending on spatial information, such as visibility and alignment, in the final decoder layer, also contribute to increase the final performance. In the experiments conducted the proposed model outperforms the state-of-the-art in the face pose and visibility tasks. By including a final landmark regression step it also produces face alignment results on par with the state-of-the-art.

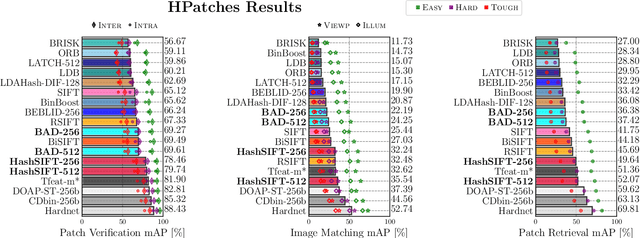

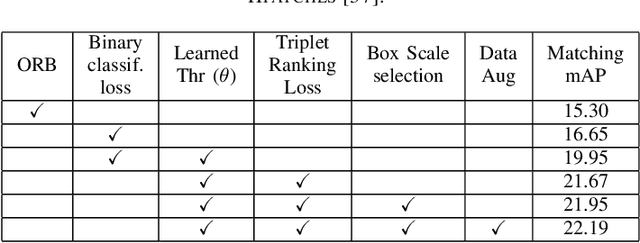

Revisiting Binary Local Image Description for Resource Limited Devices

Aug 18, 2021

Abstract:The advent of a panoply of resource limited devices opens up new challenges in the design of computer vision algorithms with a clear compromise between accuracy and computational requirements. In this paper we present new binary image descriptors that emerge from the application of triplet ranking loss, hard negative mining and anchor swapping to traditional features based on pixel differences and image gradients. These descriptors, BAD (Box Average Difference) and HashSIFT, establish new operating points in the state-of-the-art's accuracy vs.\ resources trade-off curve. In our experiments we evaluate the accuracy, execution time and energy consumption of the proposed descriptors. We show that BAD bears the fastest descriptor implementation in the literature while HashSIFT approaches in accuracy that of the top deep learning-based descriptors, being computationally more efficient. We have made the source code public.

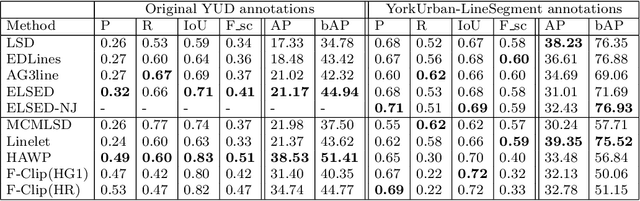

ELSED: Enhanced Line SEgment Drawing

Aug 06, 2021

Abstract:Detecting local features, such as corners, segments or blobs, is the first step in the pipeline of many Computer Vision applications. Its speed is crucial for real time applications. In this paper we present ELSED, the fastest line segment detector in the literature. The key for its efficiency is a local segment growing algorithm that connects gradient aligned pixels in presence of small discontinuities. The proposed algorithm not only runs in devices with very low end hardware, but may also be parametrized to foster the detection of short or longer segments, depending on the task at hand. We also introduce new metrics to evaluate the accuracy and repeatability of segment detectors. In our experiments with different public benchmarks we prove that our method is the most efficient in the literature and quantify the accuracy traded for such gain.

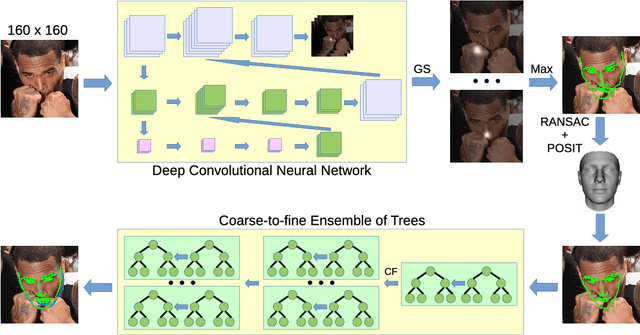

Face Alignment using a 3D Deeply-initialized Ensemble of Regression Trees

Feb 05, 2019

Abstract:Face alignment algorithms locate a set of landmark points in images of faces taken in unrestricted situations. State-of-the-art approaches typically fail or lose accuracy in the presence of occlusions, strong deformations, large pose variations and ambiguous configurations. In this paper we present 3DDE, a robust and efficient face alignment algorithm based on a coarse-to-fine cascade of ensembles of regression trees. It is initialized by robustly fitting a 3D face model to the probability maps produced by a convolutional neural network. With this initialization we address self-occlusions and large face rotations. Further, the regressor implicitly imposes a prior face shape on the solution, addressing occlusions and ambiguous face configurations. Its coarse-to-fine structure tackles the combinatorial explosion of parts deformation. In the experiments performed, 3DDE improves the state-of-the-art in 300W, COFW, AFLW and WFLW data sets. Finally, given that 3DDE can also be trained with missing and occluded landmarks, we have been able to perform cross-dataset experiments that reveal the existence of a significant data set bias in these benchmarks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge