Luís Sarmento

Equivariant neural networks for recovery of Hadamard matrices

Jan 31, 2022

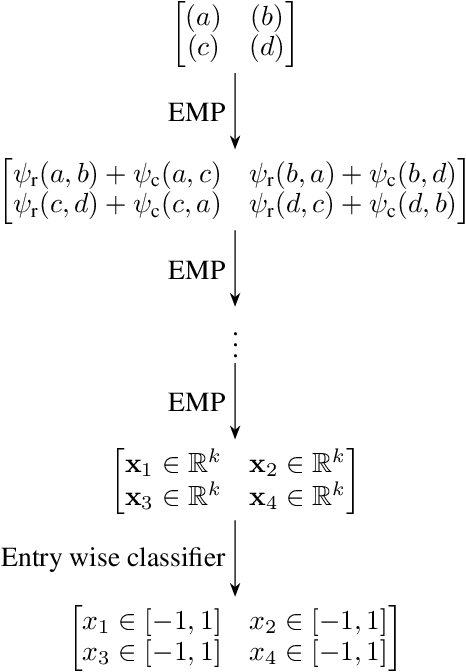

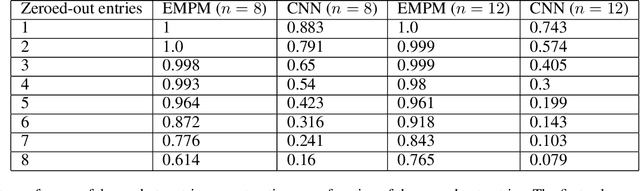

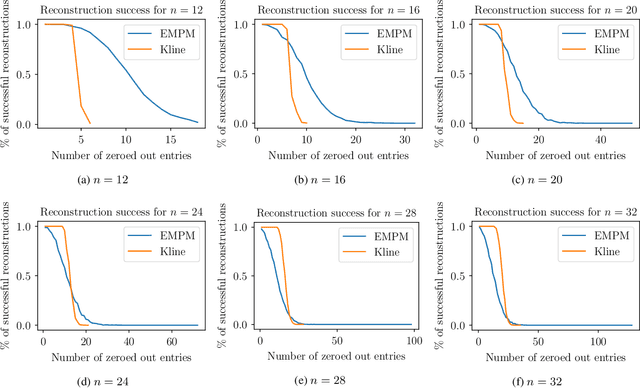

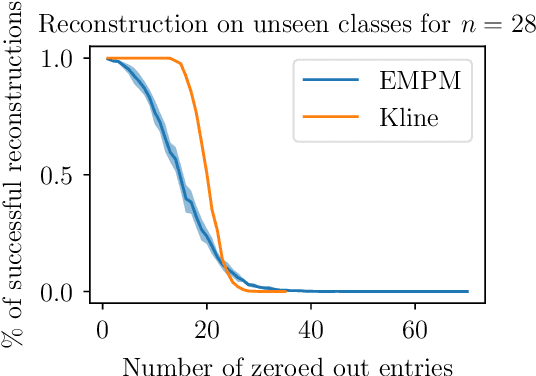

Abstract:We propose a message passing neural network architecture designed to be equivariant to column and row permutations of a matrix. We illustrate its advantages over traditional architectures like multi-layer perceptrons (MLPs), convolutional neural networks (CNNs) and even Transformers, on the combinatorial optimization task of recovering a set of deleted entries of a Hadamard matrix. We argue that this is a powerful application of the principles of Geometric Deep Learning to fundamental mathematics, and a potential stepping stone toward more insights on the Hadamard conjecture using Machine Learning techniques.

Learning Word Embeddings from the Portuguese Twitter Stream: A Study of some Practical Aspects

Sep 04, 2017

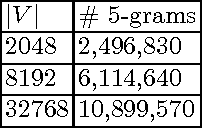

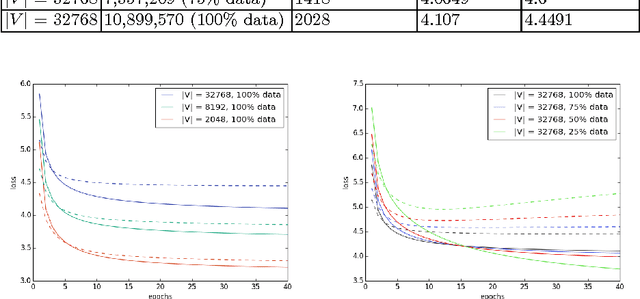

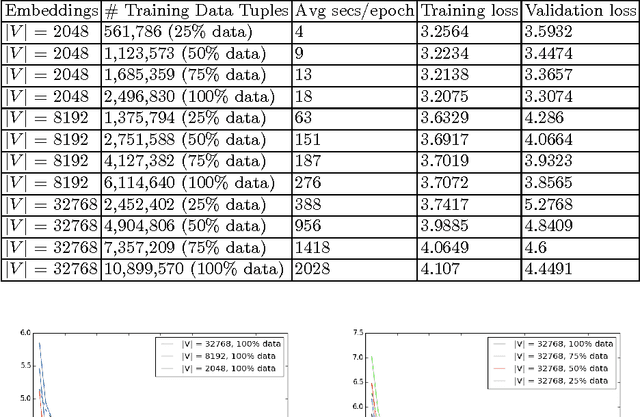

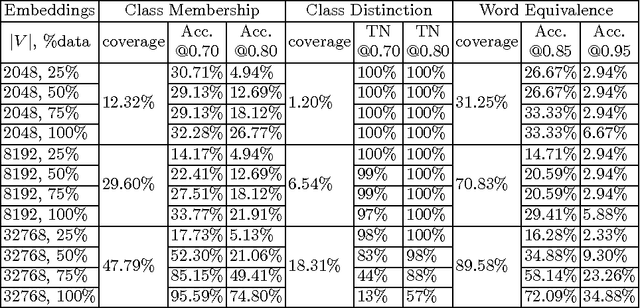

Abstract:This paper describes a preliminary study for producing and distributing a large-scale database of embeddings from the Portuguese Twitter stream. We start by experimenting with a relatively small sample and focusing on three challenges: volume of training data, vocabulary size and intrinsic evaluation metrics. Using a single GPU, we were able to scale up vocabulary size from 2048 words embedded and 500K training examples to 32768 words over 10M training examples while keeping a stable validation loss and approximately linear trend on training time per epoch. We also observed that using less than 50\% of the available training examples for each vocabulary size might result in overfitting. Results on intrinsic evaluation show promising performance for a vocabulary size of 32768 words. Nevertheless, intrinsic evaluation metrics suffer from over-sensitivity to their corresponding cosine similarity thresholds, indicating that a wider range of metrics need to be developed to track progress.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge