Lorenzo Panchetti

TEAM: a parameter-free algorithm to teach collaborative robots motions from user demonstrations

Sep 14, 2022

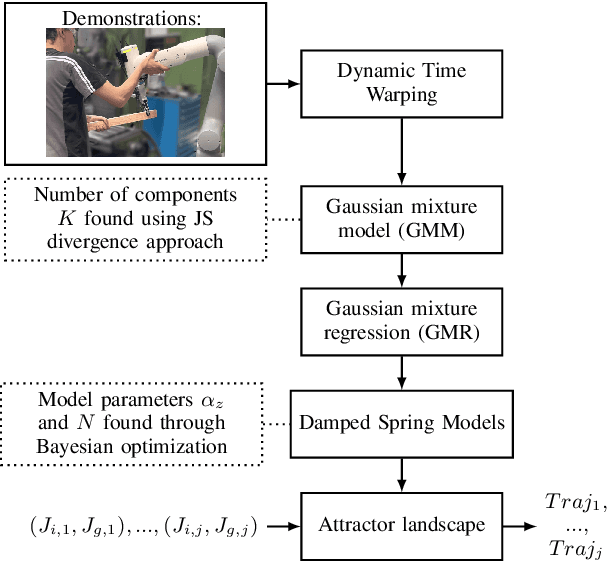

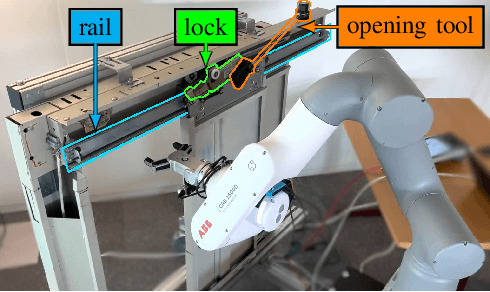

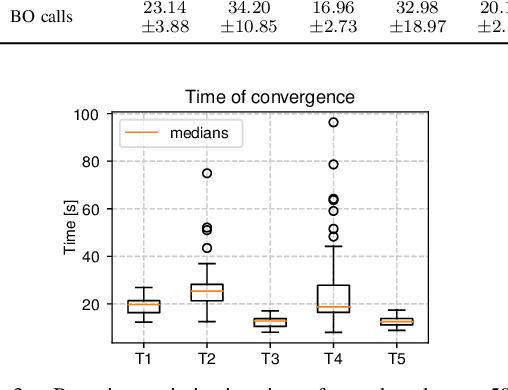

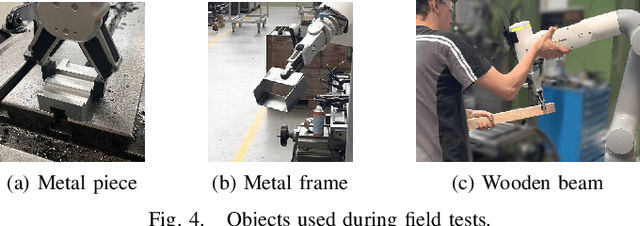

Abstract: Collaborative robots (cobots) built to work alongside humans must be able to quickly learn new skills and adapt to new task configurations. Learning from demonstration (LfD) enables cobots to learn and adapt motions to different use conditions. However, state-of-the-art LfD methods require manually tuning intrinsic parameters and have rarely been used in industrial contexts without experts. In this paper, the development and implementation of an LfD framework for industrial applications with naive users is presented. We propose a parameter-free method based on probabilistic movement primitives, where all the parameters are pre-determined using Jensen-Shannon divergence and Bayesian optimization; thus, users do not have to perform manual parameter tuning. This method learns motions from a small dataset of user demonstrations and generalizes the motion to various scenarios and conditions. We evaluate the method extensively in two field tests: one where the cobot works on elevator door maintenance, and one where three Schindler workers teach the cobot tasks useful for their workflow. Errors between the cobot end-effector and target positions range from $0$ to $1.48 \pm 0.35$ mm. For all tests, no task failures were reported. Questionnaires completed by the Schindler workers highlighted the method's ease of use, feeling of safety, and the accuracy of the reproduced motion. Our code and recorded trajectories are made available online for reproduction.
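As a rough illustration of the probabilistic movement primitive (ProMP) idea the abstract builds on, the sketch below fits a Gaussian over basis-function weights from a few one-dimensional demonstrations and then conditions it on a new target position. It is a generic textbook-style ProMP, not the TEAM implementation: the function names, the Gaussian basis, and the fixed values of `n_basis`, `width`, `ridge`, and `obs_var` are all assumptions chosen for illustration, and they are exactly the kind of parameters the paper says it determines automatically.

```python
import numpy as np

def rbf_features(t, n_basis=10, width=0.02):
    """Normalized Gaussian basis functions evaluated at phases t in [0, 1].

    n_basis and width are illustrative, hand-picked values (assumption).
    """
    centers = np.linspace(0.0, 1.0, n_basis)
    phi = np.exp(-0.5 * (t[:, None] - centers[None, :]) ** 2 / width)
    return phi / phi.sum(axis=1, keepdims=True)

def fit_promp(demos, n_basis=10, ridge=1e-6):
    """Fit one weight vector per demonstration, then a Gaussian over weights."""
    weights = []
    for y in demos:
        t = np.linspace(0.0, 1.0, len(y))
        phi = rbf_features(t, n_basis)
        # Ridge-regularized least squares: y ~ phi @ w
        w = np.linalg.solve(phi.T @ phi + ridge * np.eye(n_basis), phi.T @ y)
        weights.append(w)
    W = np.array(weights)
    mu = W.mean(axis=0)
    # Small diagonal term keeps the covariance positive definite
    sigma = np.cov(W, rowvar=False) + ridge * np.eye(n_basis)
    return mu, sigma

def condition_on_point(mu, sigma, t_star, y_star, obs_var=1e-8):
    """Condition the weight distribution on reaching y_star at phase t_star."""
    phi = rbf_features(np.array([t_star]), len(mu))[0]
    gain = sigma @ phi / (obs_var + phi @ sigma @ phi)
    mu_new = mu + gain * (y_star - phi @ mu)
    sigma_new = sigma - np.outer(gain, phi @ sigma)
    return mu_new, sigma_new

# Five synthetic demonstrations that end at slightly different positions
t = np.linspace(0.0, 1.0, 100)
demos = [g * t + 0.1 * np.sin(np.pi * t) for g in (0.8, 0.9, 1.0, 1.1, 1.2)]

mu, sigma = fit_promp(demos)
mu_c, sigma_c = condition_on_point(mu, sigma, t_star=1.0, y_star=1.05)
adapted = rbf_features(t) @ mu_c  # mean trajectory adapted to the new target
```

Conditioning in weight space is what lets a handful of demonstrations generalize to a target position that was never demonstrated; the variation across demonstrations determines how the rest of the trajectory bends to reach it.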

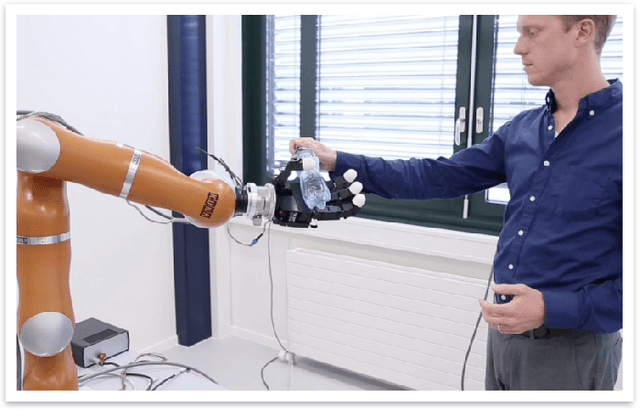

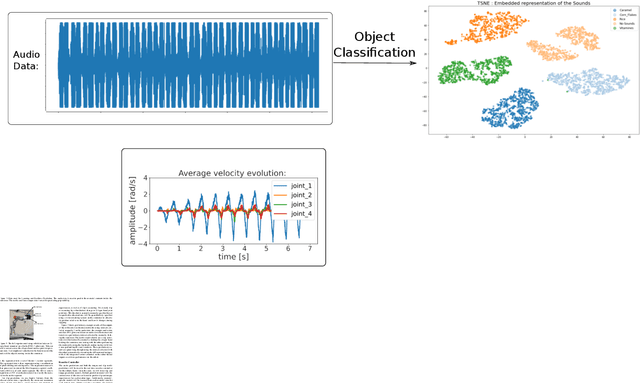

Multimodal Sensory Learning for Real-time, Adaptive Manipulation

Oct 09, 2021

Abstract: Adaptive control for real-time manipulation requires quick estimation and prediction of object properties. While robot learning in this area primarily focuses on using vision, many tasks cannot rely on vision due to object occlusion. Here, we formulate a learning framework that uses multimodal sensory fusion of tactile and audio data to quickly characterize and predict an object's properties. The predictions are used in a reactive controller, developed for this purpose, that adapts the grip on the object to compensate for the predicted inertial forces experienced during motion. Drawing inspiration from how humans interact with objects, we propose an experimental setup from which we can understand how best to utilize different sensory signals and how to actively interact with and manipulate objects to quickly learn their properties for safe manipulation.
