Lorenzo Masia

Point Cloud-based Grasping for Soft Hand Exoskeleton

Apr 04, 2025

Abstract:Grasping is a fundamental skill for interacting with and manipulating objects in the environment. However, this ability can be challenging for individuals with hand impairments. Soft hand exoskeletons designed to assist grasping can enhance or restore essential hand functions, yet controlling these soft exoskeletons to support users effectively remains difficult due to the complexity of understanding the environment. This study presents a vision-based predictive control framework that leverages contextual awareness from depth perception to predict the grasping target and determine the next control state for activation. Unlike data-driven approaches that require extensive labelled datasets and struggle with generalizability, our method is grounded in geometric modelling, enabling robust adaptation across diverse grasping scenarios. The Grasping Ability Score (GAS) was used to evaluate performance, with our system achieving a state-of-the-art GAS of 91% across 15 objects and healthy participants, demonstrating its effectiveness across different object types. The proposed approach maintained reconstruction success for unseen objects, underscoring its enhanced generalizability compared to learning-based models.

Environment-based Assistance Modulation for a Hip Exosuit via Computer Vision

Nov 28, 2022

Abstract:Just as vision plays a fundamental role in guiding adaptive locomotion in humans, computer vision may bring substantial improvements to the control strategy of a walking assistive technology by enabling environment-based assistance modulation. In this work, we developed a hip exosuit controller able to distinguish among three different walking terrains through the use of an RGB camera and to adapt the assistance accordingly. The system was tested with seven healthy participants walking along an overground path comprising staircases and level ground. Subjects performed the task with the exosuit disabled (Exo Off), with a constant assistance profile (Vision Off), and with assistance modulation (Vision On). Our results showed that the controller was able to classify the path in front of the user in real time, with a per-class accuracy above 85%, and to modulate assistance accordingly. Evaluation of the effects on the user showed that Vision On outperformed the other two conditions: we obtained significantly higher metabolic savings than Exo Off, with a peak of about -20% when climbing the staircase and about -16% over the whole path, and significantly higher savings than Vision Off when ascending or descending stairs. Such advancements may represent a step forward for the use of lightweight walking assistive technologies in real-life scenarios.

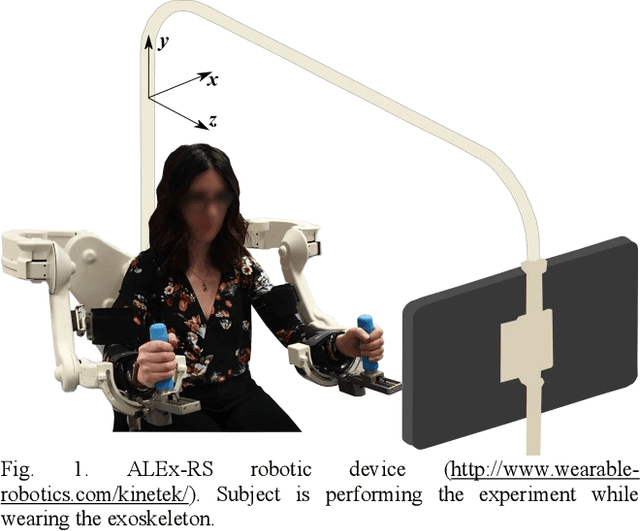

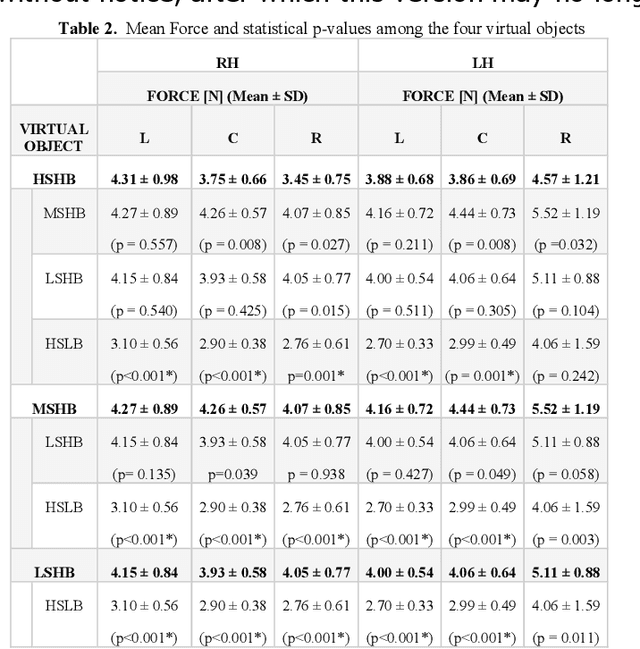

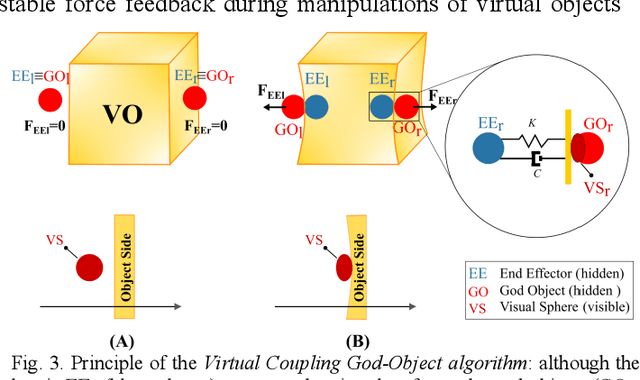

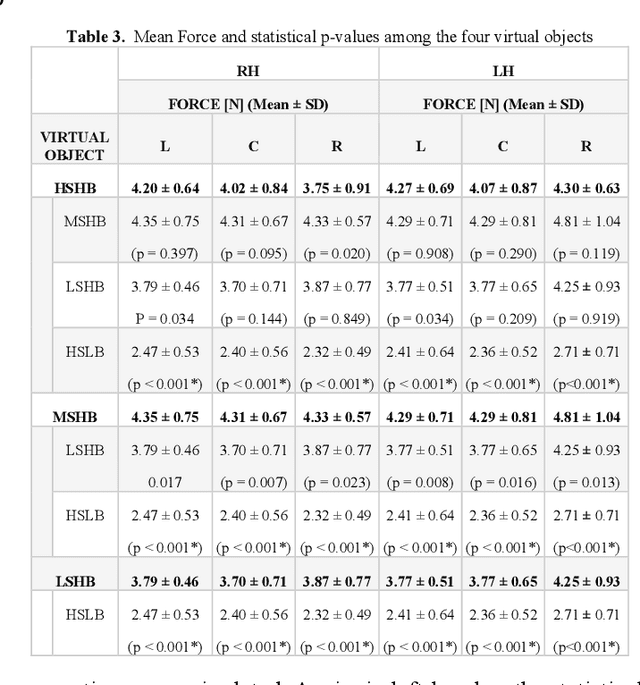

Bimanual Motor Strategies and Handedness Role During Human-Exoskeleton Haptic Interaction

Nov 22, 2022

Abstract:Bimanual object manipulation involves multiple visuo-haptic sensory feedback signals arising from the interaction with the environment, which are managed by the central nervous system and consequently translated into motor commands. The kinematic strategies that occur during bimanual coupled tasks are still a matter of scientific debate despite modern advances in haptics and robotics. Current technologies may be able to provide realistic scenarios involving the entire upper limbs during multi-joint movements, but are not yet exploited to their full potential. The present study explores how hands dynamically interact when manipulating a shared object through the use of two impedance-controlled exoskeletons programmed to simulate bimanually coupled manipulation of virtual objects. We enrolled twenty-six participants (2 groups: right-handed and left-handed) who were requested to use both hands to grab simulated objects across the robot workspace and place them in specific locations. The virtual objects were rendered with different dynamic properties and textures influencing the manipulation strategies used to complete the tasks. Results revealed that the roles of the hands are related to the movement direction, the haptic features, and the handedness preference. Outcomes suggested that the haptic feedback affects bimanual strategies depending on the movement direction. However, left-handers show better control of the force applied between the two hands, probably due to environmental pressures for right-handed manipulations.

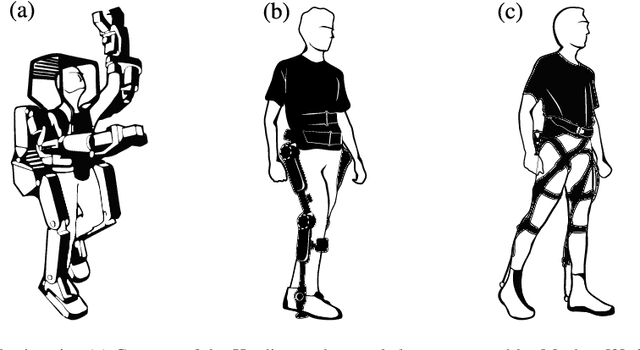

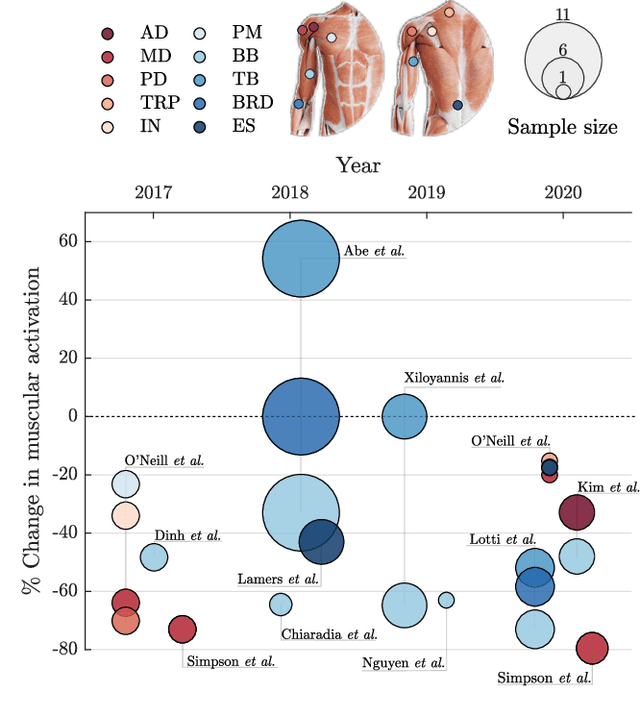

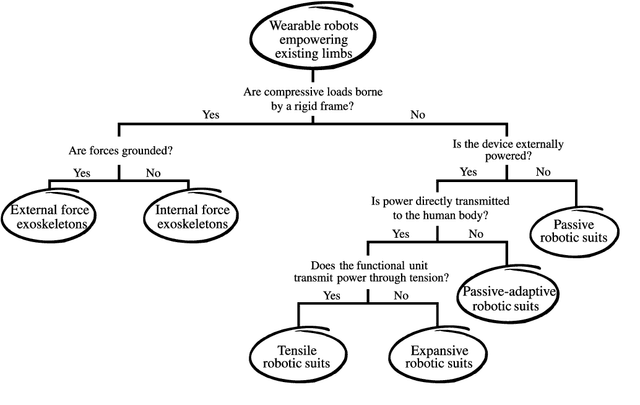

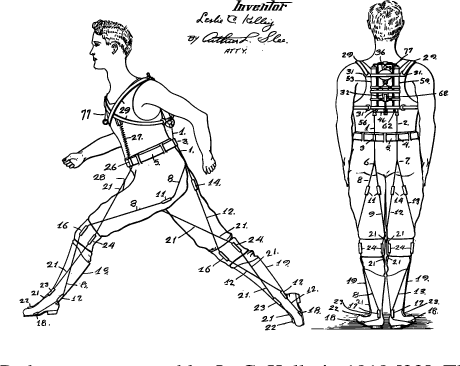

Soft robotic suits: State of the art, core technologies and open challenges

May 30, 2021

Abstract:Wearable robots are undergoing a disruptive transition, from the rigid machines that populated the science-fiction world in the early eighties to lightweight robotic apparel, hardly distinguishable from our daily clothes. In less than a decade of development, soft robotic suits have achieved important results in human motor assistance and augmentation. In this paper, we start by giving a definition of soft robotic suits and proposing a taxonomy to classify existing systems. We then critically review the modes of actuation, the physical human-robot interface and the intention-detection strategies of state-of-the-art soft robotic suits, highlighting the advantages and limitations of different approaches. Finally, we discuss the impact of this new technology on human movements, for both augmenting human function and supporting motor impairments, and identify areas that are in need of further development.

* Accepted as a Survey Paper in IEEE Transactions on Robotics
