Longjie Hu

Understanding the Complexity and Its Impact on Testing in ML-Enabled Systems

Jan 10, 2023

Abstract:Machine learning (ML) enabled systems are emerging with recent breakthroughs in ML. A model-centric view is widely taken by the literature to focus only on the analysis of ML models. However, only a small body of work takes a system view that looks at how ML components work with the system and how they affect software engineering for MLenabled systems. In this paper, we adopt this system view, and conduct a case study on Rasa 3.0, an industrial dialogue system that has been widely adopted by various companies around the world. Our goal is to characterize the complexity of such a largescale ML-enabled system and to understand the impact of the complexity on testing. Our study reveals practical implications for software engineering for ML-enabled systems.

Characterizing Performance Bugs in Deep Learning Systems

Dec 03, 2021

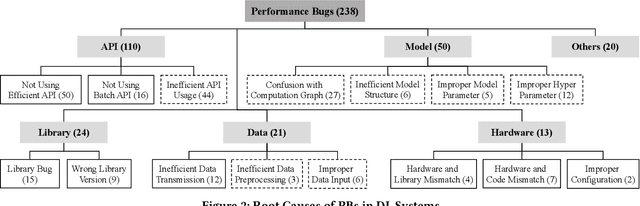

Abstract:Deep learning (DL) has been increasingly applied to a variety of domains. The programming paradigm shift from traditional systems to DL systems poses unique challenges in engineering DL systems. Performance is one of the challenges, and performance bugs(PBs) in DL systems can cause severe consequences such as excessive resource consumption and financial loss. While bugs in DL systems have been extensively investigated, PBs in DL systems have hardly been explored. To bridge this gap, we present the first comprehensive study to characterize symptoms, root causes, and introducing and exposing stages of PBs in DL systems developed in TensorFLow and Keras, with a total of 238 PBs collected from 225 StackOverflow posts. Our findings shed light on the implications on developing high performance DL systems, and detecting and localizing PBs in DL systems. We also build the first benchmark of 56 PBs in DL systems, and assess the capability of existing approaches in tackling them. Moreover, we develop a static checker DeepPerf to detect three types of PBs, and identify 488 new PBs in 130 GitHub projects.62 and 18 of them have been respectively confirmed and fixed by developers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge