Ling Tong

MultiNet with Transformers: A Model for Cancer Diagnosis Using Images

Jan 21, 2023

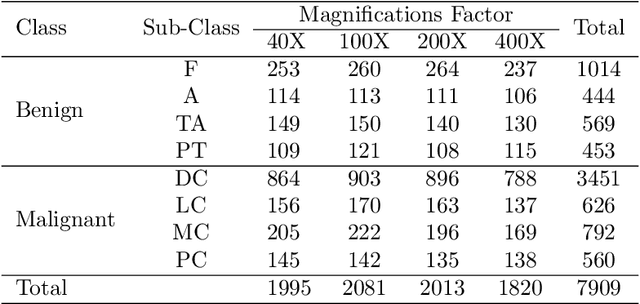

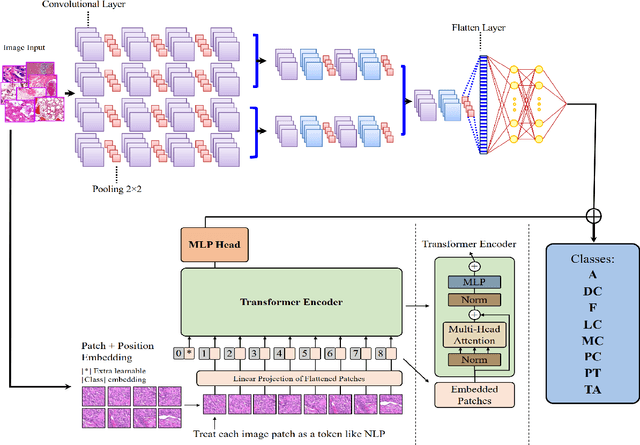

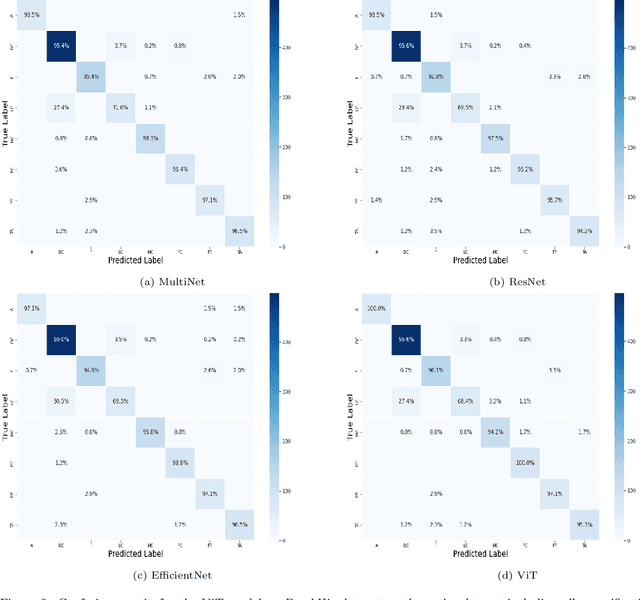

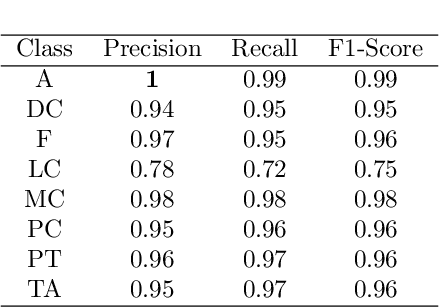

Abstract:Cancer is a leading cause of death in many countries. An early diagnosis of cancer based on biomedical imaging ensures effective treatment and a better prognosis. However, biomedical imaging presents challenges to both clinical institutions and researchers. Physiological anomalies are often characterized by slight abnormalities in individual cells or tissues, making them difficult to detect visually. Traditionally, anomalies are diagnosed by radiologists and pathologists with extensive training. This procedure, however, demands the participation of professionals and incurs a substantial cost. The cost makes large-scale biological image classification impractical. In this study, we provide unique deep neural network designs for multiclass classification of medical images, in particular cancer images. We incorporated transformers into a multiclass framework to take advantage of data-gathering capability and perform more accurate classifications. We evaluated models on publicly accessible datasets using various measures to ensure the reliability of the models. Extensive assessment metrics suggest this method can be used for a multitude of classification tasks.

A Deep Learning Study on Osteosarcoma Detection from Histological Images

Nov 02, 2020

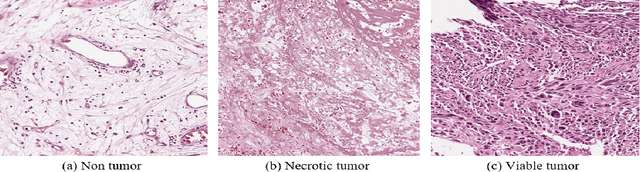

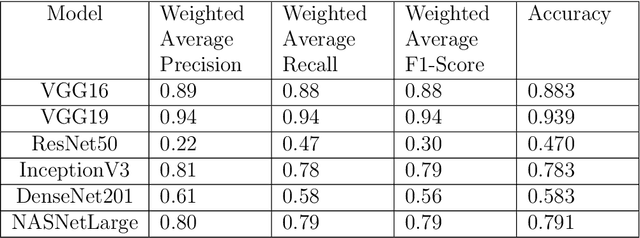

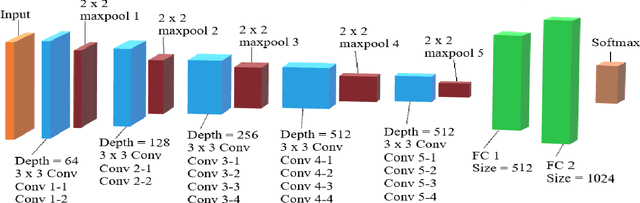

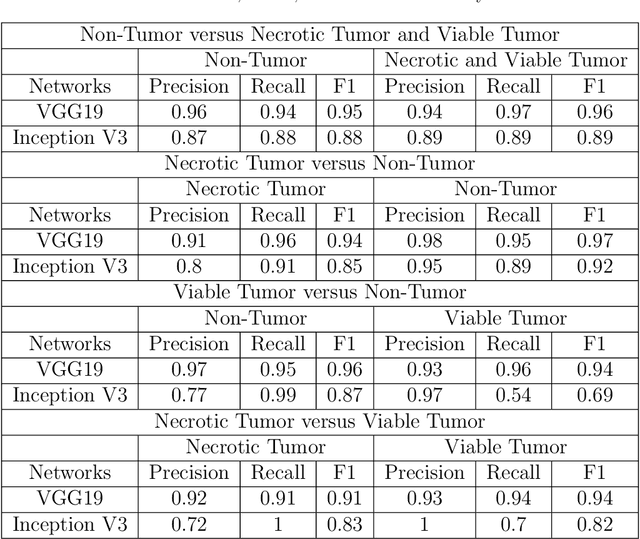

Abstract:In the U.S, 5-10\% of new pediatric cases of cancer are primary bone tumors. The most common type of primary malignant bone tumor is osteosarcoma. The intention of the present work is to improve the detection and diagnosis of osteosarcoma using computer-aided detection (CAD) and diagnosis (CADx). Such tools as convolutional neural networks (CNNs) can significantly decrease the surgeon's workload and make a better prognosis of patient conditions. CNNs need to be trained on a large amount of data in order to achieve a more trustworthy performance. In this study, transfer learning techniques, pre-trained CNNs, are adapted to a public dataset on osteosarcoma histological images to detect necrotic images from non-necrotic and healthy tissues. First, the dataset was preprocessed, and different classifications are applied. Then, Transfer learning models including VGG19 and Inception V3 are used and trained on Whole Slide Images (WSI) with no patches, to improve the accuracy of the outputs. Finally, the models are applied to different classification problems, including binary and multi-class classifiers. Experimental results show that the accuracy of the VGG19 has the highest, 96\%, performance amongst all binary classes and multiclass classification. Our fine-tuned model demonstrates state-of-the-art performance on detecting malignancy of Osteosarcoma based on histologic images.

Predicting Urban Dispersal Events: A Two-Stage Framework through Deep Survival Analysis on Mobility Data

May 03, 2019

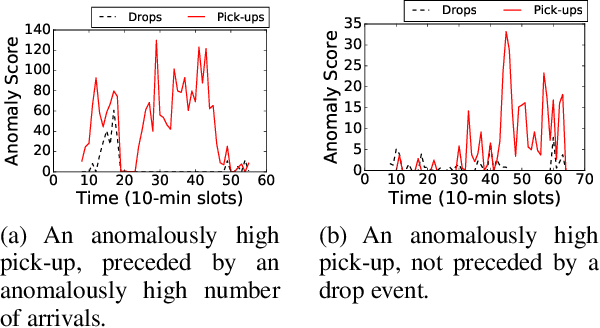

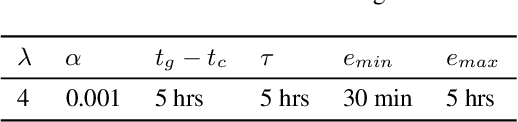

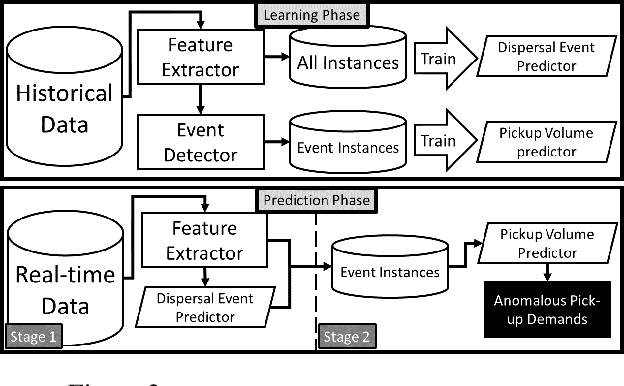

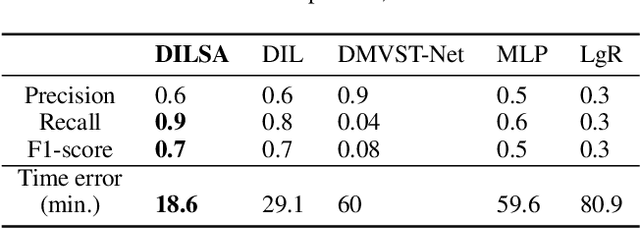

Abstract:Urban dispersal events are processes where an unusually large number of people leave the same area in a short period. Early prediction of dispersal events is important in mitigating congestion and safety risks and making better dispatching decisions for taxi and ride-sharing fleets. Existing work mostly focuses on predicting taxi demand in the near future by learning patterns from historical data. However, they fail in case of abnormality because dispersal events with abnormally high demand are non-repetitive and violate common assumptions such as smoothness in demand change over time. Instead, in this paper we argue that dispersal events follow a complex pattern of trips and other related features in the past, which can be used to predict such events. Therefore, we formulate the dispersal event prediction problem as a survival analysis problem. We propose a two-stage framework (DILSA), where a deep learning model combined with survival analysis is developed to predict the probability of a dispersal event and its demand volume. We conduct extensive case studies and experiments on the NYC Yellow taxi dataset from 2014-2016. Results show that DILSA can predict events in the next 5 hours with F1-score of 0.7 and with average time error of 18 minutes. It is orders of magnitude better than the state-ofthe-art deep learning approaches for taxi demand prediction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge