Liam Clark

Performant, Memory Efficient and Scalable Multi-Agent Reinforcement Learning

Oct 02, 2024

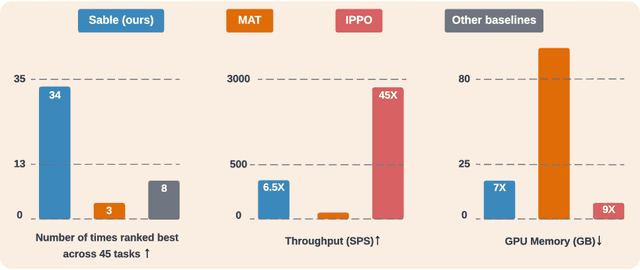

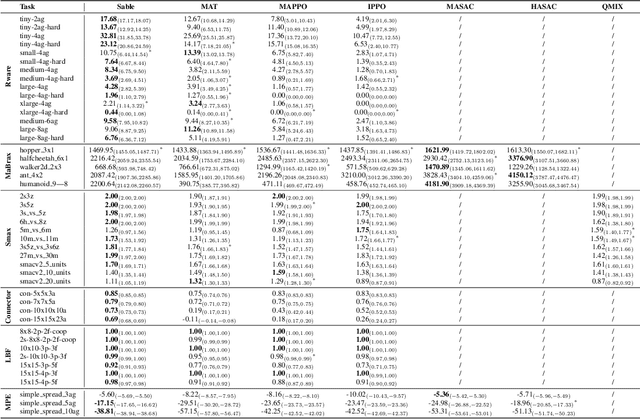

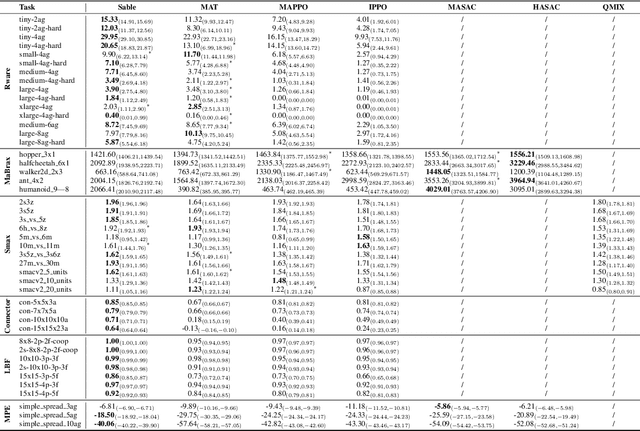

Abstract: As the field of multi-agent reinforcement learning (MARL) progresses towards larger and more complex environments, achieving strong performance while maintaining memory efficiency and scalability to many agents becomes increasingly important. Although recent research has led to several advanced algorithms, to date, none fully address all of these key properties simultaneously. In this work, we introduce Sable, a novel and theoretically sound algorithm that adapts the retention mechanism from Retentive Networks to MARL. Sable's retention-based sequence modelling architecture allows for computationally efficient scaling to a large number of agents, as well as maintaining a long temporal context, making it well-suited for large-scale partially observable environments. Through extensive evaluations across six diverse environments, we demonstrate how Sable is able to significantly outperform existing state-of-the-art methods in the majority of tasks (34 out of 45, roughly 75%). Furthermore, Sable demonstrates stable performance as we scale the number of agents, handling environments with more than a thousand agents while exhibiting a linear increase in memory usage. Finally, we conduct ablation studies to isolate the source of Sable's performance gains and confirm its efficient computational memory usage. Our results highlight Sable's performance and efficiency, positioning it as a leading approach to MARL at scale.

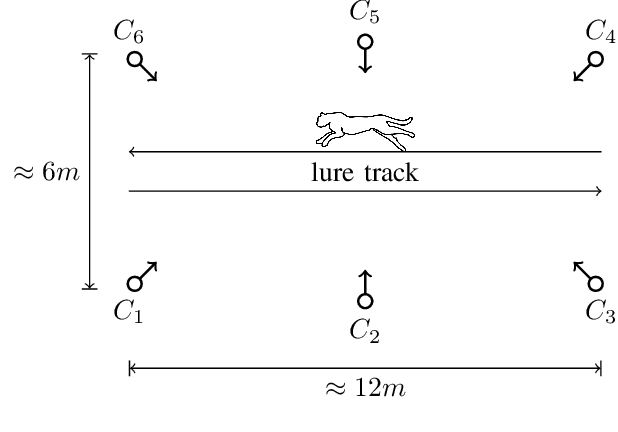

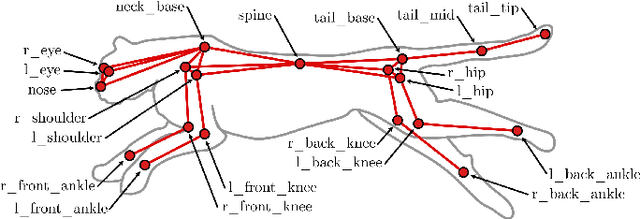

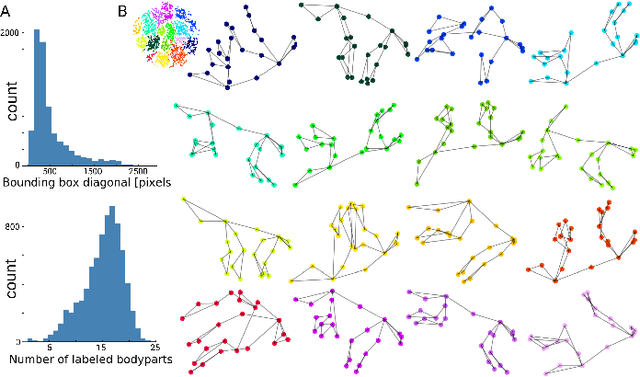

AcinoSet: A 3D Pose Estimation Dataset and Baseline Models for Cheetahs in the Wild

Mar 24, 2021

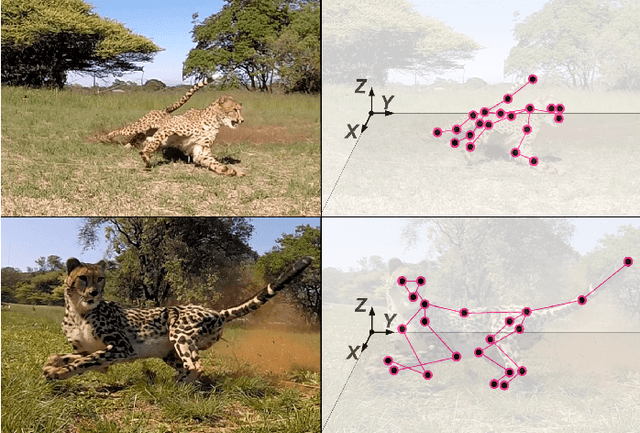

Abstract: Animals are capable of extreme agility, yet understanding their complex dynamics, which have ecological, biomechanical and evolutionary implications, remains challenging. Being able to study this incredible agility will be critical for the development of next-generation autonomous legged robots. In particular, the cheetah (Acinonyx jubatus) is supremely fast and maneuverable, yet quantifying its whole-body 3D kinematic data during locomotion in the wild remains a challenge, even with new deep learning-based methods. In this work we present an extensive dataset of free-running cheetahs in the wild, called AcinoSet, that contains 119,490 frames of multi-view synchronized high-speed video footage, camera calibration files and 7,588 human-annotated frames. We utilize markerless animal pose estimation to provide 2D keypoints. Then, we use three methods that serve as strong baselines for 3D pose estimation tool development: traditional sparse bundle adjustment, an Extended Kalman Filter, and a trajectory optimization-based method we call Full Trajectory Estimation. The resulting 3D trajectories, human-checked 3D ground truth, and an interactive tool to inspect the data are also provided. We believe this dataset will be useful for a diverse range of fields such as ecology, neuroscience, robotics, and biomechanics, as well as computer vision.
