Lazaros Grammatikopoulos

Has Anything Changed? 3D Change Detection by 2D Segmentation Masks

Dec 02, 2023

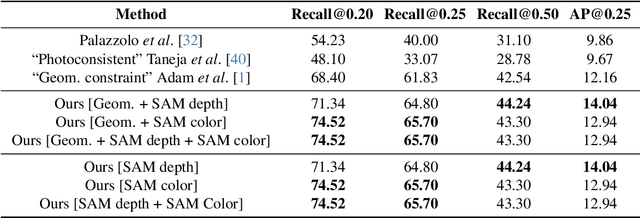

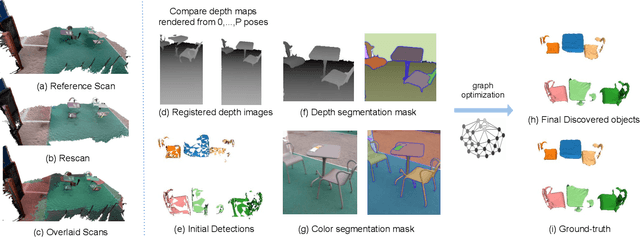

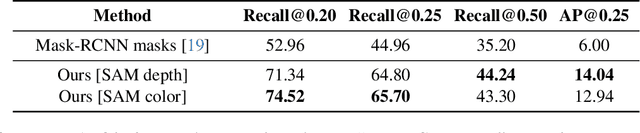

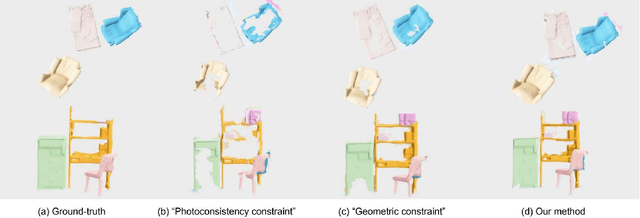

Abstract:As capturing devices become common, 3D scans of interior spaces are acquired on a daily basis. Through scene comparison over time, information about objects in the scene and their changes is inferred. This information is important for robots and AR and VR devices, in order to operate in an immersive virtual experience. We thus propose an unsupervised object discovery method that identifies added, moved, or removed objects without any prior knowledge of what objects exist in the scene. We model this problem as a combination of a 3D change detection and a 2D segmentation task. Our algorithm leverages generic 2D segmentation masks to refine an initial but incomplete set of 3D change detections. The initial changes, acquired through render-and-compare likely correspond to movable objects. The incomplete detections are refined through graph optimization, distilling the information of the 2D segmentation masks in the 3D space. Experiments on the 3Rscan dataset prove that our method outperforms competitive baselines, with SoTA results.

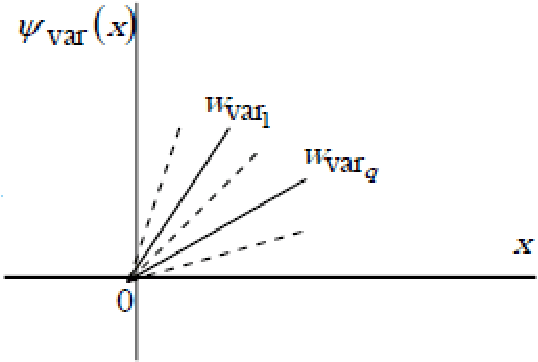

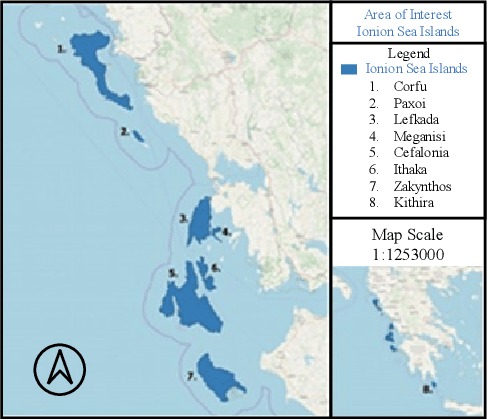

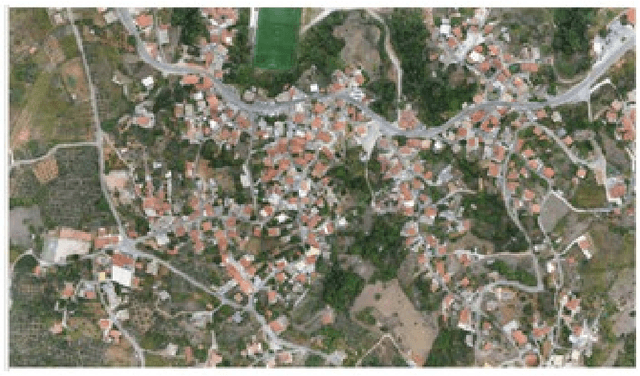

Very Deep Super-Resolution of Remotely Sensed Images with Mean Square Error and Var-norm Estimators as Loss Functions

Jul 30, 2020

Abstract:In this work, very deep super-resolution (VDSR) method is presented for improving the spatial resolution of remotely sensed (RS) images for scale factor 4. The VDSR net is re-trained with Sentinel-2 images and with drone aero orthophoto images, thus becomes RS-VDSR and Aero-VDSR, respectively. A novel loss function, the Var-norm estimator, is proposed in the regression layer of the convolutional neural network during re-training and prediction. According to numerical and optical comparisons, the proposed nets RS-VDSR and Aero-VDSR can outperform VDSR during prediction with RS images. RS-VDSR outperforms VDSR up to 3.16 dB in terms of PSNR in Sentinel-2 images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge