Kien Nguyen Thanh

Zoom-shot: Fast and Efficient Unsupervised Zero-Shot Transfer of CLIP to Vision Encoders with Multimodal Loss

Jan 22, 2024

Abstract:The fusion of vision and language has brought about a transformative shift in computer vision through the emergence of Vision-Language Models (VLMs). However, the resource-intensive nature of existing VLMs poses a significant challenge. We need an accessible method for developing the next generation of VLMs. To address this issue, we propose Zoom-shot, a novel method for transferring the zero-shot capabilities of CLIP to any pre-trained vision encoder. We do this by exploiting the multimodal information (i.e. text and image) present in the CLIP latent space through the use of specifically designed multimodal loss functions. These loss functions are (1) cycle-consistency loss and (2) our novel prompt-guided knowledge distillation loss (PG-KD). PG-KD combines the concept of knowledge distillation with CLIP's zero-shot classification, to capture the interactions between text and image features. With our multimodal losses, we train a $\textbf{linear mapping}$ between the CLIP latent space and the latent space of a pre-trained vision encoder, for only a $\textbf{single epoch}$. Furthermore, Zoom-shot is entirely unsupervised and is trained using $\textbf{unpaired}$ data. We test the zero-shot capabilities of a range of vision encoders augmented as new VLMs, on coarse and fine-grained classification datasets, outperforming the previous state-of-the-art in this problem domain. In our ablations, we find Zoom-shot allows for a trade-off between data and compute during training; and our state-of-the-art results can be obtained by reducing training from 20% to 1% of the ImageNet training data with 20 epochs. All code and models are available on GitHub.
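The two losses named in the Zoom-shot abstract can be illustrated with a toy sketch. This is not the authors' implementation: the direction of the mapping (encoder space into CLIP space), the squared-error form of the cycle loss, the KL form of the distillation loss, the temperature value, and every function name below are assumptions made only for illustration.

```python
import math

def normalize(v):
    # Scale a vector to unit length so dot products become cosine similarities.
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def matvec(W, v):
    # Apply a linear mapping W (list of rows) to a feature vector v.
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    s = sum(exps)
    return [e / s for e in exps]

def prompt_probs(image_feat, text_feats, temperature=0.01):
    # CLIP-style zero-shot distribution: softmax over the cosine similarities
    # between one image feature and each text-prompt feature.
    # The temperature value is an assumption.
    img = normalize(image_feat)
    logits = [
        sum(a * b for a, b in zip(img, normalize(t))) / temperature
        for t in text_feats
    ]
    return softmax(logits)

def kd_loss(teacher_probs, student_probs, eps=1e-12):
    # Assumed distillation term: KL(teacher || student) over the prompt classes.
    return sum(
        p * (math.log(p + eps) - math.log(q + eps))
        for p, q in zip(teacher_probs, student_probs)
    )

def cycle_loss(feat, W_fwd, W_back):
    # Assumed cycle-consistency term: map forward, map back, and penalise
    # the squared reconstruction error.
    recon = matvec(W_back, matvec(W_fwd, feat))
    return sum((a - b) ** 2 for a, b in zip(feat, recon))

# Toy usage with made-up 2-D features.
text_feats = [[1.0, 0.0], [0.0, 1.0]]   # two hypothetical prompt embeddings
clip_image_feat = [0.9, 0.1]            # teacher: CLIP image feature
encoder_feat = [0.2, 0.8, 0.1]          # student: other encoder, 3-D
W = [[0.5, 0.1, 0.3], [0.1, 0.2, 0.0]]  # hypothetical 3-D -> 2-D linear map

teacher = prompt_probs(clip_image_feat, text_feats)
student = prompt_probs(matvec(W, encoder_feat), text_feats)
print(kd_loss(teacher, student))
```

In a real setup the mapping would be trained by gradient descent on these terms; the sketch only shows how each quantity could be computed for a single example.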

Boosting Zero-shot Classification with Synthetic Data Diversity via Stable Diffusion

Feb 08, 2023

Abstract:Recent research has shown it is possible to perform zero-shot classification tasks by training a classifier with synthetic data generated by a diffusion model. However, the performance of this approach is still inferior to that of recent vision-language models. It has been suggested that the reason for this is a domain gap between the synthetic and real data. In our work, we show that this domain gap is not the main issue, and that diversity in the synthetic dataset is more important. We propose a $\textit{bag of tricks}$ to improve diversity and are able to achieve performance on par with one of the vision-language models, CLIP. More importantly, this insight allows us to endow any classification model with zero-shot classification capabilities.

Im2Mesh GAN: Accurate 3D Hand Mesh Recovery from a Single RGB Image

Jan 27, 2021

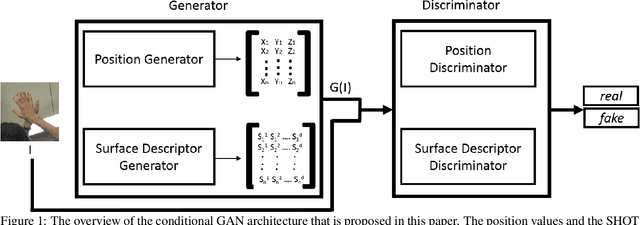

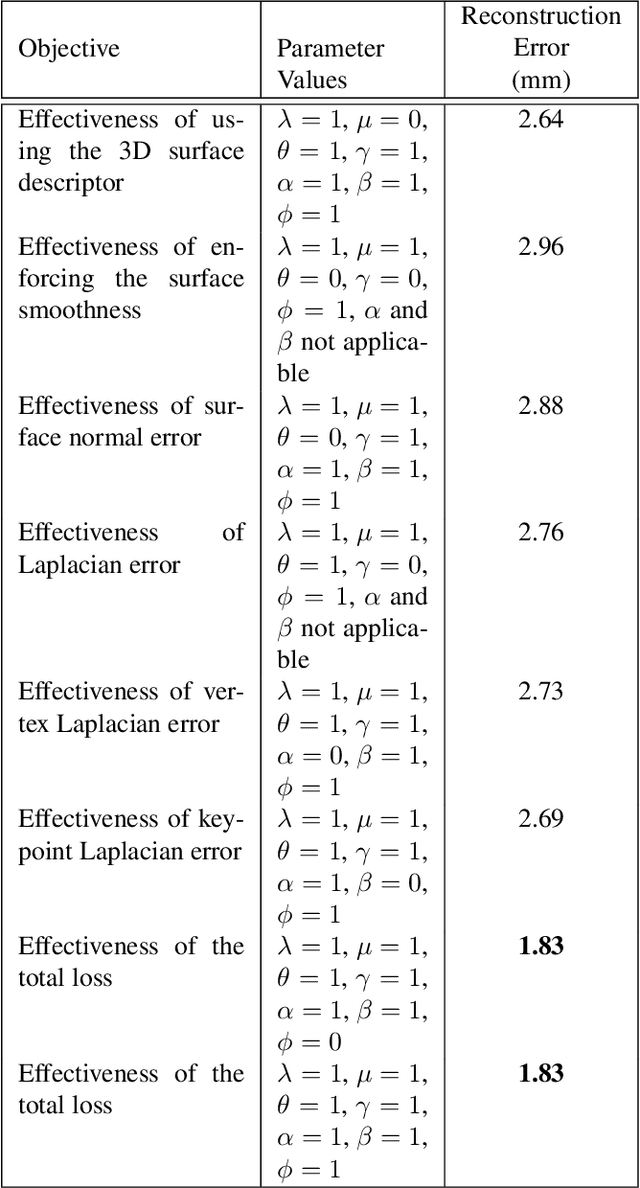

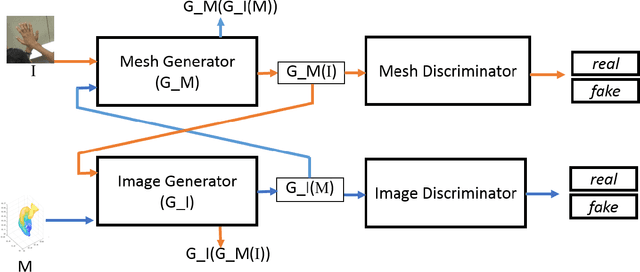

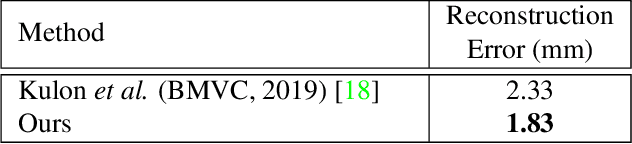

Abstract:This work addresses hand mesh recovery from a single RGB image. In contrast to most existing approaches, which employ parametric hand models as the prior, we show that the hand mesh can be learned directly from the input image. We propose a new type of GAN called Im2Mesh GAN to learn the mesh through end-to-end adversarial training. By interpreting the mesh as a graph, our model is able to capture the topological relationship among the mesh vertices. We also introduce a 3D surface descriptor into the GAN architecture to further capture the associated 3D features. We experiment with two approaches: one exploits paired ground-truth data of images and their corresponding meshes, while the other tackles the more challenging problem of mesh estimation without corresponding ground truth. Through extensive evaluations we demonstrate that the proposed method outperforms the state-of-the-art.
