Kevin Luong

Command A: An Enterprise-Ready Large Language Model

Apr 01, 2025

Abstract:In this report we describe the development of Command A, a powerful large language model purpose-built to excel at real-world enterprise use cases. Command A is an agent-optimised and multilingual-capable model, with support for 23 languages of global business, and a novel hybrid architecture balancing efficiency with top of the range performance. It offers best-in-class Retrieval Augmented Generation (RAG) capabilities with grounding and tool use to automate sophisticated business processes. These abilities are achieved through a decentralised training approach, including self-refinement algorithms and model merging techniques. We also include results for Command R7B which shares capability and architectural similarities to Command A. Weights for both models have been released for research purposes. This technical report details our original training pipeline and presents an extensive evaluation of our models across a suite of enterprise-relevant tasks and public benchmarks, demonstrating excellent performance and efficiency.

Hierarchically Gated Experts for Efficient Online Continual Learning

Dec 22, 2024

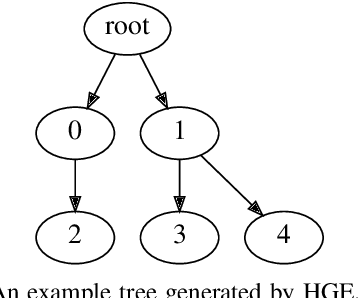

Abstract:Continual Learning models aim to learn a set of tasks under the constraint that the tasks arrive sequentially with no way to access data from previous tasks. The Online Continual Learning framework poses a further challenge where the tasks are unknown and instead the data arrives as a single stream. Building on existing work, we propose a method for identifying these underlying tasks: the Gated Experts (GE) algorithm, where a dynamically growing set of experts allows for new knowledge to be acquired without catastrophic forgetting. Furthermore, we extend GE to Hierarchically Gated Experts (HGE), a method which is able to efficiently select the best expert for each data sample by organising the experts into a hierarchical structure. On standard Continual Learning benchmarks, GE and HGE are able to achieve results comparable with current methods, with HGE doing so more efficiently.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge